TrackingWorld: World-centric Monocular 3D Tracking of Almost All Pixels

Jiahao Lu, Weitao Xiong, Jiacheng Deng, Peng Li, Tianyu Huang, @frankzydou.bsky.social, Cheng Lin, Sai-Kit Yeung, Yuan Liu

arxiv.org/abs/2512.08358

Submitting a paper is just a snapshot of ongoing work. The final stretch often uncovers overlooked details, and true clarity usually comes after the deadline—making post-deadline improvements even more efficient. But before that, don’t forget to enjoy a well-earned rest! 😄

😄

Hi, 🇸🇬! At #ICLR2025, I’ll be presenting our work 🎲DICE!

With DICE, one can explore hand-face interactions 📷 — this feedforward method simultaneously estimates hand and face poses, contact points, and deformations from a single image.

📍 Hall 3+Hall 2B #130 Poster Session 6

🕒 Sat 26 Apr, 3–5:30 p.m.

Can Video Diffusion Model Reconstruct 4D Geometry?

Jinjie Mai, Wenxuan Zhu, Haozhe Liu, Bing Li, Cheng Zheng, Jürgen Schmidhuber, Bernard Ghanem

tl;dr: pretrained video VAE -> fine-tuned pointmap VAE; fine-tune Open-Sora within the latent space of video & pointmap

arxiv.org/abs/2503.21082

The AI for Content Creation workshop #CVPR2025 is accepting paper submissions. ai4cc.net Deadline March 21st 2025 midnight PST. 4-page extended abstracts, 8-pagers, and previously published work (ECCV, NeurIPS, even CVPR)! Many topics 📷📹🎬🎲✒️📃🖼️👗👔🏢 - come spend the day with us!

Hmmmmm...... I like it black

Libraries and museums are integral to scientific advancement and to our core mission of #scienceforall. Stand Up for Science stands with @amlibraryassoc.bsky.social!

Please call your representatives and let them know you STRONGLY oppose this attack on access to knowledge.

#standupforscience

If you are aware of grad program reduction due to cuts in science funding in the USA -- please help catalog it! docs.google.com/forms/d/e/1F... 🧪🌿🐸

Happy Pi Day!

The submission deadline for the 4D Vision Workshop is in two weeks on March 28.

Both 4-page and 8-page submissions are welcome. Check the website for more detail: 4dvisionworkshop.github.io

Hope to see many of you at CVPR! @cvprconference.bsky.social

#CVPR2025 Area Chairs (ACs) identified a number of highly irresponsible reviewers, those who either abandoned the review process entirely or submitted egregiously low-quality reviews, including some generated by large language models (LLMs).

1/2

Applications due in less than a week!

Excited to bring the 5th CV4Animals Workshop to #CVPR2025

We welcome submissions in 2 tracks:

1) unpublished work up to 4 pages

2) papers published within the last 2 years

Submit by Mar 28 & join us with amazing speakers in Nashville:

www.cv4animals.com

🦒🪼🐬🐿️🦩🐢🦘🦜🦥🦋

@cvprconference.bsky.social

DICE 🎲was accepted by #ICLR2025.

With DICE, one can learn more about hand-face interactions 👤👋. This end-to-end method also scales better for learning and modeling hand-face interactions. Congrats to our amazing intern Peter QingXuan Wu!

Project page: frank-zy-dou.github.io/projects/DIC...

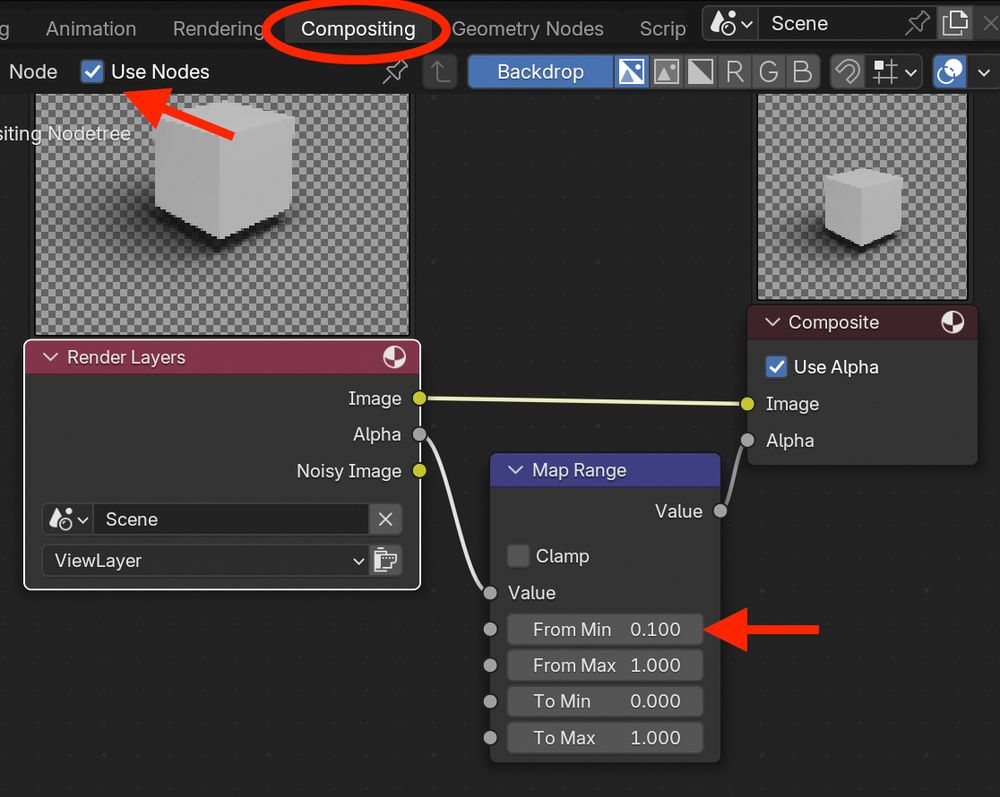

You don't want edges on shadows in your beautiful #Blender #SIGGRAPH paper figures. An easy way to get rid of them is to go to the compositing tab, check the box 'Use Nodes' and add a 'Map Range' node on the alpha channel. Then set 'From Min' to a low value👌
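For intuition on why a low 'From Min' helps, here is a plain-Python model of the linear remap a 'Map Range' node applies to each alpha value. It is only an illustration, not Blender's code: `map_range` is a hypothetical helper, and it assumes the output is clamped to [0, 1]. Faint, near-zero alpha (the kind that shows up as a visible edge around a shadow) falls below 'From Min' and is pushed to exactly 0, while fully opaque pixels stay at 1.

```python
def map_range(value, from_min=0.0, from_max=1.0, to_min=0.0, to_max=1.0, clamp=True):
    """Hypothetical model of a Map Range node: linear remap, optionally clamped."""
    # Normalize value into [from_min, from_max], then stretch to [to_min, to_max].
    t = (value - from_min) / (from_max - from_min)
    out = to_min + t * (to_max - to_min)
    if clamp:
        # Assumption: clamping is enabled, so values below from_min become to_min.
        lo, hi = min(to_min, to_max), max(to_min, to_max)
        out = max(lo, min(hi, out))
    return out


# Example alpha values from a shadow: near-zero fringe, partial shadow, opaque.
alphas = [0.0, 0.005, 0.02, 0.51, 1.0]
cleaned = [map_range(a, from_min=0.02) for a in alphas]
print(cleaned)
```

The remaining alpha range is stretched slightly (0.51 maps to about 0.5 here), so a very small 'From Min' removes the fringe while barely changing the visible shadow.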

🤦

Oh Hi!

I think a lot of technical people (me included) always felt this was the case: that ML image generation approaches, untethered from some interpretable, controllable model of the world (like a game engine), would never work. It's cool to see that Jensen agrees.

www.pcworld.com/article/2570...

Genesis has now released an official speed benchmarking report, community channels, and a new version 0.2.1, which features faster kernel loading, smoke simulation, more RL training modules, etc.

github.com/zhouxian/gen...

#Genesis #Robotics #Simulation

✨It sets the SOTA among self-supervised methods, outperforming even supervised ones on several benchmarks, and excels under occlusion, in low-feature areas, and in other challenging scenarios! 💡🔗

🚀 ProTracker delivers accurate and robust Tracking Any Point (TAP) with a Kalman filter-inspired Probabilistic approach, seamlessly fusing optical flow and semantic cues for smoother, more accurate trajectories!

Project page: michaelszj.github.io/protracker/

Paper: arxiv.org/abs/2501.03220
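The post does not spell out ProTracker's formulation, so the following is only a generic, Kalman-style sketch of the idea of probabilistically fusing two cues for one tracked point: an optical-flow prediction and a semantic-match measurement. Everything here is an assumption for illustration: isotropic Gaussian uncertainty, the hypothetical names `Gaussian2D`, `fuse`, and `track`, and the noise parameters `q` (flow/process noise) and `r` (semantic-match noise). It is not the paper's method.

```python
from dataclasses import dataclass


@dataclass
class Gaussian2D:
    """Hypothetical isotropic Gaussian estimate of a point's pixel position."""
    x: float
    y: float
    var: float  # variance in pixels^2


def fuse(a: Gaussian2D, b: Gaussian2D) -> Gaussian2D:
    """Kalman update for a static 2D state: inverse-variance weighting of a and b."""
    if a.var + b.var <= 0:
        raise ValueError("at least one estimate needs positive variance")
    k = a.var / (a.var + b.var)  # Kalman gain: trust b more when a is uncertain
    return Gaussian2D(a.x + k * (b.x - a.x), a.y + k * (b.y - a.y), (1.0 - k) * a.var)


def track(start, flows, matches, q, r):
    """Predict each step with flow (variance grows by q), then fuse a semantic match (variance r)."""
    state = Gaussian2D(start[0], start[1], 0.0)
    history = []
    for (dx, dy), (mx, my) in zip(flows, matches):
        predicted = Gaussian2D(state.x + dx, state.y + dy, state.var + q)
        state = fuse(predicted, Gaussian2D(mx, my, r))
        history.append(state)
    return history


# Usage: flow says the point moved to x=1, the semantic match says x=2, equal noise.
print(track((0.0, 0.0), [(1.0, 0.0)], [(2.0, 0.0)], q=1.0, r=1.0))
```

With equal noise the fused position lands halfway (x = 1.5) and the variance halves, which is the smoothing effect a probabilistic integration of flow and semantic cues aims for.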

ProTracker: Probabilistic Integration for Robust and Accurate Point Tracking

Tingyang Zhang, @cwchenwang.bsky.social, @frankzydou.bsky.social, Qingzhe Gao, Jiahui Lei, Baoquan Chen, @lingjieliu.bsky.social

arxiv.org/abs/2501.03220

Announcing SGI 2025! Undergrads and MS students: Apply for 6 weeks of paid summer geometry processing research. No experience needed: 1 week tutorials + 5 weeks of projects. Mentors are top researchers in this emerging branch of graphics/computing/math. sgi.mit.edu

You are welcome! :)

Yes. But this is a preview of Genesis features. They haven't been released yet, and we will make them available later.

Good point! The ultimate goal of Genesis is to generate all kinds of assets. Currently, however, assets are typically retrieved and use preset physical parameters. :) Generating different articulated objects and shapes with different physical properties will be a future step.

Physics simulation serves as a foundational platform, but simulation alone is insufficient—we need various assets for simulation. This is why Genesis not only offers a versatile simulation platform but also integrates a model to create assets essential for simulation.