A factor of 10 billion since 2010 😮

A couple of eye-opening slides form @sloeschcke.bsky.social's presentation at today’s @belongielab.org meeting (1/2)

@sloeschcke

Working on Efficient Training, Low-Rank Methods, and Quantization. PhD at the University of Copenhagen 🇩🇰 Member of @belongielab.org, Danish Data Science Academy, and Pioneer Centre for AI 🤖 🔗 sebulo.github.io/

A factor of 10 billion since 2010 😮

A couple of eye-opening slides form @sloeschcke.bsky.social's presentation at today’s @belongielab.org meeting (1/2)

🇳🇱 𝗤𝘂𝗮𝗹𝗰𝗼𝗺𝗺 𝗔𝗜 𝗥𝗲𝘀𝗲𝗮𝗿𝗰𝗵 𝗜𝗻𝘁𝗲𝗿𝗻𝘀𝗵𝗶𝗽 🇳🇱

Excited to join @qualcomm.bsky.social in Amsterdam as a research intern in the Model Efficiency group, where I’ll be working on quantization and compression of machine learning models.

I’ll return to Copenhagen in December to start the final year of my PhD.

📯 Best Paper Award at CVPR workshop on Visual concepts for our (@doneata.bsky.social + @delliott.bsky.social) paper on probing vision/lang/ vision+lang models for semantic norms!

TLDR: SSL vision models (swinV2, dinoV2) are surprisingly similar to LLM & VLMs even w/o lang 👀

arxiv.org/abs/2506.03994

Thanks to my co-authors David Pitt, Robert Joseph George, Jiawwei Zhao, Cheng Luo, Yuandong Tian, Jean Kossaifi, @anima-anandkumar.bsky.social, and @caltech.edu for hosting me this spring!

Paper: arxiv.org/abs/2501.02379

Code: github.com/neuraloperat...

We also show strong results on other PDE benchmarks, including 𝐃𝐚𝐫𝐜𝐲 𝐟𝐥𝐨𝐰 and the 𝐁𝐮𝐫𝐠𝐞𝐫𝐬 equation, demonstrating TensorGRaD’s broad applicability across scientific domains.

We test TensorGRaD on large-scale Navier–Stokes at 1024×1024 resolution with Reynolds number 10e5, a highly turbulent setting. With mixed-precision and 75% optimizer state reduction, it 𝐦𝐚𝐭𝐜𝐡𝐞𝐬 𝐟𝐮𝐥𝐥-𝐩𝐫𝐞𝐜𝐢𝐬𝐢𝐨𝐧 𝐀𝐝𝐚𝐦 while cutting overall memory by up to 50%.

We also propose a 𝐦𝐢𝐱𝐞𝐝-𝐩𝐫𝐞𝐜𝐢𝐬𝐢𝐨𝐧 𝐭𝐫𝐚𝐢𝐧𝐢𝐧𝐠 strategy with weights, activations, and gradients in half precision and optimizer states in full precision, and empirically show that storing optimizer states in half precision hurts performance.

We extend low-rank and sparse methods to tensors via a 𝐫𝐨𝐛𝐮𝐬𝐭 𝐭𝐞𝐧𝐬𝐨𝐫 𝐝𝐞𝐜𝐨𝐦𝐩𝐨𝐬𝐢𝐭𝐢𝐨𝐧 that splits gradients into a low-rank Tucker part and an unstructured sparse tensor. Unlike matricized approaches, we prove our tensor-based method converges.

Recent methods reduce optimizer memory for matrix weights. This includes Low-rank and sparse methods from LLMs that work on matrices. But to use them for Neural Operators, we’d need to flatten tensors, which destroys their spatial/temporal structure and hurts performance.

These Neural Operators use tensor weights. However, optimizers like Adam store two full tensors per weight, making memory the bottleneck at scale.

TensorGRaD reduces this overhead by up to 75% (𝑑𝑎𝑟𝑘 𝑔𝑟𝑒𝑒𝑛 𝑏𝑎𝑟𝑠), without hurting accuracy.

Scientific computing operates on multiscale, multidimensional (𝐭𝐞𝐧𝐬𝐨𝐫) 𝐝𝐚𝐭𝐚. In weather forecasting, for example, inputs span space, time, and variables. Neural operators can capture these multiscale phenomena by learning an operator that maps between function spaces.

Check out our new preprint 𝐓𝐞𝐧𝐬𝐨𝐫𝐆𝐑𝐚𝐃.

We use a robust decomposition of the gradient tensors into low-rank + sparse parts to reduce optimizer memory for Neural Operators by up to 𝟕𝟓%, while matching the performance of Adam, even on turbulent Navier–Stokes (Re 10e5).

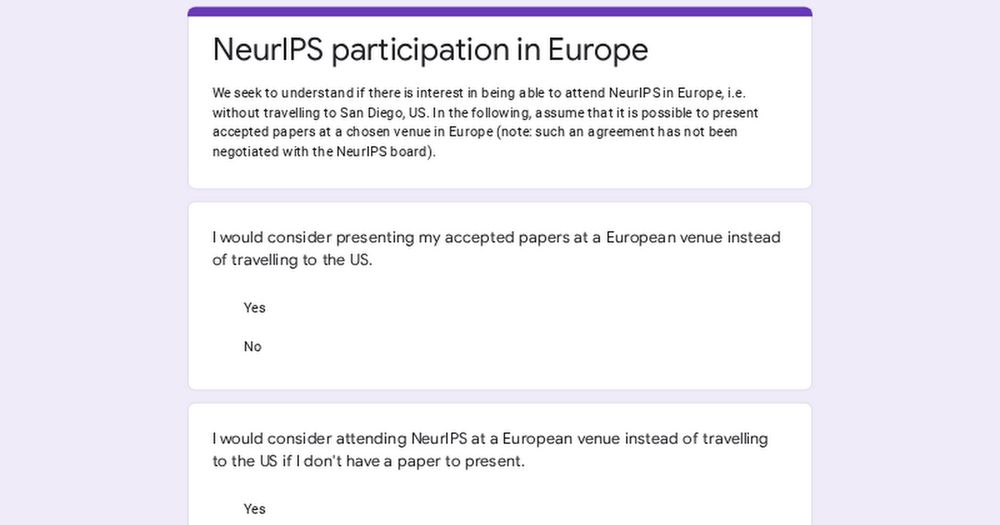

Would you present your next NeurIPS paper in Europe instead of traveling to San Diego (US) if this was an option? Søren Hauberg (DTU) and I would love to hear the answer through this poll: (1/6)

Visited the beautiful UC Santa Barbara yesterday.

Thrilled to announce "Multimodality Helps Few-shot 3D Point Cloud Semantic Segmentation" is accepted as a Spotlight (5%) at #ICLR2025!

Our model MM-FSS leverages 3D, 2D, & text modalities for robust few-shot 3D segmentation—all without extra labeling cost. 🤩

arxiv.org/pdf/2410.22489

More details👇

While Pasadena will be my home, I’ll also be making trips to Austin, the Bay Area, and San Diego. If you’re nearby and up for a chat, reach out—let’s meet up!

View from the office building

☀️ Moved to Pasadena, California! ☀️

For the next five months, I’ll be a Visiting Student Researcher at Anima Anandkumar's group at Caltech, collaborating with her team and Jean Kossaifi from NVIDIA on Efficient Machine Learning and AI4Science.

Screenshot of the course website for "SSL4EO: Self-Supervised Learning for Earth Observation"

Recordings of the SSL4EO-2024 summer school are now released!

This blog post summarizes what has been covered:

langnico.github.io/posts/SSL4EO...

Recordings: www.youtube.com/playlist?lis...

Course website: ankitkariryaa.github.io/ssl4eo/

[1/3]

New Starter Pack: Pioneer Centre for AI researchers

Come by our poster session tomorrow!

🗓️ West Ballroom A-D #6104

🕒 Thu, 12 Dec, 4:30 p.m. – 7:30 p.m. PST

@madstoftrup.bsky.social and I are presenting LoQT: Low-Rank Adapters for Quantized Pretraining: arxiv.org/abs/2405.16528

#Neurips2024

Copenhagen University and Aarhus University meet-up in Vancouver 🇩🇰🇨🇦

#NeurIPS2024

On my way to NeurIPS in Vancouver 🇨🇦

Looking forward to reconnecting with friends and meeting new people. Let me know if you are interested in efficient training, quantization, or grabbing a coffee!

#NeurIPS2024

Check out the work our lab in Copenhagen will be presenting at #NeurIPS2024 🌟

@neuripsconf.bsky.social @belongielab.org

Here’s a starter pack with members of our lab that have joined Bluesky

Pre-NeurIPS Poster Session in Copenhagen.

Thanks to the Pioneer Centre for AI and @ellis.eu for sponsoring.

@neuripsconf.bsky.social

#neurips2024

Check out the ELLIS Pre-NeurIPS Fest event today in...🇩🇰Copenhagen!

ELLIS Unit Copenhagen is holding their event at the Pioneer Center for AI showcasing #NeurIPS posters and other Denmark-affiliated papers in #AI and #ML.

More info: bit.ly/4fRFrAh

A photo of Boulder, Colorado, shot from above the university campus and looking toward the Flatirons.

I'm recruiting 1-2 PhD students to work with me at the University of Colorado Boulder! Looking for creative students with interests in #NLP and #CulturalAnalytics.

Boulder is a lovely college town 30 minutes from Denver and 1 hour from Rocky Mountain National Park 😎

Apply by December 15th!

Thanks to the 𝗣𝗶𝗼𝗻𝗲𝗲𝗿 𝗖𝗲𝗻𝘁𝗿𝗲 𝗳𝗼𝗿 𝗔𝗜 for organizing this event as part of the 𝗘𝗟𝗟𝗜𝗦 𝗣𝗿𝗲-𝗡𝗲𝘂𝗿𝗜𝗣𝗦 𝗙𝗲𝘀𝘁! 🎉

#NeurIPS2024

Join us for the 𝗣𝗿𝗲-𝗡𝗲𝘂𝗿𝗜𝗣𝗦 𝗣𝗼𝘀𝘁𝗲𝗿 𝗦𝗲𝘀𝘀𝗶𝗼𝗻 in Copenhagen!

🗓️ 𝗪𝗵𝗲𝗻: 16:00–18:00, Nov. 22, 2024

📍 𝗪𝗵𝗲𝗿𝗲: Entrance Hall, Gefion, Øster Voldgade 10, 1350 København K.

Present or explore European contributions to NeurIPS 2024 and connect with colleagues.

👉 𝗜𝗻𝗳𝗼 & 𝘀𝗶𝗴𝗻-𝘂𝗽: www.aicentre.dk/events/pre-n...

LoQT will be presented at NeurIPS 2024! 🎉

This research was funded by @DataScienceDK, and @AiCentreDK and is a collaboration between @DIKU_Institut, @ITUkbh, and @csaudk