DLRMv3: Generative recommendation benchmark in MLPerf Inference - MLCommons

MLCommons debuts DLRMv3, the first sequential recommendation benchmark in MLPerf Inference v6.0. 20X larger, 6500X more compute. Submit by Feb 13

[3/3] This work would not be possible w/o Linjian Ma, Xing Liu and Carole-Jean Wu initiating & driving this effort, as well as many contributors across Meta, NVIDIA, AMD, and Stanford (full list in MLPerf post).

Hope to see your results, and please reach out if you are interested in collaborations!

13.02.2026 12:35

👍 0

🔁 0

💬 0

📌 0

[2/3] The MLPerf RecSys benchmark is uniquely challenging: it needs to represent production workloads while maximally preserving user privacy. Our synthetic data approaches have enabled a 20x increase in model size and a 6,500x increase in FLOPs, while keeping everything open-source.

13.02.2026 12:35

👍 0

🔁 0

💬 1

📌 0

[1/3] Excited to share that HSTU/Generative Recommenders has been selected, alongside DeepSeek-R1, GPT-OSS, etc., as part of MLPerf!

Since our initial results in 2024, we’ve seen widespread adoption of related techniques, from LinkedIn, Shopify, Twitter to RedNote, Meituan, Yandex, and Alibaba.

13.02.2026 12:34

👍 0

🔁 0

💬 1

📌 0

Scaling GPU-Accelerated Databases beyond GPU Memory Size

For the first time, we show that GPU-accelerated database systems can be both faster AND cheaper than their CPU counterparts, with a proof-of-concept on Microsoft SQL Server in Azure running TPC-H 1TB with a single A100/H100! Check out our VLDB'25 paper (www.vldb.org/pvldb/vol18/...) for details!

31.08.2025 21:47

👍 3

🔁 1

💬 0

📌 0

At #TheWebConf2025 today? Join our talk on the next-gen retrieval paradigm at 10:30am in C3.3! Also moderating the Responsible Web session (2:30-4pm, C3.4) on misinformation demonetization, cookie compliance & fact-checking - crucial topics for building a better internet. See you there!

29.04.2025 20:04

👍 3

🔁 0

💬 0

📌 0

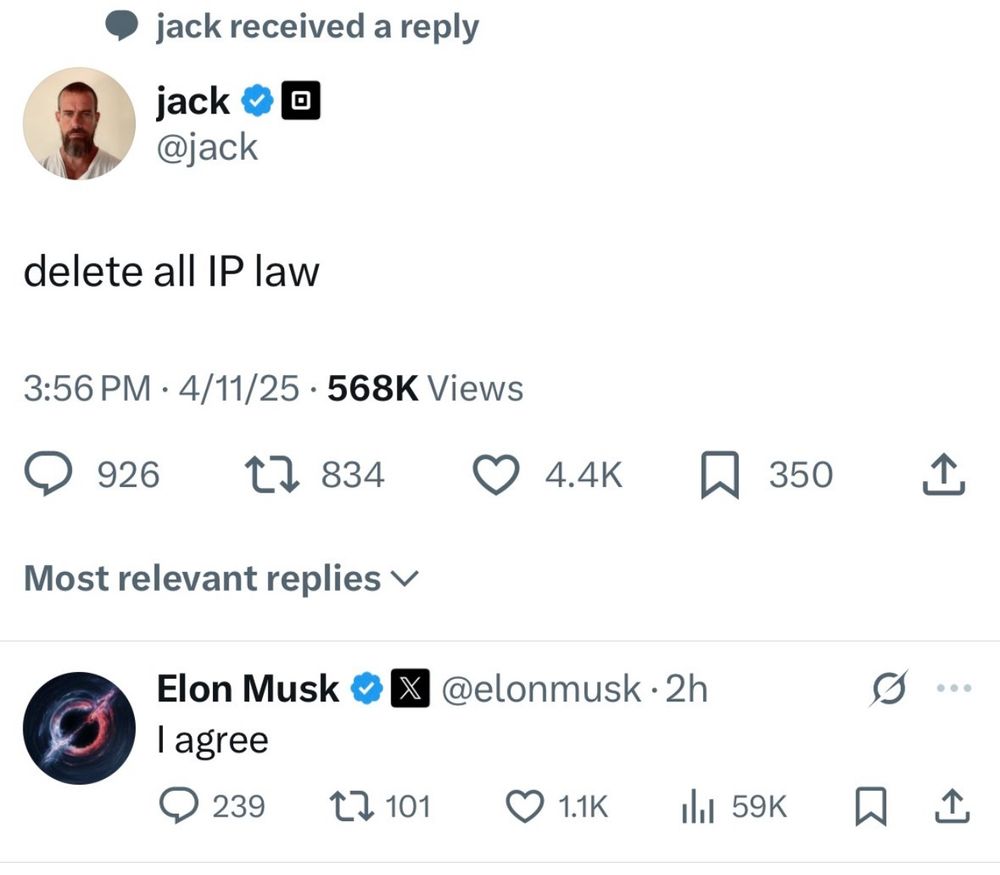

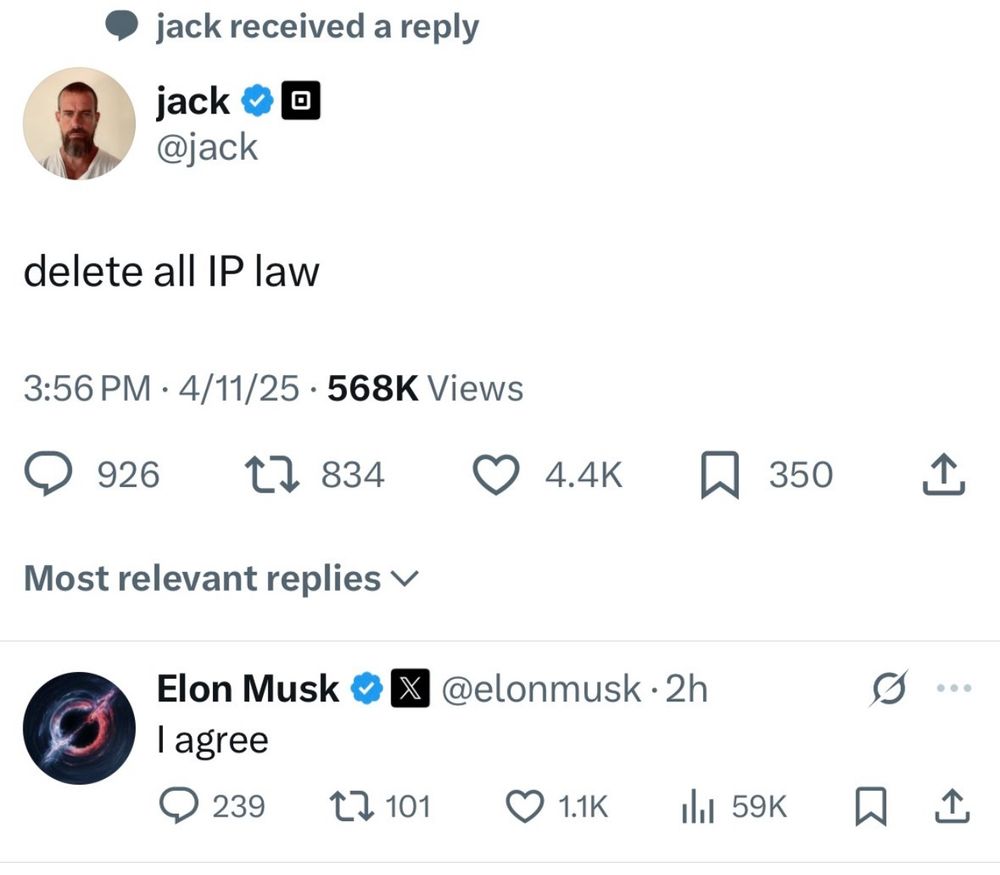

To some, AGI may be just consolidation of intellectual capital under existing power structures

12.04.2025 21:20

👍 2

🔁 0

💬 0

📌 0

If bigco knows an image is likely bad, they would not have allowed it to be generated in the first place. So the attacks are most likely coming from (finetuned) oss models anyway.

10.04.2025 00:37

👍 2

🔁 0

💬 0

📌 0

Retrieval with Learned Similarities

Retrieval plays a fundamental role in recommendation systems, search, and natural language processing (NLP) by efficiently finding relevant items from a large corpus given a query. Dot products have b...

🧵(4/4) Learn more:

Paper: arxiv.org/abs/2407.15462

Code: github.com/bailuding/rails

Interested in pushing the boundaries of foundational technologies together? Find @bailuding.bsky.social or me at WWW 2025 in Sydney or reach out!

#TheWebConf25 #WWW2025 #RAG #RecSys #LLMs #NeuralRetrieval

03.02.2025 17:08

👍 5

🔁 0

💬 0

📌 0

🧵(3/4) MoL achieves dense retrieval-level speed on GPUs, while delivering 20-30% better Hit Rate@50-400 on 100M+ item DBs (arxiv.org/abs/2306.04039, arxiv.org/abs/2407.13218). These gains make a compelling case for migrating web-scale vector databases to Retrieval with Learned Similarities (RAILS).

03.02.2025 17:08

👍 1

🔁 0

💬 1

📌 0

🧵(2/4) Our paper introduces Mixture-of-Logits (MoL) - a universal approximator of similarity functions unifying sparse/dense retrieval, multi-embeddings, and generative approaches. Beyond theory, MoL achieves SotA from sequential retrieval on Transformers/HSTU to finetuning LMs for RAG/QA.

03.02.2025 17:08

👍 1

🔁 0

💬 1

📌 0
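A minimal sketch of the Mixture-of-Logits idea from (2/4), under stated assumptions: the score is taken to be a gated sum of dot products between several query-side and item-side component embeddings. The function names, the linear-plus-softmax gate, and the toy inputs are all hypothetical and chosen for illustration; the paper and the linked code define the actual gating design.

```python
import math


def dot(u, v):
    """Plain dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def mol_similarity(query_embs, item_embs, gate_weights):
    """Gated mixture of pairwise dot-product logits (illustrative only).

    query_embs:   list of component embeddings for the query
    item_embs:    list of component embeddings for the item
    gate_weights: hypothetical square matrix (one row per query/item pair)
                  that maps the logits to gating scores
    """
    # One logit per (query component, item component) pair.
    logits = [dot(q, x) for q in query_embs for x in item_embs]
    # Assumed gate: a linear map over the logits followed by softmax.
    gate_scores = [dot(row, logits) for row in gate_weights]
    weights = softmax(gate_scores)
    # Final similarity: convex combination of the pairwise logits.
    return sum(w * l for w, l in zip(weights, logits))


# With a single component on each side, the score reduces to a dot product.
single = mol_similarity([[1.0, 2.0]], [[3.0, 4.0]], [[0.5]])

# With an all-zero gate, every pair gets equal weight (mean of the logits).
uniform = mol_similarity(
    [[1.0, 0.0], [0.0, 1.0]],
    [[1.0, 1.0], [2.0, 0.0]],
    [[0.0] * 4 for _ in range(4)],
)
```

With one component per side the sketch collapses to ordinary dot-product retrieval, which is one way to read the "unifying" claim: a plain dense retriever is the special case with a single pair.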

🧵(1/4) Interested in the next-generation retrieval paradigm or making your recommendations, search, or RAG/LLM applications 20-30% better? Check out Retrieval with Learned Similarities, a collaboration with Microsoft Research, accepted as an oral presentation (155 out of 2062 papers) at WWW 2025!

03.02.2025 17:08

👍 3

🔁 0

💬 1

📌 1

I don’t really have the energy for politics right now. So I will observe without comment:

Executive Order 14110 was revoked (Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence)

21.01.2025 00:34

👍 96

🔁 37

💬 2

📌 6

Issue is, we've already had a historic opportunity to make social media fully user-controlled and unbiased, yet our lawmakers chose to optimize for tech oligarchs' net worth instead...

19.01.2025 10:24

👍 0

🔁 0

💬 0

📌 0

The flood of papers in turn pushes flashy marketing and rushed publishing over substance. A self-reinforcing cycle that we should break.

18.01.2025 20:55

👍 2

🔁 0

💬 0

📌 0

Recent controversies with BlueSky data being scraped for training GenAI models demonstrate that no one has thought through how decentralized social networks are supposed to work at all. Treating all internet engagements as public still seems like a safe default option.

28.11.2024 16:02

👍 2

🔁 0

💬 0

📌 0

Exhibit 13 – #32, Att. #14 in Musk v. Altman (N.D. Cal., 4:24-cv-04722) – CourtListener.com

AMENDED COMPLAINT VERIFIED FIRST AMENDED COMPLAINT against Aestas Management Company, LLC, Aestas, LLC, Samuel Altman, Gregory Brockman, OAI Corporation, LLC, OpenAI GP, L.L.C., OpenAI Global, LLC, Op...

Source email is under Exhibit 13 for anyone else wondering www.courtlistener.com/docket/69013...

16.11.2024 18:07

👍 0

🔁 0

💬 0

📌 0

Do you happen to have links to the actual emails?

16.11.2024 06:33

👍 0

🔁 0

💬 1

📌 0

Strange how clarity strikes when flying above the attention economy's reach. The ground is nothing but a notification prison now.

27.10.2024 14:49

👍 3

🔁 0

💬 0

📌 0