Presenting this at the poster session this morning (11-2pm) at #5109

Looking forward to #NeurIPS25 this week 🏝️! I'll be presenting at Poster Session 3 (11-2 on Thursday). Feel free to reach out!

I'll also be presenting this paper with @catherinearnett.bsky.social at #CogInterp!

Excited to announce that I’ll be presenting a paper at #NeurIPS this year! Reach out if you’re interested in chatting about LM training dynamics, architectural differences, shortcuts/heuristics, or anything at the CogSci/NLP/AI interface in general! #Neurips2025

I’m in Vienna all week for @aclmeeting.bsky.social and I’ll be presenting this paper on Wednesday at 11am (Poster Session 4 in HALL X4 X5)! Reach out if you want to chat about multilingual NLP, tokenizers, and open models!

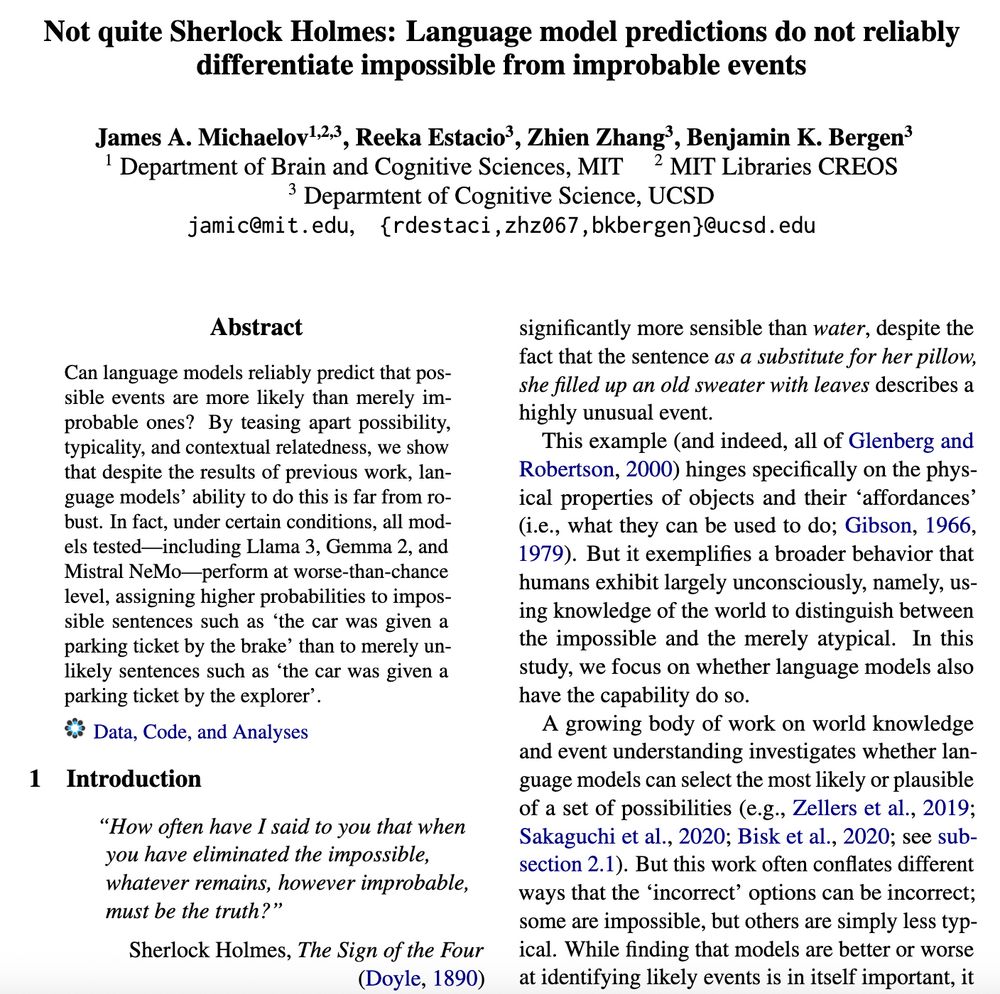

In the most extreme case, LMs assign sentences such as ‘the car was given a parking ticket by the explorer’ (unlikely but possible event) a lower probability than ‘the car was given a parking ticket by the brake’ (animacy-violating event, semantically-related final word) over half of the time. 2/3

New paper accepted at ACL Findings! TL;DR: While language models generally predict sentences describing possible events to have a higher probability than impossible (animacy-violating) ones, this is not robust for generally unlikely events and is impacted by semantic relatedness. 1/3

My paper with @tylerachang.bsky.social and @jamichaelov.bsky.social will appear at #ACL2025NLP! The updated preprint is available on arXiv. I look forward to chatting about bilingual models in Vienna!

✨New pre-print✨ Crosslingual transfer allows models to leverage their representations for one language to improve performance on another language. We characterize the acquisition of shared representations in order to better understand how and when crosslingual transfer happens.

I’ve had success using the infini-gram API for this (though it can get overloaded with user requests at times): infini-gram.io

I don’t think this is quite what you’re looking for, but @camrobjones.bsky.social recently ran some Turing-test-style studies and found that some people believed ELIZA to be a human (and participants were asked to give reasons for their responses)

With all the new people here on Bluesky, I think it’s a good time to (re-)introduce myself. I’m a postdoc at MIT carrying out research at the intersection of the cognitive science of language and AI. Here are some of the things I’ve worked on in the last year 🧵:

Seems like a great initiative to have some of these location-based ones! I’d love to be added if possible!

Excited to be at #EMNLP #EMNLP2024 this year! Especially interested in chatting about the intersection of cognitive science/psycholinguistics and AI/NLP, training dynamics, robustness/reliability, meaning, and evaluation

If there’s still space (and you accept postdocs), could I be added?

Thanks for creating this list - looks great! I’d love to be added if there’s still room

Thank you!

If there’s still room, is there any chance you could add me to this list?

Also, I’m going to be attending EMNLP next week - reach out if you want to meet/chat

Anyway, excited to learn and chat about research along these lines and beyond here on Bluesky!

Of course, none of this work would have been possible without my amazing PhD advisor Ben Bergen, and my other great collaborators: Seana Coulson, @catherinearnett.bsky.social, Tyler Chang, Cyma Van Petten, and Megan Bardolph!

5: Recurrent models like RWKV and Mamba have recently emerged as viable alternatives to transformers. While they are intuitively more cognitively plausible, how do they compare to transformers when used to model human language processing? We find that they perform about the same overall:

4: Is the N400 sensitive only to the predicted probability of the stimuli encountered, or also the predicted probability of alternatives? We revisit this question with state-of-the-art NLP methods, with the results supporting the former hypothesis:

3: The N400, a neural index of language processing, is highly sensitive to the contextual probability of words. But to what extent can lexical prediction explain other N400 phenomena? Using GPT-3, we show that it can implicitly account for both semantic similarity and plausibility effects:

2: Do multilingual language models learn that different languages can have the same grammatical structures? We use the structural priming paradigm from psycholinguistics to provide evidence that they do:

If you’re interested in hearing more of my thoughts on this topic, check out this article at Communications of the ACM by Sandrine Ceurstemont that includes quotes from an interview with me and my co-author Ben Bergen:

1: Training language models on more data generally improves their performance, but is this always the case? We show that inverse scaling can occur not just across models of different sizes, but also in individual models over the course of training: