Coming up at ICML: 🤯Distribution shifts are still a huge challenge in ML. There's already a ton of algorithms to address specific conditions. So what if the challenge was just selecting the right algorithm for the right conditions?🤔🧵

ChatBotArena is far from the first eval to be overfit to. It's becoming underrated. Likely the single most impactful evaluation project since ChatGPT. The labs are the ones releasing these slightly off models.

Would you present your next NeurIPS paper in Europe instead of traveling to San Diego (US) if this was an option? Søren Hauberg (DTU) and I would love to hear the answer through this poll: (1/6)

Hiring two student researchers for the Gemma post-training team at @GoogleDeepMind Paris! First topic is about diversity in RL for LLMs (merging, generalization, exploration & creativity), second is about distillation. Ideal if you're finishing your PhD. DMs open!

🥁Introducing Gemini 2.5, our most intelligent model with impressive capabilities in advanced reasoning and coding.

Now integrating thinking capabilities, 2.5 Pro Experimental is our most performant Gemini model yet. It’s #1 on the LM Arena leaderboard. 🥇

This is a very tidy little RL paper for reasoning. Their GRPO changes:

1 Two different clip hyperparams, so positive clipping can uplift more unexpected tokens

2 Dynamic sampling -- remove samples w flat reward in batch

3 Per token loss

4 Managing too long generations in loss

dapo-sia.github.io
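A minimal sketch of points 1 to 3 above, assuming a PPO/GRPO-style clipped surrogate. The function names, array shapes and the `eps_low` / `eps_high` values are illustrative assumptions, not the paper's code, and point 4 (overlong generations) is left out.

```python
import numpy as np

def clipped_token_loss(logp_new, logp_old, advantages, mask,
                       eps_low=0.2, eps_high=0.28):
    """Clipped surrogate with two clip ranges, averaged per token.

    All arrays have shape (num_sequences, max_len). `mask` is 1 for real
    tokens and 0 for padding. The eps values are assumed, for illustration.
    """
    ratio = np.exp(logp_new - logp_old)
    # Point 1: separate lower and upper clip bounds.
    clipped = np.clip(ratio, 1.0 - eps_low, 1.0 + eps_high)
    per_token = np.minimum(ratio * advantages, clipped * advantages)
    # Point 3: normalize by the total token count, not per sequence.
    return -(per_token * mask).sum() / mask.sum()

def keep_informative_groups(rewards):
    """Point 2: flag prompts whose sampled rewards are not all identical.

    `rewards` has shape (num_prompts, samples_per_prompt).
    """
    return rewards.std(axis=1) > 0
```

A larger upper bound lets a low-probability token with positive advantage grow more before the clip kicks in, which is the "uplift more unexpected tokens" part.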

Welcome Gemma 3, Google’s new open-weight LLM. All sizes (1B, 4B, 12B and 27B) excel on benchmarks, but the key result may be the 27B reaching 1338 on LMSYS. For this, we scaled post-training, with our novel distillation, RL and merging strategies.

Report: storage.googleapis.com/deepmind-med...

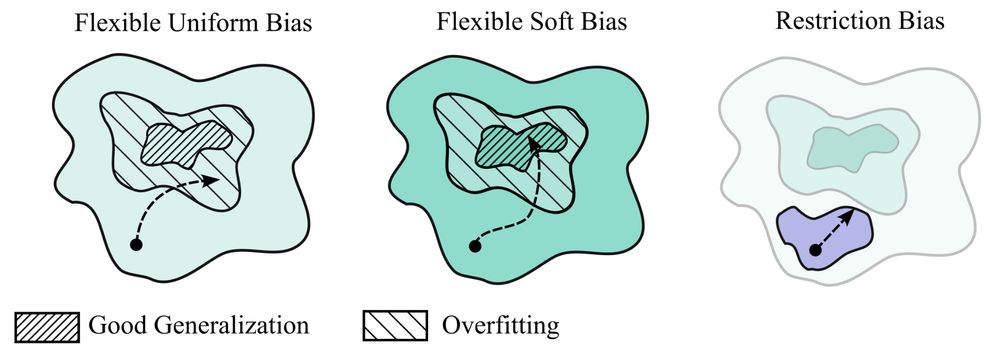

My new paper "Deep Learning is Not So Mysterious or Different": arxiv.org/abs/2503.02113. Generalization behaviours in deep learning can be intuitively understood through a notion of soft inductive biases, and formally characterized with countable hypothesis bounds! 1/12

Modern post-training is essentially distillation then RL. While reward hacking is well-known and feared, could there be such a thing as teacher hacking? Our latest paper confirms it. Fortunately, we also show how to mitigate it! The secret: diversity and onlineness! arxiv.org/abs/2502.02671

We just released the Helium-1 model, a 2B multi-lingual LLM which @exgrv.bsky.social and @lmazare.bsky.social have been crafting for us! Best model so far under 2.17B params on multi-lingual benchmarks 🇬🇧🇮🇹🇪🇸🇵🇹🇫🇷🇩🇪

On HF, under CC-BY licence: huggingface.co/kyutai/heliu...

ILYA: "PRETRAINING IS DONE. WE ARE NOW IN THE POST TRAINING ERA."

Of all of OpenAI's days, the RL API is still the most revealing of the state of AI research trends. Lots of open doors for those looking at RL.

OpenAI's Reinforcement Finetuning and RL for the masses

The cherry on Yann LeCun’s cake has finally been realized.

🚨So, you want to predict your model's performance at test time?🚨

💡Our NeurIPS 2024 paper proposes 𝐌𝐚𝐍𝐨, a training-free and SOTA approach!

📑 arxiv.org/pdf/2405.18979

🖥️https://github.com/Renchunzi-Xie/MaNo

1/🧵(A surprise at the end!)

The right place for your PhD:

With Colin Raffel, UofT works on decentralizing, democratizing, and derisking large-scale AI. Wanna work on model m(o)erging, collaborative/decentralized learning, identifying & mitigating risks, etc. Apply (deadline is Monday!)

web.cs.toronto.edu/graduate/how...

🤖📈

🥐 Building a Computer Vision FR Starter Pack!

👉 Who else should be included?

Comment below or DM me to be added

go.bsky.app/dfvcLZ

distributed learning for LLM?

recently, @primeintellect.bsky.social have announced finishing their 10B distributed learning, trained across the world.

what is it exactly?

🧵

Decomposing Uncertainty for Large Language Models through Input Clarification Ensembling by Bairu Hou et al. #ICML2024

tl;dr: generate multiple clarifications of input txt w/ external LLM then forward:

>disagreement btw outputs -> data uncertainty

>avg uncertainty in each output -> model uncertainty
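A rough sketch of that split, assuming each clarified input yields a probability vector over the same answer set and that uncertainty is measured with entropy. Both are assumptions made here for illustration; the helper names are hypothetical and this is not the authors' code.

```python
import numpy as np

def entropy(p):
    """Shannon entropy (in nats) along the last axis, treating 0*log(0) as 0."""
    p = np.asarray(p, dtype=float)
    safe = np.where(p > 0, p, 1.0)  # log(1) = 0, so zero entries contribute 0
    return -(p * np.log(safe)).sum(axis=-1)

def decompose_uncertainty(probs):
    """Split total uncertainty across clarifications.

    `probs` has shape (num_clarifications, num_answers); row i is the model's
    answer distribution for the i-th clarified input.
    Returns (data_uncertainty, model_uncertainty).
    """
    probs = np.asarray(probs, dtype=float)
    total = entropy(probs.mean(axis=0))   # entropy of the ensembled prediction
    model = entropy(probs).mean()         # avg uncertainty in each output
    data = total - model                  # disagreement between outputs
    return data, model
```

If every clarification leads to the same confident answer, both terms are near zero. If each clarification gives a confident but different answer, the model term stays near zero and the data term is large.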

This year, there are 16 positions at CNRS in computer science (8 in "applied" domains → ask me - 8 on "fundamental" domains → ask the other David).

@mathurinmassias.bsky.social has a good list of advice mathurinm.github.io/cnrs_inria_a...

Official 🔗 www.ins2i.cnrs.fr/en/cnrsinfo/...

Don't wait!

Feeling that Paris is "The Place To Be" for computer vision and AI in general.

Min-p Sampling: arxiv.org/abs/2407.01082

1. Get max prob

2. Set the min prob to a threshold (in [0, 1]) times that max prob

3. Gather only tokens probs above that min prob

4. Sample in that pool, according to renormalized probs

More robust to change in temperature!
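The four steps can be sketched in a few lines of NumPy. The names `min_p_filter` and `sample_min_p` are hypothetical, and the sketch assumes the input is already a probability vector (after softmax and temperature).

```python
import numpy as np

def min_p_filter(probs, min_p):
    """Return renormalized probs after min-p truncation.

    `probs` is a 1-D probability vector; `min_p` is the threshold in [0, 1].
    """
    probs = np.asarray(probs, dtype=float)
    max_prob = probs.max()                      # step 1
    cutoff = min_p * max_prob                   # step 2
    kept = np.where(probs >= cutoff, probs, 0)  # step 3
    return kept / kept.sum()                    # step 4: renormalize

def sample_min_p(probs, min_p, rng=None):
    """Draw one token index from the truncated, renormalized distribution."""
    rng = np.random.default_rng() if rng is None else rng
    filtered = min_p_filter(probs, min_p)
    return int(rng.choice(len(filtered), p=filtered))
```

Because the cutoff scales with the top probability, a flat (high-temperature) distribution keeps many tokens while a peaked one keeps few. The top token always survives, so the pool is never empty.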

My growing list of #computervision researchers on Bsky.

Missed you? Let me know.

go.bsky.app/M7HGC3Y

Bluesky now has over 20M people!! 🎉

We've been adding over a million users per day for the last few days. To celebrate, here are 20 fun facts about Bluesky:

New people in AI have joined recently, I can't cite all, but here are some: @fguney.bsky.social @natalianeverova.bsky.social @damienteney.bsky.social @jdigne.bsky.social @nbonneel.bsky.social @steevenj7.bsky.social @douillard.bsky.social @ramealexandre.bsky.social @stonet2000.bsky.social

1/