Some text in heuristics/separation of results appears twice? Good post otherwise

Thank you for coming and for the great discussion!!

So excited for the RL theory meets experiment workshop tomorrow at NeurIPS: arlet-workshop.github.io/neurips2025/...

Talks look AMAZING and you can hear me be the foolish experimentalist on a panel

The paper looks cool! And it seems well-written :). I did not expect these exponents in Theorem 4.2 😆

Very excited to share our preprint: Self-Speculative Masked Diffusions

We speed up sampling of masked diffusion models by ~2x by using speculative sampling and a hybrid non-causal / causal transformer

arxiv.org/abs/2510.03929

w/ @vdebortoli.bsky.social, Jiaxin Shi, @arnauddoucet.bsky.social

We're finally out of stealth: percepta.ai

We're a research / engineering team working together in industries like health and logistics to ship ML tools that drastically improve productivity. If you're interested in ML and RL work that matters, come join us 😀

I am happy to share that our paper "Unsupervised Learning for Optimal Transport plan prediction between unbalanced graphs" was accepted at NeurIPS 2025! 🥳

Huge thanks to my co-authors @rflamary.bsky.social and Bertrand Thirion!

arxiv.org/abs/2506.12025

(1/5)

News 🎉 We’re thrilled to announce our final panelist: David Silver!

Don’t miss David and our amazing lineup of speakers—submit your latest RL work to our NeurIPS workshop.

📅 Extended deadline: Sept 2 (AoE)

We've extended the deadline for our workshop's calls for papers/ideas! Submit your work by August 29 AoE. Instructions on the website: arlet-workshop.github.io/neurips2025/...

The OpenReview link for our calls (for papers and ideas) is available, submit here: openreview.net/group?id=Neu...

We look forward to receiving your submissions!

last year's edition was so much fun I'm really looking forward to this one!! join us in San Diego :))

Was it recorded? 🤔

Join us for Nneka's presentation tomorrow! Last talk before the summer break.

Join us tomorrow for Dave's talk! He will present his recent work on randomised exploration, which received an outstanding paper award at ALT 2025 earlier this year.

new preprint with the amazing @lviano.bsky.social and @neu-rips.bsky.social on offline imitation learning! learned a lot :)

when the expert is hard to represent but the environment is simple, estimating a Q-value rather than the expert directly may be beneficial. lots of open questions left though!

🚨 New paper accepted at SIMODS! 🚨

“Nonlinear Meta-learning Can Guarantee Faster Rates”

arxiv.org/abs/2307.10870

When does meta learning work? Spoiler: generalise to new tasks by overfitting on your training tasks!

Here is why:

🧵👇

Dhruv Rohatgi will be giving a lecture on our recent work on comp-stat tradeoffs in next-token prediction at the RL Theory virtual seminar series (rl-theory.bsky.social) tomorrow at 2pm EST! Should be a fun talk---come check it out!!

new work on computing distances between stochastic processes ***based on sample paths only***! we can now:

- learn distances between Markov chains

- extract "encoder-decoder" pairs for representation learning

- with sample- and computational-complexity guarantees

read on for some quick details..

1/n

A new blog post with intuitions behind continuous-time Markov chains, a building block of diffusion language models, like @inceptionlabs.bsky.social's Mercury and Gemini Diffusion. This post touches on different ways of looking at Markov chains, connections to point processes, and more.

oh the inference blog posts are back 🥰

Mattes Mollenhauer, Nicole Mücke, Dimitri Meunier, Arthur Gretton: Regularized least squares learning with heavy-tailed noise is minimax optimal https://arxiv.org/abs/2505.14214 https://arxiv.org/pdf/2505.14214 https://arxiv.org/html/2505.14214

Later today, Sikata and Marcel will talk about their recent work on oracle-efficient RL with ensembles. Join us!

Excited to share what I've been up to: bringing text diffusion to Gemini!

Diffusion models are _fast_, and hold immense promise to challenge autoregressive models as the de facto standard for language modeling.

omg thanks

Community events and tutorials, list from the website

Workshops, list from the website

The tutorials, workshops, and community events for #COLT2025 have been announced!

Exciting topics and an impressive slate of speakers and events on June 30! The workshops have calls for contributions (⏰ May 16, 19, and 25): check them out!

learningtheory.org/colt2025/ind...

Announcing the first workshop on Foundations of Post-Training (FoPT) at COLT 2025!

📝 Soliciting abstracts/posters exploring theoretical & practical aspects of post-training and RL with language models!

🗓️ Deadline: May 19, 2025

looking forward to giving a talk at the 2025 GHOST day in the beautiful city of Poznan (Poland) --- hope to see you there this weekend!

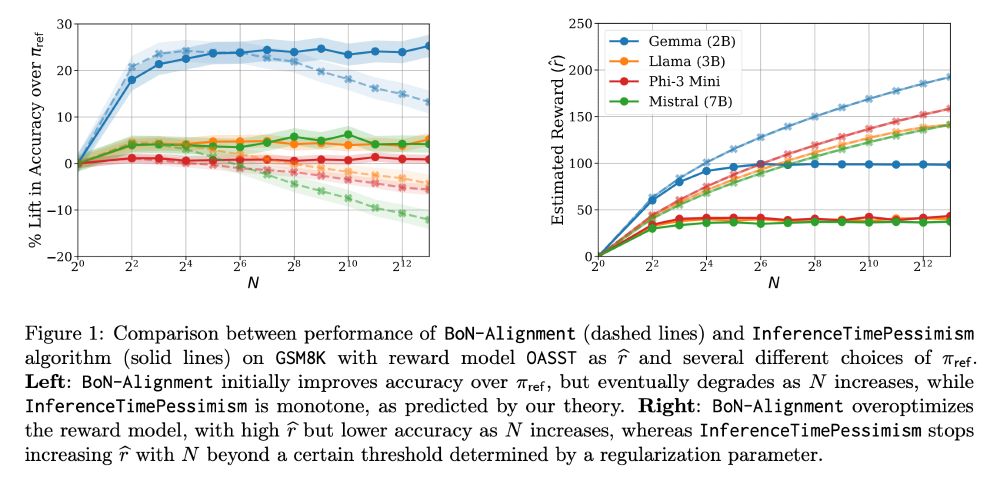

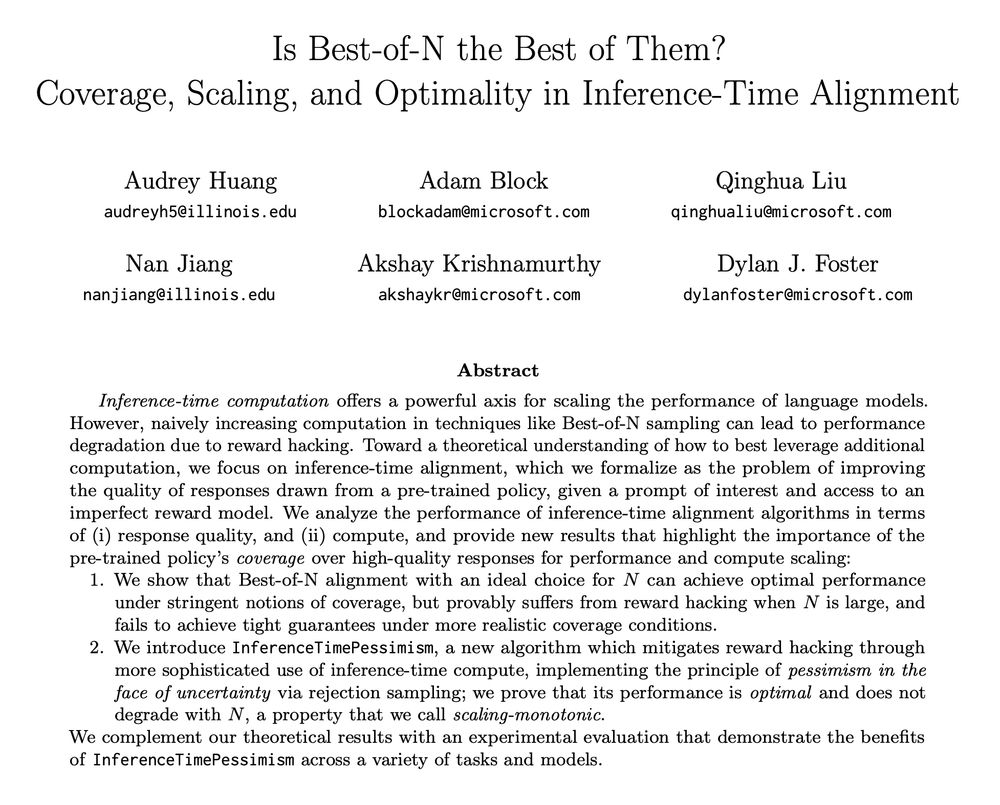

Is Best-of-N really the best we can do for language model inference?

New paper (appearing at ICML) led by the amazing Audrey Huang (ahahaudrey.bsky.social) with Adam Block, Qinghua Liu, Nan Jiang, and Akshay Krishnamurthy (akshaykr.bsky.social).

1/11

Spectral Representation for Causal Estimation with Hidden Confounders

at #AISTATS2025

A spectral method for causal effect estimation with hidden confounders, for instrumental variable and proxy causal learning

arxiv.org/abs/2407.10448

Haotian Sun, @antoine-mln.bsky.social, Tongzheng Ren, Bo Dai