BayesFlow released version 2.0.4, presented numerous findings at the MathPsych/ICCM 2025 conference at Ohio State University, and expanded its contributor list to 25 active members! Congrats to BayesFlow on all these huge new accomplishments!

I'm putting together a visualization workshop for PhD students 🧪📊

Looking for examples of the good, the bad, and the ugly.

Do you have examples of great (or awful) figures? Plots and overview/explainer figures are welcome.

Thanks 🧡

🧠 Check out the classic examples from Bayesian Cognitive Modeling: A Practical Course (Lee & Wagenmakers, 2013), translated into step-by-step tutorials with BayesFlow!

Interactive version: kucharssim.github.io/bayesflow-co...

PDF: osf.io/preprints/ps...

New preprint!

Individual differences in neurophysiological correlates of post-response adaptation: A model-based approach

osf.io/preprints/ps...

This work seeks to extract the effects of response monitoring on decision-making using model-based CogNeuro and methods to study individual differences.

Congrats, really impressive work (especially providing the many additional resources on the website)!

Abstract

Introduction: A key step in the Bayesian workflow for model building is the graphical assessment of model predictions, whether these are drawn from the prior or the posterior predictive distribution. The goal of these assessments is to identify whether the model is a reasonable (and ideally accurate) representation of the domain knowledge and/or the observed data. Many commonly used visual predictive checks can be misleading if their implicit assumptions do not match reality. Thus, there is a need for more guidance on selecting, interpreting, and diagnosing appropriate visualizations. Because a visual predictive check can itself be viewed as a model fit to data, assessing when this model fails to represent the data is important for drawing well-informed conclusions.

Demonstration: We present recommendations for appropriate visual predictive checks for observations that are continuous, discrete, or a mixture of the two. We also discuss diagnostics to aid in the selection of visual methods, specifically for detecting an incorrect assumption of continuously distributed data: identifying when data are likely to be discrete or to contain discrete components, detecting and estimating possible bounds in the data, and diagnosing the goodness of fit of density plots made through kernel density estimates.

Conclusion: We offer recommendations and diagnostic tools to mitigate ad hoc decision-making in visual predictive checks. These contributions aim to improve the robustness and interpretability of Bayesian model criticism practices.

New paper by Säilynoja, Johnson, Martin, and Vehtari: "Recommendations for visual predictive checks in Bayesian workflow" teemusailynoja.github.io/visual-predi... (also arxiv.org/abs/2503.01509)
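To make two of the diagnostics in the abstract more concrete, here is a minimal sketch under stated assumptions; these are simple stand-ins, not the paper's actual methods. `looks_discrete` is a hypothetical heuristic that flags data with many repeated values, and `kde_pit_distance` is a PIT-style check of a Gaussian kernel density estimate: if the density plot fits, the KDE's CDF evaluated at each observation should look roughly uniform. The rule-of-thumb bandwidth and the 0.5 threshold are assumptions.

```python
import math
import random
import statistics


def looks_discrete(values, max_unique_ratio=0.5):
    """Hypothetical heuristic: treat data as likely discrete when at most
    `max_unique_ratio` of the observations are distinct values."""
    return len(set(values)) / len(values) <= max_unique_ratio


def kde_cdf(data):
    """CDF of a Gaussian KDE on `data`, using a rule-of-thumb bandwidth
    1.06 * sd * n^(-1/5) (an assumption, not the paper's choice).
    Assumes the data are not all identical (sd > 0)."""
    n = len(data)
    h = 1.06 * statistics.stdev(data) * n ** (-0.2)

    def cdf(x):
        # Average of the Gaussian kernel CDFs centred on each data point.
        return sum(0.5 * (1.0 + math.erf((x - d) / (h * math.sqrt(2.0))))
                   for d in data) / n

    return cdf


def kde_pit_distance(data):
    """Largest gap between the ECDF of the PIT values and the uniform CDF
    (a Kolmogorov-Smirnov-style statistic); larger means a worse KDE fit."""
    cdf = kde_cdf(data)
    pit = sorted(cdf(x) for x in data)
    n = len(pit)
    return max(max((i + 1) / n - u, u - i / n) for i, u in enumerate(pit))


rng = random.Random(1)
counts = [rng.randint(0, 5) for _ in range(200)]    # at most 6 distinct values
normal = [rng.gauss(0.0, 1.0) for _ in range(200)]  # continuous draws
print(looks_discrete(counts), looks_discrete(normal))  # True False
print(kde_pit_distance(normal))
```

The distance is computed in-sample (the KDE is evaluated on the same data it was built from), which keeps the sketch short but makes it optimistic compared with a held-out check.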

A study with 5M+ data points explores the link between cognitive parameters and socioeconomic outcomes: The stability of processing speed was the strongest predictor.

BayesFlow facilitated efficient inference for complex decision-making models, scaling Bayesian workflows to big data.

🔗Paper

A reminder of our talk this Thursday (30th Jan) at 11am GMT. Paul Bürkner (TU Dortmund University) will talk about "Amortized Mixture and Multilevel Models". Sign up at listserv.csv.warwick... to receive the link.

Scholar inbox is the best paper recommender and I cannot recommend it enough as a conference companion. I don’t know how people do poster sessions without it.

1️⃣ An agent-based model simulates a dynamic population of professional speed climbers.

2️⃣ BayesFlow handles amortized parameter estimation in the SBI setting.

📣 Shoutout to @masonyoungblood.bsky.social & @sampassmore.bsky.social

📄 Preprint: osf.io/preprints/ps...

💻 Code: github.com/masonyoungbl...
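For readers new to amortized estimation, here is a toy illustration of the idea, assuming a Gaussian simulator and a linear least-squares estimator in place of the speed-climbing agent-based model and BayesFlow's neural networks (neither is shown here; all names are hypothetical). The simulator is run many times up front, a mapping from data to parameters is learned once, and any new dataset is then estimated with a single cheap evaluation.

```python
import random


def simulate(mu, rng, n_obs=20):
    """Toy simulator (assumption): n_obs draws from Normal(mu, 1)."""
    return [rng.gauss(mu, 1.0) for _ in range(n_obs)]


def train_amortized_estimator(n_sims=2000, seed=0):
    """Fit mu ~ a + b * mean(data) by least squares on simulated pairs.

    The training cost is paid once; afterwards every new dataset is
    mapped to an estimate without re-running the simulator.
    """
    rng = random.Random(seed)
    xs, ys = [], []
    for _ in range(n_sims):
        mu = rng.uniform(-3.0, 3.0)        # draw a parameter from the prior
        data = simulate(mu, rng)           # simulate a dataset
        xs.append(sum(data) / len(data))   # summary statistic: sample mean
        ys.append(mu)
    x_bar = sum(xs) / n_sims
    y_bar = sum(ys) / n_sims
    b = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum(
        (x - x_bar) ** 2 for x in xs)
    a = y_bar - b * x_bar
    return lambda data: a + b * (sum(data) / len(data))


estimator = train_amortized_estimator()
new_data = simulate(1.5, random.Random(42))  # pretend this is observed data
print(round(estimator(new_data), 2))
```

BayesFlow learns full posterior distributions rather than a single point estimate; this sketch only shows the amortization pattern of "train once, infer many times".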

The meme that never dies ✨

Check out this project on modeling stationary and time-varying parameters with BayesFlow.

The family of methods is called "neural superstatistics", how can it not be cool!? 😎

👨‍💻 Led by @schumacherlu.bsky.social
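To make "stationary and time-varying parameters" concrete, here is a minimal two-level simulator sketch, assuming a Gaussian observation model whose mean drifts as a random walk. The function name, noise levels, and priors are hypothetical and this is not the project's model; a simulator of this shape is the kind of input an amortized method such as BayesFlow would be trained on.

```python
import random


def simulate_superstatistics(n_steps, sigma_obs=0.5, sigma_walk=0.1, seed=None):
    """Toy two-level simulator (assumption, not the project's model).

    Low level: each observation y_t ~ Normal(theta_t, sigma_obs), where
    sigma_obs is a stationary parameter shared by all time steps.
    High level: theta_t follows a Gaussian random walk with step size
    sigma_walk, so it is a time-varying parameter.
    """
    rng = random.Random(seed)
    theta = rng.gauss(0.0, 1.0)  # initial value of the time-varying parameter
    thetas, ys = [], []
    for _ in range(n_steps):
        thetas.append(theta)
        ys.append(rng.gauss(theta, sigma_obs))
        theta += rng.gauss(0.0, sigma_walk)  # transition to the next step
    return thetas, ys


thetas, ys = simulate_superstatistics(100, seed=0)
print(len(thetas), len(ys))  # 100 100
```

Setting `sigma_walk=0.0` collapses the model back to a purely stationary one, which is a quick sanity check on the simulator.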

I can definitely relate to looking up your own writing to figure out how you actually did things 😅

@dtfrazier.bsky.social

Stellar TL;DR of our recent work by our team! ✨

To celebrate the new beginnings on Bluesky, let's reminisce about one of our highlights from the old days:

The unexpected shout-out by @fchollet.bsky.social that made everyone go crazy on the BayesFlow Slack server and led to a 15% increase in GitHub stars.

The beta version of BayesFlow 2.0 is becoming more powerful and stable by the day. If you are curious about Amortized Bayesian Inference, give BayesFlow a try!

github.com/bayesflow-or...

Thrilled to contribute to this work led by David Frazier providing theory for NPE/NLE in simulation-based inference. These methods are known to match the accuracy of ABC and BSL with fewer simulations; this paper rigorously shows why.

arxiv.org/abs/2411.12068

For those who don’t know yet, I am organising an online talk series together with Arno Solin on “Advances in Probabilistic Machine Learning (APML)”.

It’s free for everyone to join, and the series supports early-career researchers!

You can register and check out the schedule here: aaltoml.github.io/apml/

The first list filled up, so here's a second list of AI for Science researchers on bluesky.

Let me know if I missed you / if you'd like to join!

bsky.app/starter-pack...

I'm making a list of AI for Science researchers on bluesky — let me know if I missed you / if you'd like to join!

go.bsky.app/AcP9Lix

✨ Super excited to share our paper **Ensemble everything everywhere: Multi-scale aggregation for adversarial robustness** arxiv.org/abs/2408.05446 ✨

Inspired by biology, we 1) get adversarial robustness + interpretability for free, 2) turn classifiers into generators, and 3) design attacks on GPT-4

Bluesky now has over 20M people!! 🎉

We've been adding over a million users per day for the last few days. To celebrate, here are 20 fun facts about Bluesky:

🙋

Same here :)

🙋‍♂️ Working on deep learning for taming complex cognitive models.

Would be great if you could add me!

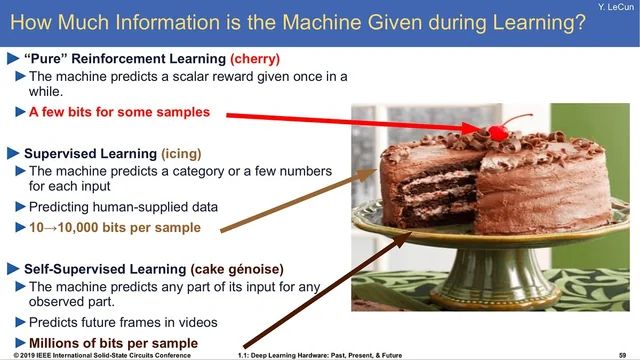

Yann LeCun’s analogy of intelligence being a cake of self-supervised, supervised, and RL

Eight years later, Yann LeCun’s cake 🍰 analogy was spot on: self-supervised > supervised > RL

> “If intelligence is a cake, the bulk of the cake is unsupervised learning, the icing on the cake is supervised learning, and the cherry on the cake is reinforcement learning (RL).”

Well, at least in the long run... 😄