“casual interception” as defined in \citep{}…

Ever dreamed of AI agents learning through unsupervised interaction with the open world? Our latest preprint introduces NNetNav-Live, which collects training data through exploration on real websites and hindsight labeling, producing a SOTA OSS agent.

controlling a browser / computer!

but requires a bit more tooling to set it up.

Please check out our paper for more details: arxiv.org/pdf/2410.02907

And our code if you want a NNetNav-ed model for your own domain:

github.com/MurtyShikhar...

Done with collaborators: @zhuhao.me, Dzmitry Bahdanau and @chrmanning.bsky.social

We find that cross-website robustness is limited, and performance almost always goes up when we incorporate in-domain NNetNav data. This makes it even more important to work on unsupervised learning for agents - how are you going to collect human data for *any* website? [6/n]

We use this data for SFT-ing Llama-3.1-8B. Our best models outperform zero-shot GPT-4 on both WebArena and WebVoyager, and reach SoTA performance among unsupervised methods on both benchmarks [5/n]

We use NNetNav to collect around 10k workflows for over 20 websites including 15 live websites, and 5 self-hosted websites.

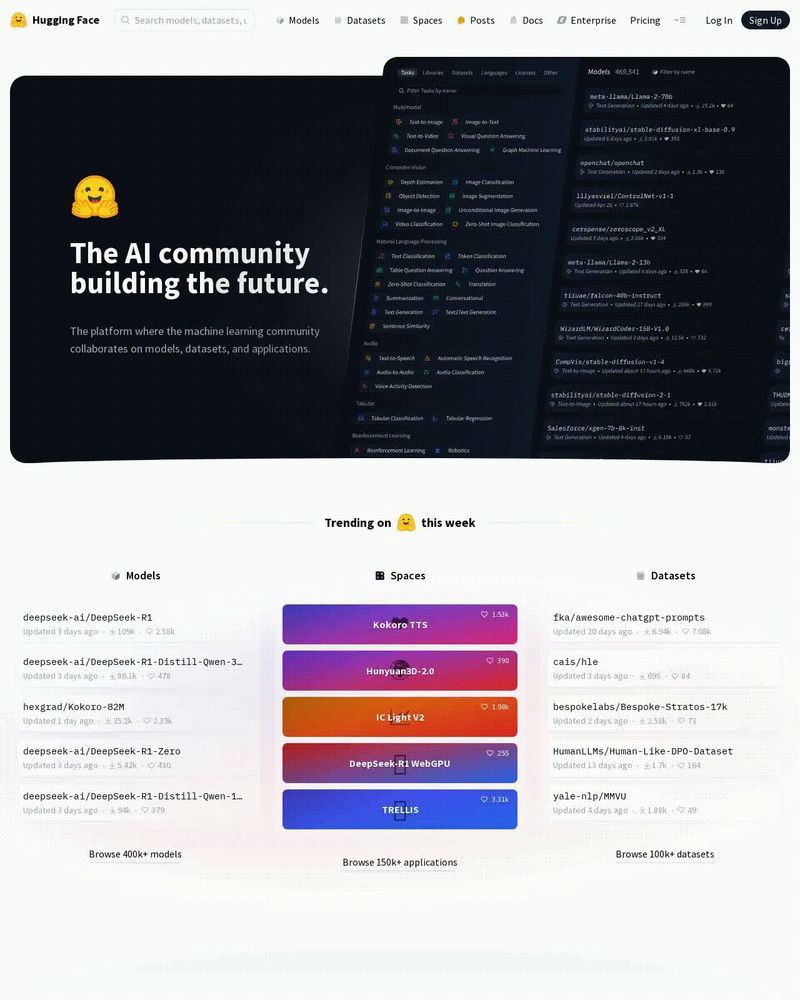

Data is available on 🤗: huggingface.co/datasets/sta...

huggingface.co/datasets/sta...

[4/n]

Main ideas behind NNetNav exploration

1. Complex goals have intermediate subgoals, so complex trajectories must have meaningful sub-trajectories.

2. Use an LM instruction relabeler + judge to test whether the trajectory so far is meaningful. If yes, continue exploring; otherwise, prune. [3/n]
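The two ideas above can be sketched roughly as follows. This is a minimal illustration, not the NNetNav implementation: `explore`, the three callables, and the `max_steps` / `check_every` parameters are all hypothetical names and values chosen for the example, and the toy policy, relabeler, and judge stand in for what would be LM calls.

```python
from typing import Callable, List, Tuple

Trajectory = List[str]  # a trajectory is just a list of action strings here


def explore(
    propose_action: Callable[[Trajectory], str],   # hypothetical exploration policy
    relabel: Callable[[Trajectory], str],          # hypothetical hindsight relabeler
    judge: Callable[[Trajectory, str], bool],      # hypothetical meaningfulness judge
    max_steps: int = 12,                           # assumed step budget
    check_every: int = 4,                          # assumed pruning interval
) -> List[Tuple[Trajectory, str]]:
    """Run one exploration episode, pruning when the prefix is not meaningful.

    Returns (sub-trajectory, hindsight instruction) pairs collected so far.
    """
    trajectory: Trajectory = []
    demonstrations: List[Tuple[Trajectory, str]] = []
    for step in range(1, max_steps + 1):
        trajectory.append(propose_action(trajectory))
        if step % check_every == 0:
            # Label the trajectory-so-far in hindsight, then ask the judge
            # whether that prefix accomplishes something meaningful.
            instruction = relabel(trajectory)
            if not judge(trajectory, instruction):
                break  # prune: stop exploring this episode
            demonstrations.append((list(trajectory), instruction))
    return demonstrations


# Toy stand-ins so the sketch runs without any LM or browser.
def toy_policy(trajectory: Trajectory) -> str:
    actions = ["click", "type", "submit", "scroll"]
    return actions[len(trajectory) % len(actions)]


def toy_relabel(trajectory: Trajectory) -> str:
    return "task covering: " + " > ".join(trajectory)


def toy_judge(trajectory: Trajectory, instruction: str) -> bool:
    return len(trajectory) <= 8  # pretend longer prefixes stop being meaningful


demos = explore(toy_policy, toy_relabel, toy_judge)
```

With these toy stand-ins, the episode keeps the 4-step and 8-step prefixes and is pruned at step 12, so every surviving demonstration is a meaningful sub-trajectory paired with its hindsight instruction.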

NNetNav uses a structured exploration method to efficiently search and collect traces on live websites, which are retroactively labeled into instructions, finding a strikingly diverse set of workflows for any website (e.g. this plot) [2/n]

Want to make a browser agent for *any* domain like banking or healthcare?

We propose methods for training LLMs with open-ended, unsupervised interaction on live websites:

✅ OSS SoTA on WebVoyager

✅ world's smallest high-performing web-agent

Try it here: nnetnav.dev

going to stay off twitter for my own mental health. something has gone horribly wrong with that platform.

Couldn't make it to NeurIPS due to work, but do check out our workshop happening in West Ballroom B. Lots of cool things to come, including a very fun panel!

Come visit our poster "MoEUT: Mixture-of-Experts Universal Transformers" on Friday at 4:30 in East Exhibit Hall A-C #1907 on #NeurIPS2024. With Kazuki Irie, Jürgen Schmidhuber, Christopher Potts and @chrmanning.bsky.social.

The extraordinary recent takeover of ML/AI by #NLP is well-known but insufficiently reflected on.

Look at the @neuripsconf.bsky.social tutorials in 2024!

neurips.cc/virtual/2024...

14 tutorials; 6 have "LLM" in the title; 4 more cover foundation models, with large NLP coverage. That's > 70% 😲

🚨 Thrilled to share that Compositional Generalization Across Distributional Shifts with Sparse Tree Operations received a spotlight award at #NeurIPS2024! 🌟 I'll present a poster on Tuesday and give an invited lightning talk at the System 2 Reasoning Workshop on Sunday. 🧵👇

AgentLab diagram. The image describes AgentLab, a framework for efficient parallel experiments with agents. It highlights: Core Agent Features: Dynamic Prompting and a Unified LLM API for interacting with large language models. BrowserGym Platform: A tool for testing agents on benchmarks like WebArena, WorkArena, MiniWoB, and others. Key Features: Reproducibility, a Unified Leaderboard, an analysis tool called Xray, and a Dataset for sharing agent traces. Blue elements represent AgentLab components.

🧵-1

We are thrilled to release #AgentLab, a new open-source package for developing and evaluating web agents. This builds on the new #BrowserGym package which supports 10 different benchmarks, including #WebArena.

Folks, I'm not going to be at Neurips this year, but we have an *awesome* workshop that i'm super proud of.

Go attend, and use the link below to ask all of your burning questions about LLM reasoning, agents and compositionality!

🎊Excited for #neurips2024 and our "System 2 Reasoning at Scale" workshop. We have an exciting lineup of speakers who will answer your most burning questions about AI and reasoning 🚀

🔥Got spicy questions? Submit & vote here:

app.sli.do/event/dJNU63...

I also wear the AI agents researcher hat. Can't say i'm similarly impressed by reviewers in that community...

ACL syntax track reviewers >> almost any other conference.

These folks care about their sub-field and i learn something new every time!

Now, reviewers are upset if we only finetune sub 10B parameter models!

for more context: we are training the probe on sentences from PTB / BLiMP

thx for sharing, though semantic parsing almost certainly benefits from modeling syntax :)

SRL probe still rewards hidden states that model dependency relations, no? would like a probe that's agnostic to how well the underlying network models syntax

could i get added? thx for making this!!

What is a probing task that is purely about semantics?

Context: I have a probe trained to predict dependency relations, and would like to train another one on a semantics only task (for research purposes)

To be fair, after some prompt engineering:

German:

(S
  (NP (DT Der) (NN Mann))
  (VP (VB mag)
    (NP (JJ schwarze) (NNS Katzen))))

Japanese:

(S
  (NP (NN Otoko) (PP wa))
  (VP
    (NP (JJ kuro) (NN neko) (PP ga))

Asked GPT-4o to draw parse trees in two languages:

Hot take (since it's still just friends on this platform):

It's crazy how the classic "sample and rerank" baseline from machine translation and IR got rebranded as "scaling up inference-time compute".

nothing but blue skies, for posting puns