📣 Please share: We invite submissions to the 29th International Conference on Artificial Intelligence and Statistics (#AISTATS 2026) and welcome paper submissions at the intersection of AI, machine learning, statistics, and related areas. [1/3]

I'm looking for my first PhD student! We will push the frontiers of probabilistic machine learning for the molecular sciences, and study how to design new algorithms that exploit the unique properties of molecular systems to learn about the world.

efzu.fa.em2.oraclecloud.com/hcmUI/Candid...

EurIPS is coming! 📣 Mark your calendar for Dec. 2-7, 2025 in Copenhagen 📅

EurIPS is a community-organized conference where you can present accepted NeurIPS 2025 papers. It is endorsed by @neuripsconf.bsky.social and @nordicair.bsky.social and co-developed by @ellis.eu

eurips.cc

📣 Excited to present our ICLR spotlight paper at #ICLR2025!

"Bayesian Optimization via Continual Variational Last Layer Training"

📍 Poster #368 (Hall 3 + Hall 2B)

⏰ Friday during Poster Session 3

If you're in Singapore and interested in BO or Bayesian methods, definitely stop by!

More info👇

If you would like to read the paper, download the models or catch us at AISTATS, here are the details:

📑: arxiv.org/abs/2503.13296.

📍: Poster Session 1 - Poster 109.

Code+Models: github.com/jonasvj/OnLo... (7/7)

Finally, we open-source all of our trained BNNs for further analysis - we do this due to the computational efforts required to train these models, and to allow further analysis of empirical results that we find highly unintuitive and surprising. (6/7)

We also conduct a number of sensitivity and ablation studies to explain the different predictive performance between BNN and DE-BNNs. (5/7)

We show that increased out-of-distribution performance in DE-BNNs often comes at an in-distribution performance cost and that DEs generally outperform DE-BNNs on in-distribution metrics for large ensemble sizes. (4/7)

Surprisingly, we find that across a number of datasets, architectures, approximate inference methods and tasks, this is not the case when the ensembles grow large enough (but not in the asymptotic regime). A few key points from the paper: (3/7)

In this work we investigated the commonly held belief that trivially equipping Deep Ensembles (DEs) with local posterior structure (obtaining what we call DE-BNNs) should improve predictive uncertainty and model calibration. (2/7)

I will soon be travelling to Thailand to present our recently accepted paper "On Local Posterior Structure in Deep Ensembles" at AISTATS! The paper was written with my joint first author Jonas Vestergaard Jensen, as well as Mikkel Schmidt and Michael Riis Andersen. (1/7)

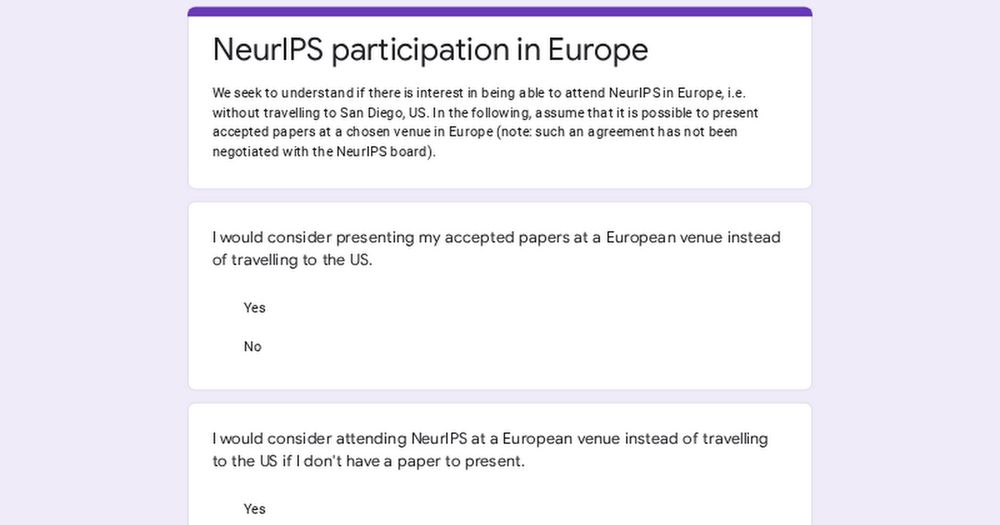

Would you present your next NeurIPS paper in Europe instead of traveling to San Diego (US) if this were an option? Søren Hauberg (DTU) and I would love to hear the answer through this poll: (1/6)

Our paper got accepted to ICLR 2025! 🎉 Looking forward to meeting everyone in Singapore!

BO with Variational Last Layers as Surrogate

📚 New Paper with collaborators from DTU, Vector, & Google DeepMind

A neural net-based approach to BO that performs well on classic, small-scale problems and can efficiently scale far beyond GP surrogate models.

Visit our poster @ NeurIPS Bayesian decision-making workshop today!

More info👇