Another year, another Thesis Fast Forward, and a fantastic set of work! Thanks Ruben for keeping it going!

23.01.2026 20:11

👍 3

🔁 1

💬 0

📌 0

SIGGRAPH Thesis Fastforward 2026

YouTube video by ACMSIGGRAPH

The #SIGGRAPH Thesis Fast Forward 2026 is here!

Learn about the future of CG in 9 PhD theses: from Monte Carlo PDE solvers and photorealistic 3D avatars to fantasy-based rehab games and modular umbrella meshes. 🎓✨

youtu.be/FlrTZEJeEXs

23.01.2026 08:58

👍 5

🔁 6

💬 0

📌 1

I asked my husband if there is a French equivalent of "sticks and stones will break my bones" and he gave me "the toad's drool doesn't reach the white dove" and "the train of your insults is rolling on the rails of my indifference" and now I would like both of these on a t-shirt

03.12.2025 12:03

👍 47

🔁 13

💬 4

📌 2

Julia Guerrero Viu @juliagviu.bsky.social, a researcher from Aragon, receives the 2025 SCIE-Zonta-Sngular award, which promotes the visibility of women in computing 🤩 https://shre.ink/oeUz

Congratulations! Proud of our #genteunizar 🫶

03.11.2025 10:35

👍 7

🔁 2

💬 0

📌 0

I was at #AdobeMAX this week to present #projectSurfaceSwap, our surface selection and replacement tech!

Check it out here: youtu.be/Xg4n60hYfhA?...

What a blast the Sneaks were!

30.10.2025 22:51

👍 9

🔁 0

💬 0

📌 0

The #SIGGRAPH Thesis Fast Forward 2026 submissions are now open! Deadline: Nov 7th 2025. Let the world know what you've been working on in your PhD! More info at research.siggraph.org/thesisff/ and check out last year's iteration youtube.com/watch?v=8esF...

07.10.2025 15:05

👍 4

🔁 4

💬 0

📌 0

Who's Adam?

18.09.2025 07:43

👍 2

🔁 0

💬 1

📌 0

Sneak peek into the live event. That's the most important part of the FF; the other 2.5 hours pale in comparison.

19.08.2025 12:53

👍 3

🔁 0

💬 0

📌 0

Can we meet for a beer/coffee and talk about field trends, open scientific questions, the future of our species (this may get mildly depressing) and the meaning of life instead?

19.08.2025 12:51

👍 2

🔁 0

💬 0

📌 0

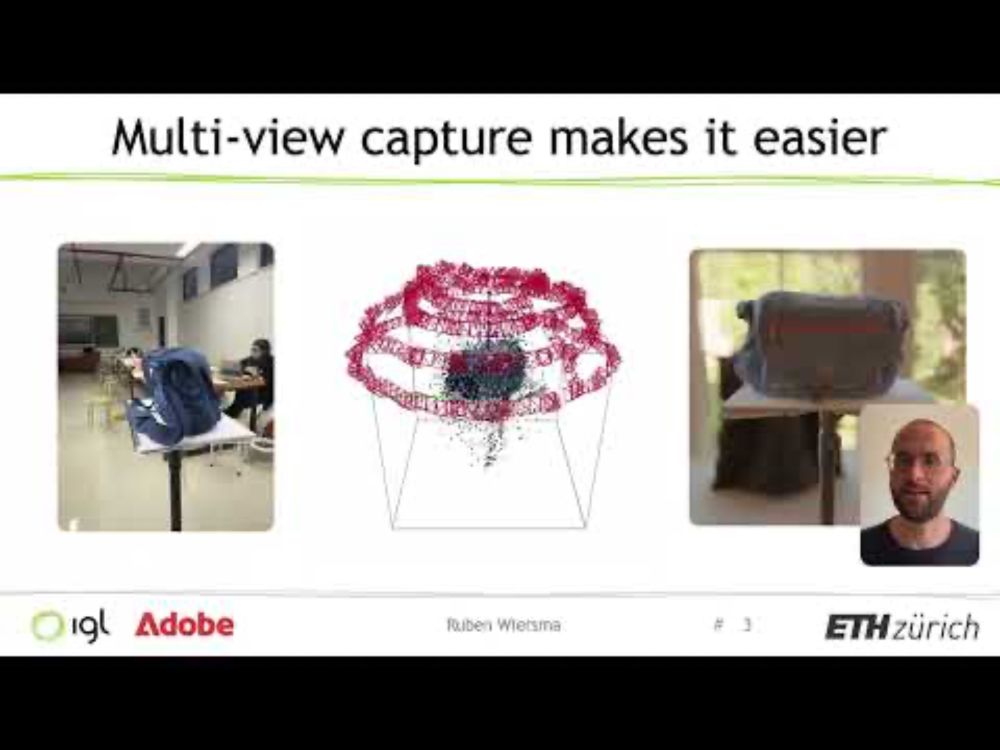

Uncertainty for SVBRDF Acquisition using Frequency Analysis - SIGGRAPH 2025

YouTube video by Ruben Wiersma

If you're confused, I uploaded the presentation as well www.youtube.com/watch?v=jv7o...

18.08.2025 14:04

👍 6

🔁 3

💬 1

📌 0

Fast Forward SIGGRAPH 2025 - Uncertainty for SVBRDF Acquisition using Frequency Analysis

YouTube video by Ruben Wiersma

Our fast forward for SIGGRAPH 2025. Keep SIGGRAPH weird! www.youtube.com/watch?v=sYel...

18.08.2025 14:04

👍 16

🔁 6

💬 2

📌 1

I will be presenting in the 10.30 session in room 208-209. I will share thoughts about implicit/generative and explicit appearance representations. See you there if that sounds interesting!

13.08.2025 16:39

👍 2

🔁 1

💬 0

📌 0

Come check out what the newest generation has been up to!

09.08.2025 14:44

👍 3

🔁 0

💬 0

📌 0

It's in Vancouver ;)

08.08.2025 20:08

👍 0

🔁 0

💬 1

📌 0

Presentation - SIGGRAPH 2025 Conference Schedule

🧑‍🎓 I have the pleasure of presenting at the Best of Eurographics session at #SIGGRAPH2025 next week! I’ll be looking back at our work on appearance authoring, editing & understanding. Come by and say hi! 🔗 s2025.conference-schedule.org/presentation...

08.08.2025 18:10

👍 7

🔁 1

💬 1

📌 1

Mark your calendars for the #SIGGRAPH 2025 Thesis Fast Forward in-person event. Recent PhD graduates will present their thesis work and answer questions on Monday 11 August, 10:30-11:30am: s2025.conference-schedule.org/presentation...

30.07.2025 07:42

👍 8

🔁 3

💬 0

📌 1

Folks in the #SIGGRAPH community:

You may or may not be aware of the controversy around the next #SIGGRAPHAsia location, summarized here www.cs.toronto.edu/~jacobson/we...

If you're concerned consider signing this letter docs.google.com/document/d/1...

via this form

docs.google.com/forms/d/e/1F...

20.06.2025 16:13

👍 26

🔁 7

💬 1

📌 2

Attending @cvprconference.bsky.social and looking for a PhD or postdoc position in the area of 3D reconstruction (Gaussian splatting, NeRFs, scene understanding, etc.)? Find me or drop me an email ;)

12.06.2025 16:42

👍 17

🔁 10

💬 0

📌 0

⭐ Image editing without paired data, delivering on the promise of RGB<->X! Edit in intrinsic space, get back your image with the desired modification!

The method is quite fun too: conditional token optimization and noise inversion.

12.06.2025 21:51

👍 6

🔁 2

💬 1

📌 0

I feel like "j'veux dire..." would be a pretty solid equivalent

09.06.2025 21:08

👍 1

🔁 0

💬 0

📌 0

Lots of ads for this at the Old Street tube stop. Who is this ad for? Is the goal negative buzz?

06.06.2025 11:03

👍 0

🔁 0

💬 1

📌 0

Thanks! And congrats on the paper award!

17.05.2025 16:21

👍 1

🔁 0

💬 1

📌 0

Thanks for diving into the crazy world of materials with me!

17.05.2025 16:02

👍 1

🔁 0

💬 0

📌 0

Thanks Ana, hope to see you in Vancouver?

17.05.2025 16:02

👍 1

🔁 0

💬 1

📌 0

Thanks Vova!

17.05.2025 16:01

👍 0

🔁 0

💬 0

📌 0

Brilliant insights from @michael-j-black.bsky.social on the importance of data and 3D+ for 4D foundation models that understand humans, and the future of embodied intelligence in the last keynote talk of #Eurographics2025!

See you next year in Aachen :)

16.05.2025 11:23

👍 19

🔁 1

💬 0

📌 0

Awesome look into the future of humanoid robots and what we can learn from character animation in Karen Liu’s keynote at #Eurographics2025!

15.05.2025 16:34

👍 7

🔁 1

💬 0

📌 0

Amazing keynote by Alyosha Efros on the role of data in visual computing at #Eurographics2025!

Thought-provoking insights from generative models to 3D perception :)

14.05.2025 15:41

👍 14

🔁 2

💬 0

📌 0