Grok fact-checks our paper on Grok fact-checking - and it approves!

🚨New WP "@Grok is this true?"

We analyze 1.6M fact-check requests on X (Grok & Perplexity)

📌Usage is polarized, Grok users more likely to be Reps

📌BUT Rep posts rated as false more often—even by Grok

📌Bot agreement with factchecks is OK but not great; APIs match fact-checkers

osf.io/preprints/ps...

In a new working paper, @thomasrenault.bsky.social, @mmosleh.bsky.social & @dgrand.bsky.social break down how Grok is being used to fact-check, and on whom.

osf.io/preprints/ps...

New on @indicator.media: "@grok is this true" was the single most frequent reply tagging X's AI chatbot in the six months following its launch.

How the sudden rise of the FN upended the political game

(A la marge)

blogs.alternatives-economiques.fr/anota/2025/1...

We are seeking a Fellow to lead cutting-edge research on short-form video content and its societal implications. Bridging the gap between computer vision, causal inference, and computational social science, the Fellow will focus on the large-scale analysis of TikTok data (using an existing, massive dataset). The position involves developing novel multimodal methods to understand how short-form algorithmic content shapes public opinion, political polarization, and online culture. This is a unique opportunity to work at the forefront of the field, combining rigorous social science research designs with state-of-the-art computational techniques.

The Center for Information Technology Policy at Princeton invites applications for a Postdoctoral Fellow to work with an interdisciplinary team (me, @bstewart.bsky.social, and @manoelhortaribeiro.bsky.social).

Link: puwebp.princeton.edu/AcadHire/app...

Please apply by THIS SUNDAY, Dec. 14!

Apply for funding to work with me and Gord at Cornell!

Would love to read more. Is there a full paper? Or a full version of the poster? Thx

3 months into Meta’s pivot to Community Notes, the company has yet to share any data about its crowdsourced moderation feature.

With help from three @indicator.media readers in the pilot, I tried to piece together how it’s going:

indicator.media/p/a-first-lo...

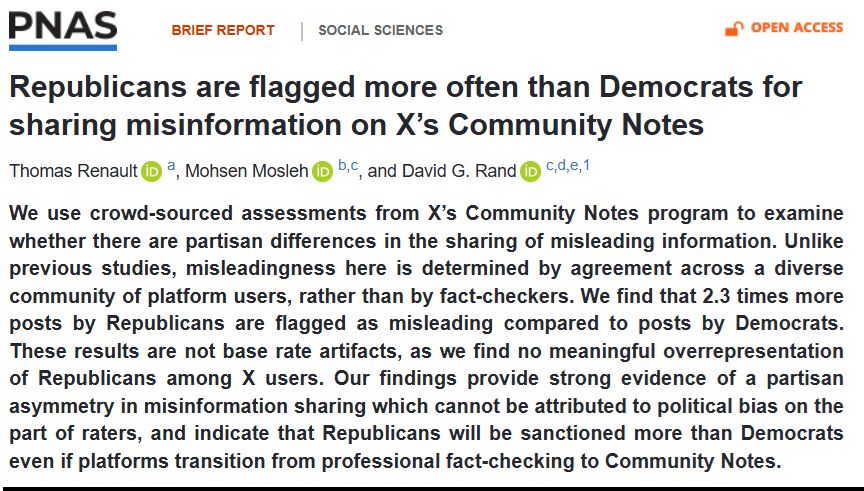

🚨In PNAS🚨

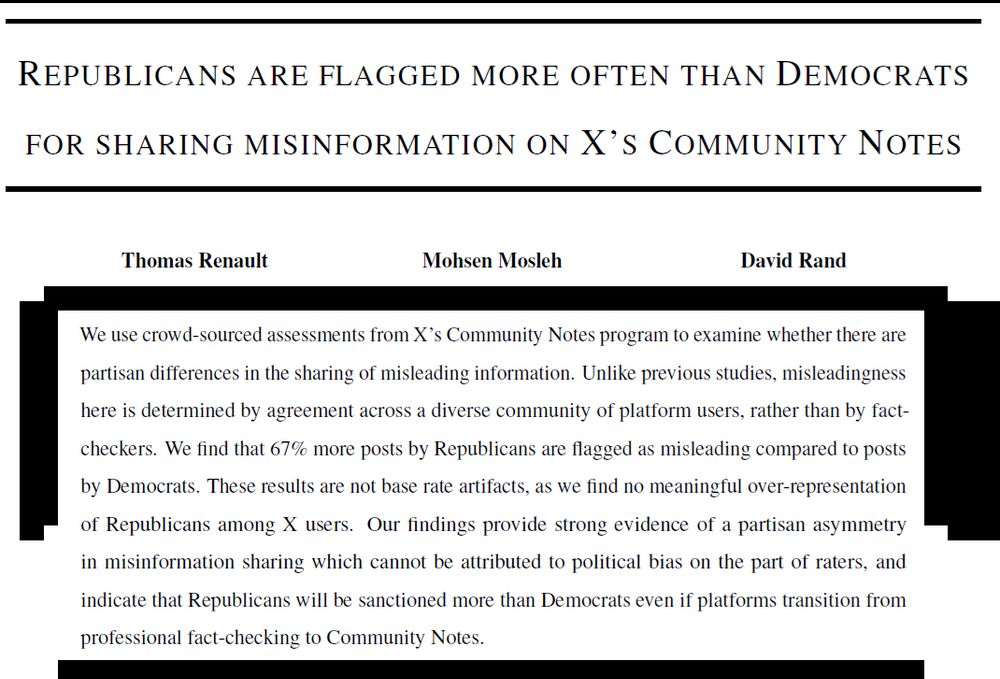

The right often accuses fact-checkers of political bias

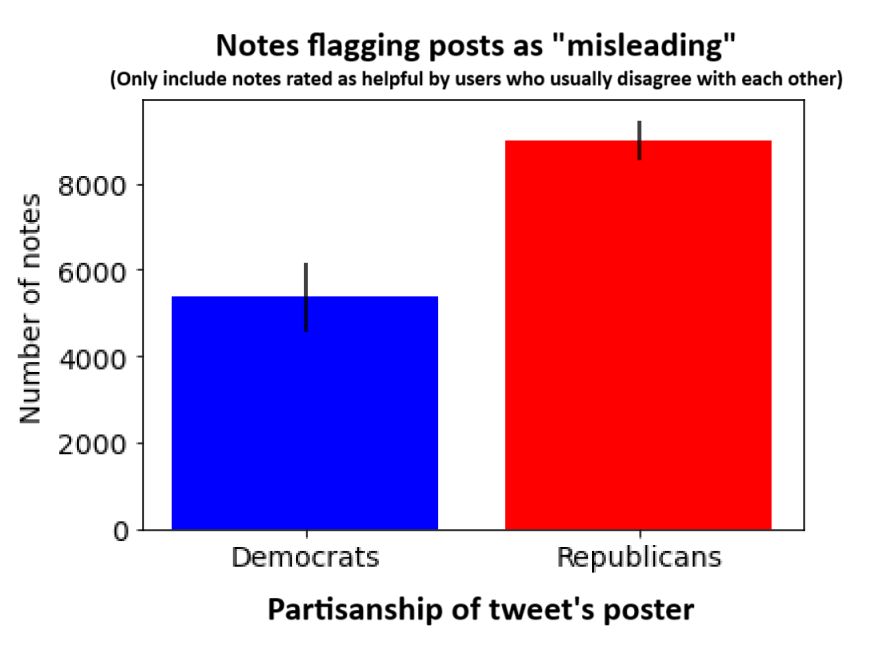

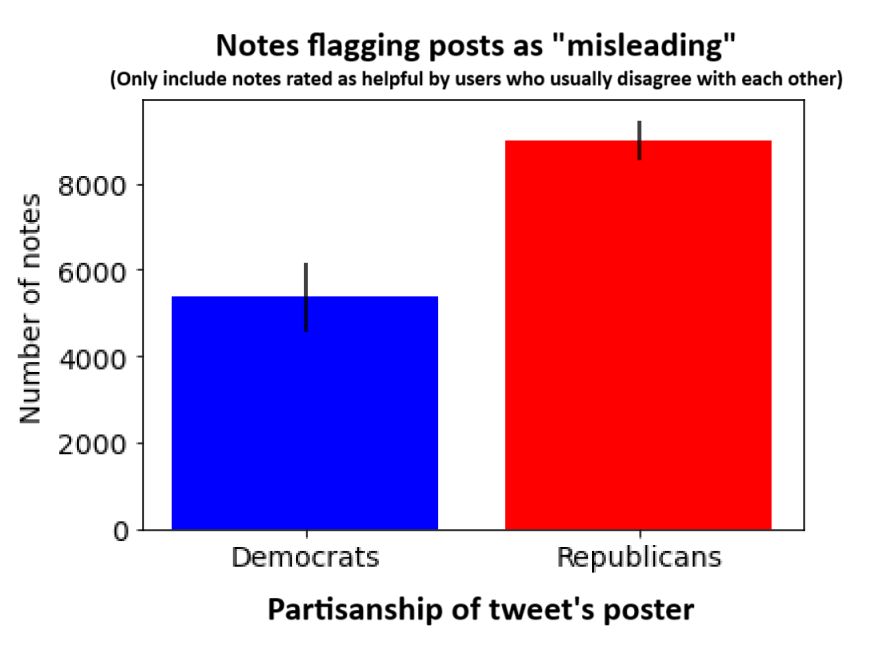

But we analyzed Community Notes on Musk's X and found posts flagged as "misleading" are 2.3x more likely to be written by Reps than Dems!

The issue is Reps sharing misinformation, not fact-checker bias...

www.pnas.org/doi/10.1073/...

With @dgrand.bsky.social and @mmosleh.bsky.social

🔗 Read the Brief Report (Open Access) here: www.pnas.org/doi/10.1073/...

— We find a real, measurable partisan gap in misinformation sharing: even a “crowdsourced,” supposedly neutral system ends up flagging more Republican posts

— This challenges arguments that fact-checkers should be dismissed on the grounds that they’re too biased.

We analyzed every English Community Note on X over 1.5 years. In Community Notes:

— Users from across the political spectrum propose and rate notes

— Notes are only published when there is agreement between people who typically disagree (via X’s “bridging algorithm”)

💡 Why is this important?

Many past studies have found that Republicans share more misinformation. But critics argue those findings could be driven by:

— Bias in how fact-checkers or academics define misinformation

— Bias in which news stories researchers choose to study

🚨 New in PNAS 🚨

Posts by Republicans are 2.3 times more likely to be flagged as misleading than those by Democrats on X's Community Notes. A 🧵

pnas.org/doi/epub/10.... (Open Access)

Interested in how people talk to each other online? Care about causal inference and/or NLP? Want to design and implement field experiments?

Come do a PhD with Dominik Hangartner, me, and a bunch of awesome people at IPL in Zurich:

jobs.ethz.ch/job/view/JOP...

Many thanks to lead author @thomasrenault.bsky.social who does amazing work on Community Notes and other topics; and the always-wonderful coauthor @mmosleh.bsky.social

For more of my group's work on misinformation, check out this doc: docs.google.com/document/d/1...

We examine all English notes 1/23-6/24. More notes are proposed on tweets written by Reps than Dems. The partisan diff is MUCH bigger when restricting to "helpful" (ie ~unbiased) notes: 63% on Reps, 37% on Dems. The "vox populi" on X has spoken and concluded that Reps share more misinfo than Dems!

Accusations of bias against conservatives drove Musk to buy Twitter, then gut fact-checking and ramp up Community Notes. This month, Zuckerberg did the same at Meta. But greater sanctioning of conservatives could just be the result of conservatives sharing more misinformation bsky.app/profile/dgra...

🚨New WP🚨

Remember Musk+Zuck+Trump+Jordan etc crying fact-checker bias b/c Reps were flagged more than Dems? We analyzed Community Notes on Musk's X and guess what: posts flagged as "misleading" are 67% more likely to be written by Reps! The issue is Reps, not fact-checkers...

osf.io/preprints/ps...

🚨OpEd+data: Meta is out of step with public opinion🚨

Zuck cut moderation b/c he said people no longer want it. But he's wrong!

We polled 1k Americans and most people, including a majority of Reps:

i) want content moderation

ii) don't want Community Notes w/o fact-checkers

thehill.com/opinion/tech...