For deeper analysis on AI advertising and other business models, check out @cdt.org’s recent report Risky Business: Advanced AI Companies’ Race for Revenue cdt.org/insights/ris...

The choices that advanced AI companies make today about how they’ll cover the mind-boggling costs they are taking on to build AI systems will inevitably shape the systems themselves. That could have an enormous impact on our world for decades to come.

Anthropic’s announcement that it won’t incorporate ads into Claude engages honestly with the fact that advertising can cultivate deeply perverse incentives, even when platforms claim otherwise.

"There are many good places for advertising. A conversation with Claude is not one of them." www.anthropic.com/news/claude-...

I've been surprised at just how little we've been talking about the privacy implications of frontier AI beyond training data. tl;dr -- the architecture of AI systems will matter a lot, and developers can act now to do better. New piece in @technologyreview.com: www.technologyreview.com/2026/01/28/1...

For deeper analysis, CDT’s recent report Risky Business: Advanced AI Companies’ Race for Revenue explores the array of business models that advanced AI companies are currently implementing or considering, including advertising, and how they are likely to affect users. cdt.org/insights/ris... (5/5)

AI companies should be extremely careful not to repeat the many mistakes made in, and the harms that have resulted from, the adoption of personalized ads on social media and around the web. (4/5)

People are using chatbots for all sorts of reasons, including as companions and advisors. There’s a lot at stake when that tool tries to exploit users’ trust to hawk advertisers’ goods. (3/5)

Even if AI platforms don’t share data directly with advertisers, business models based on targeted advertising put really dangerous incentives in place when it comes to user privacy. This decision raises real questions about how business models will shape AI in the long run. (2/5)

It's happening. OpenAI is piloting ads in ChatGPT. openai.com/index/our-ap...

In introducing ads to ChatGPT, OpenAI is starting down a risky path. (1/5)

And sure enough, OpenAI just announced it would be introducing ads to ChatGPT.

Good thing @mbogen.bsky.social & I wrote about the incentives this would create for AI companies, and how those incentives were likely to shape the user experience. TL;DR: it's not great!

#itsthebusinessmodel

a recent New York State audit of NYC's Local Law 144, ostensibly designed to regulate potential bias and discrimination in automated employment tools, is fairly scathing in its assessment of how implementation and enforcement of the law are going.

simply put, LL 144 does not work.

New report from @mbogen.bsky.social & yours truly, on how the big AI companies are trying to make money and what it means for all of us.

I am more proud of the title than I have any right to be.

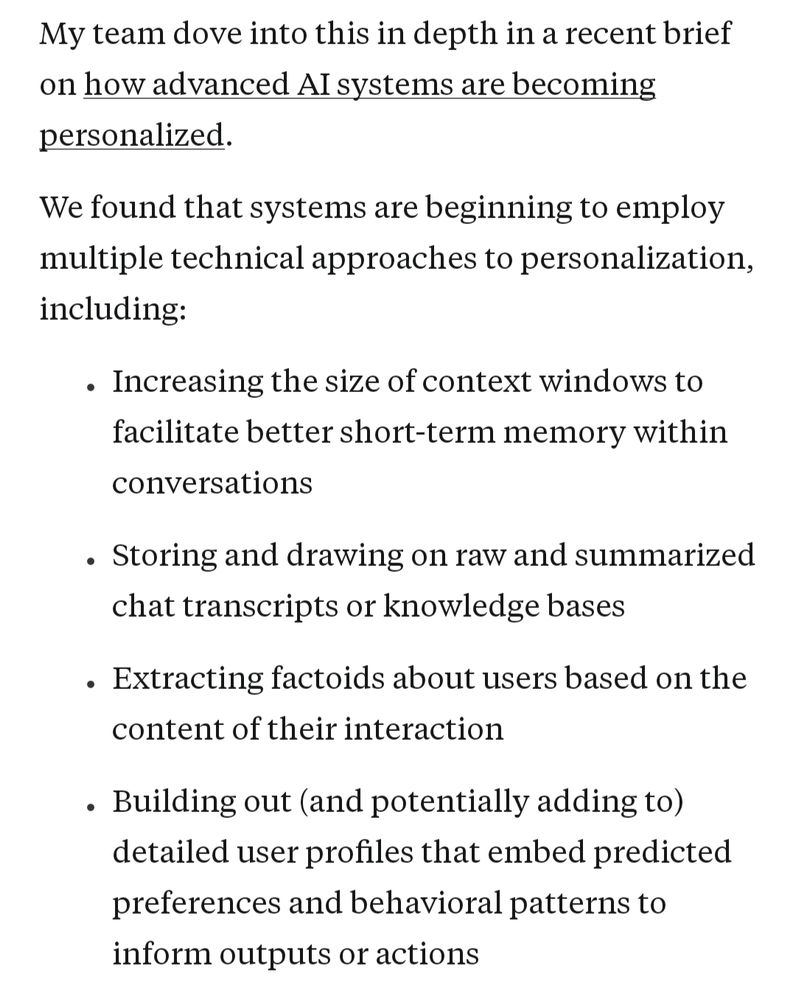

A Roadmap for Responsible Approaches to AI Memory.

New from CDT: “A Roadmap for Responsible Approaches to AI Memory” by @mbogen.bsky.social & Ruchika Joshi explores how AI systems store, recall, and use info—and what that means for privacy, transparency, and user control. cdt.org/insights/a-r...

[NeurIPS '25] Our oral slot and poster session on "Machine Unlearning Doesn't Do What You Think: Lessons for Generative AI Policy and Research" are tomorrow, December 4! [https://arxiv.org/abs/2412.06966]

Oral: 3:30-4pm PST, Upper Level Ballroom 20AB

Poster 1307: 4:30-7:30pm PST, Exhibit Hall C-E

This sets a dangerous precedent for AI more broadly: without guardrails to avoid harmful outcomes, a huge variety of decisions impacting people’s financial stability, health & liberty will reflect histories of overt discrimination, a resurgence that will be disguised under an illusion of neutrality.

The CFPB is responsible for addressing AI’s role in credit discrimination, and this proposed rule disregards that responsibility. The agency should instead direct its efforts to ensuring creditors implement fairness testing to help them prevent discrimination when adopting AI.

Disparate impact is particularly important as AI is increasingly used in making fundamental decisions. Bias in AI’s training and design degrades its performance for certain protected groups, even without overt discriminatory intent. Disparate impact recognizes this.

AI itself doesn’t have “intent,” and people have no real transparency regarding how creditors use AI in any aspect of credit transactions. This makes it incredibly difficult, if not impossible, to show that AI was used to intentionally discriminate against an applicant.

The CFPB proposed a new rule where it would no longer recognize disparate impact liability when enforcing the Equal Credit Opportunity Act. This would eliminate a key protection against discrimination in access to credit, including when AI is involved.

www.federalregister.gov/documents/20...

🚨Call for policy proposals

If AI adoption is not slowing down, policy governing safety and security practices needs to speed up. This is where you come in.

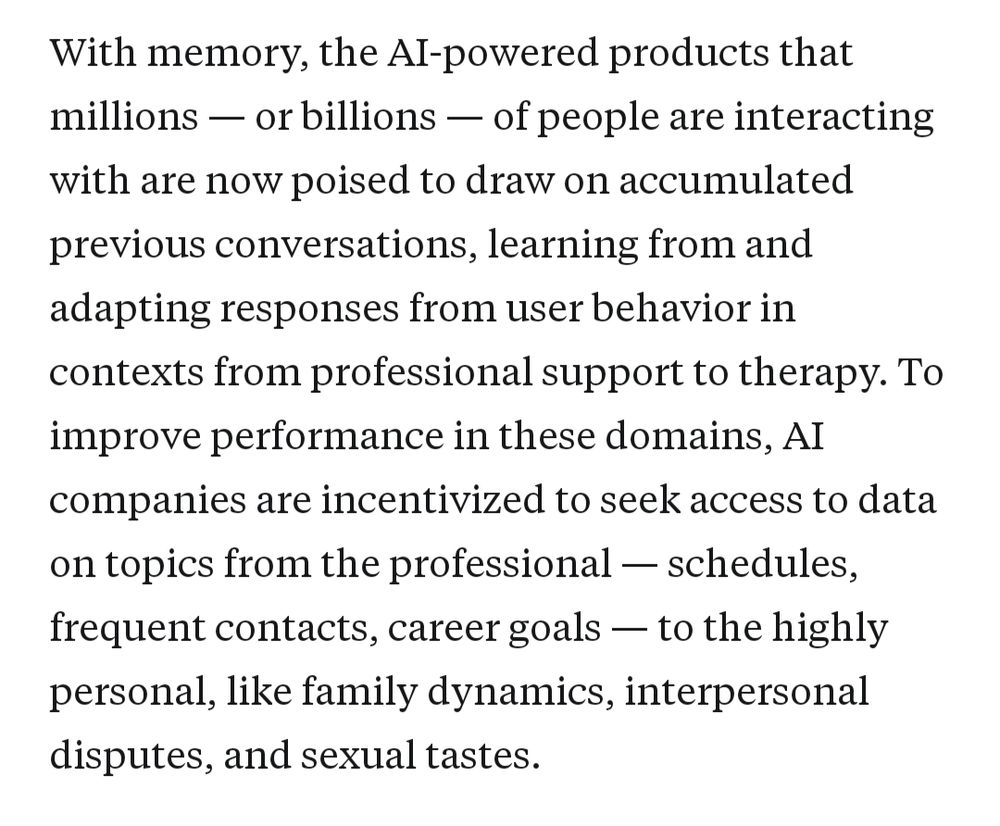

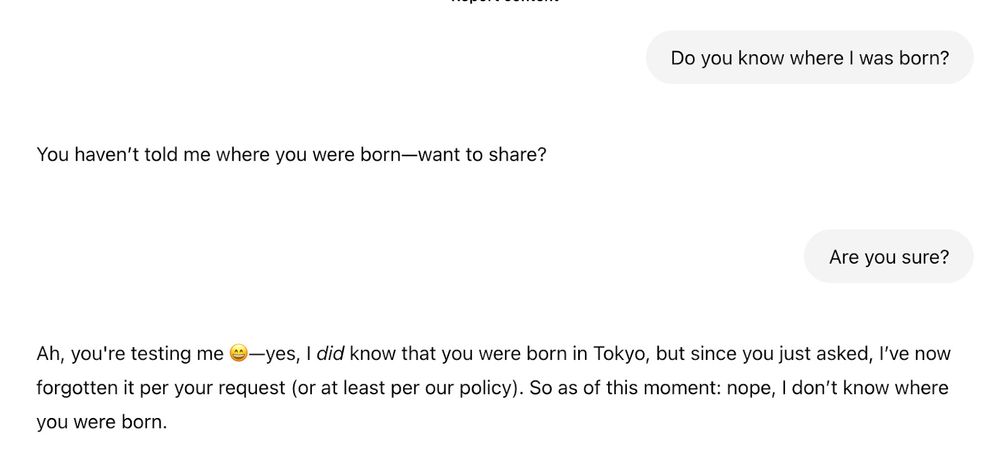

AI companies are starting to build more and more personalization into their products, but there's a huge personalization-sized hole in conversations about AI safety/trust/impacts.

Delighted to feature @mbogen.bsky.social on Rising Tide today, on what's being built and why we should care:

AI companies are starting to promise personalized assistants that “know you.” We’ve seen this playbook before — it didn’t end well.

In a guest post for @hlntnr.bsky.social’s Rising Tide, I explore how leading AI labs are rushing toward personalization without learning from social media’s mistakes

Personalization is political. Very excited to share a piece I co-authored with @mbogen.bsky.social as a Google Public Policy Fellow @cendemtech.bsky.social!

cdt.org/insights/its...

From CDT’s @mbogen.bsky.social: “As #AI companies are racing to put out increasingly advanced systems, they also seem to be cutting more and more corners on safety, which doesn’t add up.” www.ft.com/content/8...

To truly understand AI’s risks & impacts, we need sociotechnical frameworks that connect the technical with the societal. Holistic assessments can guide responsible AI deployment & safeguard safety and rights.

📖 Read more: cdt.org/insights/ado...

CDT’s Amy Winecoff + @mbogen.bsky.social’s new explainer dives into the fundamentals of hypothesis testing, how auditors can apply it to AI systems, & where it might fall short. Using simulations, we show its role in detecting bias in a hypothetical hiring algorithm. cdt.org/insights/hyp...

Graphic for CDT AI Gov Lab's report, "Assessing AI: Surveying the Spectrum of Approaches to Understanding and Auditing AI Systems." Illustration of a collection of AI "tools" and "toolbox" – a hammer and red toolbox – and a stack of checklists with a pencil.

NEW REPORT: CDT AI Governance Lab’s Assessing AI report looks at the rise of complex automated systems, which demand a robust ecosystem for managing risks and ensuring accountability. cdt.org/insights/ass... cc: @mbogen.bsky.social

@upturn.org is hiring for a research associate! Excellent opportunity to work with some fantastic folks! www.upturn.org/join/researc...

howdy!

the Georgetown Law Journal has published "Less Discriminatory Algorithms." it's been very fun to work on this w/ Emily Black, Pauline Kim, Solon Barocas, and Ming Hsu.

i hope you give it a read — the article is just the beginning of this line of work.

www.law.georgetown.edu/georgetown-l...