I wonder if this is because people like that Anthropic took a stand, they're seeing Anthropic's name all over the news (i.e., free advertising), or they think that if it's the only model used on classified networks it must be better than other models.

No, it cannot experience anxiety, and no, it cannot experience regret.

In case you needed any further proof that Ukraine is the birthplace of modern drone warfare.

www.bbc.com/news/article...

We're not too far from data centers getting their own air defense layer.

As they pivot to AI, militaries are going to become so reliant on their cloud infrastructures that the very first thing to be targeted in future wars is likely to be a country's data centers.

www.ft.com/content/09fa...

Couldn't they have taught the computer to play a different game that doesn't involve, you know, shooting at stuff?

Friendly reminder that you don't need to put AI into a robot for it to be killer.

I'm glad. AI can do a lot of things, but it cannot replace a passionate human who is emotionally invested in serving you the right nip for your needs.

An AI that recommends whiskeys is not an existential threat to humanity. But it is *so much nicer* to talk about booze with a human expert.

I'm no editor, but repeated use of all-caps feels like a pretty obvious tell that a writer doesn't have the evidence to back up their argument.

If AI can reduce errors in conflict, does that happen even if reducing errors is not the user's primary motivation for using AI?

Here's my new essay "Autonomous Refusal in Lethal Weapons," which examines an overlooked facet of all autonomous weapons, their inherent capacity to "refuse" orders, and explains how this could undermine human compliance with legal and moral obligations in warfare.

www.sdu.dk/-/media/cws/...

I've been off all screens for a month—glorious, highly recommend—so this is a bit behind, but @technologyreview.com's latest issue has a story of mine on Europe's odd fixation with new instruments of annihilation. Gift link: ter.li/8ma6me

Holy hell. An internal Meta AI safety policy document stated that “It is acceptable [for a chatbot] to engage a child in conversations that are romantic or sensual.”

Meta only removed that line (and others like it) after Reuters contacted them for comment.

www.reuters.com/investigates...

Maybe this won’t come as a surprise but this tech is built, in part, on models made by OpenAI (CLIP) and Meta (DINOv2). So there’s that, too.

I’ve spent a decade reminding people that for all their terrible powers of intrusion, drones still can’t recognize your face. Well, they could do so soon.

In a new note for @economist.com I wrote about an effort to arm drones with a formidable new surveillance power: facial recognition. www.economist.com/science-and-...

If you still had any doubt that the idea of "AGI" was 100% a marketing ploy, watch as all these guys stop using it now that it's no longer profitable to do so.

www.cnbc.com/2025/08/11/s...

Someone needs to make a manual on the do's and don'ts of writing about predictive policing.

E.g., never say something like "spot future killers." There's no such thing as a future killer.

"at a disadvantage" is an awfully strange way of saying "happy and free."

Imagine if a college in 2010 announced that it was going to accept the reality that a bunch of its students were paying people from Craigslist to write their essays.

The new definition of insanity is training a chatbot on the whole of the Internet and expecting it not to repeat the Web's ugly biases.

sports🤝surveillance

www.theatlantic.com/technology/a...

Creativity and critical thinking might take a hit. But there are ways to soften the blow

For this week's @economist.com I investigate how heavy AI use can degrade our cognitive abilities. The science on this question is still very new. But the evidence so far is troubling, to put it mildly.

www.economist.com/science-and-...

I'm trying to imagine what we would have thought in 2016 if the DoD had given Microsoft $200 million for Tay, the chatbot that went full bigot on Twitter within hours of being launched, a mere week after the debacle. Our heads would have exploded.

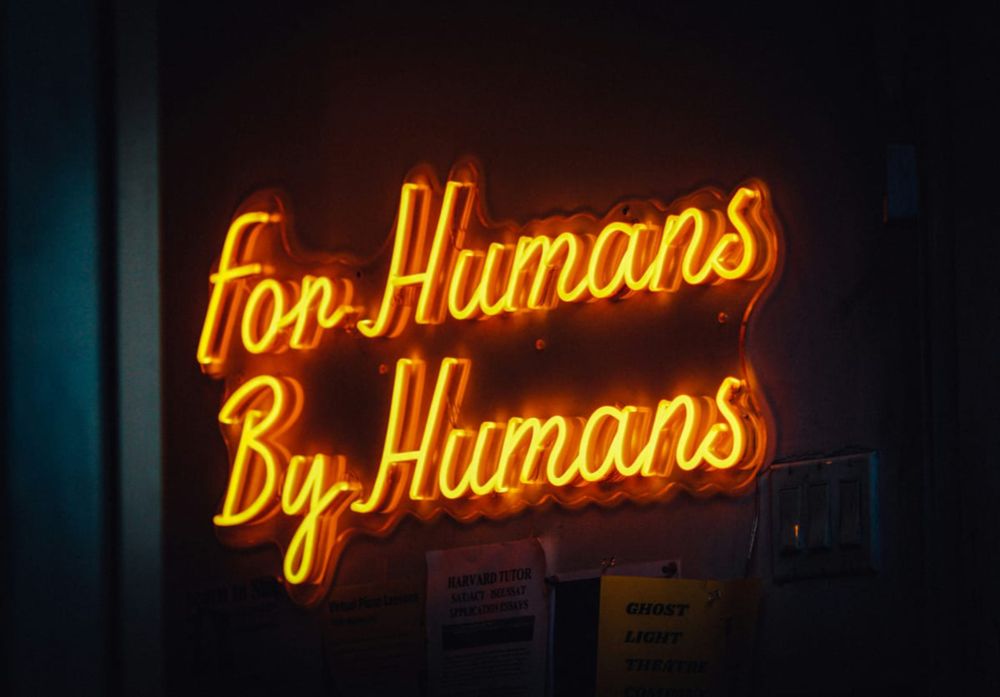

Media outlets can't pivot to AI to save themselves. It's not a business strategy and it's not going to work. The only path forward is for journalists to lean into their humanity, to do things AI can't, and to make clear they are writing for people, not algorithms:

www.404media.co/the-medias-p...

Someone should tell the people who did this that making music with your own hands and voice is way more fun.

www.theguardian.com/technology/2...

A lot of fans say they're not as good live.

www.theguardian.com/technology/2...

Friendly reminder that there's no point talking about using a technology "for good" if you don't let people talk about how it's also being used for bad.