LSE announces new centre to study animal sentience

The Jeremy Coller Centre for Animal Sentience at LSE will develop new approaches to studying the feelings of other animals scientifically.

An emotional day - I can announce I'll be the first director of The Jeremy Coller Centre for Animal Sentience at the LSE, supported by a £4m grant from the Jeremy Coller Foundation. Our mission: to develop better policies, laws and ways of caring for animals. (1/2)

www.lse.ac.uk/News/Latest-...

25.03.2025 10:42

👍 721

🔁 133

💬 51

📌 12

Post-Doctoral Associate/Research Scientist, New York University

- PhilJobs:JFP

An international database of jobs for philosophers

Two or more 2-year Postdoc / Research Scientist positions at NYU to work on issues tied to artificial consciousness. Strong research track record with expertise in AI expected. No teaching. Salary around $62K. Details and application materials are here: philjobs.org/job/show/28878

16.03.2025 13:02

👍 8

🔁 2

💬 1

📌 0

Events

Spring 2025

The NYU Wild Animal Welfare Program is thrilled to be hosting an online panel with Heather Browning and Oscar Horta on March 19 at 12pm ET! This event will settle once and for all the question of whether wild animal welfare is net positive or negative. RSVP below :)

sites.google.com/nyu.edu/wild...

04.03.2025 14:40

👍 22

🔁 6

💬 0

📌 2

Should we care more about shrimp?

www.slowboring.com/p/mailbag-mo...

28.02.2025 12:48

👍 73

🔁 6

💬 9

📌 1

In philosophy at least, this is uncommon but the editor would be fully within their rights. The reviewers only make recommendations; the editors are supposed to use their independent judgement when appropriate.

20.02.2025 13:33

👍 1

🔁 0

💬 0

📌 0

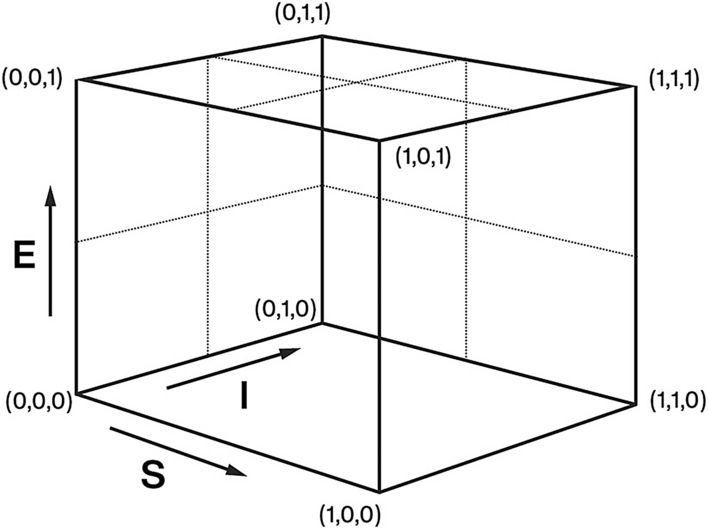

LLMs and World Models, Part 1

How do Large Language Models Make Sense of Their “Worlds”?

Do large language models develop "emergent" models of the world? My latest Substack posts explore this claim and more generally the nature of "world models":

LLMs and World Models, Part 1: aiguide.substack.com/p/llms-and-w...

LLMs and World Models, Part 2: aiguide.substack.com/p/llms-and-w...

13.02.2025 22:30

👍 212

🔁 58

💬 14

📌 10

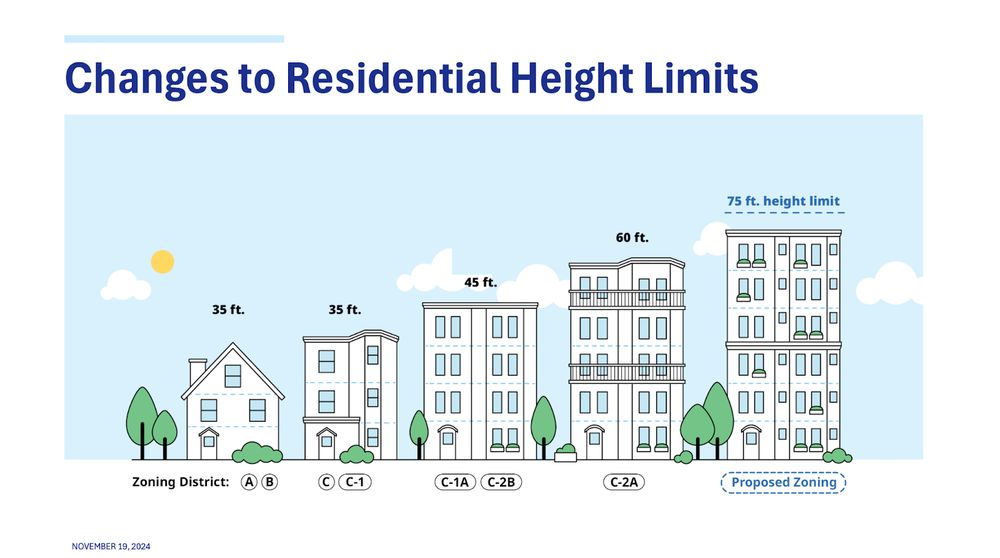

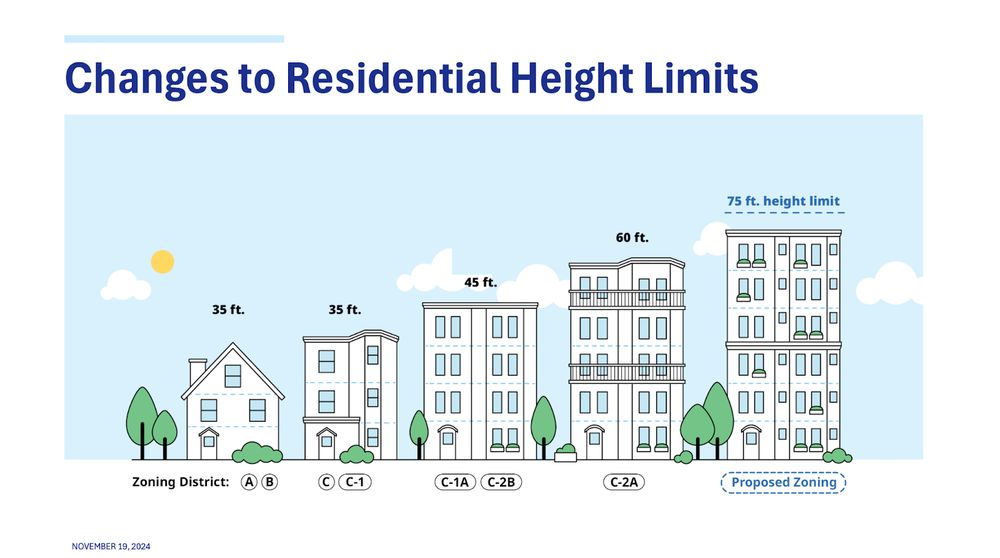

I can’t believe it - after years of advocacy, exclusionary zoning has ended in Cambridge.

We just passed the single most comprehensive rezoning in the US—legalizing multifamily housing up to 6 stories citywide in a Paris style

Here are the details 🧵

11.02.2025 01:46

👍 2451

🔁 487

💬 66

📌 234

This is one reason why I think manuscripts should contain a robustness checks section. This would make it normal for researchers to conduct additional analyses, and for reviewers to request additional analyses, that ask: if key analyses are done this other reasonable way, are the results different?

07.02.2025 14:24

👍 13

🔁 4

💬 1

📌 0

Evaluating Artificial Consciousness 2025 - Sciencesconf.org

Call for abstracts: Workshop “Evaluating Artificial Consciousness”:

eac-2025.sciencesconf.org

10-11 June 2025 at RUB Bochum

#PhilMind #consciousness #consci #sentience #Ethics #CogSci

17.01.2025 14:18

👍 17

🔁 8

💬 1

📌 2

Cherish every day this thing isn't spreading from human to human.

27.12.2024 16:48

👍 10

🔁 2

💬 0

📌 0

Title card: Alignment Faking in Large Language Models by Greenblatt et al.

New work from my team at Anthropic in collaboration with Redwood Research. I think this is plausibly the most important AGI safety result of the year. Cross-posting the thread below:

18.12.2024 17:46

👍 126

🔁 29

💬 5

📌 11

New in print: "Let's Hope We're Not Living in a Simulation":

In Reality+, David Chalmers suggests that it wouldn't be too bad if we lived in a computer simulation. I argue on the contrary that if we live in a simulation, we ought to attach a significant conditional credence to 1/3

17.12.2024 19:21

👍 35

🔁 6

💬 1

📌 0