Thanks to my amazing coauthors

@markar.bsky.social, Destiny Akinode, @kthai1618.bsky.social, Bradley Emi, Max Spero, and @miyyer.bsky.social, and to the UMD CLIP Lab and Pangram Labs for their support

We will be continuously monitoring American news to keep up with how AI use changes over time. Follow along at 🌐 ainewsaudit.github.io

We’re releasing:

🌐 Browse articles: ainewsaudit.github.io

📂 Datasets (recent_news, opinions, ai_reporters): github.com/jenna-russe...

📄 Paper: arxiv.org/abs/2510.18774

AI has been creeping into the news all of us read, often without any disclosure. We call for clearly defined standards for U.S. newsrooms:

1️⃣ Clearly define what counts as acceptable use of AI and publish these standards openly

2️⃣ Require AI-use attestations for all writers

Many AI-written stories still contain authentic quotes. We hypothesize that people often use AI for editing or expanding on their human-written work. But with no disclosure, there's no way to tell for sure.

We also track how AI adoption has evolved over time:

Among 10 veteran reporters we followed longitudinally, AI use rose from 0% pre-ChatGPT (2022) to >40% in 2025.

AI is disproportionately affecting news written in languages other than English. About 8% of English-language news is AI-generated, compared to 33% of news in other languages (primarily Spanish). Without disclosure, we cannot be sure whether AI is translating stories or writing them.

In NYT, WaPo & WSJ, opinion sections show 6.4× higher AI use than other sections, rising ~25× since 2022 (from ~0% → ~4%).

AI use is concentrated among prominent guest authors: politicians, CEOs, and scientists.

Despite widespread use, transparency is basically nonexistent.

Out of 100 AI-flagged articles we manually annotated, only 5 disclosed that AI was used, and over 90% of outlets have no public AI policy.

AI use isn’t evenly distributed:

🗞️ Far higher in small local papers than national outlets

🌎 Especially common in Mid-Atlantic & Southern states

🏢 Largely driven by ownership groups (e.g. Boone Newsmedia & Advance Publications)

🧭 Most concentrated in weather, tech, and health

We detect AI using Pangram, a model with a reported false positive rate of 0.001% on news text. We find that 5.2% of recent news is completely AI-generated, with another 3.9% partially AI-generated. www.pangram.com/

AI is already at work in American newsrooms.

We examine 186k articles published this summer and find that ~9% are either fully or partially AI-generated, usually without readers having any idea.

Here's what we learned about how AI is influencing local and national journalism:
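For a rough sense of scale, here is a back-of-the-envelope sketch that turns the percentages quoted in this thread into article counts. It assumes the rounded "186k" figure is exactly 186,000 and, as a worst case, that every article is human-written when estimating false positives. The function and variable names are made up for illustration and are not from the paper's code.

```python
# Back-of-the-envelope arithmetic using only figures quoted in the thread.
# Assumptions (not from the paper's code): 186k is treated as exactly
# 186,000, and the reported false positive rate applies uniformly.

TOTAL_ARTICLES = 186_000       # "186k articles" (rounded in the post)
FULLY_AI_RATE = 0.052          # 5.2% completely AI-generated
PARTIALLY_AI_RATE = 0.039      # 3.9% partially AI-generated
FALSE_POSITIVE_RATE = 0.00001  # reported 0.001% on news text


def estimate_counts(total, fully_rate, partial_rate, fpr):
    """Convert the quoted rates into approximate article counts."""
    fully = round(total * fully_rate)
    partial = round(total * partial_rate)
    flagged = fully + partial
    return {
        "fully_ai": fully,
        "partially_ai": partial,
        "flagged": flagged,
        "flagged_share": flagged / total,
        # Worst case: treat all articles as human-written, so every
        # one of them is a chance for a false positive.
        "expected_false_positives": total * fpr,
    }


counts = estimate_counts(
    TOTAL_ARTICLES, FULLY_AI_RATE, PARTIALLY_AI_RATE, FALSE_POSITIVE_RATE
)
for name, value in counts.items():
    print(f"{name}: {value}")
```

Under these assumptions, roughly 16.9k articles are flagged (about 9.1%), while fewer than 2 false positives would be expected across the whole sample, so detector error alone would not account for the headline number.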

🤔 What if you gave an LLM thousands of random human-written paragraphs and told it to write something new -- while copying 90% of its output from those texts?

🧟 You get what we call a Frankentext!

💡 Frankentexts are surprisingly coherent and tough for AI detectors to flag.

International students will stop coming to American universities if their visas are going to be at risk. This will make our intellectual community poorer and also make tuition more expensive for domestic students.

There is a quasi-religion in Silicon Valley that views AI as godlike. This faith has always been parallel to Evangelical Christianity: salvation (transhumanism), the rapture (the technological singularity), and demons (Roko's Basilisk)

Lately the AI faith has fully fused with Christian Nationalism.

Introducing 🐻 BEARCUBS 🐻, a “small but mighty” dataset of 111 QA pairs designed to assess computer-using web agents in multimodal interactions on the live web!

✅ Humans achieve 85% accuracy

❌ OpenAI Operator: 24%

❌ Anthropic Computer Use: 14%

❌ Convergence AI Proxy: 13%

Is the needle-in-a-haystack test still meaningful given the giant green heatmaps in modern LLM papers?

We create ONERULER 💍, a multilingual long-context benchmark that allows for nonexistent needles. Turns out NIAH isn't so easy after all!

Our analysis across 26 languages 🧵👇

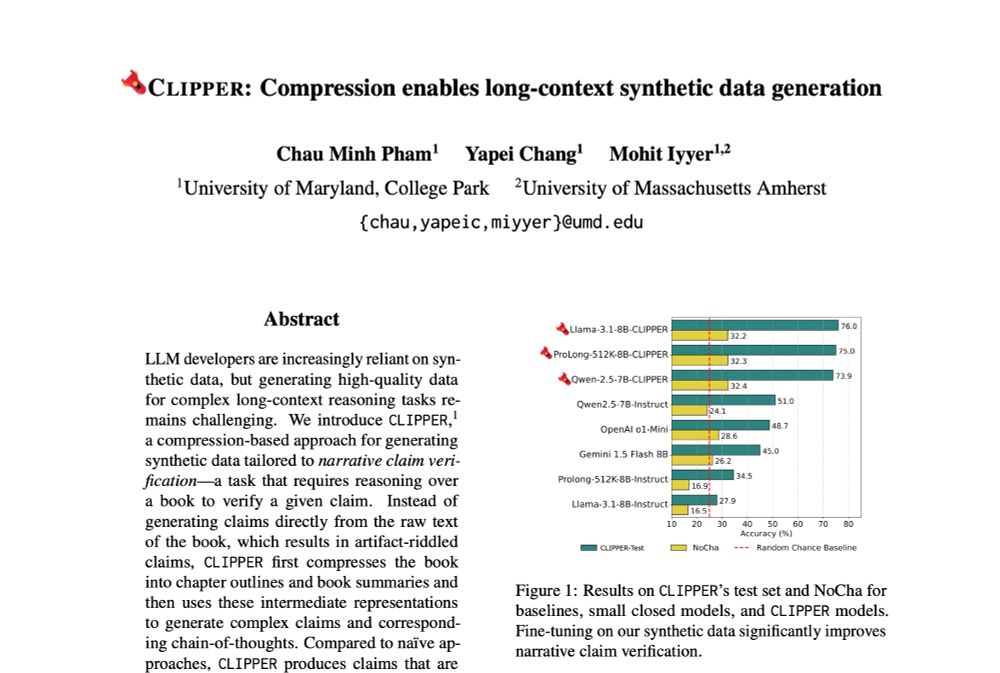

⚠️Current methods for generating instruction-following data fall short for long-range reasoning tasks like narrative claim verification.

We present CLIPPER ✂️, a compression-based pipeline that produces grounded instructions for ~$0.5 each, 34x cheaper than human annotations.

Also, the non-experts vary in how much they use LLMs. Having a writing background is key, which is a fact many are missing.

Hi Shane. We originally used 5 people, only 1 of whom could detect AI-generated text. I then searched out people who I thought could be experts and they had to pass multiple rounds of testing to be included in the study. Details in appendix. Nonexpert performance is already widely known.

This is a great question - we didn’t dive deeper than choosing articles from American publications. There were a few cases where experts noted awkward phrasing and thought the author could be a non-native speaker, but still knew it was a human!

It would be very interesting to see if every language had its own set of “AI vocab” words 🤣

I think what matters most is users who do writing tasks like editing/publishing! It’s the mix of having great language skills and frequent usage. A lot of ppl who just use LLMs a lot are way worse detectors than they think they’ll be.

Link found in last post of thread 😀 (but putting it here again) arxiv.org/abs/2501.15654

📎 Paper: arxiv.org/abs/2501.15654

👩‍💻 Code & Data: github.com/jenna-russe...

Thanks to my amazing coauthors @markar.bsky.social and @miyyer.bsky.social and the support of UMass NLP

We're releasing our dataset of articles and expert annotations! 📂✨

We hope this helps users of automatic detectors understand not just if a text is AI-generated, but why. 🤖📖

Can LLMs mimic human expert detectors? 🤔

We prompted LLMs to imitate our expert annotators. The results show promise, outperforming detectors like Binoculars and RADAR. 🚀 However, LLMs still fall short of matching our human experts and advanced detectors like Pangram. ⚖️👥

What they get wrong: ❌

Sometimes, humans get tripped up by:

📚 Common "AI vocab" words in human-written texts

✍️ Grammar mistakes they assume "AI wouldn’t make"

🌀🗣️ One expert was often fooled by o1's use of informal language - like slang, contractions, and colloquialisms.

What experts get right: ✅

They spot telltale signs of AI, like:

📚 "AI Vocab" (delve, crucial, vibrant ...)

🔄 Predictable sentence structure

🗨️ Quotes that feel too polished

For human-written content, they look for:

🎨 Creativity

🎭 Stylistic quirks

🌊 A natural & clear flow

Across GPT-4o, Claude, and o1 articles, experts correctly identified 99.3% of AI-generated content without misclassifying any human-written articles. 🕵️‍♀️

Among automatic detectors, Pangram significantly outperformed the rest, missing only a few more texts than the experts. 🔍⚡