CDT's Non-Resident Fellows.

We’re thrilled to welcome CDT’s 2026–2027 class of Non-Resident Fellows — a remarkable group of scholars and experts whose insights will strengthen our work defending civil rights, civil liberties, and democratic values in the digital age. cdt.org/about/fellow...

06.02.2026 18:26

👍 8

🔁 2

💬 1

📌 1

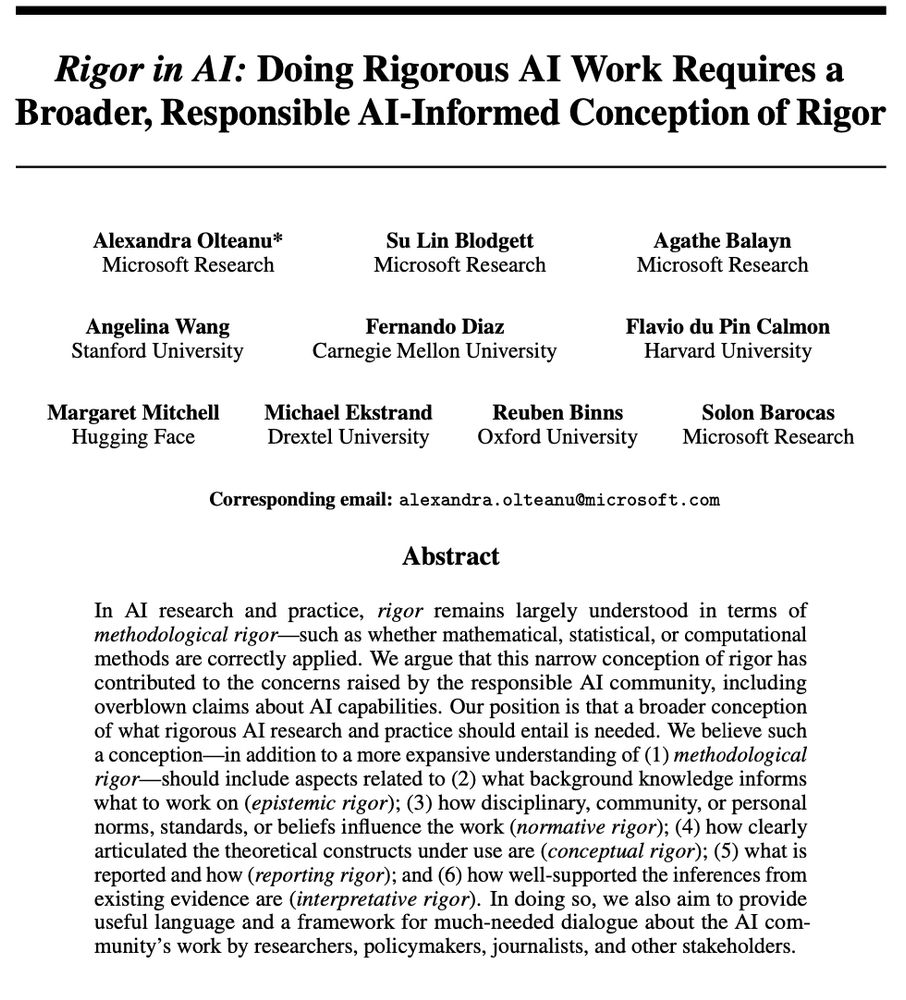

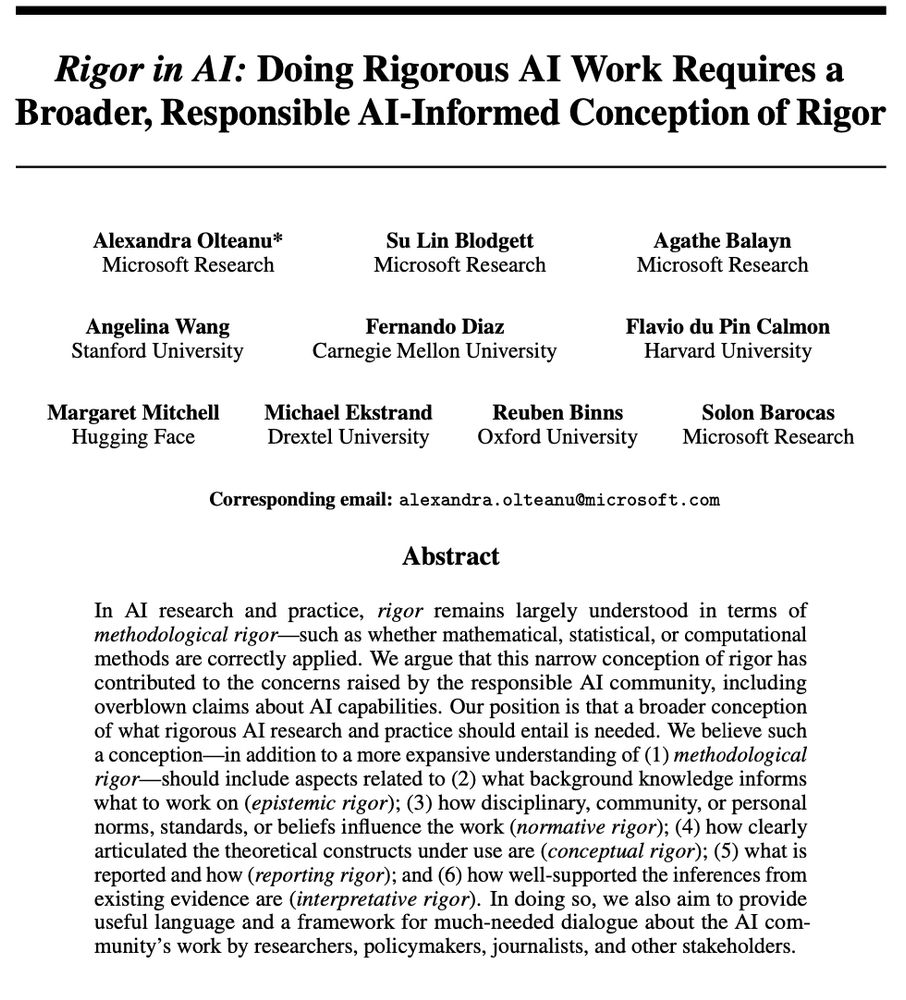

Title + abstract of the preprint

Excited to share a new preprint with @nkgarg.bsky.social, presenting usage statistics and observational findings from Paper Skygest in the first six months of deployment! 🎉📜

arxiv.org/abs/2601.04253

14.01.2026 19:48

👍 147

🔁 45

💬 4

📌 4

This is work with Daniel E. Ho and @sanmikoyejo.bsky.social. We also have a related preprint with Erin Beeghly on the social impacts of this personalization, and how it interacts with group-based preferences and stereotypes: angelina-wang.github.io/files/person...

12.12.2025 20:42

👍 3

🔁 1

💬 0

📌 0

This has huge implications for evaluation:

• Benchmark scores ≠ what end users actually experience

• Some high-risk behaviors, e.g., manipulative patterns, might only surface in personalized interfaces

We argue for more realistic evals that take personalization into account.

12.12.2025 20:42

👍 2

🔁 1

💬 1

📌 0

In fact, even the same MMLU science question can yield different ChatGPT answers for different users, despite using the exact same underlying model. User-level personalization and interaction patterns shape outputs in ways existing evals do not capture.

12.12.2025 20:42

👍 1

🔁 1

💬 1

📌 0

Most LLM evals use API calls or offline inference, testing models in a memory-less silo. Our new Patterns paper shows this misses how LLMs actually behave in real user interfaces, where personalization and interaction history shape responses: arxiv.org/abs/2509.19364

12.12.2025 20:42

👍 38

🔁 11

💬 1

📌 1

Started a thread in the other place and bringing it over here - I really think we should be more vocal about the opportunities that lie at the intersection of these two options!

So I'm starting a live thread of new roles as I become aware of them - feel free to add / extend / share:

29.10.2025 02:25

👍 107

🔁 31

💬 6

📌 3

One of the postdoc programs is called Empire AI, similarly named but entirely unrelated to the excellent book Empire of AI by @karenhao.bsky.social that everyone should read

28.10.2025 18:19

👍 2

🔁 0

💬 1

📌 0

Cornell University, Empire AI Fellows Program

Job #AJO30971, Postdoctoral Fellow, Empire AI Fellows Program, Cornell University, New York, New York, US

Cornell (NYC and Ithaca) is recruiting AI postdocs; apply by Nov 20, 2025! If you're interested in working with me on technical approaches to responsible AI (e.g., personalization, fairness), please email me.

academicjobsonline.org/ajo/jobs/30971

28.10.2025 18:19

👍 32

🔁 20

💬 1

📌 2

AI Surrogates and illusions of generalizability in cognitive science

Recent advances in artificial intelligence (AI) have generated enthusiasm for using AI simulations of human research participants to generate new know…

Can AI simulations of human research participants advance cognitive science? In @cp-trendscognsci.bsky.social, @lmesseri.bsky.social & I analyze this vision. We show how “AI Surrogates” entrench practices that limit the generalizability of cognitive science while aspiring to do the opposite. 1/

21.10.2025 20:24

👍 289

🔁 119

💬 9

📌 27

Grateful to win Best Paper at ACL for our work on Fairness through Difference Awareness with my amazing collaborators!! Check out the paper for why we think fairness has both gone too far, and at the same time, not far enough aclanthology.org/2025.acl-lon...

30.07.2025 15:34

👍 28

🔁 4

💬 0

📌 0

Angelina Wang presents the benchmark with Jewish synagogue hiring as an example.

@angelinawang.bsky.social presents the "Fairness through Difference Awareness" benchmark. Fairness tests require no discrimination...

but the law supports many forms of discrimination! E.g., synagogues should hire Jewish rabbis. LLMs often get this wrong aclanthology.org/2025.acl-lon... #ACL2025NLP

30.07.2025 07:26

👍 5

🔁 1

💬 1

📌 0

Was beyond disappointed to see this in the AI Action Plan. Messing with the NIST RMF (which many private & public institutions currently rely on) feels like a cheap shot

24.07.2025 14:25

👍 11

🔁 5

💬 2

📌 1

Print screen of the first page of a paper pre-print titled "Rigor in AI: Doing Rigorous AI Work Requires a Broader, Responsible AI-Informed Conception of Rigor" by Olteanu et al. Paper abstract: "In AI research and practice, rigor remains largely understood in terms of methodological rigor -- such as whether mathematical, statistical, or computational methods are correctly applied. We argue that this narrow conception of rigor has contributed to the concerns raised by the responsible AI community, including overblown claims about AI capabilities. Our position is that a broader conception of what rigorous AI research and practice should entail is needed. We believe such a conception -- in addition to a more expansive understanding of (1) methodological rigor -- should include aspects related to (2) what background knowledge informs what to work on (epistemic rigor); (3) how disciplinary, community, or personal norms, standards, or beliefs influence the work (normative rigor); (4) how clearly articulated the theoretical constructs under use are (conceptual rigor); (5) what is reported and how (reporting rigor); and (6) how well-supported the inferences from existing evidence are (interpretative rigor). In doing so, we also aim to provide useful language and a framework for much-needed dialogue about the AI community's work by researchers, policymakers, journalists, and other stakeholders."

We have to talk about rigor in AI work and what it should entail. The reality is that impoverished notions of rigor do not only lead to some one-off undesirable outcomes but can have a deeply formative impact on the scientific integrity and quality of both AI research and practice 1/

18.06.2025 11:48

👍 63

🔁 18

💬 2

📌 3

Alright, people, let's be honest: GenAI systems are everywhere, and figuring out whether they're any good is a total mess. Should we use them? Where? How? Do they need a total overhaul?

(1/6)

15.06.2025 00:20

👍 33

🔁 11

💬 1

📌 0

I’ll be at both FAccT in Athens and ACL in Vienna this summer presenting these works, come say hi :)

02.06.2025 16:38

👍 5

🔁 0

💬 0

📌 0

Screenshot of paper title and author: "Identities are not Interchangeable: The Problem of Overgeneralization in Fair Machine Learning" by Angelina Wang

2. 𝗿𝗮𝗰𝗶𝘀𝗺 ≠ 𝘀𝗲𝘅𝗶𝘀𝗺 ≠ 𝗮𝗯𝗹𝗲𝗶𝘀𝗺 ≠ … At FAccT 2025: Different oppressions manifest differently, and that matters for AI. Ex: neighborhoods segregate by race, but rarely by sex, shaping the harms we should target. arxiv.org/abs/2505.04038

02.06.2025 16:38

👍 4

🔁 0

💬 1

📌 0

Instead, we should permit differentiating based on the context. Ex: synagogues in America are legally allowed to discriminate by religion when hiring rabbis. Work with Michelle Phan, Daniel E. Ho, @sanmikoyejo.bsky.social arxiv.org/abs/2502.01926

02.06.2025 16:38

👍 1

🔁 1

💬 1

📌 0

Screenshot of paper title and author list: "Fairness through Difference Awareness: Measuring Desired Group Discrimination in LLMs" by Angelina Wang, Michelle Phan, Daniel E. Ho, Sanmi Koyejo

1. 𝗳𝗮𝗶𝗿𝗻𝗲𝘀𝘀 ≠ 𝘁𝗿𝗲𝗮𝘁𝗶𝗻𝗴 𝗴𝗿𝗼𝘂𝗽𝘀 𝘁𝗵𝗲 𝘀𝗮𝗺𝗲. At ACL 2025 Main: We diagnose issues like Google Gemini’s racially diverse Nazis as a result of equating fairness with racial color-blindness, which erases important group differences.

02.06.2025 16:38

👍 3

🔁 0

💬 1

📌 0

In the pursuit of convenient definitions and equations of fairness that scale, we have abstracted away too much social context, enforcing equality between any and all groups. In two new works, we push back against two pervasive and pernicious assumptions:

02.06.2025 16:38

👍 1

🔁 0

💬 1

📌 0

Have you ever felt that AI fairness was too strict, enforcing fairness when it didn’t seem necessary? How about too narrow, missing a wide range of important harms? We argue that the way to address both of these critiques is to discriminate more 🧵

02.06.2025 16:38

👍 15

🔁 1

💬 1

📌 0

My ‘woke DEI’ grant has been flagged for scrutiny. Where do I go from here?

My work in making artificial intelligence fair has been noticed by US officials intent on ending ‘class warfare propaganda’.

The US government recently flagged my scientific grant in its "woke DEI database". Many people have asked me what I will do.

My answer today in Nature.

We will not be cowed. We will keep using AI to build a fairer, healthier world.

www.nature.com/articles/d41...

25.04.2025 17:19

👍 40

🔁 13

💬 1

📌 1

If you work in ML fairness, perhaps you tend to get asked similar sets of questions from ML-focused folks, such as what is the best definition or equation for fairness. For those interested, please read, and for those often asked these questions, feel free to pass on the site!

17.03.2025 14:39

👍 1

🔁 0

💬 0

📌 0

I've recently put together a "Fairness FAQ": tinyurl.com/fairness-faq. If you work in non-fairness ML and you've heard about fairness, perhaps you've wondered things like what the best definitions of fairness are, and whether we can train algorithms that optimize for it.

17.03.2025 14:39

👍 44

🔁 19

💬 3

📌 1

*Please repost* @sjgreenwood.bsky.social and I just launched a new personalized feed (*please pin*) that we hope will become a "must use" for #academicsky. The feed shows posts about papers filtered by *your* follower network. It's become my default Bluesky experience bsky.app/profile/pape...

10.03.2025 18:14

👍 522

🔁 296

💬 23

📌 83

I am excited to announce that I will join the University of Zurich as an assistant professor in August this year! I am looking for PhD students and postdocs starting from the fall.

My research interests include optimization, federated learning, machine learning, privacy, and unlearning.

06.03.2025 02:17

👍 28

🔁 5

💬 1

📌 1

Cutting $880 billion from Medicaid is going to have a lot of devastating consequences for a lot of people

26.02.2025 03:11

👍 30885

🔁 6886

💬 2007

📌 509

Yes, these are good points, and thanks for the pointer! But the trajectory does seem to be towards LLMs replacing human participants in certain cases. The presence of these companies, for instance, signals real-world use to me: www.syntheticusers.com, synthetic-humans.ai

20.02.2025 02:08

👍 2

🔁 0

💬 1

📌 0

Yes! The preprint is available here: arxiv.org/abs/2402.01908

20.02.2025 02:06

👍 2

🔁 0

💬 1

📌 0

A screenshot of an email notifying Spotsylvania parents that funding for a program for youth with disabilities has been canceled

the richest man in the world has decided that your kids don't deserve special education programs

16.02.2025 18:14

👍 10638

🔁 4677

💬 343

📌 621