Are AVs safer than non AVs?

arxiv.org/abs/2411.10109

Why is the link broken?

replicate.delivery/xezq/qyZUXNM...

They do require it.

If you use general-purpose tools like ChatGPT for this, then yes.

Tools like mine are built exactly to overcome the tyranny of the mean.

My question is: why don’t you try it and check for yourself?

It’s free to try and then you’d have your own informed opinion.

The best thing about this app is watching people talk about what LLMs can and can't do when their only experience is chatting with the free version of ChatGPT.

What a surprise. Who would have guessed?

Your rejection has been inspiring to us.

Please keep at it.

Love that that happens.

I can’t confirm any specific customers, but I hope they are.

Why, instead of shuddering, don’t you give it a try with a topic you know a lot about?

Wouldn’t that allow you to know how good they are or at least get a sense?

bsky.app/profile/emol...

We still get new customers every day.

The coolest thing about this app, in contrast to the other one, is that it managed to gather the most annoying people in a single place:

There are deep thoughts and empathic virtue signalling at every corner.

Glad we have the tech/AI bros on the other one.

bsky.app/profile/emol...

🤷‍♂️

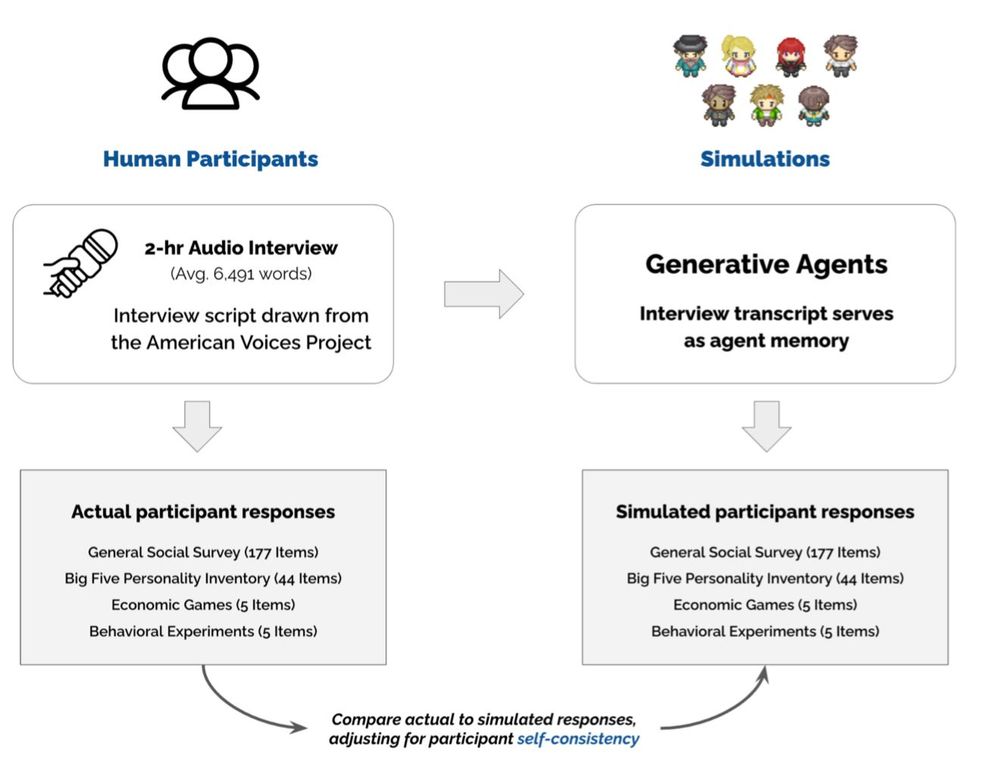

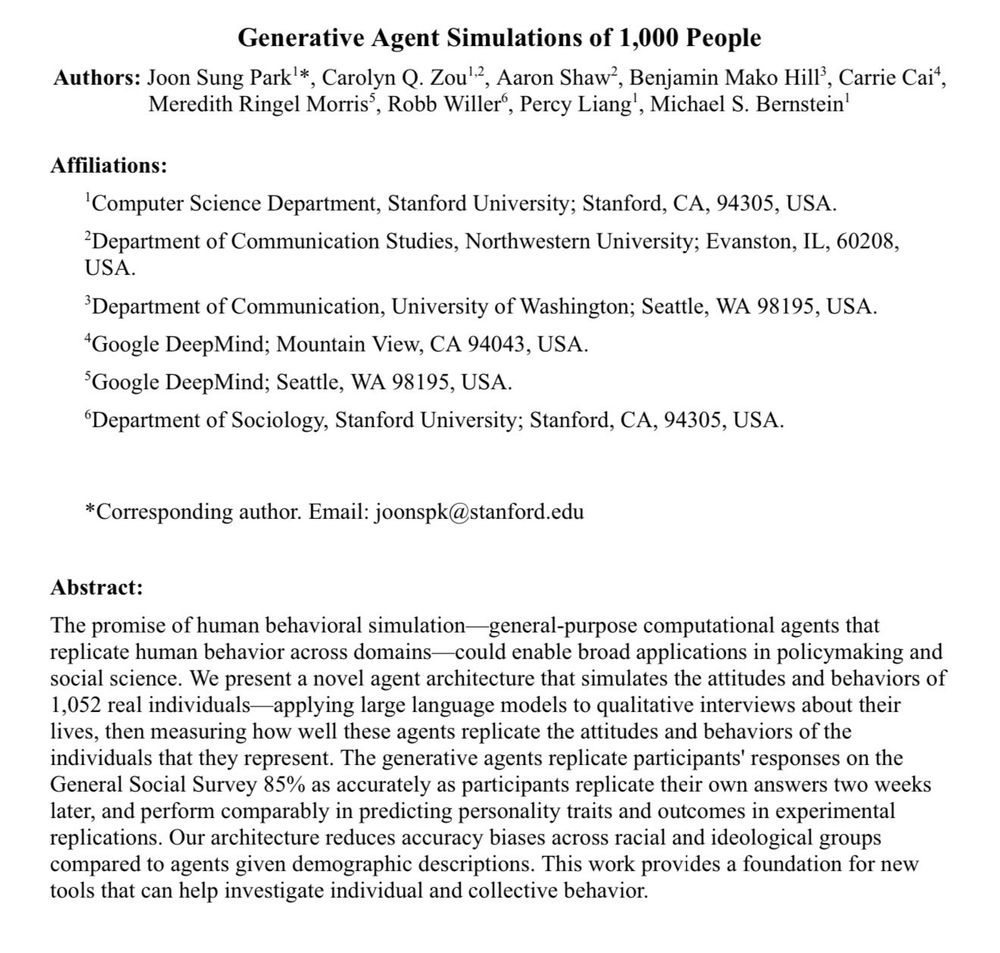

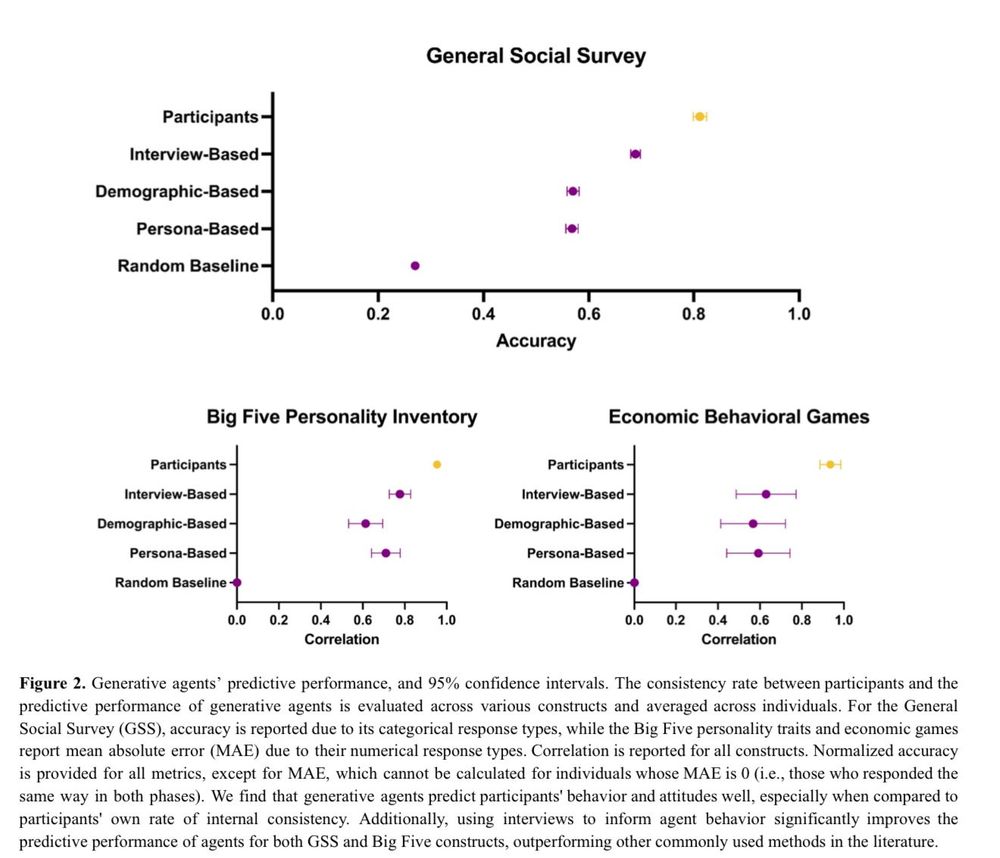

Crazy interesting paper in many ways:

1) Voice-enabled GPT-4o conducted 2-hour interviews of 1,052 people

2) GPT-4o agents were given the transcripts & prompted to simulate the people

3) The agents were given surveys & tasks. They achieved 85% accuracy in simulating the interviewees' real answers!

Because we provide value to the people who buy our service.

This is wild. User researchers (myself definitely included) scoffed at the idea of doing research with “synthetic users” a year or so ago. Might be time to start thinking differently about the idea.

Happy to do a personal demo on how to get started.