We put probabilistic circuits into diffusion language models and got a big boost in reasoning performance!

🚨 New paper alert!

We introduce Vision-Language Programs (VLP), a neuro-symbolic framework that combines the perceptual power of VLMs with program synthesis for robust visual reasoning.

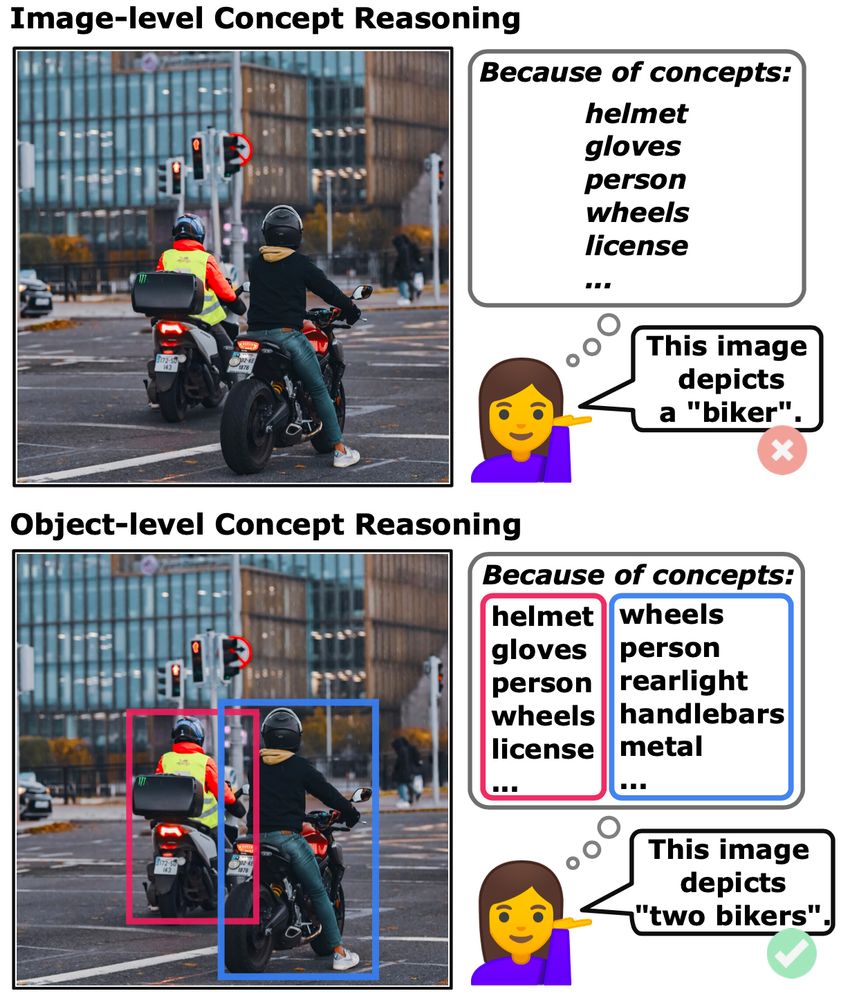

Can concept-based models handle complex, object-rich images? We think so! Meet Object-Centric Concept Bottlenecks (OCB), which add object-awareness to interpretable AI. Led by David Steinmann w/ @toniwuest.bsky.social & @kerstingaiml.bsky.social.

📄 arxiv.org/abs/2505.244...

#AI #XAI #NeSy #CBM #ML

I think we should be building systems that complement people; systems that do well the things that people do poorly; systems that make individuals and organizations more effective and more humane. 3/

🚀 TU Darmstadt leads the new Cluster of Excellence "Reasonable AI" – advancing trustworthy, efficient & adaptive AI grounded in common sense. A big thank you to the entire team, the university, and the state of Hesse for their tremendous work & support!

Horizontal, vertical, hybrid data partitioning: heterogeneity is tough to handle in federated learning!

🔥 In "Scaling Probabilistic Circuits via Data Partitioning" - accepted at #UAI25 - we unify the different settings through aggregation of learned client distributions: arxiv.org/abs/2503.08141

Excited to share that our paper got accepted at #ICML2025!! 🎉

We challenge Vision-Language Models like OpenAI’s o1 with Bongard problems, classic visual reasoning challenges, and uncover surprising shortcomings.

Check out the paper: arxiv.org/abs/2410.19546

& read more below 👇

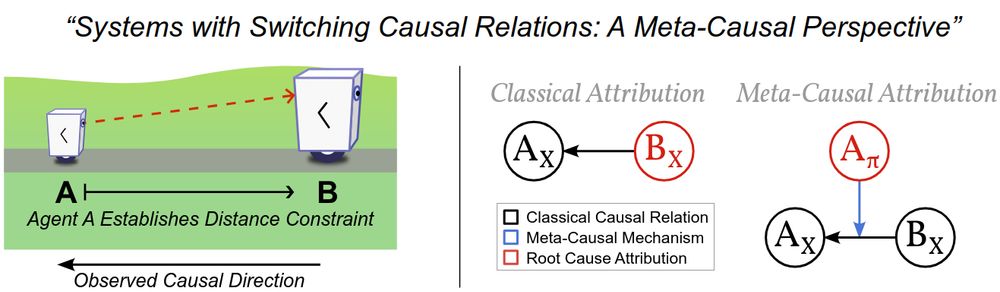

I'm excited to present our spotlight on meta-causal models at #ICLR2025 next week.

We model evolving causal graphs in dynamic systems. Applications to inference and attribution of agent actions. Paper: openreview.net/forum?id=J9V...

Visit our poster #441 during the Sat 3pm session.

Feeling in meme mode today. 😅

Happy to see our work at TMLR!

We systematically show the relationships between two apparently different fields, tensor factorizations and circuits, and how bridging the two enables us to exchange results, research opportunities in ML, and practical implementation solutions.

Thrilled to share our #ICLR2025 work on Meta-Causal States! 🌟 Causal graphs evolve with dynamic systems & agent actions. We show how to cluster causal models by qualitative behavior, revealing hidden dynamics & emergent relationships 🚀 #Causality #ML

https://arxiv.org/abs/2410.13054

When building human-allied AI systems, it is imperative to think about who is in control. In our blue sky paper at #AAAI, we argue that a deeper understanding of whether the human is in the loop or the AI is in the loop is essential! arxiv.org/pdf/2412.14232

Scaling continuous latent variable models as probabilistic integral circuits @nolovedeeplearning.bsky.social @gengala

Neural Concept Binder @WolfStammer

@florianbusch.bsky.social @moritzwillig.bsky.social @philosotim.bsky.social

Some of the causality crew are here and Florian is also working on PCs

Currently finishing my master's thesis and this plot is kinda mesmerizing ✨️🤩

GMMs were here 30 years ago and will be here in 30 years.

GANs? VAEs? nah.

I would really appreciate such an effort. Students and PhDs I talked to acknowledged that problem for model/algo comparability, but nobody knew of any ML resources treating it.

🌳 🌳🌲 🌲 ️

🌲 🌲 🌳 🌲

🌳 🌳 🌲 🌲 🌿 🌿

🌲🌳 🌳🌲☘️ 🌲🌳 🦔 🌳

🌳 🦔 🐿️ 🌳 🐿️🐿️ 🍄 🌲🦌🌲

🚂

🌳 🌳 🦔 🌳🌲 🌲 🌳

🌲 🌳 🌲 🌲 🌲 🌲 🌲