Excited to share our #ICCV2025 work Reusing Computation in Text-to-Image Diffusion for Efficient Generation of Image Sets!

Our method generates large sets of images using significantly less compute than standard diffusion.

📎https://ddecatur.github.io/hierarchical-diffusion/

1/

22.10.2025 20:22

👍 8

🔁 2

💬 1

📌 2

This work was led by Sining Lu and Guan Chen, in collaboration with Nam Anh Dinh, Itai Lang, Ari Holtzman, and me.

Check out our paper: arxiv.org/abs/2508.08228

We’re still actively developing LL3M, and we’d love to hear your thoughts! 7/

15.08.2025 04:15

👍 1

🔁 1

💬 0

📌 0

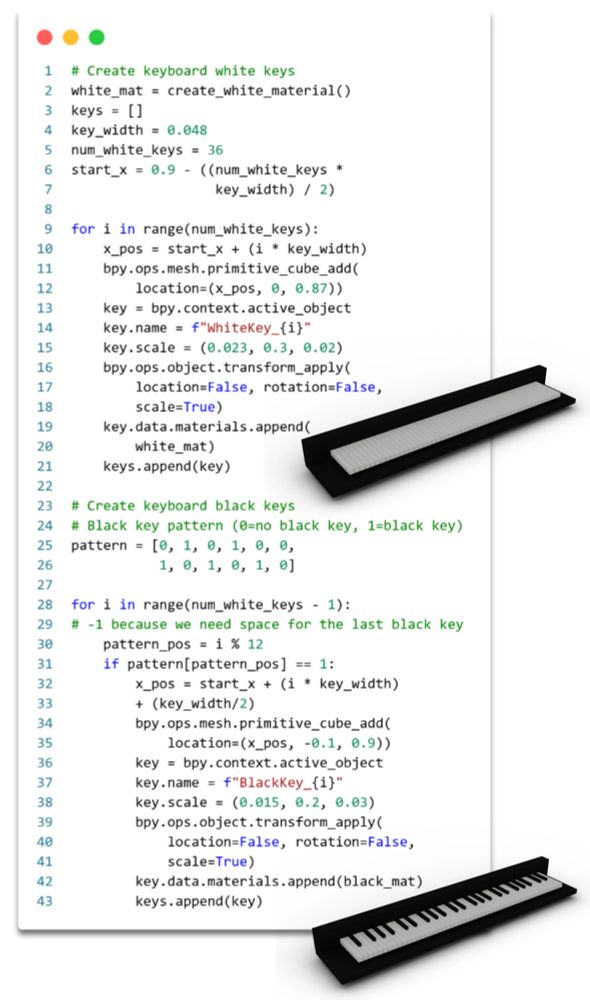

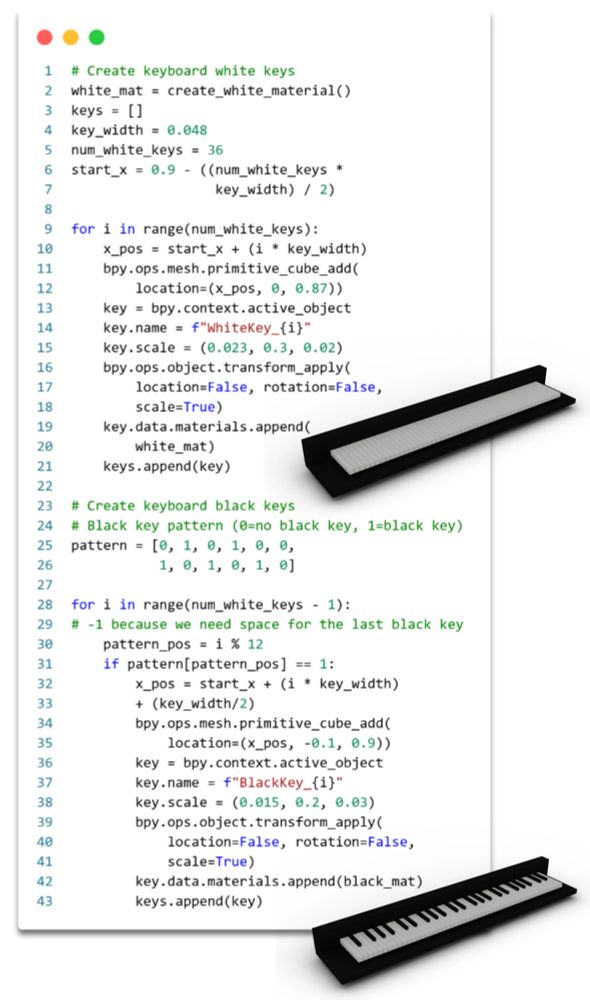

Another cool thing about LL3M: the Blender code it writes is actually readable. Clear structure, detailed comments, intuitive variable names. Easy to tweak a single parameter (e.g. key width) or even change the algorithmic logic (e.g. the keyboard pattern). 6/

15.08.2025 04:15

👍 0

🔁 0

💬 1

📌 0

LL3M generates 3D assets in 3 phases with specialized agents:

1️⃣ Initial Creation → break prompt into subtasks, retrieve relevant code snippets (BlenderRAG)

2️⃣ Auto-refine → critic spots issues, verification checks fixes

3️⃣ User-guided → iterative edits via user feedback

5/

15.08.2025 04:15

👍 0

🔁 0

💬 1

📌 0

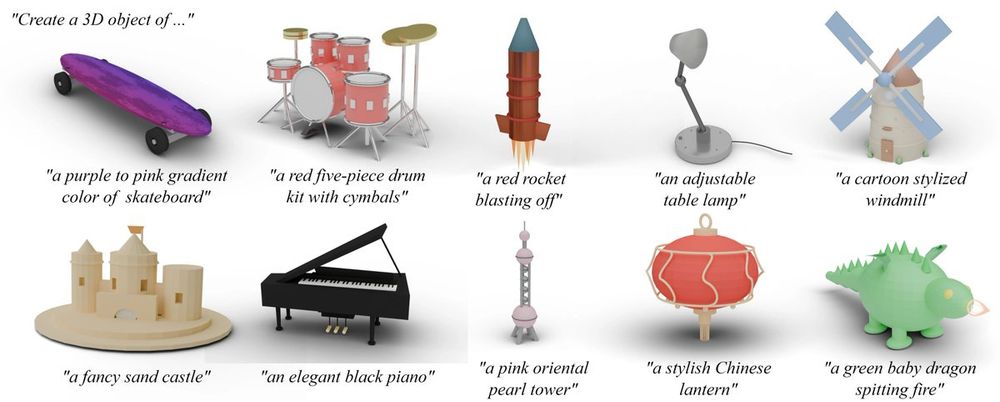

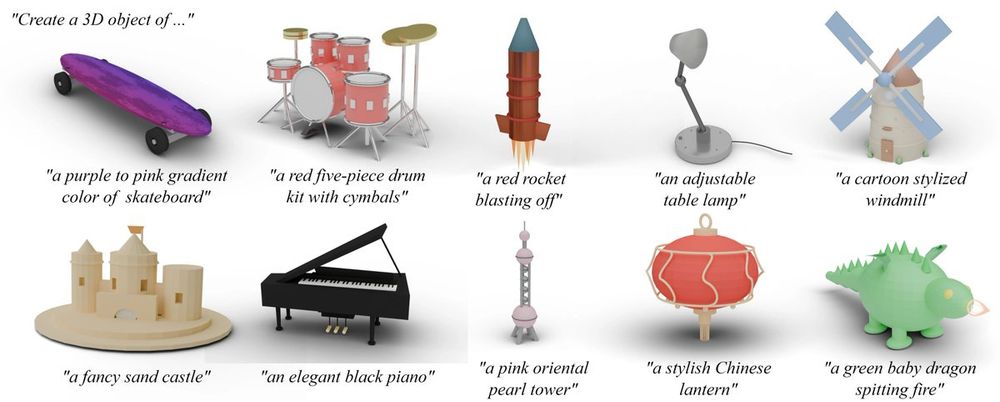

LL3M can create a wide range of shapes, without requiring specialized 3D datasets or fine-tuning. Every asset created is represented under the hood as editable Blender code. 4/

15.08.2025 04:15

👍 0

🔁 0

💬 1

📌 0

Even non-experts can jump right in and easily edit 3D shapes. Blender code created by LL3M generates a node graph that is packed with tunable parameters, enabling users to tweak colors, textures, patterns, lengths, heights, and more. 3/

15.08.2025 04:15

👍 0

🔁 0

💬 1

📌 0

What we ❤️ about LL3M: You're in the loop! If you want to make a tweak, LL3M can be your collaborative 3D design partner. And there's no need to regenerate the entire model each time - target a specific part, provide follow-up prompts, and the rest stays intact. 2/

15.08.2025 04:15

👍 0

🔁 0

💬 1

📌 0

We’ve been building something we’re 𝑟𝑒𝑎𝑙𝑙𝑦 excited about – LL3M: LLM-powered agents that turn text into editable 3D assets. LL3M models shapes as interpretable Blender code, making geometry, appearance, and style easy to modify. 🔗 threedle.github.io/ll3m 1/

15.08.2025 04:15

👍 2

🔁 0

💬 1

📌 0

Our work “Geometry in Style” will be presented at #CVPR2025 on Sunday at 4pm in ExHall D, poster 219. Drop by and say hi!

Our technique is capable of performing expressive text-driven deformations that preserve the input shape identity.

1/

15.06.2025 18:33

👍 22

🔁 4

💬 1

📌 0

Big update from The Workshop on Computer Vision For Mixed Reality @ CVPR 2025:

📝 Papers and schedule are now up!

Our Speakers:

Richard Newcombe (Meta)

Anjul Patney (NVIDIA)

Rana Hanocka (UChicago)

Laura Leal-Taixe (NVIDIA)

Margarita Grinvald (Meta)

📅 June 11, 8AM 📍Room 109.

cv4mr.github.io

03.06.2025 15:50

👍 15

🔁 3

💬 2

📌 4

Congrats Brian and team on the best paper honorable mention at #3dv2025 🥳🎉

Brian (hywkim-brian.github.io/site/) is going to start his PhD next year at Columbia with @silviasellan.bsky.social Make sure to watch out for their awesome works 📈📈📈

26.03.2025 03:53

👍 15

🔁 3

💬 1

📌 0

Tweet advertising that Rana Hanocka at UChicago CS is recruiting PhD students.

My colleague Rana Hanocka does exciting work on the frontier for 3D graphics and AI/ML, and she’s recruiting PhD students this cycle!

11.12.2024 06:18

👍 6

🔁 1

💬 1

📌 0