Finally, I want to thank the folks from Hugging Face for helping draft the official blog post (special shoutout to @clefourrier, @vanstriendaniel, @nathanhabib1011) and @Cohere_Labs for the research credits. :)

Evals are often the first step; we hope FilBench paves the way for language-specific adaptation, especially for Philippine languages! I've written some of my thoughts here:

ljvmiranda921.github.io/projects/20...

Here's the link to the paper and leaderboard:

📜 Paper: arxiv.org/abs/2508.03523

📊 Leaderboard: ud-filipino-filbench-leaderboard.hf.space/

This collaboration is exciting; it felt like assembling the Avengers of Filipino NLP. @acocodes and Conner are great collaborators, and I was happy to team up with @jcblaisecruz and @josephimperial_, who have been working on Filipino NLP for longer than I have!

🇵🇭 One of my research interests is improving the state of Filipino NLP

Happy to share that we're taking a major step towards this by introducing FilBench, an LLM benchmark for Filipino!

Also accepted at EMNLP Main! 🎉

Learn more:

huggingface.co/blog/filbench

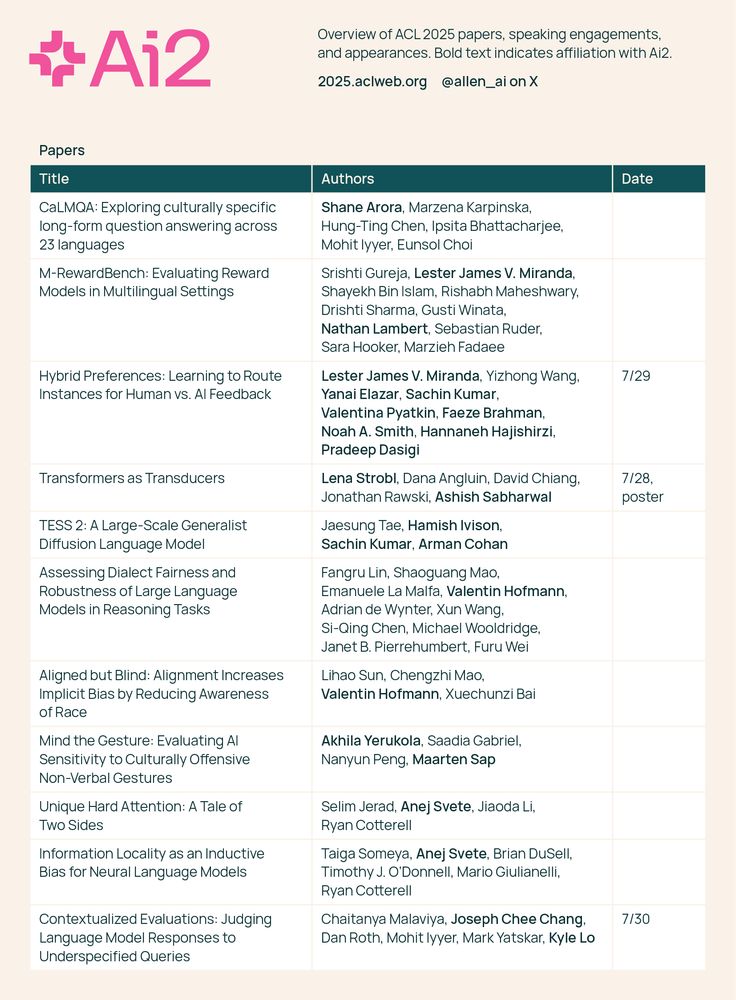

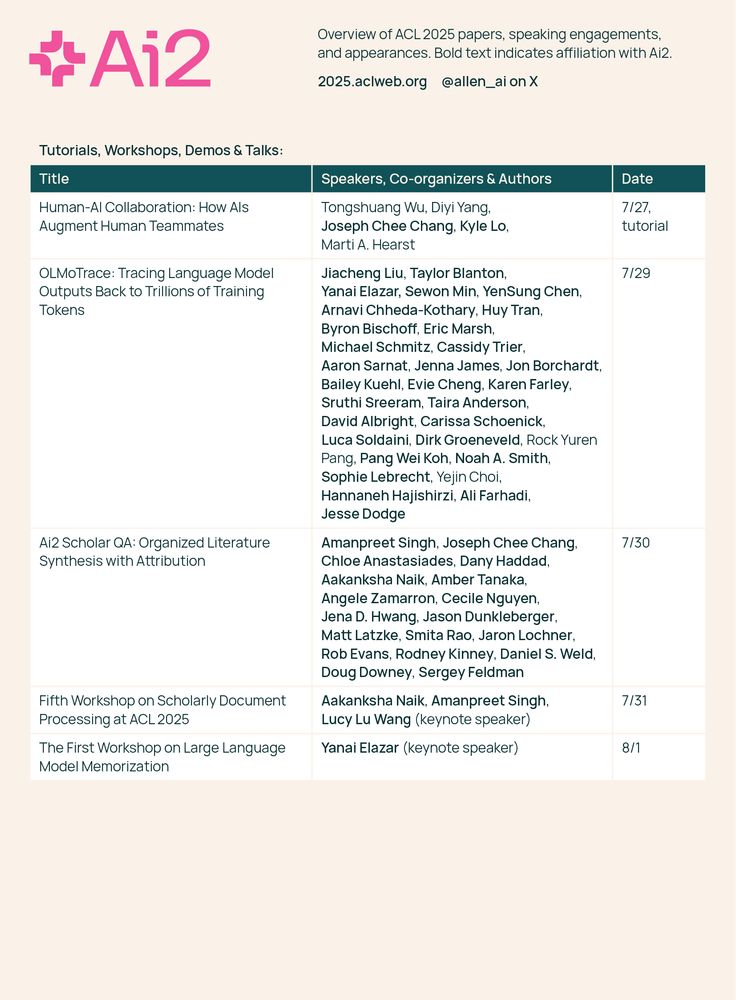

Ai2 is excited to be at #ACL2025 in Vienna, Austria this week. Come say hello, meet the team, and chat about the future of NLP. See you there! 🤝📚

I was also part of a large-scale @seacrowd.bsky.social collaboration on building a vision-language dataset tailored for Southeast Asian languages :) Also at ACL Main - aclanthology.org/2025.acl-lo...

July 29 Hall 4/5 10:30-12:00

#ACL2025 #ACL2025NLP

3️⃣ The UD-NewsCrawl Treebank: Reflections and Challenges from a Large-scale Tagalog Syntactic Annotation Project (Main) -

aclanthology.org/2025.acl-lo...

July 29 Hall 4/5 10:30-12:00

Collab with folks from UP Diliman

#ACL2025 #ACL2025NLP

2️⃣ M-RewardBench: Evaluating Reward Models in Multilingual Settings (Main) - aclanthology.org/2025.acl-lo...

July 28 Hall 4/5 11:00-12:30

Collab with folks from @cohereforai.bsky.social

#ACL2025 #ACL2025NLP

1️⃣ Hybrid Preferences: Learning to Route Instances for Human vs. AI Feedback (Main) - aclanthology.org/2025.acl-lo...

July 29 Hall 4/5 10:30-12:00

My project here at @ai2.bsky.social!

#ACL2025NLP

I'll be at @aclmeeting.bsky.social in Vienna! I'm going to present the following first/co-first author works:

fun learning stuff (+ phew i haven't blogged in a long time!): ljvmiranda921.github.io/notebook/202...

We’re thrilled that SEA-VL has been accepted to ACL 2025 (Main)!

Thank you to everyone who contributed to this project 🥳

Paper: arxiv.org/abs/2503.07920

Project: seacrowd.github.io/seavl-launch/

#ACL2025NLP #SEACrowd #ForSEABySEA

Image illustrating that ALM can enable Ensembling, Transfer to Bytes, and general Cross-Tokenizer Distillation.

We created Approximate Likelihood Matching, a principled (and very effective) method for *cross-tokenizer distillation*!

With ALM, you can create ensembles of models from different families, convert existing subword-level models to byte-level and a bunch more🧵

🕵🏻💬 Introducing Feedback Forensics: a new tool to investigate pairwise preference data.

Feedback data is notoriously difficult to interpret and has many known issues – our app aims to help!

Try it at app.feedbackforensics.com

Three example use-cases 👇🧵

OLMo 2 0325 32B Preference Mixture: Solves AI alignment challenges through diverse preferences

- Combines 7 datasets

- Filters for instruction-following capability

- Balances on-policy and off-policy prompts

- Enabled successful DPO of OLMo-2-0325-32B model

huggingface.co/datasets/all...

The logo for Tülu 405B.

Here is Tülu 3 405B 🐫 our open-source post-training model that surpasses the performance of DeepSeek-V3! It demonstrates that our recipe, which includes RLVR, scales to 405B - with performance on par with GPT-4o, & surpassing prior open-weight post-trained models of the same size including Llama 3.1.

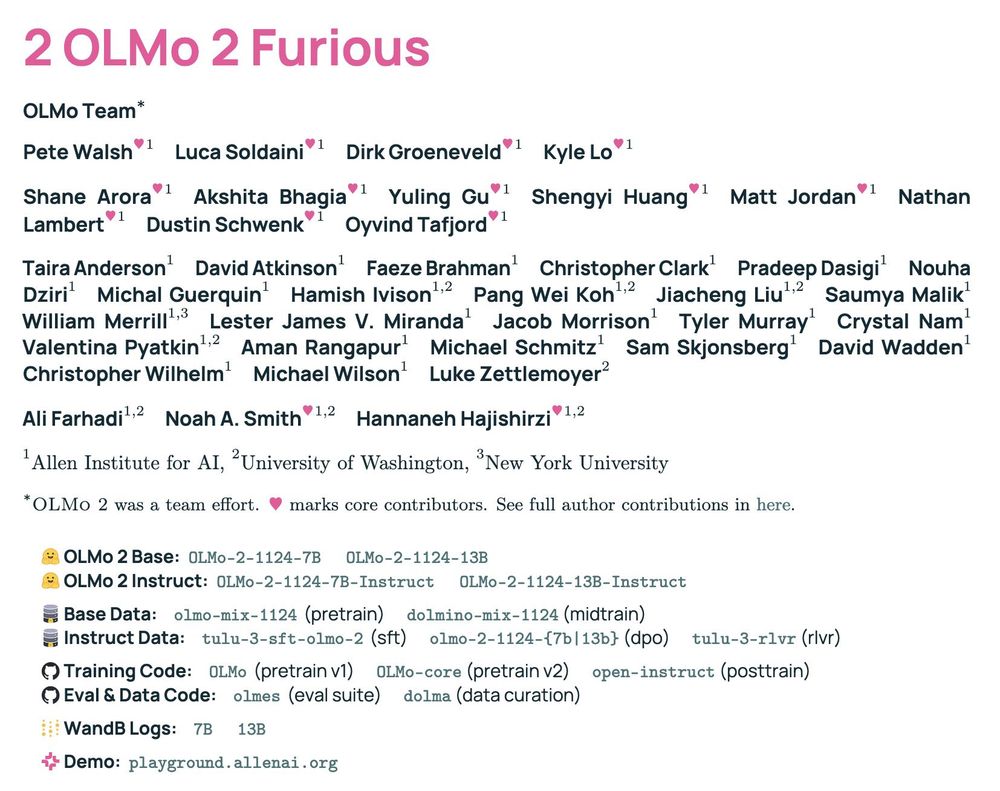

kicking off 2025 with our OLMo 2 tech report while payin homage to the sequelest of sequels 🫡

🚗 2 OLMo 2 Furious 🔥 is everythin we learned since OLMo 1, with deep dives into:

🚖 stable pretrain recipe

🚔 lr anneal 🤝 data curricula 🤝 soups

🚘 tulu post-train recipe

🚜 compute infra setup

👇🧵

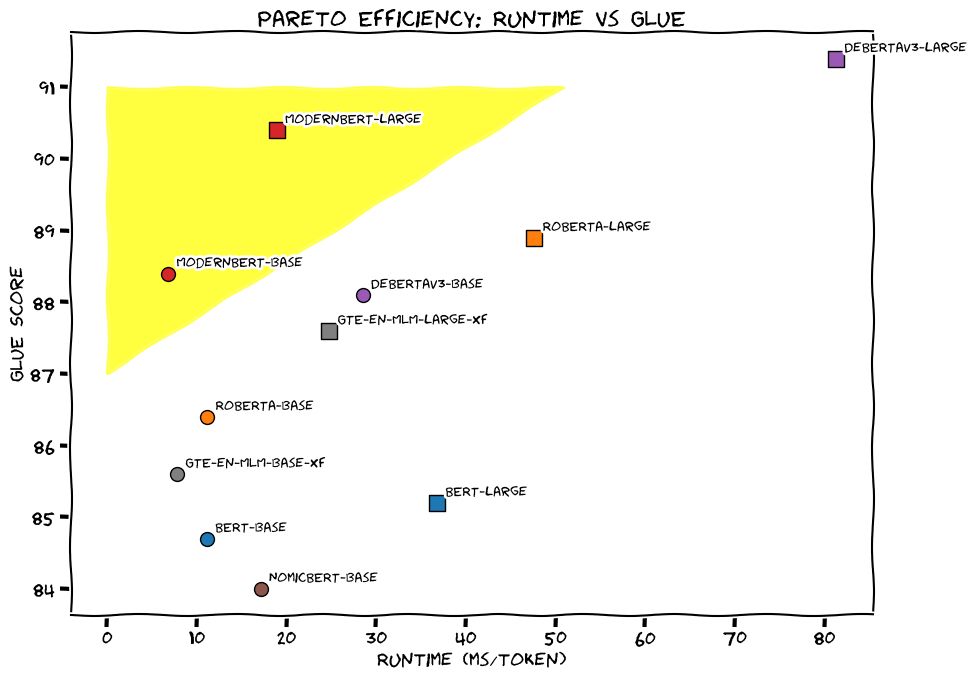

BERT is BACK! I joined a collaboration with AnswerAI and LightOn to bring you the next iteration of BERT.

Introducing ModernBERT: 16x larger sequence length, better downstream performance (classification, retrieval), the fastest & most memory efficient encoder on the market.

🧵

New research reveals a worrying trend: AI's data practices risk concentrating power overwhelmingly in the hands of dominant technology companies. With analysis from

@shaynelongpre.bsky.social @sarahooker.bsky.social @smw.bsky.social @giadapistilli.com www.technologyreview.com/2024/12/18/1...

Stop by our #NeurIPS tutorial on Experimental Design & Analysis for AI Researchers! 📊

neurips.cc/virtual/2024/tutorial/99528

Are you an AI researcher interested in comparing models/methods? Then your conclusions rely on well-designed experiments. We'll cover best practices + case studies. 👇

We just updated the AI for Humanists guide to model selection to include Llama 3.3 and our recommended best cost/capability tradeoff, Llama 3.1 8B. What have you tried, and what would you suggest?

aiforhumanists.com/guides/models/

the science of LMs should be fully open✨

today @akshitab.bsky.social @natolambert.bsky.social and I are giving our #neurips2024 tutorial on language model development.

everything from data, training, adaptation. published or not, no secrets 🫡

tues, 12/10, 9:30am PT ☕️

neurips.cc/virtual/2024...

Come chat with me at #NeurIPS2024 and learn about how to use Paloma to evaluate perplexity over hundreds of domains! ✨We have stickers too✨

⭐️ In Feb 2025, we're going to launch Grassroots Science, an ambitious, year-long, massive-scale, fully open-source initiative aimed at developing multilingual LLMs aligned to diverse and inclusive human preferences.

🌐 Check our website: grassroots.science.

#NLProc #GrassrootsScience

Thank you @oxykodit.bsky.social !

Happy to share this and excited to bring this to the public! Nice collab with folks from the University of the Philippines (UP), @angelaquino_ph and Elsie Or, for this impactful work :) Hoping to have the official UD release next year as well.

We're releasing the largest Universal Dependencies (UD) treebank for Tagalog, UD-NewsCrawl! This dataset has been a long time coming, but I'm glad to see this through: 15k+ sentences versus the previous ~150 sentences from older Tagalog treebanks.

🤗 : huggingface.co/datasets/UD-...

📝 : Paper soon!

I am seriously behind on uploading Learning Machines videos, but I did want to get @jonathanberant.bsky.social's out sooner rather than later. It's not only a great talk, it also gives a remarkably broad overview and contextualization, so it's an excellent way to ramp up on post-training.

youtu.be/2AthqCX3h8U