think of techniques and tools that give policymakers and safety teams meaningful ways of monitoring, verifying and governing AGI.

x.com/withmartian/...

think of techniques and tools that give policymakers and safety teams meaningful ways of monitoring, verifying and governing AGI.

x.com/withmartian/...

But this isn't just about code. It's about Human Empowerment.

We don't just want nice visualizations or circuits in toy models. We want methods that allow us to meaningfully explain a system and fix it when things go wrong.

The theme of the prize is Interpretability for Code Generation.

Why? Because code offers ground truth. Unlike natural language, code is formal and allows us to measure faithfulness and track progress

We are taking a skeptical lens to the current state of the art. Research needs to answer the hard questions holding the field back - aka Actionable

Are our methods Scalable? → Are they Complete? → And most critically—Are they actually useful for fix things?

Incredibly excited to announce $1 Million prize pool to solve the world’s most important scientific problem in Interpretability.

The goal is to turns hard interpretability questions into tools for human empowerment, oversight and governance.

Special thanks to @maosbot.bsky.social for all the support and encouragement!

Much credit to my wonderful TA's🙌:

@hunarbatra.bsky.social

Matthew Farrugia-Roberts

(Head TA) and

@jamesaoldfield.bsky.social

🎟 We also have the wonderful

Marta Ziosi

asa guest tutorial speaker on AI governance and regulations!

🔥 We’re lucky to host world-class guest lecturers:

•

@yoshuabengio.bsky.social

– Université de Montréal, Mila, LawZero

•

@neelnanda.bsky.social

Google DeepMind

•

Joslyn Barnhart

Google DeepMind

•

Robert Trager

– Oxford Martin AI Governance Initiative

We’ll cover:

1️⃣ The alignment problem -- foundations & present-day challenges

2️⃣ Frontier alignment methods & evaluation (RLHF, Constitutional AI, etc.)

3️⃣ Interpretability & monitoring incl. hands-on mech interp labs

4️⃣ Sociotechnical aspects of alignment, governance, risks, Economics of AI and policy

🔑 What it is:

An AIMS CDT course with 15 h of lectures + 15 h of labs

💡 Lectures are open to all Oxford students, and we’ll do our best to record & make them publicly available.

🚨New AI Safety Course

@aims_oxford

!

I’m thrilled to launch a new called AI Safety & Alignment (AISAA) course on the foundations & frontier research of making advanced AI systems safe and aligned at

@UniofOxford

what to expect 👇

robots.ox.ac.uk/~fazl/aisaa/

Evaluating the Infinite

🧵

My latest paper tries to solve a longstanding problem afflicting fields such as decision theory, economics, and ethics — the problem of infinities.

Let me explain a bit about what causes the problem and how my solution avoids it.

1/N

arxiv.org/abs/2509.19389

🚀 Excited to have 2 papers accepted at #NeurIP2025! 🎉 congrats to my amazing co-authors!

More details (and more bragging) soon! and maybe even more news on sep 25 👀

See you all in… Mexico? San Diego? Copenhagen? Who knows! 🌍✈️

🚨 NEW PAPER 🚨: Embodied AI (incl. AI-powered drones, self-driving cars and robots) is here, but policies are lagging. We analyzed the EAI risks and found significant gaps in governance

arxiv.org/pdf/2509.00117

Co-authors Jared Perlo @fbarez.bsky.social Alex Robey & @floridi.bsky.social

1\4

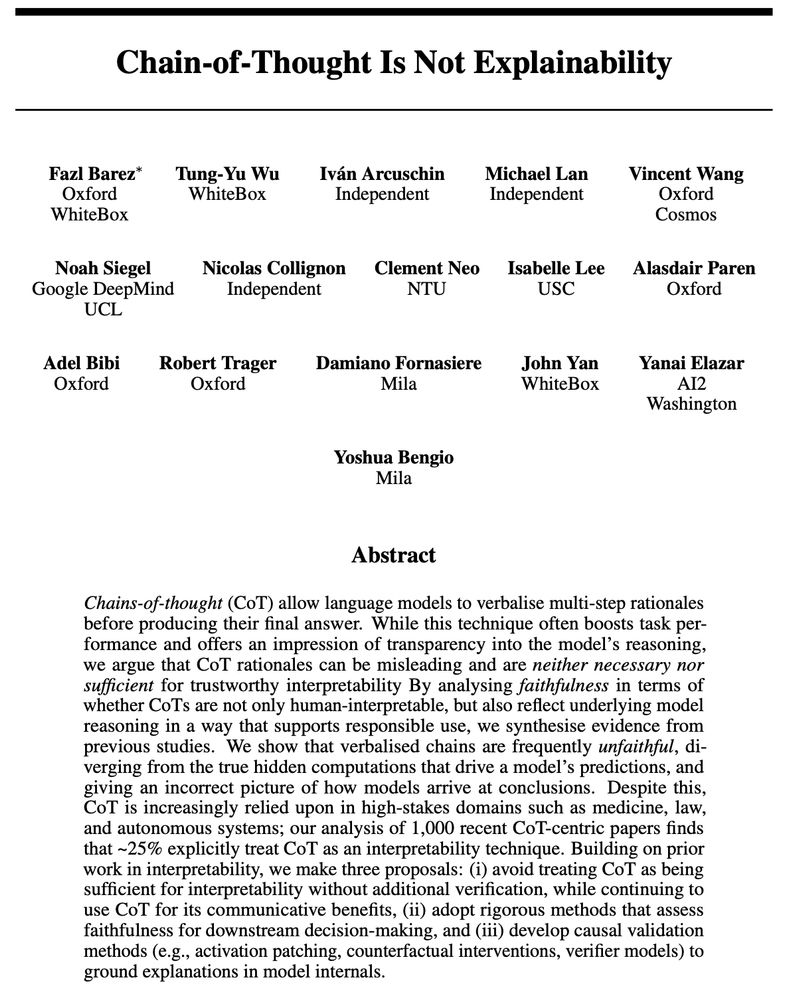

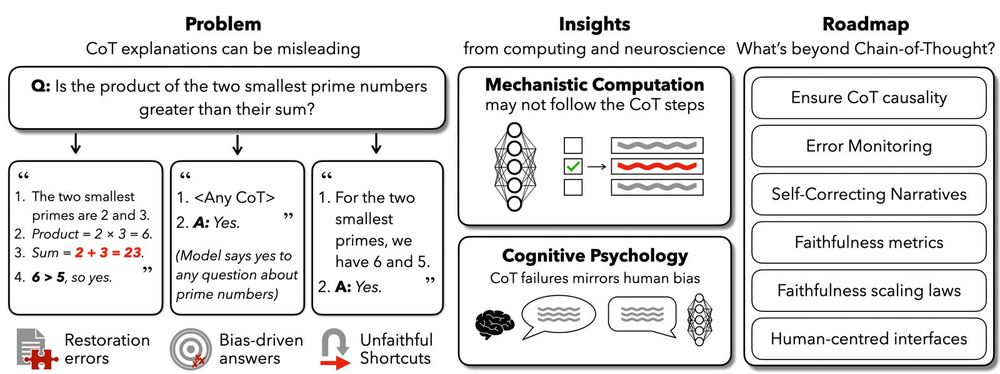

Other works have highlighted that CoTs ≠ explainability alphaxiv.org/abs/2025.02 (@fbarez.bsky.social), and that intermediate (CoT) tokens ≠ reasoning traces arxiv.org/abs/2504.09762 (@rao2z.bsky.social).

Here, FUR offers a fine-grained test if LMs latently used information from CoTs for answers!

It is so easy to confuse chain of thought and explainability and in fact in a lot of the media it is presented as if with current LLMs we are allowed to view their actual thought processes. It is not that!

Link to the paper www.alphaxiv.org/abs/2025.02

@alasdair-p.bsky.social, @adelbibi.bsky.social , Robert Trager, Damiano Fornasiere, @john-yan.bsky.social , @yanai.bsky.social@yoshuabengio.bsky.social

Work done with my wonderful collaborators @tonywu1105.bsky.social Iván Arcuschin, @bearraptor, Vincent Wang, @noahysiegel.bsky.social , N. Collignon, C. Neo, @wordscompute.bsky.social pute.bsky.social

Bottom line: CoT can be useful but should never be mistaken for genuine interpretability. Ensuring trustworthy explanations requires rigorous validation and deeper insight into model internals, which is especially critical as AI scales up in high-stakes domains. (9/9) 📖✨

Inspired by cognitive science, we suggest strategies like error monitoring, self-correcting narratives, and dual-process reasoning (intuitive + reflective steps). Enhanced human oversight tools are also critical to interpret and verify model reasoning. (8/9)

We suggest treating CoT as complementary rather than sufficient for interpretability, developing rigorous methods to verify CoT faithfulness, and applying causal validation techniques like activation patching, counterfactual checks, and verifier models. (7/9)

Why does this disconnect occur? One possibility is that models process information via distributed, parallel computations. Yet CoT presents reasoning as a sequential narrative. This fundamental mismatch leads to inherently unfaithful explanations. (6/9)

Another red flag: models often silently correct errors within their reasoning steps. They may produce the correct final answer by reasoning steps that are not verbalised, while the steps they do verbalise remain flawed, creating an illusion of transparency. (5/9)

Alarmingly, explicit prompt biases can easily sway model answers without ever being mentioned in their explanations. Models rationalize biased answers convincingly, yet fail to disclose these hidden influences. Trusting such rationales can be dangerous. (4/9)

Our analysis shows high-stakes domains often rely on CoT explanations: ~38% of medical AI, 25% of AI for law, and 63% of autonomous vehicle papers using CoT misclaim it as interpretability. Misplaced trust here risks serious real-world consequences. (3/9)

Language models can be prompted or trained to verbalize reasoning steps in their Chain of Thought (CoT). Despite prior work showing such reasoning can be unfaithful, we find that around 25% of recent CoT-centric papers still mistakenly claim CoT as an interpretability technique. (2/9)

Excited to share our paper: "Chain-of-Thought Is Not Explainability"! We unpack a critical misconception in AI: models explaining their steps (CoT) aren't necessarily revealing their true reasoning. Spoiler: the transparency can be an illusion. (1/9) 🧵

Co-authored with: Isaac Friend, Keir Reid, Igor Krawczuk, Vincent Wang, @jakobmokander.bsky.social kander.bsky.social , @philiptorr.bsky.social , Julia C Morse and Robert Trager