I remember discussing this approach with you in Milano!

Thanks to the team, Kien Nguyen, Theo Gevers, @cgmsnoek.bsky.social, and @martin-r-oswald.bsky.social from the University of Amsterdam!

We experimented with different backbones, camera pose representations, scalability, and attention mechanisms. Our evaluation spans hundreds of full-length videos and several metrics. To simulate a real-world application, we do not align the predicted trajectories to the ground truth.

VoT requires no calibration or post-optimization, runs in real time, and can process thousands of frames. It is trained on a large amount of real-world indoor data but also works well in outdoor scenes. It uses only camera poses as supervision, which makes it broadly accessible.

📽️ Check out Visual Odometry Transformer! VoT is an end-to-end model for getting accurate metric camera poses from monocular videos.

vladimiryugay.github.io/vot/

Will you release the slides?👀 They're superb

I will be presenting our previous work at CVPR Nashville. Drop by if you want to chat!

This work was conducted in collaboration with Kersten Thies, @lucacarlone.bsky.social, Theo Gevers, @martin-r-oswald.bsky.social, and Lukas Schmid at the Computer Vision Group of the University of Amsterdam and the SPARKLab of @mit.edu.

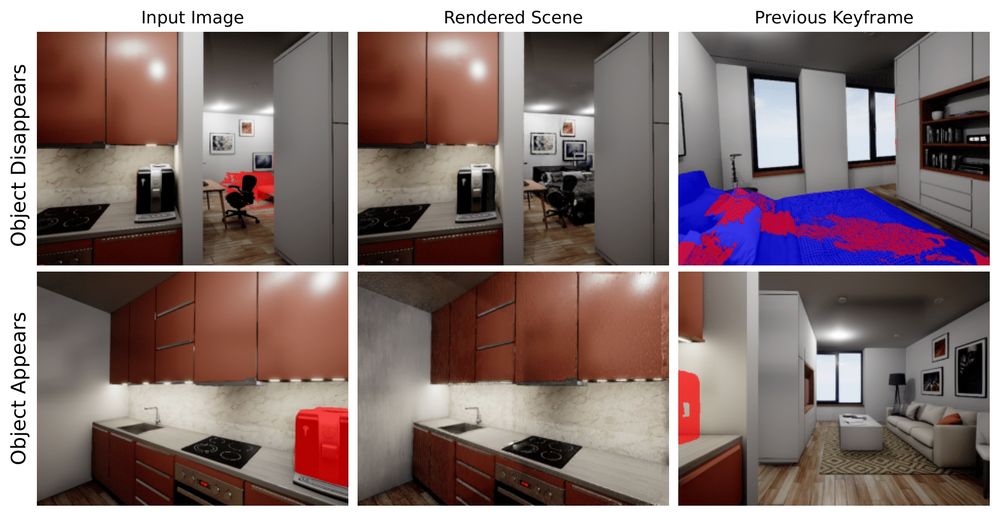

We evaluate our method on synthetic and real-world datasets that undergo significant changes, including the movement, removal, and addition of large pieces of furniture, cutlery, a coffee machine, and pictures on the walls.

GaME detects scene changes and directly manipulates the 3D Gaussians to keep the map up to date. In addition, our keyframe management system identifies and removes pixels that observe stale geometry, minimizing the amount of discarded information.

We found two main problems. First, 3D Gaussian maps cannot easily "optimize out" geometry changes on the fly. Second, frames observing the old state of the scene contaminate the optimization, causing visual artifacts and inconsistencies.

Imagine you want to create a 3DGS map of your apartment. You reconstruct your kitchen and move on to the bedroom. While you are in the bedroom, someone moves a chair and adds a table in the kitchen without telling you. Here's what can happen to your reconstruction👇

Introducing "Gaussian Mapping of Evolving Scenes"! We present an RGBD mapping system with novel view synthesis capabilities that accurately reconstructs scenes that change over time.

vladimiryugay.github.io/game/

Resubmission mentality in marathons

Munich 2023 -> 8 months prep -> COVID -> ❌

Amsterdam 2024 -> 6 months prep -> COVID -> ❌

Leiden 2025 -> 6 months prep -> lfg ✅

🔹@rerun.io visualisation script for easy debugging, analysis, and replaying of reconstruction results with minimal effort

🔹Fully Pythonic pose graph optimisation module. The live-coding session by the author of the core library is tremendously enlightening: www.youtube.com/watch?v=yXWk...

🔹Place recognition module based on a large vision model - no more annoying dependency chains for DBoVW or NetVLAD

🔹Simple yet efficient mechanism for correcting and merging multiple 3D Gaussian Splatting maps into a global map

⏩Code release for MAGiC-SLAM!

github.com/VladimirYuga...

We vibe-coded hard to make the code as simple as possible. Here are some features you can seamlessly integrate into your 3D reconstruction pipeline right away:

🔹DinoV2-based place recognition module - no more annoying dependency chains of DBoVW or NetVLAD

🔹A simple yet efficient mechanism for correcting and merging multiple 3D Gaussian Splatting sub-maps into a global map

Fantastic work! Can't wait to try it out!

It feels like a tighter bubble on bsky. It also seems that the more aligned people are, the less they engage.

Ye ye. Or monst3r-project.github.io. One can use them as a prior for dynamic envs just like mast3r for static ones

There's so much progress in there partially bc *3r and splats are inexpensive. GPU poor can iterate fast :)

Probably more methods for dynamic environments. Smth monst3r-like

Last year splats, this year *3r

This work was done with amazing collaborators Theo Gevers and @martin-r-oswald.bsky.social at the Computer Vision Group of the University of Amsterdam.

7/7

Finally, we extend the evaluation to novel view synthesis on real-world datasets. We extracted sequences from the egocentric Aria dataset to simulate multi-agent operation and prepared a held-out test set with novel-view trajectories, giving a comprehensive evaluation of our system's capabilities.

6/7

Our sub-maps inherently support local pose corrections provided by the loop closure module. Combined with an efficient caching scheme and a two-stage merging process, this allows for fast and precise global map reconstruction.

5/7