This de-identification token is consistently present throughout the dataset and contributes nothing towards improving the image quality.

This points towards a major flaw in the dataset, given that MIMIC is one of the most significant medical datasets for T2I generation. 💔

It turns out that this de-identification token (“___”) holds the most significant contribution towards memorizing training images.

In other words, steps taken to protect patient information are in fact posing a threat to it.

The MIMIC dataset contains pairs of images and corresponding text reports. These are raw reports describing the images.

In the dataset, sensitive patient information is hidden, or de-identified. This is done by replacing it with three underscores (“___”).
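
If you want to check how common the placeholder is in your own copy of the reports, here is a minimal sketch. It is not code from the preprint: the function name `deid_stats` and the toy reports are made up for illustration, and it assumes each report is already loaded as a plain string.

```python
DEID_TOKEN = "___"  # the three-underscore placeholder described above


def deid_stats(reports):
    """Return (share of reports containing the token, mean token count per report)."""
    if not reports:
        return 0.0, 0.0
    # str.count counts non-overlapping occurrences of the placeholder.
    counts = [report.count(DEID_TOKEN) for report in reports]
    share_with_token = sum(c > 0 for c in counts) / len(reports)
    mean_per_report = sum(counts) / len(reports)
    return share_with_token, mean_per_report


# Invented toy reports for illustration only; these are NOT taken from MIMIC.
toy_reports = [
    "Compared to the prior study from ___, no change.",
    "No acute cardiopulmonary process.",
    "___ year old with cough. Dr. ___ was notified.",
]

share, mean = deid_stats(toy_reports)
print(f"reports with token: {share:.2f}, mean tokens per report: {mean:.2f}")
```

Counting per report (not just overall) is a deliberate choice here, since a report can carry several placeholders at once.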

Are you working on Text-to-Image generation of Chest X-Rays using the MIMIC dataset?

Here is something I found over the last weekend. 🧵

Observations documented in this preprint -

arxiv.org/abs/2502.07516

Hello all,

What’s the best tool to make nice figures for academic AI papers?

Not an ML-related post but I am just as happy to share this

1000 followers on Bluesky already, that’s crazy

A new starter pack for Medical AI researchers!

go.bsky.app/PddA2uy

Done!

Sharing with people who might find this relevant - go.bsky.app/r5eVvT, go.bsky.app/PJKJ8vK, bsky.app/profile/berk...

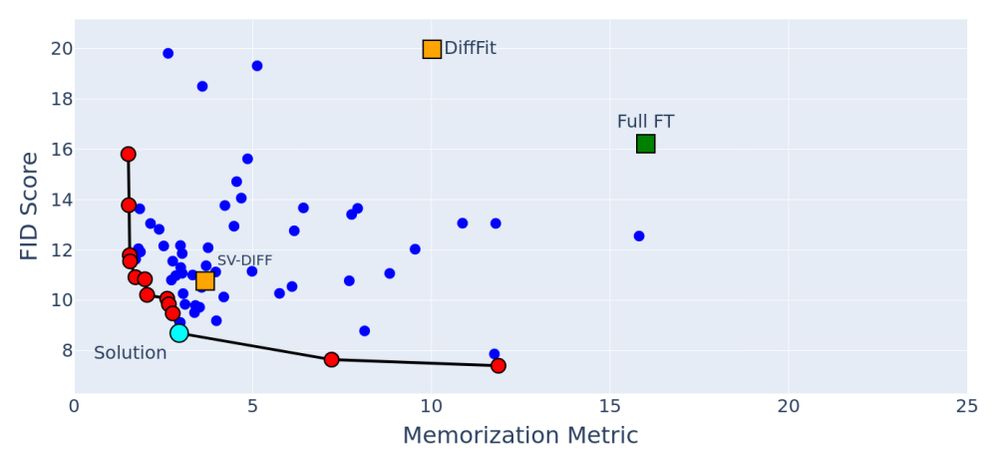

Hence, we devise MemControl - a framework that searches for the optimal parameters to be fine-tuned to:

(1) Improve image generation quality

(2) Reduce Memorization!

MemControl leads to optimal model capacity that should be used during fine-tuning: Not more, not less!

We also found that fine-tuning different subsets of parameters in a diffusion model can affect generative quality and memorization differently!

Each marker in the figure is a diffusion model fine-tuned on the same data but with a different parameter subset.

Full FT (green) leads to high memorization!

We provide empirical proof that reducing the model capacity (by fine-tuning fewer parameters) can lead to reduced memorization!

Q. How to fine-tune with fewer parameters? 🤔

A. Parameter-Efficient Fine-Tuning (PEFT) ✨
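
To make "fewer parameters" concrete, here is a rough sketch of picking a parameter subset by name and measuring how much of the model it covers. This is not the MemControl search and not any PEFT library's API: the parameter names, sizes, and the helper names `select_trainable` and `trainable_fraction` are all hypothetical. In a real PyTorch setup the names would come from `model.named_parameters()`, and you would set `requires_grad = False` on everything outside the chosen subset.

```python
from fnmatch import fnmatchcase


def select_trainable(param_sizes, patterns):
    """Return the parameter names that match at least one glob pattern."""
    return [
        name
        for name in param_sizes
        if any(fnmatchcase(name, pattern) for pattern in patterns)
    ]


def trainable_fraction(param_sizes, patterns):
    """Share of all parameters (by element count) that would be fine-tuned."""
    chosen = select_trainable(param_sizes, patterns)
    total = sum(param_sizes.values())
    return sum(param_sizes[name] for name in chosen) / total


# Hypothetical parameter names and element counts, for illustration only.
toy_params = {
    "unet.down.0.attn.to_q.weight": 1000,
    "unet.down.0.attn.to_k.weight": 1000,
    "unet.down.0.resnet.conv.weight": 6000,
    "unet.mid.attn.to_q.weight": 2000,
}

attention_only = ["*attn*"]  # fine-tune attention weights, freeze the rest
print(select_trainable(toy_params, attention_only))
print(trainable_fraction(toy_params, attention_only))
```

Swapping the pattern list gives a different subset to fine-tune, and with it a different trainable share.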

Figure showing how conventional fine-tuning methods can lead to replication of artifacts (red boxes)

The conventional way of fine-tuning models (full fine-tuning) can replicate artifacts in X-Rays, which can in turn leak patient information and endanger patient privacy.

Artifact replication is shown in red boxes.

Delighted to share our work "𝐌𝐞𝐦𝐂𝐨𝐧𝐭𝐫𝐨𝐥" now accepted at 𝐖𝐀𝐂𝐕 '𝟐𝟓. We show strong results for medical image generation and also establish an initial benchmark for generative quality and memorization of synthetic chest x-rays!

Paper: arxiv.org/abs/2405.19458

Code: github.com/Raman1121/Di...

More👇

Would love to join!

MIDL Conference has joined BlueSky!

bsky.app/profile/midl...

Please add me 😂

I would love to be added 😂

@smcgrath.phd

Again, very sorry to hear about what you are going through. Advertising here is a great idea. I personally got some good advice about a condition I was going through. Wish I could be more helpful though. Wishing you the best!

So sorry to hear about this @ian-goodfellow.bsky.social . Do you think any of this might be related to prolonged headphone usage in addition to many other factors? Or if that exacerbates the condition?

For my fellow medical AI researchers, here is a starter pack - go.bsky.app/r5eVvT

I would consider myself slightly cracked haha. Would love to be added!

Would love to be added!

Would love to be added!!

A starter pack I would highly recommend (not biased at all 😉)

Would love to be added! Currently doing a PhD in Biomedical AI