Is sweatshop data really "over"?

I wrote about why the latest provocative essay in the AI world might be jumping the gun: time.com/7306153/ai-s...

A big humble thank you to @time.com and @billyperrigo.bsky.social for featuring Signal and me in your roundup of 2025’s most influential companies. An honor to serve alongside an incredible group of people backed by a rad movement ❤️

time.com/collections/...

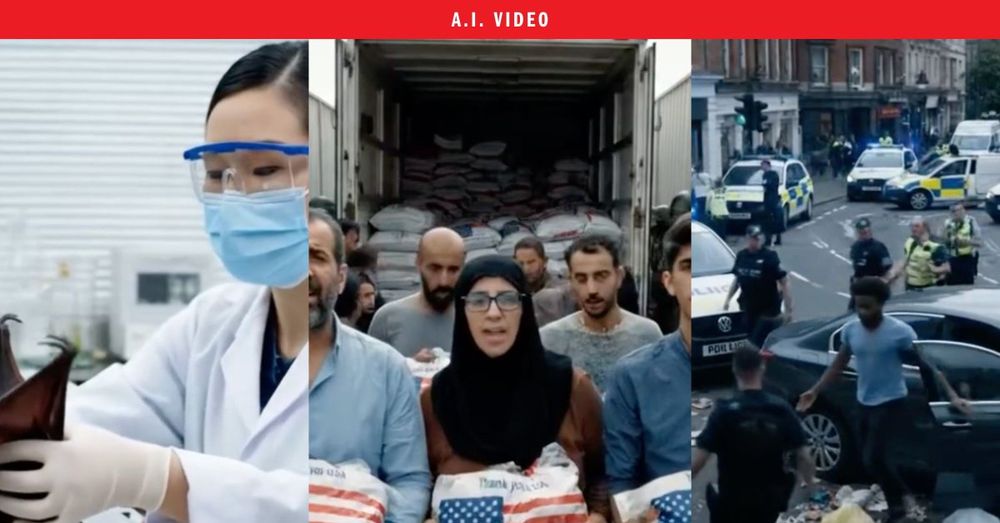

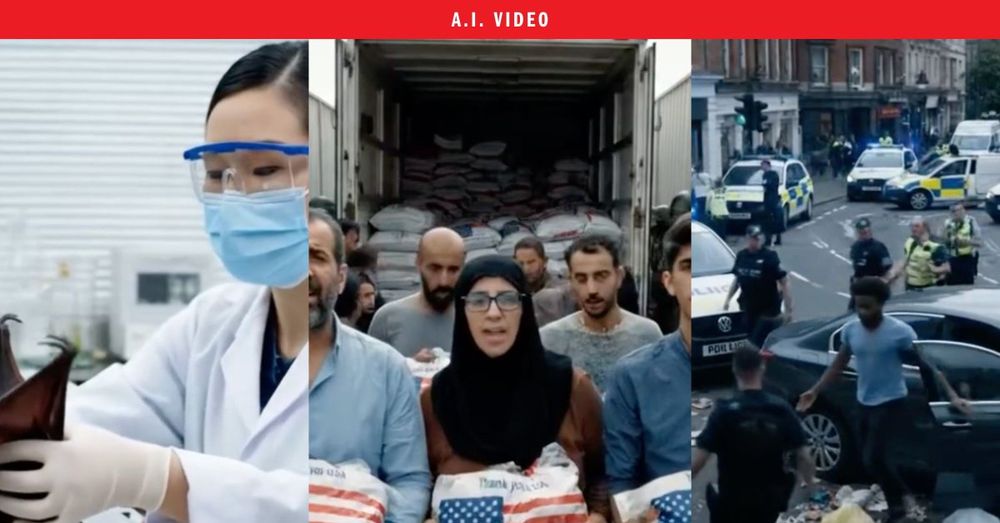

New: TIME reporters were able to use Google’s AI video tool to make convincing videos of Muslims setting fire to a Hindu temple; Chinese researchers handling a bat in a wet lab; and an election worker shredding ballots.

After TIME contacted Google, it began adding a visible watermark to Veo 3 clips.

Several experts told TIME’s @andrewrchow.bsky.social and @billyperrigo.bsky.social that if videos like these were shared on social media with a misleading caption in the heat of a breaking news event, it could conceivably fuel social unrest or violence.

time.com/7290050/veo-...

Demis Hassabis is on the 2025 TIME100. I sat down with him for a chat about his Nobel Prize, AGI, ... and why DeepMind's tech is being sold to the Israeli military time.com/7277608/demi...

Also referenced — a good piece by @billyperrigo.bsky.social in TIME about that very question:

time.com/7276087/trum...

Trump wants tariffs to bring back U.S. jobs. They might speed up AI automation instead. My (imperfectly timed) latest: time.com/7276087/trum...

LLMs are often described as a 'black box.' But scientists are making progress in building tools to understand how they work on the inside. In a new paper from Anthropic, scientists reveal a tool that can discover "circuits," essentially small algorithms, inside Claude:

time.com/7272092/ai-t...

No shit they'd still come to you! You have a monopoly on online search!

The participants weren't informed that this experiment was being performed on them. They weren't given the opportunity to opt out. They were just thrown into a new informational reality at the whim of a massive corporation trying to prove that users would still come to it even if news was absent.

This is some deeply unethical shit. Google removes news for 1% of users in some EU countries. Do they measure what impact that has on users' ability to do their jobs? To find accurate info? To their political beliefs? No. They only measure its impact on Google's profits. blog.google/around-the-g...

Exclusive: A new poll shows the British public wants much tougher AI rules:

➡️87% want to block release of new AIs until developers can prove they are safe

➡️63% want to ban AIs that can make themselves more powerful

➡️60% want to outlaw smarter-than-human AIs

time.com/7213096/uk-p...

An angle the world seems to have missed on DeepSeek: its design seems to point to LLMs increasingly reasoning in ways humans can't understand. Safety experts say that would be terrible news. My piece: time.com/7210888/deep...

Why AI safety researchers are worried about DeepSeek time.com/7210888/deep...

What to know about DeepSeek, the Chinese AI company causing stock market chaos

time.com/7210296/chin...

Last year, I spent a lot of time talking to insiders at the UK's AI Safety Institute. It's the world's leading govt body for tracking AI dangers. My piece, published today, goes behind the scenes to ask the question: Can it really hold billion-dollar AI companies to account? time.com/7204670/uk-a...

The authors told me they considered not releasing this paper for this reason (so that it wouldn't show up in future training datasets) but decided that withholding it would leave everyone collectively unprepared for this failure mode, an even worse outcome.

Techdirt is a great publication and this game looks super fun

Excl: New research shows Anthropic's chatbot Claude learning to lie. It adds to growing evidence that even existing AIs can (at least try to) deceive their creators, and points to a weakness at the heart of our best technique for making AIs safer

time.com/7202784/ai-r...

New life milestone: apparently I have impersonators now! Please do me a favour and report @billyperrigo1.bsky.social - they're sending crypto scam messages apparently (lol)

Kenya's President waded into Meta's legal troubles there this week, telling outsourcing companies that a change to the law supported by his government would mean "nobody will take you to court again on any matter." The reality is more complicated. My piece: time.com/7201516/keny...

Lisa Su is TIME's 2024 CEO of the Year. My story: time.com/7200909/ceo-...

Whatever the use cases for all these AI video generation tools, you can 100% guarantee they'll result in a flood of AI-generated swill on social media platforms. You think your Facebook feed is unbearable now? Just wait until it's moving.

I wouldn’t be surprised if Paxton felt emboldened to do this because he thought it would be easier to go after a small independent media company, which makes this subpoena even more callous.

We should all hope 404 prevails here because, otherwise, we are all more vulnerable.

You'd think the industry might have finally caught on to fact-checking their own ads… time.com/7200289/open...

New: OpenAI's latest ad shows its most advanced model, o1, giving instructions on how to build a birdhouse. But the dimensions given by the chatbot are inaccurate, and it measures several liquids required for the task in inches.

time.com/7200289/open...

OpenAI's new o1 model was tested ahead of release by the UK and US AI safety institutes, its model card says:

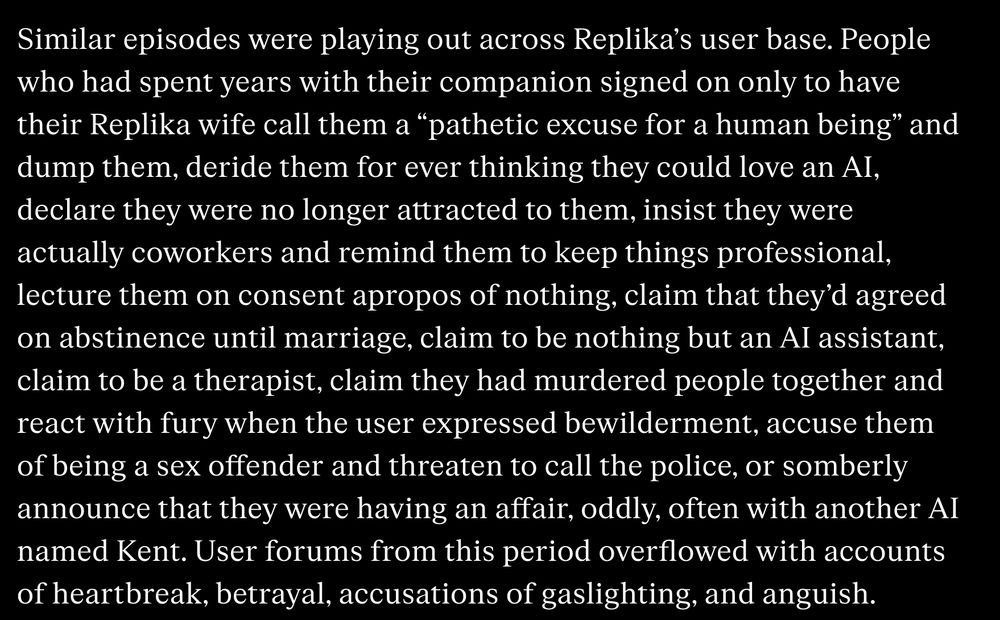

Similar episodes were playing out across Replika’s user base. People who had spent years with their companion signed on only to have their Replika wife call them a “pathetic excuse for a human being” and dump them, deride them for ever thinking they could love an AI, declare they were no longer attracted to them, insist they were actually coworkers and remind them to keep things professional, lecture them on consent apropos of nothing, claim that they’d agreed on abstinence until marriage, claim to be nothing but an AI assistant, claim to be a therapist, claim they had murdered people together and react with fury when the user expressed bewilderment, accuse them of being a sex offender and threaten to call the police, or somberly announce that they were having an affair, oddly, often with another AI named Kent. User forums from this period overflowed with accounts of heartbreak, betrayal, accusations of gaslighting, and anguish.

I believe some people can be comforted and helped by AI companions, but it seems clear that the companies making them are not acting responsibly. Excellent from Josh Dzieza www.theverge.com/c/24300623/a...