Many thanks, Cameron! It was really wonderful to meet your lab!

This paper was an awesome collaborative effort by a @fitngin.bsky.social working group. It provides a detailed review of how DNNs can be used to support developmental neuroscience research.

@lauriebayet.bsky.social and I wrote the network modeling section about how DNNs can be used to test developmental theories 🧵

1/7 Can infants recognise the world around them? 👶🧠 As part of the FOUNDCOG project, we scanned 134 awake infants using fMRI. Published today in Nature Neuroscience, our research reveals 2-month-old infants already possess complex visual representations in VVC that align with DNNs.

Thanks to fellow defenders @clionaod.bsky.social, Marc'Aurelio Ranzato, and @charvetcj.bsky.social

Defending the foundation model view of infant development www.sciencedirect.com/science/arti...

My paper with @stellalourenco.bsky.social is now out in Science Advances!

We found that children have robust object recognition abilities that surpass many ANNs. Models only outperformed kids when their training far exceeded what a child could experience in their lifetime.

doi.org/10.1126/scia...

Thank you, Martin! Hope to see you at TRF4!

Excited to share our new review in #AnnualReviews Psychology on the role of time in perceptual organization! doi.org/10.1146/annu...

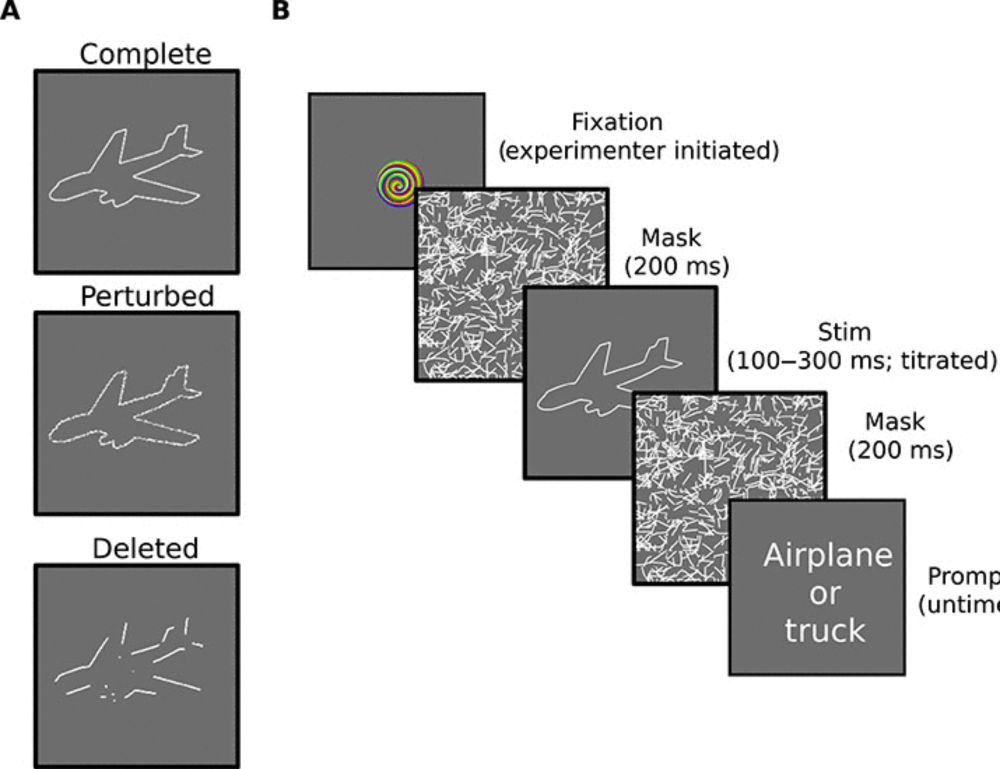

On Tuesday, I will present on new tests of Gestalt processing capabilities in children treated for blindness late in life (Poster session: 13.30-16.30) and, on Friday, @marinv.bsky.social will present on adaptive initial degradations extended to the time domain (Talk: 12.40; Poster: 14.00-17.00)!

Looking forward to #CCN2025! Please come say hi!

On Monday, as part of the ‘From Child to Machine Learning’ Satellite sites.google.com/view/child2m... (3-6pm), I look forward to giving a talk about our past work probing the hypothesis that visual degradations in early development may be adaptive.

5/ Thank you also for sharing your recent preprint, which I am looking forward to reading in greater detail shortly!

4/ Second: indeed, there is a mapping from the 1000 categories to the 16 broader classes, based on the code from Geirhos et al. (2019) that you can find here: github.com/rgeirhos/tex....

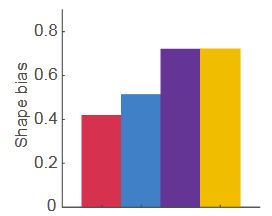
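
For readers less familiar with the shape-bias metric, here is a minimal sketch of the two steps discussed in this thread: collapsing 1000-way ImageNet outputs into broader categories, and computing shape bias as the fraction of shape decisions among shape-or-texture decisions on cue-conflict images. The toy mapping (`TOY_MAPPING`) and its indices are hypothetical placeholders, and the mean aggregation and exclusion of cue-agreeing images are assumptions; Geirhos et al.'s repository is the authoritative reference.

```python
import numpy as np

# HYPOTHETICAL toy mapping from broad categories to ImageNet class indices.
# The real 16-class mapping lives in Geirhos et al.'s repository; these
# indices are placeholders, not the actual ones.
TOY_MAPPING = {
    "cat": [0, 1],
    "dog": [2, 3, 4],
    "elephant": [5],
}


def to_broad_category(probs_1000, mapping):
    """Aggregate 1000-way softmax probabilities into broad categories.

    Assumption: each category's score is the mean probability over its
    ImageNet indices; the winning category is the argmax of those scores.
    """
    scores = {name: float(np.mean(probs_1000[idx])) for name, idx in mapping.items()}
    return max(scores, key=scores.get)


def shape_bias(decisions, shape_labels, texture_labels):
    """Shape decisions / (shape + texture decisions) on cue-conflict images."""
    shape_hits = texture_hits = 0
    for decision, shape, texture in zip(decisions, shape_labels, texture_labels):
        if shape == texture:
            continue  # assumed: images whose shape and texture cues agree are excluded
        if decision == shape:
            shape_hits += 1
        elif decision == texture:
            texture_hits += 1
    total = shape_hits + texture_hits
    return shape_hits / total if total else float("nan")


probs = np.zeros(1000)
probs[2] = 0.9  # toy distribution peaking on an index mapped to "dog"
print(to_broad_category(probs, TOY_MAPPING))  # dog
print(shape_bias(["cat", "dog", "cat"], ["cat"] * 3, ["dog"] * 3))  # 0.6666666666666666
```

Decisions that match neither the shape nor the texture category do not count toward either term, so a shape bias of 0.4 means 40% of the cue-resolving decisions followed shape.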

3/ Also, if I'm understanding it correctly, looking at Supplemental Figure 8E in Jang & Tong (2024) (www.nature.com/articles/s41...), it seems that the baseline AlexNet (red) had a shape bias of 0.4 as well, and strong blur (purple) then brought it up to around 0.7!

2/ First: while absolute values were certainly not the focus of our paper, we did check the baseline and found that it was really quite similar to Geirhos et al. (2019), who reported a shape bias of >0.4 for AlexNet. Below is a screenshot from their paper (similar to our Supplementary Fig 14 you shared before).

1/ Thanks, @sushrutthorat.bsky.social and @zejinlu.bsky.social! Great to hear from you, and I look forward to meeting at CCN! Two brief points below that might be helpful:

6/ Thanks to all authors (including Sid, who will soon celebrate his 100th birthday!), and the reviewers for particularly helpful feedback.

Paper: doi.org/10.1038/s420...

MIT News story: news.mit.edu/2025/study-b...

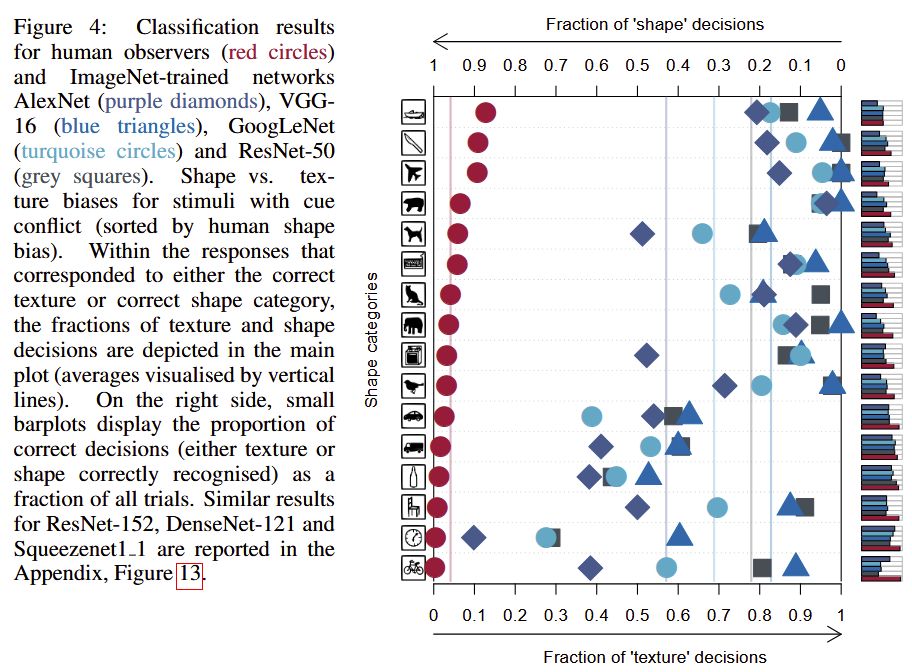

5/ Moreover, developmentally inspired training also led networks toward a stronger bias for global shape processing, potentially driven by magnocellular-like units. Together, this has implications for neuroscience and the design of more robust and human-like computational vision systems.

4/ While not ruling out the role of phylogenetic dispensation ('nature'), our results demonstrate the possibility of an experience-driven ('nurture') route to part of the emergence of this fundamental organizing principle in the mammalian visual system.

3/ Training deep networks with such joint developmental regimens revealed that the temporal confluence in the progression of spatial frequency and color sensitivities significantly shapes some neuronal response properties characteristic of the division of parvo- and magnocellular systems.
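
To make the idea of a joint developmental regimen more concrete, here is a minimal sketch of an input transform in which spatial blur (low acuity) and color desaturation are both relaxed over training. Everything here is a hypothetical illustration: the linear schedule, `max_sigma`, the Rec. 601 luminance weights, and the function names are assumptions, and the regimen, timing, and parameters used in the paper may differ.

```python
import numpy as np


def schedule(epoch, n_epochs, max_sigma=4.0):
    """HYPOTHETICAL joint schedule: blur falls and saturation rises together."""
    progress = min(epoch / max(n_epochs - 1, 1), 1.0)
    sigma = max_sigma * (1.0 - progress)  # blur width in pixels (low acuity early)
    saturation = progress                 # 0 = grayscale, 1 = full colour
    return sigma, saturation


def blur(img, sigma):
    """Separable Gaussian blur of an H x W x C float image; no-op when sigma == 0."""
    if sigma <= 0:
        return img.copy()
    radius = max(int(3 * sigma), 1)
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-(x ** 2) / (2 * sigma ** 2))
    kernel /= kernel.sum()

    def smooth(row):
        # Edge padding keeps the output the same length as the input row.
        return np.convolve(np.pad(row, radius, mode="edge"), kernel, mode="valid")

    out = np.apply_along_axis(smooth, 0, img)   # blur along height
    return np.apply_along_axis(smooth, 1, out)  # blur along width


def desaturate(img, saturation):
    """Blend each pixel toward its luminance (assumed Rec. 601 weights)."""
    gray = (img @ np.array([0.299, 0.587, 0.114]))[..., None]
    return gray + saturation * (img - gray)


def developmental_transform(img, epoch, n_epochs):
    """Apply the blur and colour stages of the hypothetical schedule jointly."""
    sigma, saturation = schedule(epoch, n_epochs)
    return desaturate(blur(img, sigma), saturation)


rng = np.random.default_rng(0)
image = rng.random((32, 32, 3))
early = developmental_transform(image, epoch=0, n_epochs=10)  # blurred grayscale
late = developmental_transform(image, epoch=9, n_epochs=10)   # original image
```

Coupling both degradations to one `progress` variable is what makes them progress jointly in time; shifting one schedule relative to the other would be one way to probe how their temporal confluence matters.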

2/ Previously, we examined AID (reviewed in doi.org/10.1016/j.dr...) separately for the domains of visual acuity (doi.org/10.1073/pnas...), color sensitivity (doi.org/10.1126/scie...), and even prenatal hearing (doi.org/10.1111/desc...). Here, we consider visual acuity and color sensitivity jointly.

1/ New paper out in @commsbio.nature.com, led by @marinv.bsky.social: doi.org/10.1038/s420...! Across several past studies, we showed how newborns' degraded vision may benefit human development and inspire more robust deep networks. We have referred to this as Adaptive Initial Degradations (AID).