Big thank you to my great co-authors @wolfstammer.bsky.social @hshindo.bsky.social Lukas Helff @devendradhami.bsky.social @kerstingaiml.bsky.social !

Big thank you to my great co-authors @wolfstammer.bsky.social @hshindo.bsky.social Lukas Helff @devendradhami.bsky.social @kerstingaiml.bsky.social !

Excited to share that our paper "Synthesizing Visual Concepts as Vision-Language Programs" has been accepted to #CVPR2026! 🎉

We propose a novel method that combines VLMs with symbolic program synthesis to learn reliable programs of visual concepts.

🌐 ml-research.github.io/vision-langu...

📣 Call for Contributions: Do you have interesting work to share? We invite you to submit your abstract for our poster session featuring innovative projects in this exciting field: eveeno.com/342278190

We are pleased to announce a one-day symposium on AI and Creativity in Darmstadt! Join us for an inspiring lineup of speakers and a full day dedicated to exploring creativity in modern machine learning models and the relationship between biological and artificial creation. 🎨🤖

Super excited that our recent work got featured in the Abstract Synthesis podcast! 🚀

I joined Brian to discuss inductive reasoning in vision and how we can combine Vision-Language Models with Program Synthesis to enable more reliable and interpretable reasoning 💡

Podcast: youtu.be/uefqvsButp8?...

Thanks! The functions (like exist_object, get_objects) are predefined, however the symbols like "round" in this case are discovered by the VLM. By that we get an expressive DSL that still can adapt to the tasks at hand

Thank you, very happy to hear that! I think there are definitely merits in combining the best of both worlds, that should not be overshadowed by the current focus on LLMs. Happy to discuss the topic!

2 Dec

📌 Poster: Vision-Language Programs at WIML Workshop

🕕 6:00 pm to 9:00 pm

4 Dec

📌 Poster: Object-Centric Concept-Bottleneck with David Steinmann

🕟 4:30 pm to 7:30 pm

7 Dec

🎤 Oral: Vision-Language Programs at 01:30 pm

📌 Poster: 4:05 pm to 5:00 pm

After an amazing time in LA and Joshua Tree Park, I’m excited to head to NeurIPS next week. My colleagues and I will be presenting some of our recent work (see below).

Looking forward to connecting and starting new conversations. Feel free to reach out if you want to chat! 💬

Check out the work here: ml-research.github.io/vision-langu...

Work together with my great co-authors @wolfstammer.bsky.social, Hikaru Shindo, Lukas Helff, @devendradhami.bsky.social , @kerstingaiml.bsky.social 💫

With VLP, we introduce VLM functions as a perceptual interface and combine them with symbolic operators. By that, VLP can discover concise concepts in the form of functional programs that faithfully follow the few-shot image examples.

Instead of letting the VLM do all the reasoning in natural language, what if we only use it for perception, and then let a symbolic program do the reasoning on top of that? 💡

Problem: Vision-language models are great at visual recognition, but often fail at faithful visual reasoning.

They can output rules that sound plausible but violate the task constraints or contradict the images.

🚨 New paper alert!

We introduce Vision-Language Programs (VLP), a neuro-symbolic framework that combines the perceptual power of VLMs with program synthesis for robust visual reasoning.

Unfortunately, our submission to #NeurIPS didn’t go through with (5,4,4,3). But because I think it’s an excellent paper, I decided to share it anyway.

We show how to efficiently apply Bayesian learning in VLMs, improve calibration, and do active learning. Cool stuff!

📝 arxiv.org/abs/2412.06014

And last but not least: the spirals are still spinning, each in their own direction 🌀

💻 We also added a demo of the evaluation to our GitHub repo! Check it out here: github.com/ml-research/...

📊 Updated results are also on our webpage!

Link: ml-research.github.io/bongard-in-w...

Curious to hear - should we evaluate other models too? 🤖

🔎 Importantly, Task 2 continues to expose inconsistencies between the solved problems in Task 1 (64) and the problems where the model can correctly classify the individual images of the problem (only 34), given the gt options (Task 2).

🤔 Surprisingly, even some easy problems like BP8 remain unsolved…

Can the new GPT-5 model finally solve Bongard Problems? 👉Not quite yet!

Using our ICML Bongard in Wonderland setup, it solved 64/100 problems - the best score so far! 📈

However, some issues still persist ⬇️

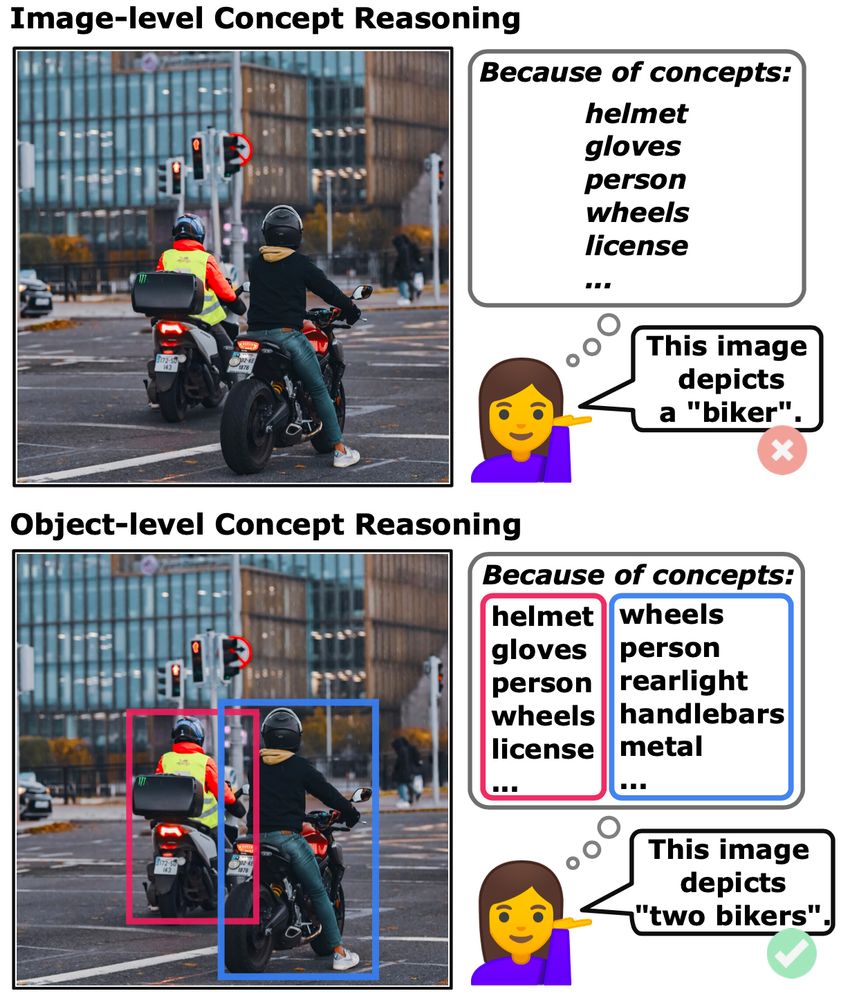

Can concept-based models handle complex, object-rich images? We think so! Meet Object-Centric Concept Bottlenecks (OCB) — adding object-awareness to interpretable AI. Led by David Steinmann w/ @toniwuest.bsky.social & @kerstingaiml.bsky.social .

📄 arxiv.org/abs/2505.244...

#AI #XAI #NeSy #CBM #ML

I'll be at #ICML2025 next week presenting our recent work on VLMs and Bongard Problems! Feel free to reach out, happy to have a chat ☺️

Work together with my amazing co-authors @philosotim.bsky.social

Lukas Helff @ingaibs.bsky.social @wolfstammer.bsky.social @devendradhami.bsky.social @c-rothkopf.bsky.social @kerstingaiml.bsky.social ! ✨

We also identified 10 particularly challenging Bongard Problems that none of the models could solve under any setting. The challenge remains wide open!

3 examples of the challenging BPs:

Interestingly, success in solving the BPs (Open Question) doesn't translate to correctly categorizing individual images 👉 the sets of BPs solved in each task are not the same!

This suggests that getting the right final answer doesn’t always mean genuine understanding 🤔

Our evaluation shows the top-performing model (o1) solved 43 out of 100 problems, with the others trailing far behind. There’s still a long way to go for current AI models!

Excited to share that our paper got accepted at #ICML2025!! 🎉

We challenge Vision-Language Models like OpenAI’s o1 with Bongard problems, classic visual reasoning challenges and uncover surprising shortcomings.

Check out the paper: arxiv.org/abs/2410.19546

& read more below 👇