In the meantime, here's a rule of thumb: "if your project can be vibecoded in an hour, and amounts to O(10) LoC edits on something existing, or is a convergence proof that o4-mini can do with a bit of guidance, DO NOT write a paper about it" :D

I think that the most bulletproof peer review we currently have is "people will read/try your stuff, and if it works they build on it". But because it's not attached to a formal process on OpenReview, we discard it as being non-scientific.

It seems to me that this is totally misaligned with scientific discovery and progress. I don't believe this is a result of bad actors btw. It's just that huge and complex systems that are O(100) years old take a long time to change and readjust to new realities. We'll eventually figure it out.

It seems to me that it's mostly ML academia (i am part of it!) that wants to keep peer review and mega ML conferences going & the bean counter running. We've not found a solution to reviews converging to random coin tosses, at a huge expense of human work hours.

If that's indeed the case (i believe we can measure that), and their key function is social, and a way for people to connect (that's great!), what's the point of having peer review, and using # neurips papers as a bean counter?

My post is a direct criticism of the 100k NeurIPS submissions issue. It's beyond clear that research dissemination, for the most part, does not happen through conferences any more.

What if for most of your findings you just post a thread and share a GitHub repo, rather than submitting a 15 page NeurIPS paper with < 1/100 the reach?

LLMs learn world models, beyond a reasonable doubt. It's been the case since GPT-3, but now it should be even more clear. Without them "Guess and Check" would not work.

The fact that these "world models" are approximate/incomplete does not disqualify them.

Working on the yapping part :)

hmm.. temp has to be 0.6-0.8, this looks like very low temp outputs

I don’t see at all how this is intellectually close to what Shannon wrote. Can you clarify? I read it as computing statistics and how these are compatible with theoretical conjectures. There’s no language generation implicit in the article. Am I misreading it?

can you share the paper?

BTW for historical context, 1948 is very, very, very early to have these thoughts. So i actually think that every single sentence written is profound. This is kinda random, but here is how Greece looked back then. IT WAS SO EARLY :) x.com/DimitrisPapa...

it's not that profound. it just says, there's no wall, if all stars are aligned. it's an optimistic read of the setting.

Is 1948 widely acknowledged as the birth of language models and tokenizers?

In "A Mathematical Theory of Communication", almost as an afterthought, Shannon suggests the N-gram for generating English, and that word-level tokenization is better than character-level tokenization.
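For readers who haven't seen it, here is a minimal sketch of that idea: count which token follows each context of n-1 tokens, then sample from those counts. The function names, the toy sentence, and the choice of n are my own assumptions for illustration, not anything taken from Shannon's paper. It assumes n >= 2.

```python
import random
from collections import defaultdict

def build_ngram(tokens, n):
    """Map each (n-1)-token context to the list of tokens that followed it."""
    table = defaultdict(list)
    for i in range(len(tokens) - n + 1):
        context = tuple(tokens[i:i + n - 1])
        table[context].append(tokens[i + n - 1])
    return table

def generate(table, start, length, rng):
    """Sample up to `length` tokens, conditioning on the last len(start) tokens."""
    out = list(start)
    k = len(start)  # context size, i.e. n - 1 (must be >= 1)
    for _ in range(length):
        followers = table.get(tuple(out[-k:]))
        if not followers:  # context never seen in the corpus: stop early
            break
        out.append(rng.choice(followers))
    return out

text = "the cat sat on the mat and the cat ran"
rng = random.Random(0)

# Word-level bigram: tokens are whole words.
words = text.split()
word_table = build_ngram(words, 2)
print(" ".join(generate(word_table, ("the",), 8, rng)))

# Character-level trigram: tokens are single characters.
chars = list(text)
char_table = build_ngram(chars, 3)
print("".join(generate(char_table, ("t", "h"), 20, rng)))
```

The only difference between the two runs is how the text is split into tokens, which is the tokenization choice the post is pointing at.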

🎉The Phi-4 reasoning models have landed on HF and Azure AI Foundry. The new models are competitive and often outperform much larger frontier models. It is exciting to see the reasoning capabilities extend to more domains beyond math, including algorithmic reasoning, calendar planning, and coding.

I am afraid to report, RL works.

I think 2-3 years ago, I said I will not work on two ML sub-areas. RL was one of them. I am happy to say that I am not strongly attached to my beliefs.

Re: The Chatbot Arena Illusion

Every eval chokes under hill climbing. If we're lucky, there’s an early phase where *real* learning (both model and community) can occur. I'd argue that a benchmark’s value lies entirely in that window. So the real question is what did we learn?

Also, a sycophant etymologically means "the one who shows the figs"; the origin of the meaning is kinda debated: it either refers to illegally importing figs, or to falsely accusing someone of hiding illegally imported figs.

Fun trivia now that “sycophant” has become common language to describe LLMs flattering users:

In Greek, συκοφάντης (sykophántēs) most typically refers to a malicious slanderer, someone spreading lies, not flattery!

Every time you use it, you’re technically using it wrong :D

Come work with us at MSR AI Frontiers and help us figure out reasoning!

We're hiring at the Senior Researcher level (e.g., post-PhD).

Please drop me a DM if you do!

jobs.careers.microsoft.com/us/en/job/17...

bsky doesn't like GIFs, here they are from the other site x.com/DimitrisPapa...

Super proud of this work that was led by Nayoung Lee and Jack Cai, with mentorship from Avi Schwarzschild and Kangwook Lee

link to our paper: arxiv.org/abs/2502.01612

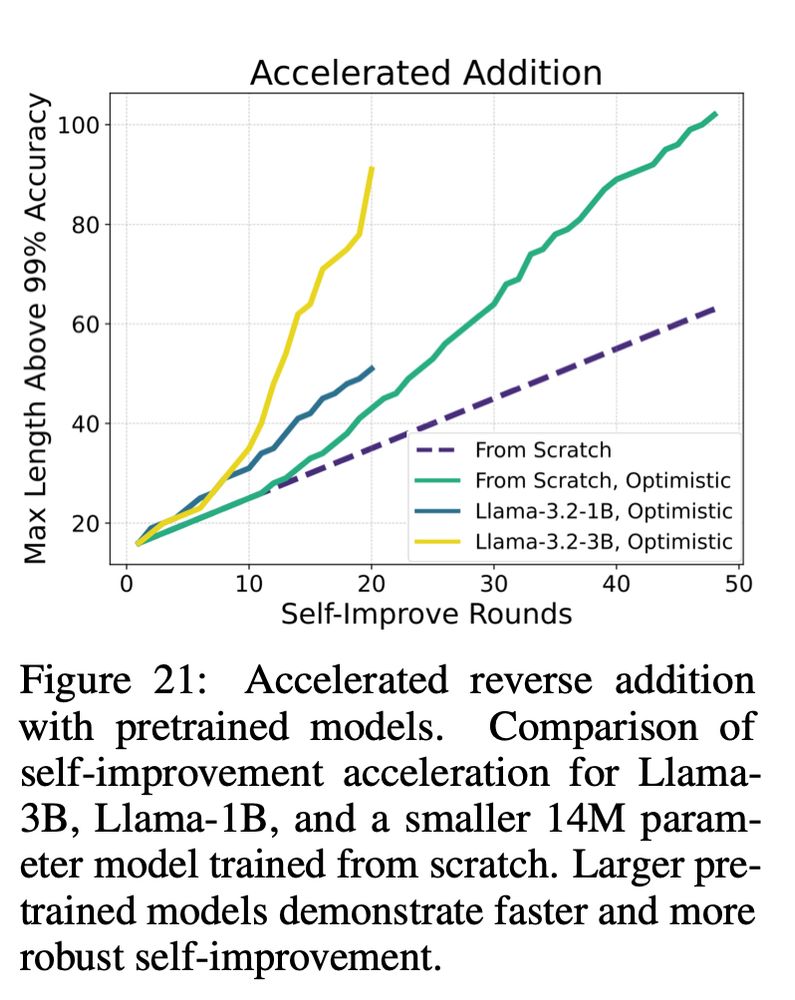

Oh btw, self-improvement can become exponentially faster in some settings, or when we apply it on pretrained models (again this is all for add/mul/maze etc)

An important aspect of the method is that you need to

1) generate problems of appropriate hardness

2) be able to filter out negative examples using a cheap verifier.

Otherwise the benefit of self-improvement collapses.
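To make "cheap verifier" concrete, here is a small sketch for addition. It is an illustration under my own assumptions and not the filter used in the paper: `cheap_check` and `filter_examples` are hypothetical names, and the checks (answer length plus casting out nines) are necessary but not sufficient, so some wrong labels still slip through.

```python
def cheap_check(a: int, b: int, answer: int) -> bool:
    """Necessary (not sufficient) conditions for answer == a + b, non-negative ints."""
    # Length check: the sum has as many digits as the larger operand, or one more.
    n = len(str(max(a, b)))
    if len(str(answer)) not in (n, n + 1):
        return False
    # Casting out nines: (a + b) mod 9 must match answer mod 9.
    return (a % 9 + b % 9) % 9 == answer % 9

def filter_examples(examples):
    """Keep only the self-labeled (a, b, answer) triples that pass the cheap check."""
    return [ex for ex in examples if cheap_check(*ex)]

labeled = [
    (123, 456, 579),    # correct, kept
    (123, 456, 580),    # off by one, rejected by the mod-9 check
    (999, 1, 10000),    # too long, rejected by the length check
    (123, 456, 597),    # wrong (digits swapped) but passes: the check is not exact
]
print(filter_examples(labeled))
```

The point of the sketch is the trade-off: the check costs almost nothing per example, and it only has to remove enough bad labels that the next round of training is not poisoned.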

We test self-improvement across diverse algorithmic tasks:

- Arithmetic: Reverse addition, forward (yes forward!) addition, multiplication (with CoT)

- String Manipulation: Copying, reversing

- Maze Solving: Finding shortest paths in graphs.

It always works

Self-improvement is not new—this idea has been explored in various contexts and domains (like reasoning, mathematics, coding, and more).

Our results suggest that self-improvement is a general and scalable solution to length & difficulty generalization!

What if we leverage this?

What if we let the model label slightly harder data… and then train on them?

Our key idea is to use Self-Improving Transformers, where a model iteratively labels its own training data and learns from progressively harder examples (inspired by methods like STaR and ReST).
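The loop can be sketched in a few lines. This is a toy simulation under loud assumptions, not our training code: `ToyModel` is a hypothetical stand-in for a transformer (exact up to `skill` digits, noisy one digit beyond, wrong after that), "training" just bumps `skill`, and the verifier cheats by using ground truth to keep the example short.

```python
import random

def make_problems(n_digits, count, rng):
    """Sample addition problems whose operands have exactly n_digits digits."""
    lo, hi = 10 ** (n_digits - 1), 10 ** n_digits - 1
    return [(rng.randint(lo, hi), rng.randint(lo, hi)) for _ in range(count)]

class ToyModel:
    """Hypothetical stand-in for a trained model, not a real transformer."""
    def __init__(self, skill, error_rate=0.3):
        self.skill = skill            # longest operand length solved reliably
        self.error_rate = error_rate  # failure rate one digit beyond `skill`

    def label(self, a, b, rng):
        n = len(str(max(a, b)))
        if n <= self.skill:
            return a + b
        if n == self.skill + 1 and rng.random() > self.error_rate:
            return a + b              # slightly harder problem, solved anyway
        return a + b + rng.randint(1, 9)  # always a wrong answer

    def train(self, examples):
        # Stand-in for fine-tuning: skill rises to the longest operand seen.
        longest = max(len(str(max(a, b))) for a, b, _ in examples)
        self.skill = max(self.skill, longest)

def verifier(a, b, answer):
    # Assumption: an exact check for simplicity; a real one would be cheaper and weaker.
    return answer == a + b

def self_improve(model, rounds, per_round=200, seed=0):
    rng = random.Random(seed)
    for _ in range(rounds):
        target = model.skill + 1                       # slightly harder data
        problems = make_problems(target, per_round, rng)
        labeled = [(a, b, model.label(a, b, rng)) for a, b in problems]
        kept = [ex for ex in labeled if verifier(*ex)]  # drop bad self-labels
        if kept:
            model.train(kept)                          # learn from own labels
    return model

model = self_improve(ToyModel(skill=3), rounds=5)
print(model.skill)
```

Each round only asks for problems one digit longer than what the model already handles, which is the "appropriate hardness" part: jump further and the self-generated labels would be all wrong, so nothing would survive the filter.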

I was kind of done with length gen, but then I took a closer look at that figure above..

I noticed that there is a bit of transcendence, i.e., the model trained on n-digit ADD can solve slightly harder problems, e.g., n+1, but not much more.

(cc on transcendence and chess arxiv.org/html/2406.11741v1)