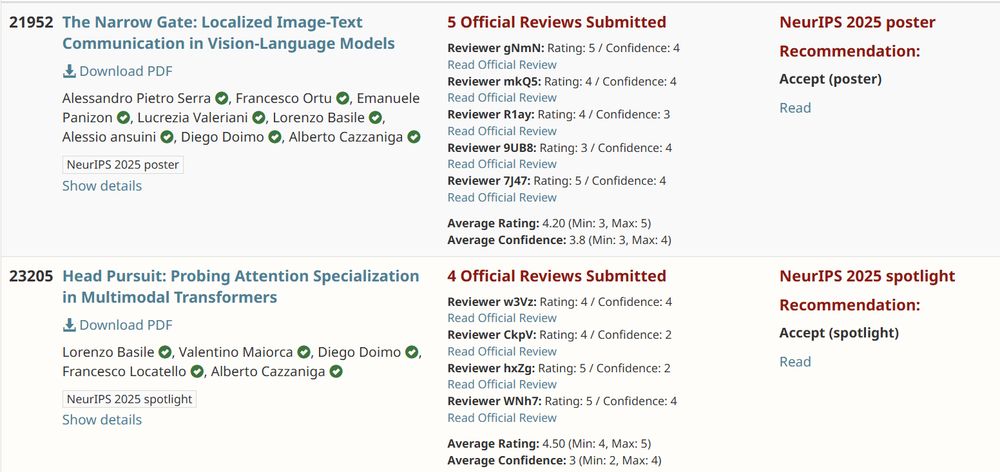

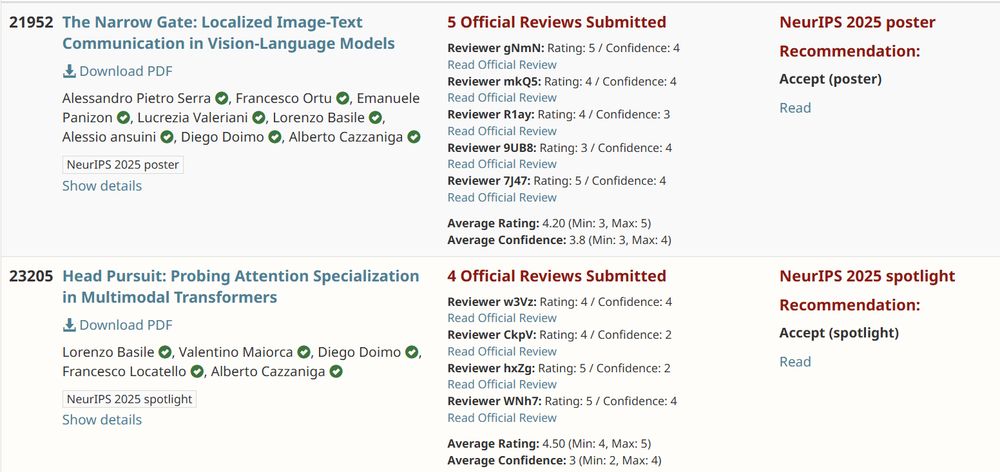

Excited to share that 2/2 papers from our Lab @AreaSciencePark were accepted to #NeurIPS2025 (one spotlight 🎉)

Great work everyone!

@alexpietroserra.bsky.social @francescortu.bsky.social @lbasile.bsky.social @lvaleriani.bsky.social @diegodoimo.bsky.social @maiorca.xyz @locatelf.bsky.social

22.09.2025 08:55

👍 7

🔁 2

💬 0

📌 0

Nice start to @neuripsconf.bsky.social!

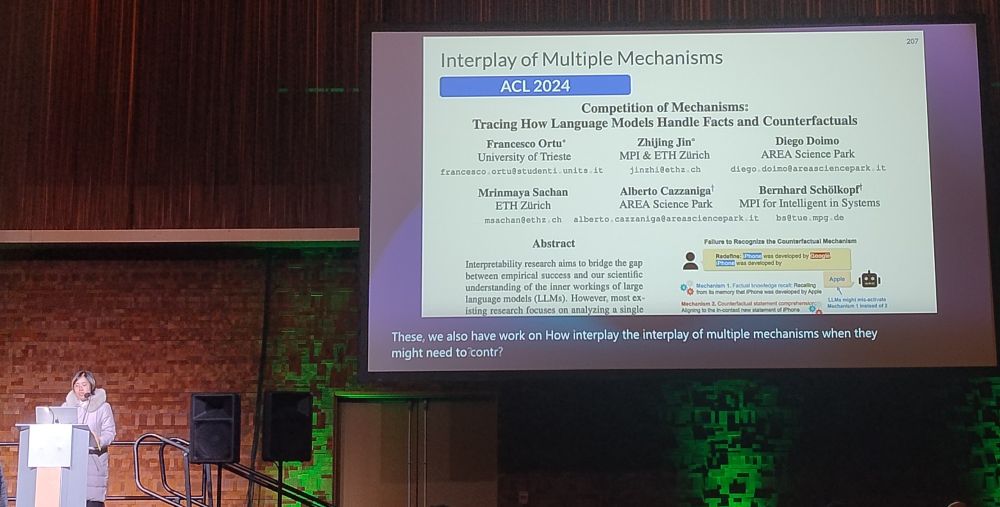

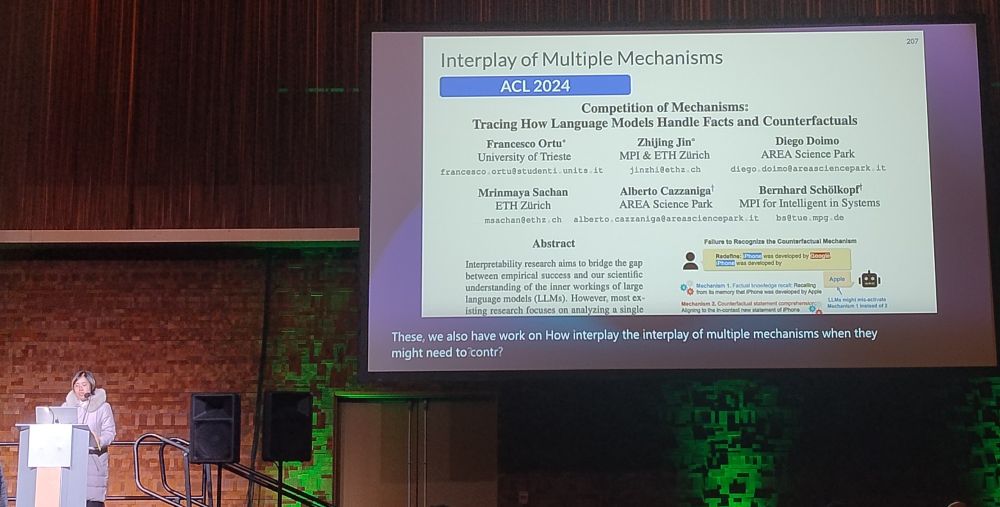

Our work with @francescortu.bsky.social and @diegodoimo.bsky.social on the Competition of Mechanisms to understand counterfactuality in LLMs was featured in the "Causality for LLMs" workshop :-)

Check out our ACL2024 paper aclanthology.org/2024.acl-long.…

10.12.2024 20:19

👍 9

🔁 1

💬 0

📌 0

Thanks again, @diegodoimo.bsky.social and @albecazzaniga.bsky.social, for the fantastic mentorship and support! 🙏🎉 They are also attending #NeurIPS, so feel free to reach out to them to discuss our results. I’m excited to keep pushing forward on these topics! 🚀

10.12.2024 20:10

👍 1

🔁 0

💬 0

📌 0

Thanks to the amazing team at LADE @areasciencepark: @lvaleriani.bsky.social @lbasile.bsky.social @AlessioAnsuini @diegodoimo.bsky.social @albecazzaniga.bsky.social 🙏

10.12.2024 20:10

👍 2

🔁 0

💬 1

📌 0

It was super fun to take our first step in interpreting multimodal LLMs, working closely with the brilliant @alexpietroserra.bsky.social and @EmanuelePanizon.

10.12.2024 20:10

👍 0

🔁 0

💬 1

📌 0

✅ This shows that, starting from the mid-layers, a single token effectively summarizes all 1024 image tokens!

❌ This does not occur in models fine-tuned for visual understanding (such as Pixtral).

10.12.2024 20:10

👍 1

🔁 0

💬 1

📌 0

Additionally, blocking communication from this token significantly disrupts performance on standard benchmarks, while blocking image-text communication does not.

10.12.2024 20:10

👍 1

🔁 0

💬 1

📌 0
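
For readers who want a concrete picture of the blocking experiment mentioned above, here is a minimal sketch. It assumes that "blocking communication" means masking attention edges so that text positions cannot attend to certain keys, and it assumes a token layout of image tokens, then `<end-of-image>`, then text tokens. The function names (`build_mask`, `attention_weights`) and the `block` option strings are hypothetical and are not taken from the paper's code.

```python
import math

def build_mask(n_image, n_text, block=None):
    """Return allow[q][k]: True if query position q may attend to key position k.

    Assumed layout: [image tokens..., <end-of-image>, text tokens...].
    """
    eoi = n_image                      # index of the <end-of-image> token
    n = n_image + 1 + n_text
    # Start from a standard causal mask: each position sees itself and the past.
    allow = [[k <= q for k in range(n)] for q in range(n)]
    for q in range(eoi + 1, n):        # only text queries are modified
        if block == "eoi_to_text":
            allow[q][eoi] = False      # text can no longer read <end-of-image>
        elif block == "image_to_text":
            for k in range(n_image):
                allow[q][k] = False    # text can no longer read image tokens
    return allow

def attention_weights(scores, allow):
    """Row-wise softmax over allowed keys; blocked keys get weight 0."""
    weights = []
    for row_scores, row_allow in zip(scores, allow):
        m = max(s for s, a in zip(row_scores, row_allow) if a)
        exps = [math.exp(s - m) if a else 0.0
                for s, a in zip(row_scores, row_allow)]
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights

# Toy example: 4 image tokens, 3 text tokens, uniform attention scores.
n_image, n_text = 4, 3
n = n_image + 1 + n_text
scores = [[0.0] * n for _ in range(n)]
blocked_eoi = attention_weights(scores, build_mask(n_image, n_text, "eoi_to_text"))
blocked_img = attention_weights(scores, build_mask(n_image, n_text, "image_to_text"))
```

In a real model the same mask would be applied inside every attention layer (or from a chosen layer onward) before re-running the benchmark; this toy version only shows which attention edges each ablation removes.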

🎯 Key finding: In these models, the hidden representations of images and text form disjoint clusters, and the communication between modalities is mediated by the special token <end-of-image>!

10.12.2024 20:10

👍 1

🔁 0

💬 1

📌 0

🌐 Check out our code and data at: ritareasciencepark.github.io/Narrow-gate

10.12.2024 20:10

👍 0

🔁 0

💬 1

📌 0

🚨 🚨 Excited to share our latest paper, now on #arXiv!

🖼️ We studied how unified VLMs, trained to generate both text and images (e.g., Meta's Chameleon), exchange information between modalities, comparing them to standard VLMs.

📄 Paper: arxiv.org/abs/2412.06646

Deep dive: 👇

10.12.2024 20:10

👍 11

🔁 2

💬 1

📌 3

Even as an interpretable ML researcher, I wasn't sure what to make of Mechanistic Interpretability, which seemed to come out of nowhere not too long ago.

But then I found the paper "Mechanistic?" by @nsaphra.bsky.social and @sarah-nlp.bsky.social, which clarified things.

20.11.2024 08:00

👍 230

🔁 26

💬 7

📌 2

Thanks for creating the starter pack! I'd love to be added as well! 😊

20.11.2024 10:41

👍 2

🔁 0

💬 0

📌 0