🚀 Visit our #NeurIPS posters at @neuripsconf.bsky.social!

Meet and interact with our authors at all locations — San Diego, Mexico City, and Copenhagen.

Details in the thread.

👇👇👇

Pleased to share new work with @sflippl.bsky.social @eberleoliver.bsky.social @thomasmcgee.bsky.social & undergrad interns at the Institute for Pure and Applied Mathematics, UCLA.

Algorithmic Primitives and Compositional Geometry of Reasoning in Language Models

www.arxiv.org/pdf/2510.15987

🧵1/n

Very excited to receive this award and see this work at the intersection of AI and the Humanities recognized by the Heinz Billing Foundation of the @maxplanck.de!

Special thanks to @mpiwg.bsky.social, and M Valleriani and colleagues J Büttner & H El-Hajj, as well as KR Müller and G Montavon. 📜🤖

🥳 Super happy to have our work on multi-concept feature descriptions accepted at #NeurIPS2025!

📯 Come visit our #ICML25 Spotlight Poster and meet @taylorwwebb.bsky.social to discuss our work: "Toward an Algorithmic Evaluation and Understanding of Generative AI."

Paper: openreview.net/forum?id=eax...

Poster: icml.cc/media/Poster...

Our position paper on algorithmic explanations is out—excited to share it! 🙌

Proud of this collaborative effort toward a scientifically grounded understanding of generative AI.

@tuberlin.bsky.social @bifold.berlin @msftresearch.bsky.social @UCSD & @UCLA

🚨 New preprint! Excited to share our work on extracting and evaluating the potentially many feature descriptions of language models.

👉 arxiv.org/abs/2506.15538

THE NEVERENDING CURE – new exhibition by kennedy + swan

🗓 May 28 | 🕕 6PM | 📍 UNI_VERSUM, TU Berlin

Art + AI + Snacks = A night not to miss!

With intros by BIFOLD scientists + artists.

www.bifold.berlin/news-events/...

#ArtOfEntanglement #ArtAndScience

@tuberlin.bsky.social

#ScheringStiftung

🖼️ At the Re-Align workshop, @tomneuhaeuser.bsky.social and I presented "Cat, Rat, Meow: On the Alignment of Language Model and Human Term-Similarity Judgments", joint work with Lenka Tětková and @eberleoliver.bsky.social.

📃 arxiv.org/abs/2504.07965

📜 🎉 Happy to announce our workshop "AI-based Methods for the Humanities"! Bringing together #ML and #Humanities researchers to discuss frontiers in #DigitalHumanities, #NLP, #XAI and more!

Hosted together with Matteo Valleriani and

@bifold.berlin @tuberlin.bsky.social @mpiwg.bsky.social

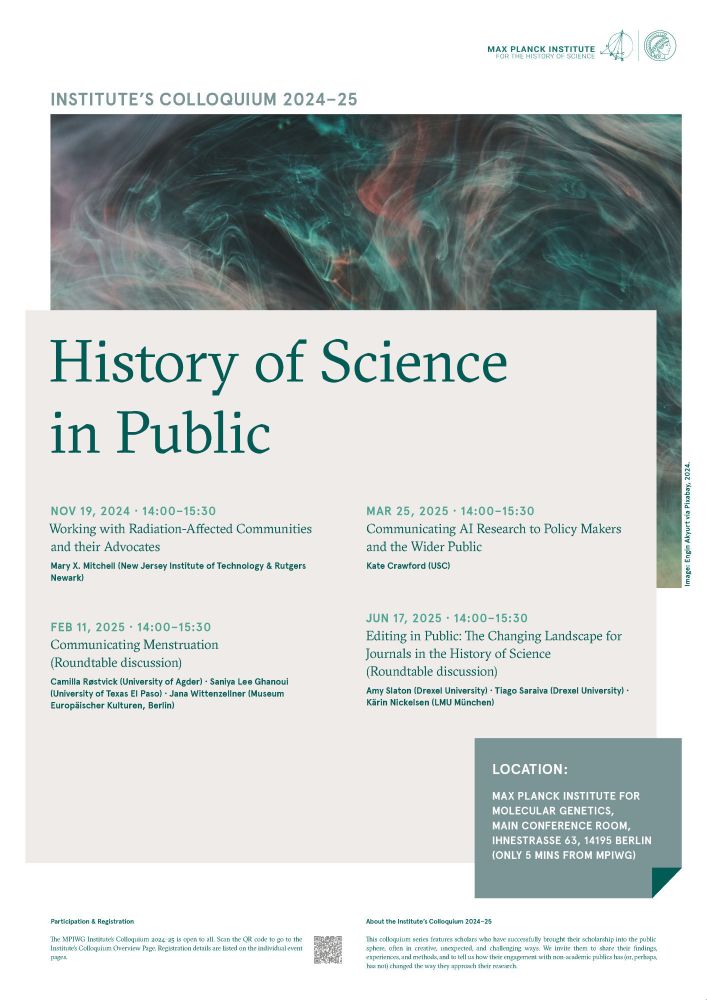

Poster showing the MPIWG Institute’s Colloquium 2024–25 program.

Next up in our Institute’s Colloquium Series “History of Science in Public”: Kate Crawford @katecrawford.bsky.social will speak on “Mapping #AI: How to See Planetary-Scale #ArtificialIntelligence.”

🗓️ Mar 25, 2025 (14:00 CET)

🔗 bitly.cx/m0KeS

📍 relocated: MPIWG Main conference room

#HistSci #SciComm

Thanks for the exchange, @tuberlin.bsky.social! Things remain exciting! 😉

Work with Jochen Büttner, Hassan El-Hajj, Grégoire Montavon, Klaus-Robert Müller, and Matteo Valleriani.

#ScienceAdvances

#DigitalHumanities

#HistSci

📜 History repeats itself: We investigated how early modern communities embraced scholarly advancements, reshaping their scientific views and exploring the roots of science amid a changing world.

www.science.org/doi/10.1126/...

@mpiwg.bsky.social @tuberlin.bsky.social @bifold.berlin @science.org

First #jobalert on Bluesky! Postdoc (or PhD position with a strong ML background) for a joint project with Grégoire Montavon’s lab at #BIFOLD #TU-Berlin.

The topic is explainable AI/ML for self-supervised LLMs in multi-/spatial omics and gene regulation.

www.jobs.tu-berlin.de/stellenaussc...

Here I gathered a complementary Explainable AI/Interpretability starter pack ;) Nice to see so many of us here now!

Welcome, you should be in it now :)

I am thrilled to share that our paper "Evaluating Webcam-based Gaze Data as an Alternative for Human Rationale Annotations" was accepted to LREC-COLING.

📜 arxiv.org/pdf/2402.19133…

We analyse low-cost eye-tracking data as an alternative to human rationale annotations when evaluating XAI methods.