BayesVLM provides reliable uncertainty for pretrained vision-language models without retraining or inference-time sampling. It improves zero-shot calibration, reduces overconfident errors under domain shift, and enables more sample-efficient active learning with negligible overhead.

12.02.2026 13:08

👍 1

🔁 0

💬 0

📌 0

BayesVLM places a Laplace posterior over the final projection layers and analytically propagates uncertainty to cosine similarities.

This avoids Monte Carlo sampling while enabling efficient uncertainty-aware inference and active learning.

12.02.2026 13:08

👍 1

🔁 0

💬 1

📌 0

We introduce BayesVLM, a training-free post-hoc Bayesian method for uncertainty estimation in pretrained VLMs.

BayesVLM yields interpretable, well-calibrated uncertainty with virtually no inference overhead.

12.02.2026 13:08

👍 2

🔁 2

💬 1

📌 0

[Paper]: arxiv.org/pdf/2412.06014

[Project]: aaltoml.github.io/BayesVLM/

[Code]: github.com/AaltoML/Baye...

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

3/

Post-hoc Probabilistic Vision-Language Models

@antonbaumann.bsky.social, @ruili-pml.bsky.social, @marcusklasson.bsky.social, @smentu.bsky.social, @shyamgopal.bsky.social, @zeynepakata.bsky.social, @arnosolin.bsky.social , @trappmartin.bsky.social

12.02.2026 13:08

👍 1

🔁 0

💬 1

📌 0

While models are largely robust, recovery is inefficient and doubt expression plays a crucial role in recovery. Models are also not style-invariant, and suppression of doubt in a reasoning trace can lead to performance degradation.

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

To evaluate this, we developed a method to alter the chain of thought of a reasoning model at certain fixed time steps of the reasoning process. We then modified the reasoning by introducing various interventions and evaluated how the models reacted to them.

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

Robust reasoning is becoming ever more important as we deploy LLMs in critical settings. But how robust is their ability to recover from noisy or incorrect reasoning steps? Which recovery mechanisms do they employ? And can we potentially make this recovery process more robust?

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

2/

Are Reasoning LLMS Robust to Interventions

on Their Chain-of-thought?

Alexander von Recum, @lgirrbach.bsky.social, @zeynepakata.bsky.social

[Paper]: arxiv.org/pdf/2602.07470

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

This establishes the first large-scale empirical link proving that dataset composition is a primary driver of model bias, and also creates the foundation to study complex dynamics like second-order bias transfer and amplification through model architecture.

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

We demonstrate that a simple linear fit predicts 60-70% of the gender bias found in CLIP and Stable Diffusion directly from co-occurrences in the training data .

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

We use an ensemble of MLLMs and custom classifiers to generate 276M+ person-centric annotations across the full dataset . 🦾 With these labels, we measured "dataset bias" via co-occurrence frequencies, correlating them with "model bias" in CLIP and Stable Diffusion to see if data predicts the model .

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

We set out to change that! By auditing the massive LAION-400M dataset, we finally enable researchers to empirically test how well dataset statistics actually predict downstream model behavior .

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

Do foundation models merely reflect the bias in their data, or do they amplify it? So far, the link between dataset imbalances and model bias has been an assumption rather than a measurement .

12.02.2026 13:08

👍 0

🔁 0

💬 1

📌 0

1/

Person-Centric Annotations of LAION-400M: Auditing Bias and Its Transfer to Models

@lgirrbach.bsky.social, Stephan Alaniz, Genevieve Smith,Trevor Darrell, @zeynepakata.bsky.social

[Paper]: arxiv.org/pdf/2510.03721?

12.02.2026 13:08

👍 1

🔁 0

💬 1

📌 0

🥳Happy to share that we have three papers accepted to #ICLR2026. Congrats to our authors and see you in Rio🌴🇧🇷. Check the thread for highlights👇

12.02.2026 13:08

👍 6

🔁 2

💬 1

📌 0

4/

Invited talk: The Asymmetry of Adaptation: Reverse-Engineering Multimodal In-Context Learning

Yiran Huang & @zeynepakata.bsky.social

📍[🇺🇸 NeurIPS CCFM Workshop]: Sunday, December 7th 2025, 8:15 AM - 9:00 AM, San Diego Convention Center, Upper Level Room 25ABC

03.12.2025 11:52

👍 0

🔁 0

💬 0

📌 0

3/

Concept-Guided Interpretability via Neural Chunking

Shuchen Wu , Stephan Alaniz , @shyamgopal.bsky.social , Peter Dayan, Eric Schulz, @zeynepakata.bsky.social

📍[🇺🇸NeurIPS]: Fri, Dec 5, 2025 • 11:00 AM – 2:00 PM PST, Exhibit Hall C,D,E #2113

03.12.2025 11:52

👍 0

🔁 0

💬 1

📌 0

2/

Noise Hypernetworks: Amortizing Test-Time Compute in Diffusion Models

@lucaeyring.bsky.social , @shyamgopal.bsky.social , Alexey Dosovitskiy, @natanielruiz.bsky.social , @zeynepakata.bsky.social

📍[🇺🇸NeurIPS]: Thu, Dec 4 • 11:00 AM – 2:00 PM PST, Exhibit Hall C,D,E #3605

03.12.2025 11:52

👍 0

🔁 0

💬 1

📌 0

1/

Sparse Autoencoders Learn Monosemantic Features in Vision-Language Models

Mateusz Pach, @shyamgopal.bsky.social , @qbouniot.bsky.social , Serge Belongie, @zeynepakata.bsky.social

📍[🇺🇸NeurIPS]: Wed, Dec 3 • 4:30 PM – 7:30 PM PST, Exhibit Hall C,D,E #1007

📍[🇩🇰EurIPS]: Thu, Dec 4, #98

03.12.2025 11:52

👍 5

🔁 1

💬 1

📌 0

Our EML members have arrived in beautiful San Diego for #NeurIPS2025! 🌴

We’re excited to share our latest research — three poster presentations and one workshop presentation.

Check out the thread below 👇 and come say hi to our authors!

03.12.2025 11:52

👍 4

🔁 0

💬 1

📌 1

🎓PhD application season is back!

We’re hiring ONLY through the ELLIS @ellis.eu and the MCML

@munichcenterml.bsky.social

📌Please denote Prof. Zeynep Akata @zeynepakata.bsky.social as your preferred supervisor!

👉 Link to ELLIS (ellis.eu/news/ellis-p...) and MCML (mcml.ai/opportunitie...)

24.10.2025 10:21

👍 5

🔁 1

💬 0

📌 1

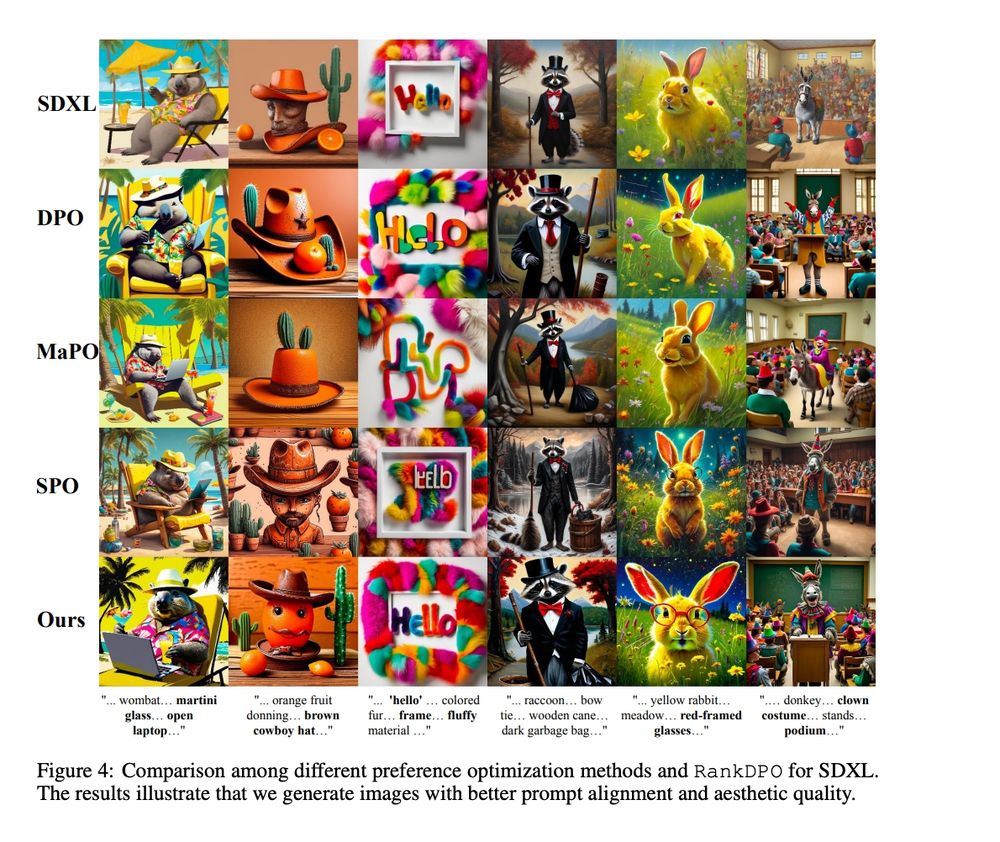

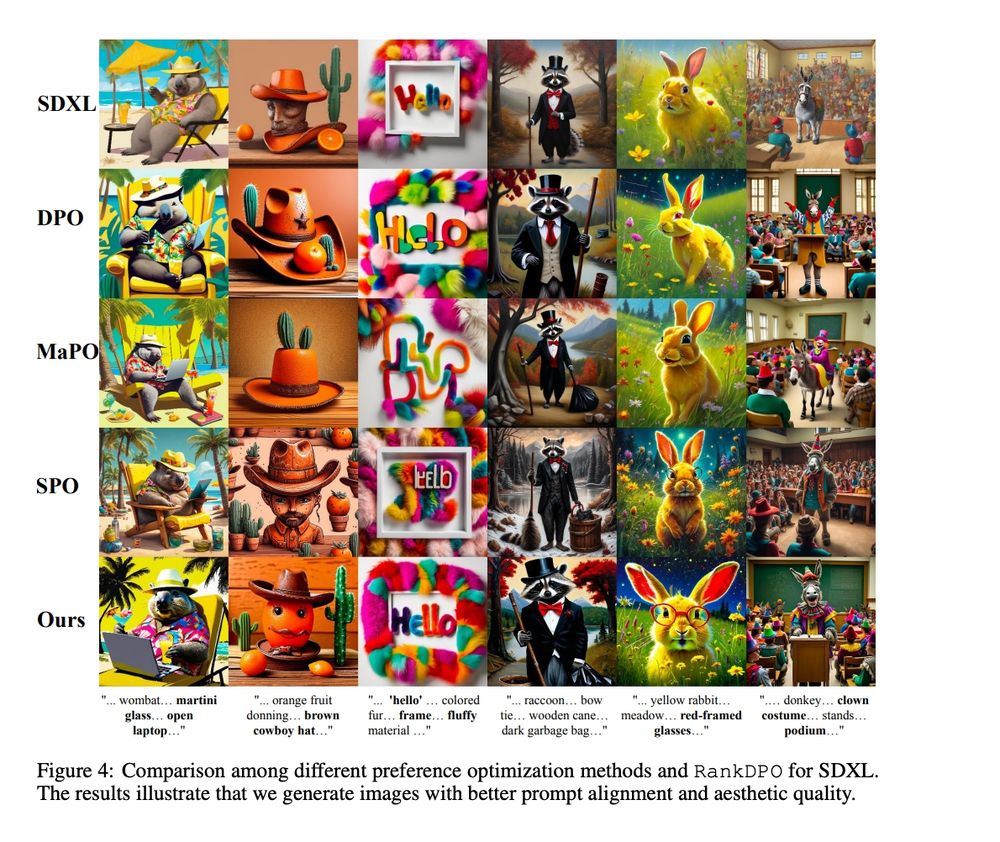

Results. GenEval: SDXL 0.55→0.61 (notable gains in two objects, counting, color attribution). T2I-CompBench: broad boosts (esp. Color/Texture). DPG-Bench (SDXL): DSG 74.65→79.26, Q-Align 0.72→0.81; user study: RankDPO wins over SDXL & DPO-SDXL.

20.10.2025 12:34

👍 1

🔁 0

💬 0

📌 0

We propose RankDPO—a listwise preference objective that weights pairwise denoising comparisons using DCG-style gains/discounts, optimizing the entire ranking per prompt rather than isolated pairs.

20.10.2025 12:34

👍 1

🔁 0

💬 1

📌 0

Direct Preference Optimization is strong for T2I—but human labels are pricey/outdated. We build Syn-Pic: a fully synthetic ranked preference dataset by ensembling 5 reward models to remove humans from the loop.

20.10.2025 12:34

👍 0

🔁 0

💬 1

📌 0

2/

Scalable Ranked Preference Optimization for Text-to-Image Generation

@shyamgopal.bsky.social , Huseyin Coskun, @zeynepakata.bsky.social , Sergey Tulyakov, Jian Ren, Anil Kag

[Paper]: arxiv.org/pdf/2410.18013

📍Hall I #1702

🕑Oct 22, Poster Session 4

20.10.2025 12:34

👍 0

🔁 0

💬 1

📌 0

SUB enables rigorous stress-testing of interpretable models. We find that CBMs fail to generalize to these novel combinations of known concepts.

20.10.2025 12:34

👍 0

🔁 0

💬 1

📌 0

To generate precise variations, we propose Tied Diffusion Guidance (TDG) — sharing noise across two parallel denoising processes to ensure correct class and attribute generation.

20.10.2025 12:34

👍 0

🔁 0

💬 1

📌 0

We introduce SUB, a fine-grained image & concept benchmark with 38,400 synthetic bird images 🦤.

Using 33 classes & 45 concepts (e.g., wing color, belly pattern), SUB tests how robust CBMs are to targeted concept variations.

20.10.2025 12:34

👍 0

🔁 0

💬 1

📌 0

Concept Bottleneck Models (CBMs) hold huge promise for making AI more transparent—especially in high-stakes fields like medicine. But how well do they hold up under distribution shifts? 🧠

20.10.2025 12:34

👍 0

🔁 0

💬 1

📌 0