We updated our LLM epistemic diversity study with a stronger search baseline closer to true search diversity.

The search baseline is more diverse than every model, even though our estimate of its diversity is still an underestimate.

In other words, one can expect less information from an LLM than from a Google search.

See the new results here! arxiv.org/pdf/2510.04226

03.02.2026 10:42

👍 3

🔁 0

💬 0

📌 1

News about our epistemic diversity paper!

💻 The code is now a python package! Installation instructions here: github.com/dwright37/ll...

🤗 All 1.6M model responses and 70M clustered claims are now available on HuggingFace! huggingface.co/datasets/dwr...

📄 Paper: arxiv.org/pdf/2510.04226

20.11.2025 11:04

👍 5

🔁 0

💬 0

📌 0

Attending EMNLP 2025 this week? So is CopeNLU -- come find us there! ⤵️

www.copenlu.com/news/8-paper...

@apepa.bsky.social @rnv.bsky.social @kirekara.bsky.social @shoejoe.bsky.social @dustinbwright.com @zainmujahid.me @lucasresck.bsky.social @iaugenstein.bsky.social

#NLProc #AI #EMNLP2025

04.11.2025 13:21

👍 4

🔁 3

💬 0

📌 0

Heading to #EMNLP2025? Interested in automatic summarization? Then come to our poster for "Unstructured Evidence Attribution for Long Context Query Focused Summarization" !

⏰ When: Fri. Nov 7 14:00-15:30

🗺️ Where: Hall C

I'm unable to attend but @iaugenstein.bsky.social will present our work!

03.11.2025 15:25

👍 11

🔁 4

💬 0

📌 0

Oh this is super neat! It's also nice that there's more evidence here about the negative impact of model size. I think I mentioned this at ACL, but I'm also super interested in looking at the relationships between the training data and the results we get.

13.10.2025 18:38

👍 3

🔁 0

💬 1

📌 0

And finally, this work was done with amazing colleagues!

Sarah Masud, Jared Moore, @srishtiy.bsky.social, @mariaa.bsky.social, Peter Ebert Christensen, Chan Young Park, and @iaugenstein.bsky.social

10/10

13.10.2025 11:25

👍 4

🔁 0

💬 0

📌 0

🛣️ Our methodology can be used in the future to study epistemic diversity for arbitrary topics, downstream tasks, and real-world use cases with open-ended plain-text LLM outputs. This allows researchers to answer questions about which, whose, and how much knowledge LLMs represent.

9/10

13.10.2025 11:25

👍 4

🔁 1

💬 1

📌 0

📏 To quantify diversity, we use a statistically grounded measure commonly used for species diversity in ecology, which lets us fairly compare the relative diversity of models in different settings.
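
As a rough illustration of what an ecology-style diversity measure looks like, here is a minimal sketch of a Hill number (the "effective number" of equally common clusters). This is an assumption chosen for illustration: the post does not say which measure the paper uses, and the function name `hill_number` and the list-of-cluster-labels input are hypothetical.

```python
import math
from collections import Counter


def hill_number(cluster_labels, q=1.0):
    """Effective number of clusters (Hill number of order q).

    cluster_labels: one label per observed claim (hypothetical input format).
    q=0 counts distinct clusters, q=1 is exp(Shannon entropy),
    q=2 is the inverse Simpson index.
    """
    counts = Counter(cluster_labels)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    props = [c / total for c in counts.values()]
    if abs(q - 1.0) < 1e-12:
        # The limit q -> 1 is the exponential of Shannon entropy.
        entropy = -sum(p * math.log(p) for p in props)
        return math.exp(entropy)
    return sum(p ** q for p in props) ** (1.0 / (1.0 - q))


# Four claims spread evenly over four clusters vs. four claims in one cluster.
even = hill_number(["a", "b", "c", "d"])
collapsed = hill_number(["a", "a", "a", "a"])
```

With evenly spread claims the value equals the number of clusters; when every claim falls into the same cluster it drops to 1, which is the intuition behind "low diversity".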

8/10

13.10.2025 11:25

👍 3

🔁 0

💬 1

📌 0

🪛 Approach: we propose a new methodology that samples plain-text LLM outputs using 200 prompt variations from real chats across 155 topics, decomposes them into individual claims, and clusters those claims based on entailment.
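
To make the clustering step concrete, here is a minimal sketch that groups claims whenever they entail each other in both directions. Everything here is an assumption for illustration: the mutual-entailment criterion, the function names, and especially `toy_entails`, which is a token-overlap stand-in for a real entailment model and not the paper's method.

```python
from typing import Callable, Dict, List


def cluster_by_entailment(
    claims: List[str], entails: Callable[[str, str], bool]
) -> List[List[str]]:
    """Group claims that entail each other in both directions (union-find)."""
    parent = list(range(len(claims)))

    def find(i: int) -> int:
        # Follow parent links to the cluster root, compressing the path.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(len(claims)):
        for j in range(i + 1, len(claims)):
            if entails(claims[i], claims[j]) and entails(claims[j], claims[i]):
                parent[find(j)] = find(i)

    clusters: Dict[int, List[str]] = {}
    for i, claim in enumerate(claims):
        clusters.setdefault(find(i), []).append(claim)
    return list(clusters.values())


def toy_entails(premise: str, hypothesis: str) -> bool:
    # Hypothetical stand-in for an NLI model: the hypothesis is "entailed"
    # if all of its tokens appear in the premise.
    return set(hypothesis.lower().split()) <= set(premise.lower().split())


claims = [
    "Paris is the capital of France",
    "the capital of France is Paris",
    "Berlin is in Germany",
]
clusters = cluster_by_entailment(claims, toy_entails)
```

The pairwise loop is quadratic in the number of claims, so a sketch like this only suits small examples; a study at the scale described here would need a more efficient scheme.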

7/10

13.10.2025 11:25

👍 4

🔁 0

💬 1

📌 0

🌍 There are gaps in country-specific knowledge. When matching claims to English and local-language Wikipedia, no local language is statistically significantly more represented than English, and English-language knowledge is statistically significantly more represented for 5 of 8 countries.

6/10

13.10.2025 11:25

👍 3

🔁 0

💬 1

📌 0

🏗️ Model size has an unintuitive negative impact on diversity; smaller models tend to be more diverse

🔎 RAG has a positive impact on diversity, indicating its usefulness in making LLM outputs more diverse. However, the gains from RAG are not equal across topics about different countries

5/10

13.10.2025 11:25

👍 3

🔁 0

💬 1

📌 0

📈 Knowledge in LLMs across 3 of 4 model families has *expanded* since 2023 ✅ ; however, their absolute diversity is quite low compared to a very modest traditional search baseline 👎

4/10

13.10.2025 11:25

👍 4

🔁 0

💬 1

📌 0

👍 To assess this risk, we set out to measure the extent to which LLMs are homogeneous in terms of the *real-world claims* they generate. We perform a large study across 27 LLMs and 2 generation settings, with different model versions and sizes. In a nutshell, our findings are:

3/10

13.10.2025 11:25

👍 3

🔁 0

💬 1

📌 0

🤔 A lot of people are using LLMs. However, their outputs are not very diverse. What does this mean for the future of knowledge? Many speculate that overreliance on LLMs will lead to "knowledge collapse", where the diversity of human knowledge is narrowed by a reliance on homogeneous LLMs.

2/10

13.10.2025 11:25

👍 6

🔁 0

💬 2

📌 0

Which, whose, and how much knowledge do LLMs represent?

I'm excited to share our preprint answering these questions:

"Epistemic Diversity and Knowledge Collapse in Large Language Models"

📄Paper: arxiv.org/pdf/2510.04226

💻Code: github.com/dwright37/ll...

1/10

13.10.2025 11:25

👍 89

🔁 26

💬 2

📌 1

🦾 We demonstrate across 5 LLMs and 4 datasets that LLMs adapted with SUnsET generate more relevant and factually consistent evidence, extract evidence from more diverse locations in their context, and can generate more relevant and consistent summaries than baselines.

25.08.2025 11:42

👍 1

🔁 0

💬 1

📌 0

🔎 We show for existing large language models that evidence is often copied incorrectly and "lost-in-the-middle". To help perform this task, we create the Summaries with Unstructured Evidence Text dataset (☀️SUnsET☀️), a synthetic dataset which can be used to train unstructured evidence citation.

25.08.2025 11:42

👍 0

🔁 0

💬 1

📌 0

💡 Normally, when automatically generated summaries cite supporting evidence, they cite evidence at a fixed granularity, e.g., individual sentences or whole documents. Our work proposes extracting spans of *any* length as more relevant and consistent evidence for long-context, query-focused summaries.

25.08.2025 11:42

👍 0

🔁 0

💬 1

📌 0

🎉 Our work on attribution in summarization is now accepted to #EMNLP2025 main! 🎉

"Unstructured Evidence Attribution for Long Context Query Focused Summarization"

w/ @zainmujahid.me , Lu Wang, @iaugenstein.bsky.social , and @davidjurgens.bsky.social

25.08.2025 11:42

👍 23

🔁 7

💬 1

📌 0

There’s something really special about seeing a physical print copy of our work 🤩

You can read “Efficiency is Not Enough: A Critical Perspective on Environmentally Sustainable AI” now in CACM!!!

dl.acm.org/doi/10.1145/...

26.07.2025 19:51

👍 14

🔁 0

💬 0

📌 0

No fewer than three people were needed to cover all the aspects of our dialogue simulation paper. Thanks for the interest — check out the preprint. Link in Dustin’s post.

@dustinbwright.com @ic2s2.bsky.social #ic2s2

24.07.2025 15:01

👍 23

🔁 1

💬 1

📌 0

We had a great time talking about dialogue simulation with LLMs at @ic2s2.bsky.social !!! Amazing work by all of our colleagues at UMich.

See the preprint of this work here: arxiv.org/abs/2409.08330

24.07.2025 13:54

👍 16

🔁 0

💬 0

📌 1

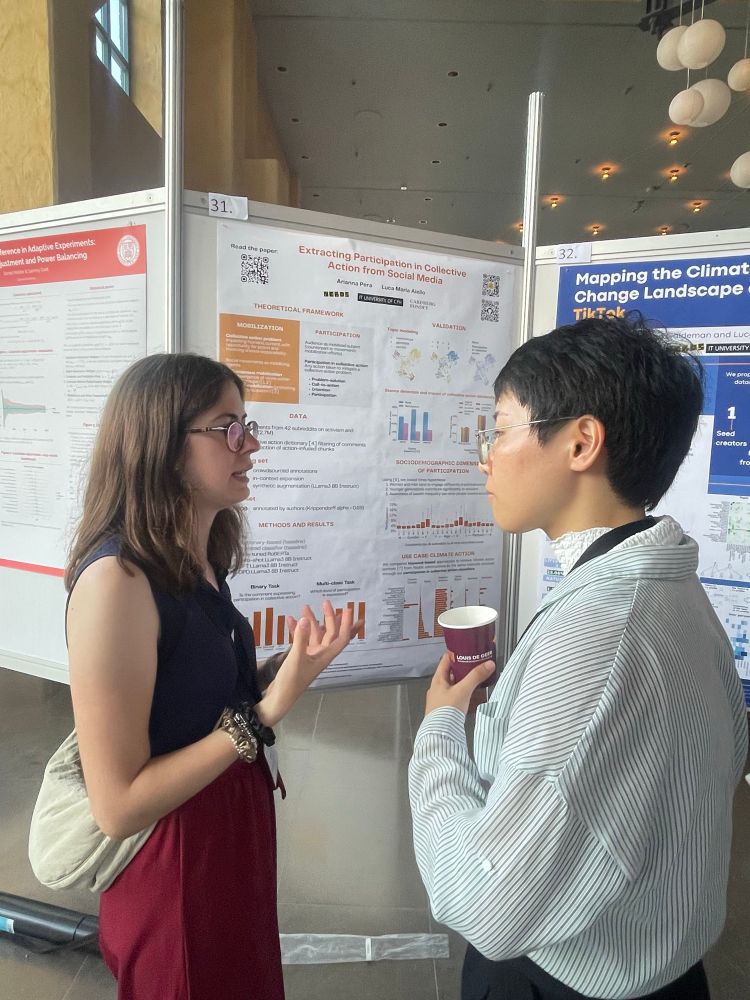

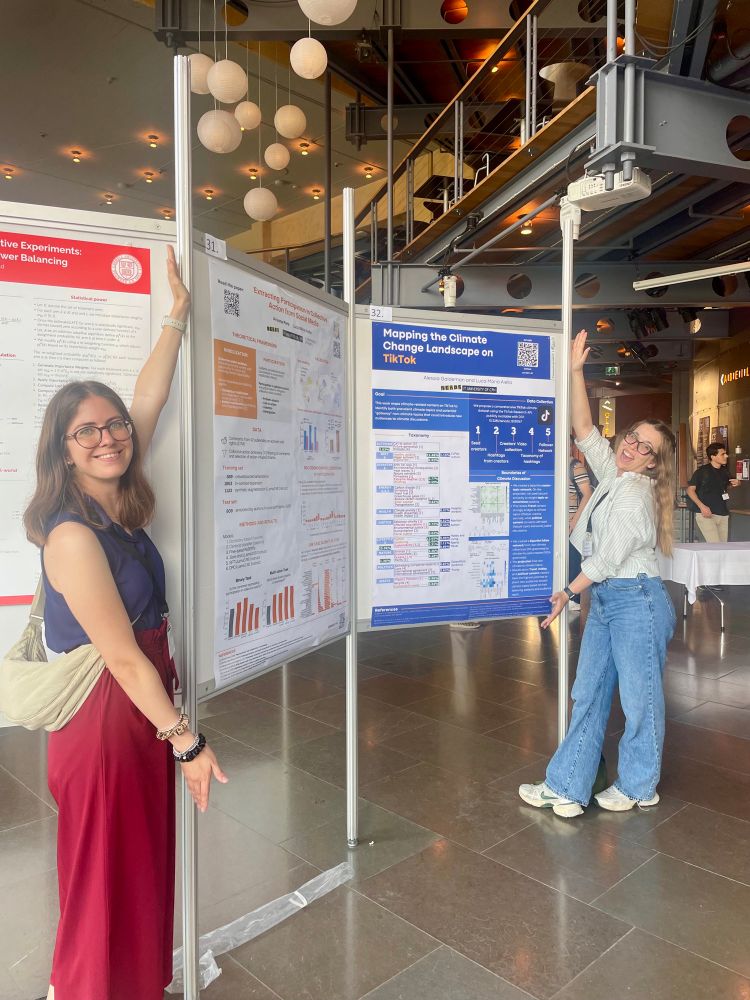

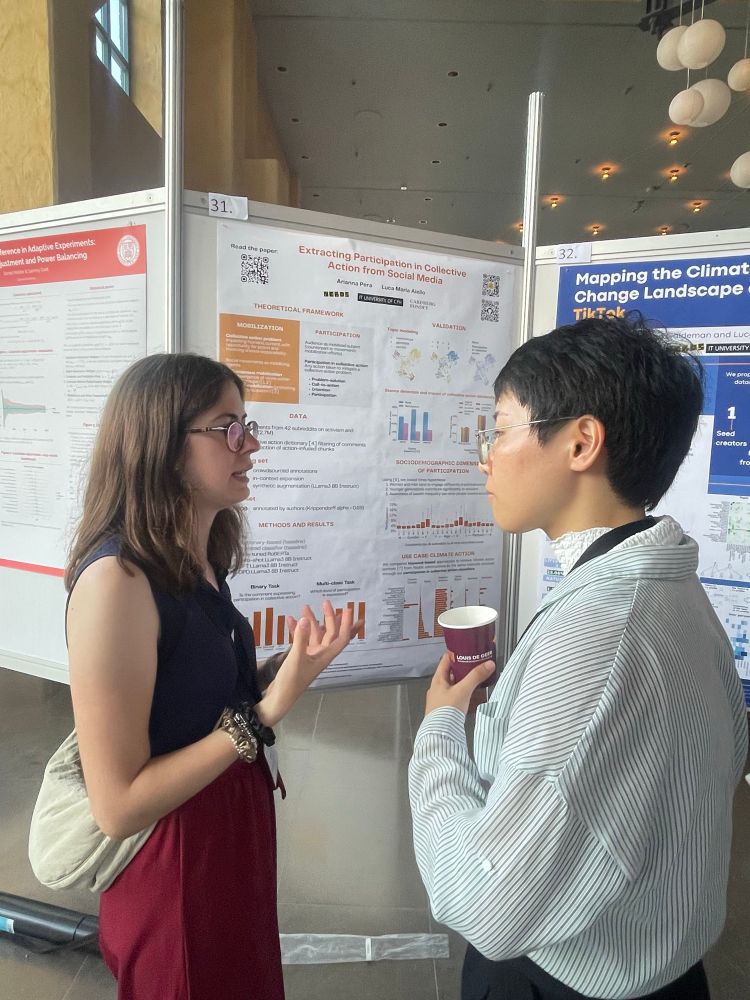

Presenting the work “Extracting Participation in Collective Action from Social Media”, in collaboration with @lajello.bsky.social, at @ic2s2.bsky.social today!

Check out the paper ojs.aaai.org/index.php/IC... and models huggingface.co/ariannap22

Feat. poster and research buddy @alessianetwork.bsky.social ♥️

23.07.2025 15:25

👍 32

🔁 4

💬 0

📌 0

Open PhD positions in Denmark! daracademy.dk/fellowship/f...

If you want to apply to work with me and Johannes Bjerva at @aau.dk Copenhagen, I'll be at @ic2s2.bsky.social this week and @aclmeeting.bsky.social next week! DM me if you'd like to meet :)

21.07.2025 13:26

👍 10

🔁 5

💬 0

📌 0

Pre-ACL 2025 Workshop | Event | Pioneer Centre for Artificial Intelligence

Join us for the Pre-ACL 2025 Workshop in Copenhagen, 26 July, 2025!

🇩🇰 Bringing international NLP experts from Columbia, UCLA, the University of Michigan, and more to Copenhagen to meet with the Danish NLP community. 🇩🇰

📅 Poster submission deadline: June 16, 2025

🔗 Register: www.aicentre.dk/events/pre-a...

16.06.2025 14:08

👍 13

🔁 1

💬 0

📌 0

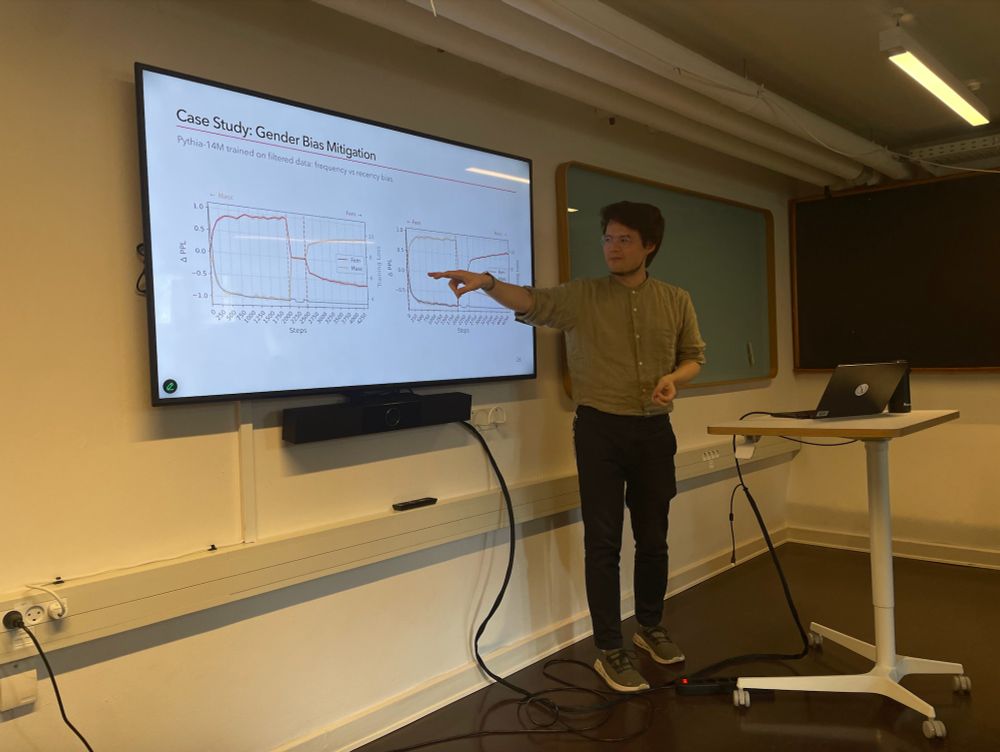

Thanks to @dustinbwright.com (@copenlu.bsky.social) and @mxij.me (@itu.dk) for sharing insights on your research within the collaboratory of Speech & Language, at the Last Fridays Talks!

25.04.2025 13:56

👍 16

🔁 2

💬 1

📌 0