I don’t need to keep as much context in my head’s “cache” as I did when I implemented features manually, and that makes context switching less costly.

I don’t need to keep as much context in my head’s “cache” as I did when I implemented features manually, and that makes context switching less costly.

And no, it hasn’t hurt code quality. Quite the opposite. Codex is good at implementing features that work as intended. I’m still picky about design details; I just handle those in review.

I work on tea-tasting (github.com/e10v/tea-tas...) in my spare time. I used to save bigger changes for holidays, when I had long uninterrupted coding time. Now I can do it in the evening or even while I’m eating 🙈

Codex doesn’t just save time when writing code. The bigger thing is that it lowers the cost per context switch.

With solid tests, linting, type checking, and an AGENTS md, one prompt is often enough to build a feature, update docstrings, and add tests.

Codex 5.3 built the last four tea-tasting (github.com/e10v/tea-tas...) features—and it’s been great! I shipped two package versions in one evening. I reviewed the code thoroughly—my comments were minor.

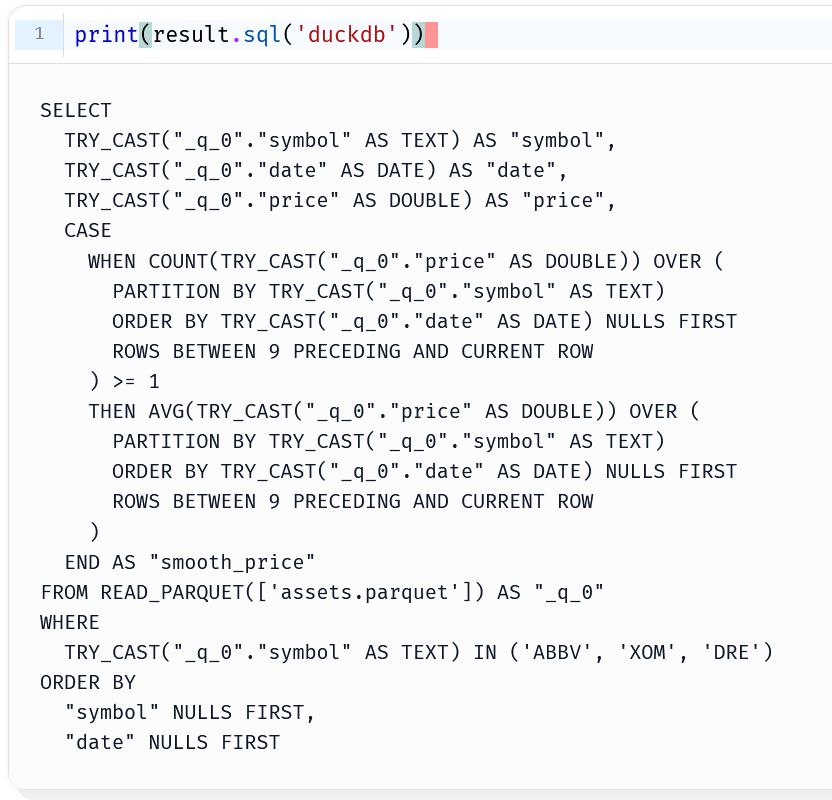

ClickHouse permission tweak + a missing SQL filter → ML feature pipeline down → the internet breaks 🤔

Another case supporting Taleb’s thesis that the world has become more fragile

Other examples that come to mind: the Amazon outage last month and the CrowdStrike-related outage last year

P.S. Paying to apply may sound provocative and require thoughtful consideration and careful testing. But here, I focus on why price signals may address this problem better than AI-based screening.

Then I asked myself what this solution has that AI doesn't. That’s how I arrived at the analogy that prices act as model weights: they encode market information. An important difference: prices incorporate signals from dispersed, hard-to-observe data that an AI/ML model may not access.

I was thinking about the labor market congestion problem and came up with a solution that is often used in service marketplaces: pay to apply... 🧵

Another perspective on marshmallow test: market risk and diminishing marginal utility

In NotebookLM, the output language is global. A per-notebook setting would be much more convenient

As a non-native English speaker, I don’t want YouTube auto-translating titles, chapters, and descriptions; I want the originals

It’s astonishing that Google employs so many multilingual people yet designs products as if users speak only one language

Some mentions of the quote "all models are wrong, but some are useful" should be followed by "the map is not the territory"

We wanted flying cars, instead we got scooter riders honking at our backs and knocking us off our feet

Pre-LLM era take-home assignments: focus on evaluating *answers*; LLM era: focus on evaluating *questions*.

"Here is the problem-solving case: […]. Use an LLM to solve it. Provide a summary of your conversation with the LLM, including the questions you asked and the final solution you obtained."

Every relevant cost can ultimately be framed as an opportunity cost. So, in essence, opportunity cost is the only cost that matters.

In statistics, answering a wrong question is sometimes called a Type III error. I've already mentioned it in a blog post: e10v.me/ranking-two-...

From my personal experience, people often skip steps 1 and 3, which can lead to bad decisions or mediocre solutions.

You probably read this in a statistical or data-analysis context. But I believe the framework applies more broadly.

...

4. What data do we need to apply the model?

5. What conclusions can we draw after applying the model?

A ladder of questions in analysis:

1. Which question should we ask to address a decision or a problem?

2. Which model should we choose to answer the question?

3. Which assumptions must hold for the model to be valid, and do they hold?

... 🧵

#DecisionIntelligence #ModelThinking #Logic

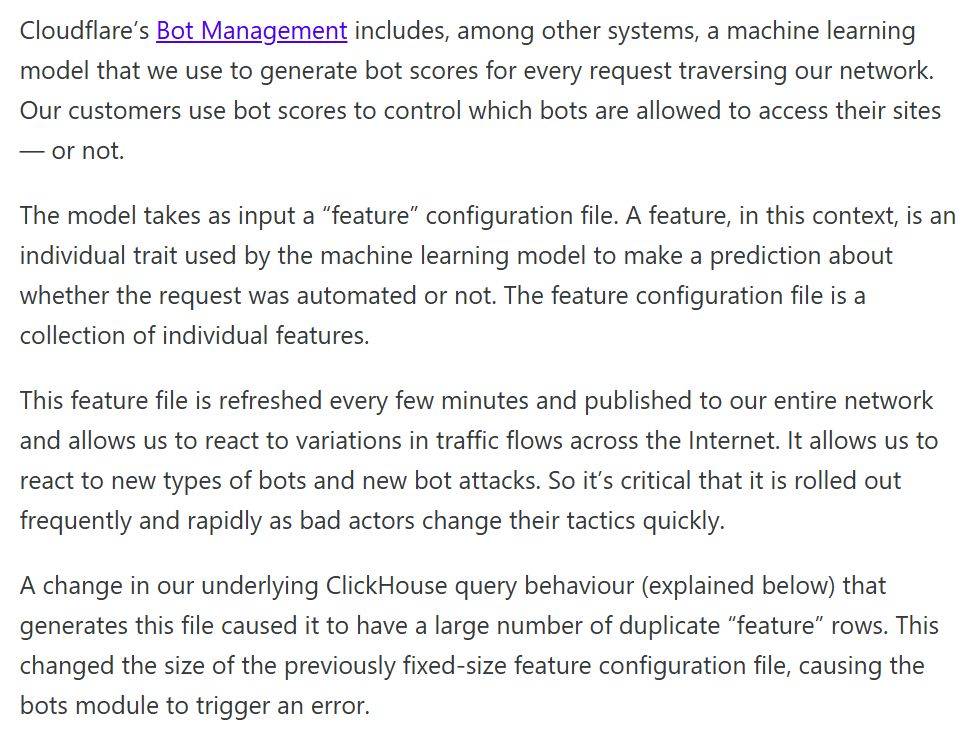

Demo of Narwhals dataframe-agnostic function which supports PySpark

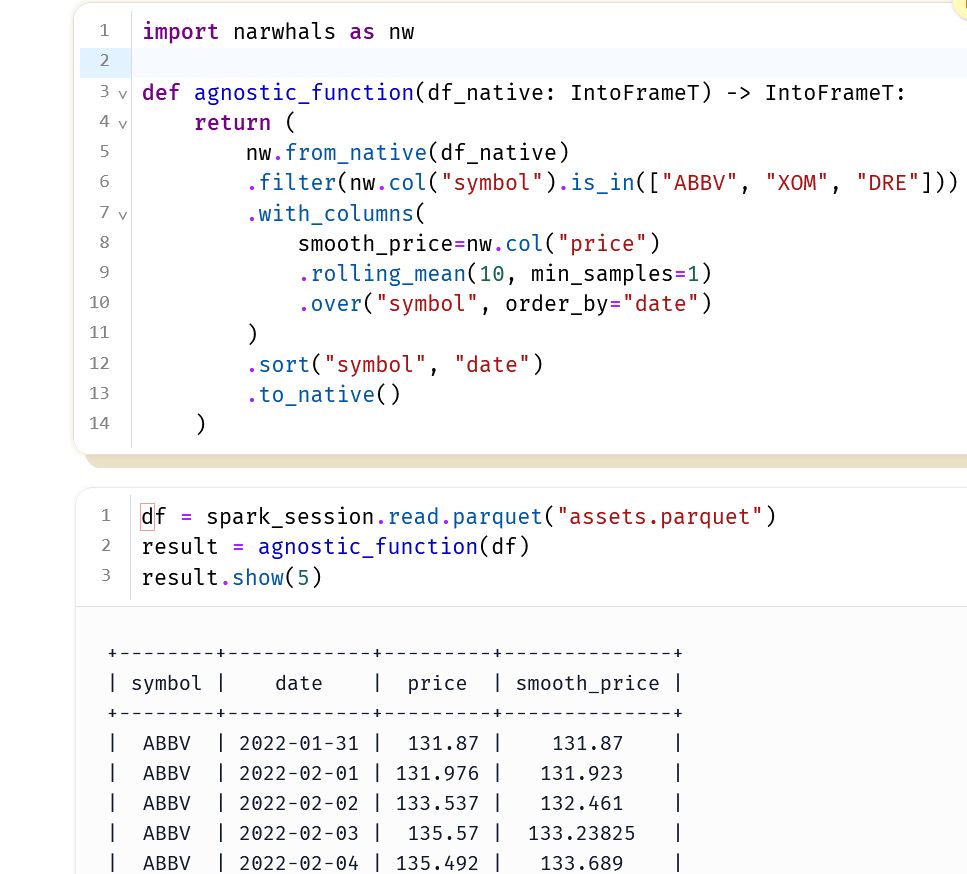

Plot of PySpark dataframe after converting it to PyArrow

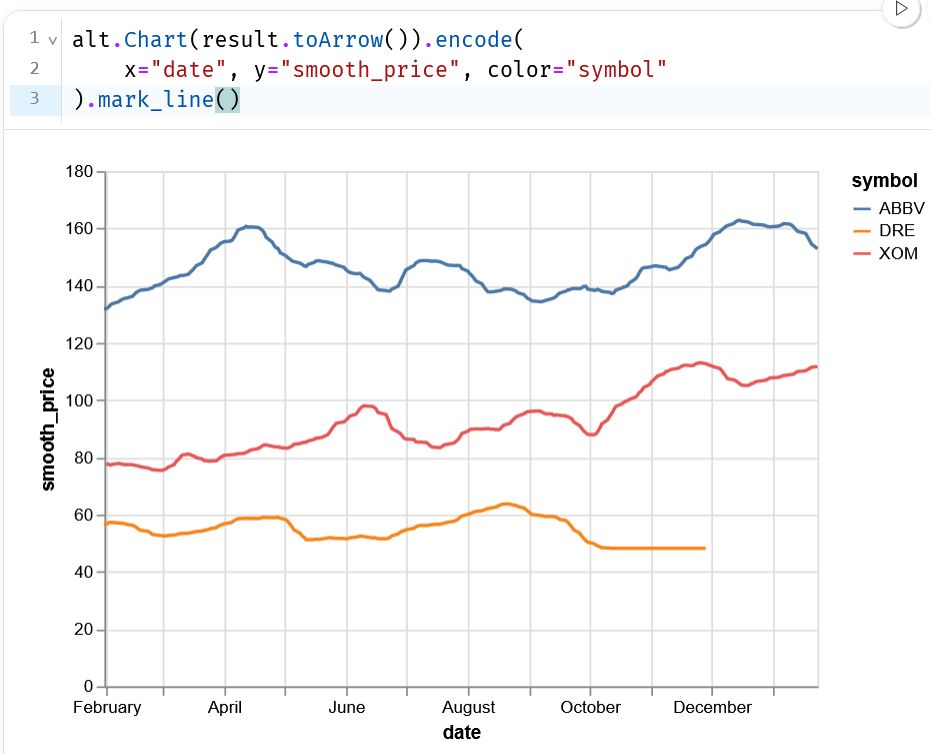

SQL generation from Polars syntax

✨ Narwhals now supports PySpark

🎇 If you have a dataframe-agnostic function, you can pass in `pyspark.sql.DataFrame`

📈 Here's a demo, made with @marimo.io

🎁 BONUS feature: combine with SQLFrame, to generate SQL from @pola.rs syntax 🪄

🔗🔗

- What’s new in tea-tasting: github.com/e10v/tea-tas...

- Guide on simulated experiments and A/A tests: tea-tasting.e10v.me/simulated-ex...

- Examples/guides as marimo notebooks: github.com/e10v/tea-tas...

A/A tests are useful for identifying potential issues before conducting the actual A/B test. Treatment simulations are great for power analysis—especially when you need a specific uplift distribution or when an analytical formula doesn’t exist.

@marimo.io is not only a great tool for reproducible and interactive research—it's also perfect for interactive documentation where users can play with examples. You can run them as WASM notebooks entirely in your browser—no local setup needed. I personally love marimo's attention to detail.

The version 1.0 of tea-tasting, a Python package for the statistical analysis of A/B tests, is now available. Notable improvements:

- Interactive user guides built with @marimo_io notebooks.

- Simulated experiments, including A/A tests.

#abtesting #statistics #datascience

Wow, ChatGPT is really smart now 😜

But better check for yourself: github.com/e10v/tea-tas...

#abtesting #statistics #chatgpt #ai