We can also use multiple types of contrast and solve one Contrastive Eigenproblem for all of them. When applying this to the 'sentence polarity' and 'sentence truth' features, the top eigenvectors yield directions for both, as well as the polarity-sensitive truth direction first found by Bürger et al.

03.12.2025 15:33


But how do we know we succeeded? What if the model does not represent features the way we think it will?

Solving the Contrastive Eigenproblem gives eigenvalues that show how many contrastive directions are captured by your activations.

Below, only 'amazon' isolates a single direction.
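To make the idea of reading the number of directions off an eigenvalue spectrum concrete, here is a minimal sketch. It is not the formulation from the paper: as a stand-in it eigen-decomposes the second-moment matrix of pairwise activation differences, and the synthetic data, the two planted directions, and the 10% threshold are all assumptions chosen for illustration.

```python
import numpy as np

def contrast_spectrum(first, second):
    """Eigen-decompose the second-moment matrix of pairwise differences.

    Stand-in for illustration only, not the paper's Contrastive Eigenproblem.
    """
    diffs = first - second                      # one difference vector per pair
    moment = diffs.T @ diffs / len(diffs)       # (d, d), symmetric PSD
    eigvals, eigvecs = np.linalg.eigh(moment)   # eigh returns ascending order
    return eigvals[::-1], eigvecs[:, ::-1]      # largest eigenvalue first

# Synthetic "activations" with two planted contrast directions (assumption).
rng = np.random.default_rng(0)
d, n = 16, 500
u, v = np.eye(d)[0], np.eye(d)[1]
base = rng.normal(size=(n, d))                  # content shared within a pair
signs_u = rng.choice([-1.0, 1.0], size=(n, 1))  # which side carries feature u
signs_v = rng.choice([-1.0, 1.0], size=(n, 1))  # which side carries feature v
noise = 0.05 * rng.normal(size=(n, d))
first = base + signs_u * u + signs_v * v + noise
second = base - signs_u * u - signs_v * v

eigvals, eigvecs = contrast_spectrum(first, second)
# Count eigenvalues that are not negligible relative to the largest one.
n_directions = int(np.sum(eigvals > 0.1 * eigvals[0]))
print(n_directions)
```

In this toy setup two eigenvalues stand clearly above the noise floor, which is the kind of signal that would indicate a dataset does not isolate a single direction.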

03.12.2025 15:33


Contrastive probing methods use pairs of activations with no further supervision. The goal is for each pair to be based on inputs which differ in exactly one way: one has the feature of interest, and the other does not (without needing to know which).
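The "without needing to know which" property can be illustrated with a small sketch. It assumes, purely for illustration, that the direction is taken as the top eigenvector of the summed outer products of pair differences; the synthetic data and the planted `feature` vector are hypothetical. Because the outer product of a difference with itself is unchanged when the pair is swapped, the recovered direction does not depend on pair order.

```python
import numpy as np

def top_contrast_direction(first, second):
    """Top eigenvector of the summed outer products of pair differences."""
    diffs = first - second
    _, eigvecs = np.linalg.eigh(diffs.T @ diffs)  # ascending eigenvalues
    return eigvecs[:, -1]                         # direction, defined up to sign

# Synthetic pairs that differ only in one planted feature (assumption).
rng = np.random.default_rng(1)
d, n = 8, 200
feature = np.zeros(d)
feature[2] = 1.0
base = rng.normal(size=(n, d))
with_feature = base + feature + 0.05 * rng.normal(size=(n, d))
without_feature = base + 0.05 * rng.normal(size=(n, d))

# Case 1: every pair is ordered (with, without).
ordered = top_contrast_direction(with_feature, without_feature)

# Case 2: each pair is swapped at random, so the order carries no label.
swap = rng.random(n) < 0.5
first = np.where(swap[:, None], without_feature, with_feature)
second = np.where(swap[:, None], with_feature, without_feature)
shuffled = top_contrast_direction(first, second)

# Absolute cosine similarity, since eigenvectors have arbitrary sign.
agreement = abs(float(ordered @ shuffled))
recovery = abs(float(ordered @ feature))
print(round(agreement, 3), round(recovery, 3))
```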

03.12.2025 15:33


How do you know your contrastive probing data identifies a unique feature?

How can we identify directions that model combinations of features?

We propose Contrastive Eigenproblems to tackle both of these issues.

Come see the poster at the MechInterp Workshop @ NeurIPS this Sunday!

03.12.2025 15:33


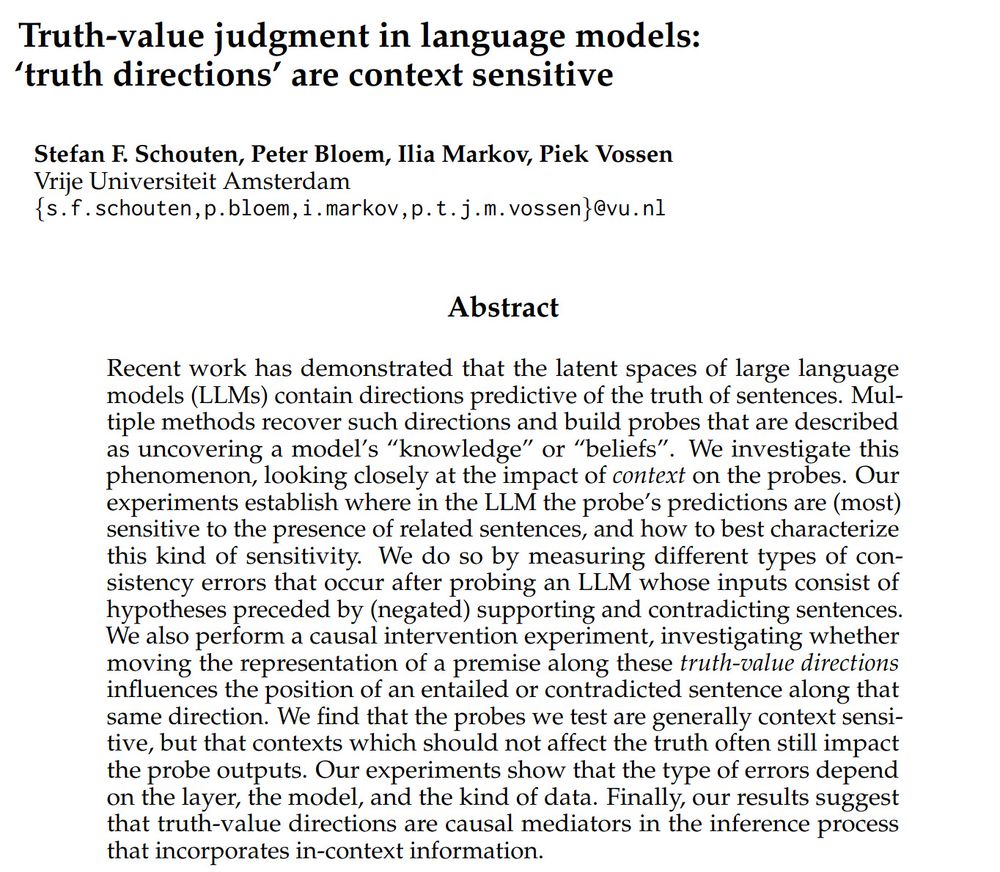

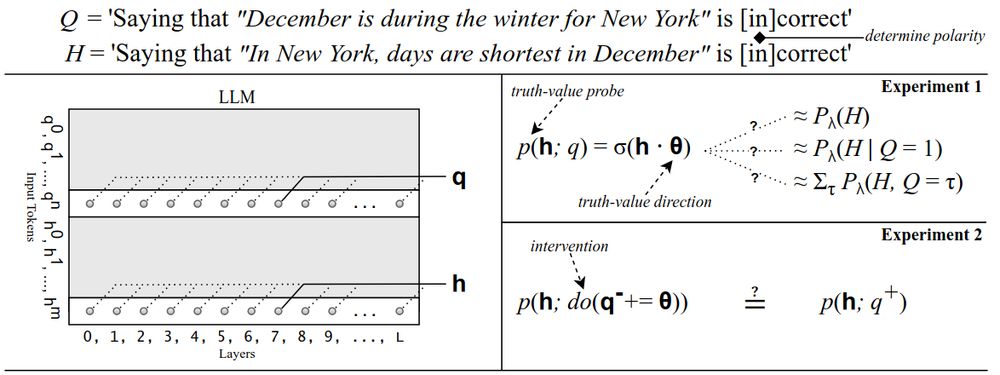

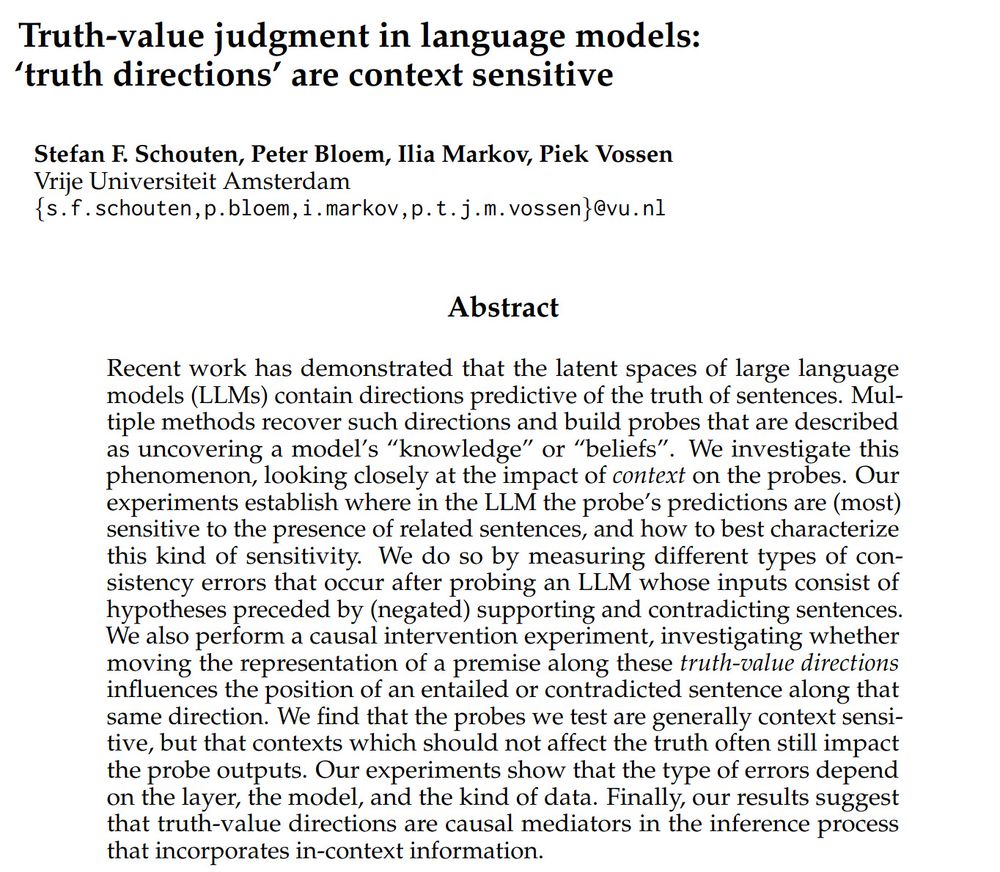

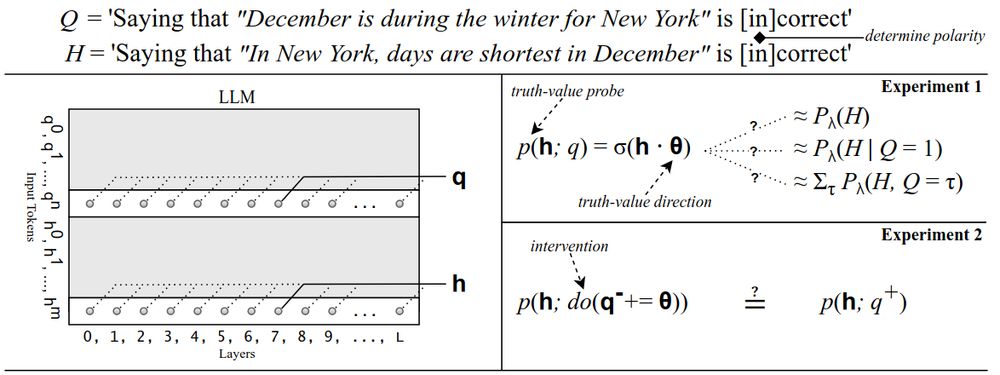

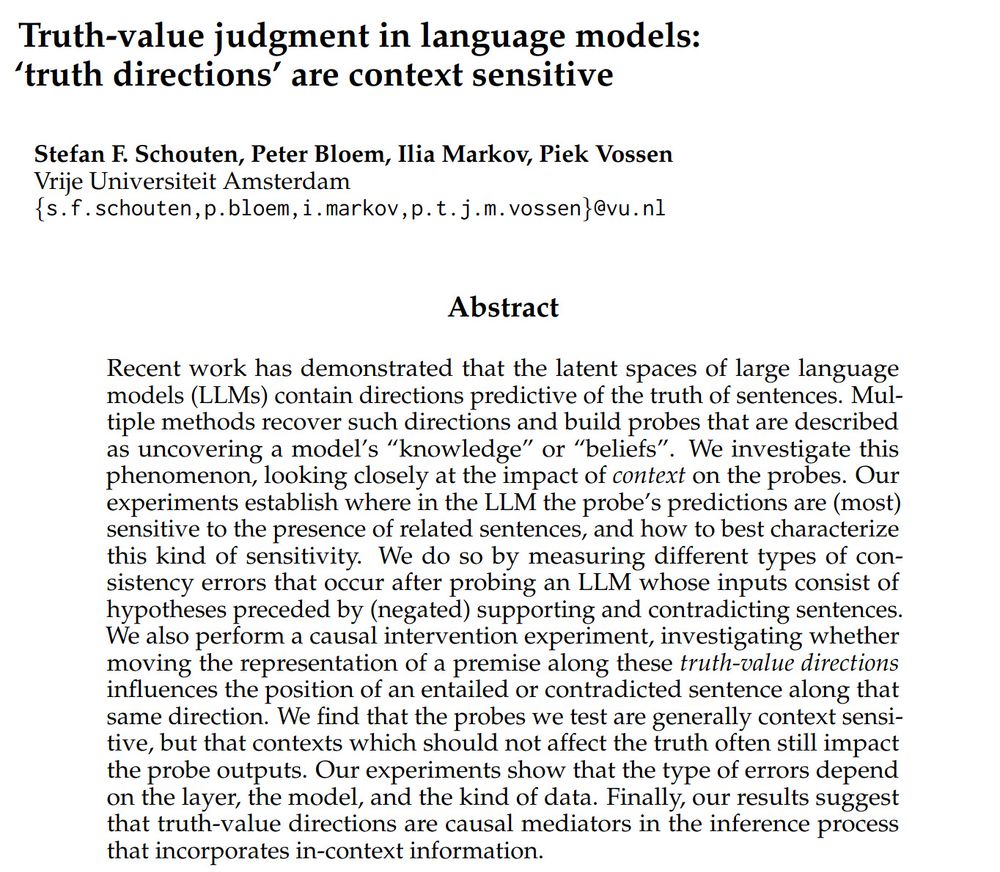

📢 Our paper on 'Truth-value Judgment in LLMs' was accepted to @colmweb.org #COLM2025!

In this paper, we investigate how LLMs keep track of the truth of sentences when reasoning.

14.07.2025 14:54


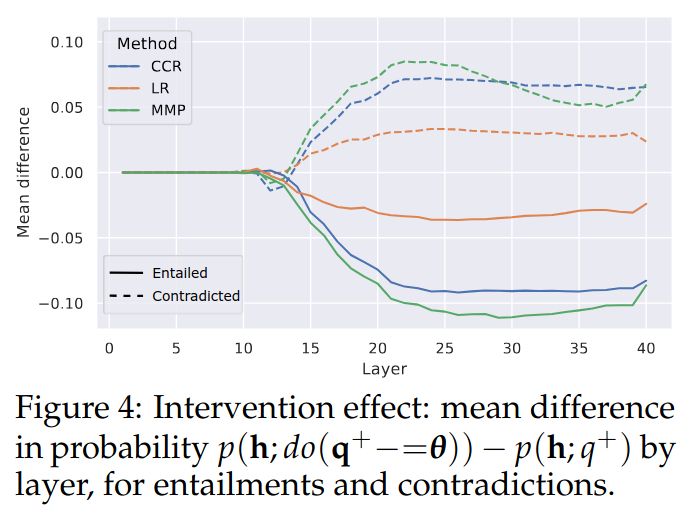

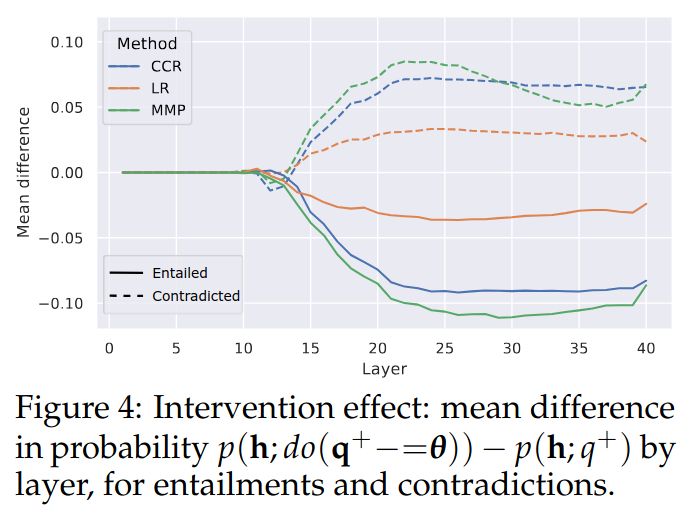

Finally, when intervening on hidden states, we find that the truth-value directions identified are causal mediators in the inference process.
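As a rough illustration of what intervening along a direction can look like, the sketch below overwrites the component of each hidden state along a given direction while leaving the orthogonal part untouched. The post does not specify the intervention actually used, so `set_projection`, the random hidden states, and the target value are all hypothetical.

```python
import numpy as np

def set_projection(hidden, direction, target):
    """Set each hidden state's component along `direction` to `target`.

    Hypothetical helper: one simple way to intervene, not the paper's method.
    """
    unit = direction / np.linalg.norm(direction)
    current = hidden @ unit                       # (n,) current projections
    return hidden + np.outer(target - current, unit)

rng = np.random.default_rng(2)
hidden = rng.normal(size=(5, 12))                 # stand-in hidden states
direction = rng.normal(size=12)                   # stand-in truth-value direction
unit = direction / np.linalg.norm(direction)

patched = set_projection(hidden, direction, target=3.0)

# The component along the direction now equals the target ...
new_projection = patched @ unit
# ... while everything orthogonal to the direction is unchanged.
orth_before = hidden - np.outer(hidden @ unit, unit)
orth_after = patched - np.outer(patched @ unit, unit)
print(np.round(new_projection, 3))
```

In a real experiment the patched states would be written back into the model's forward pass, and the effect on downstream behaviour is what would indicate causal mediation.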

14.07.2025 14:54


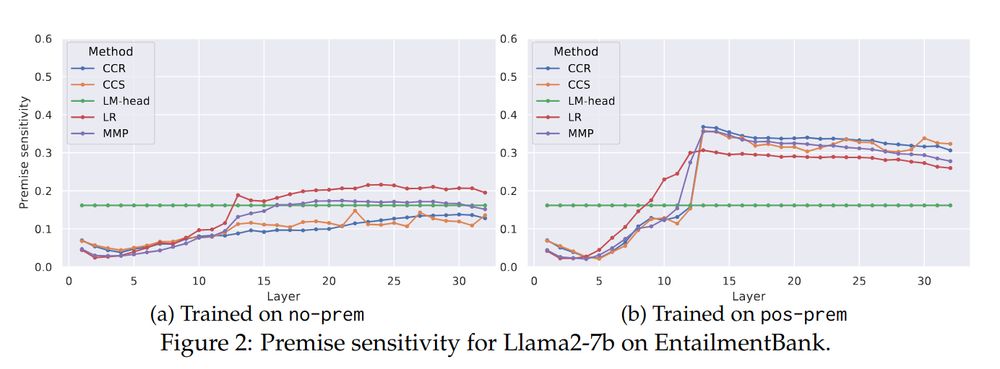

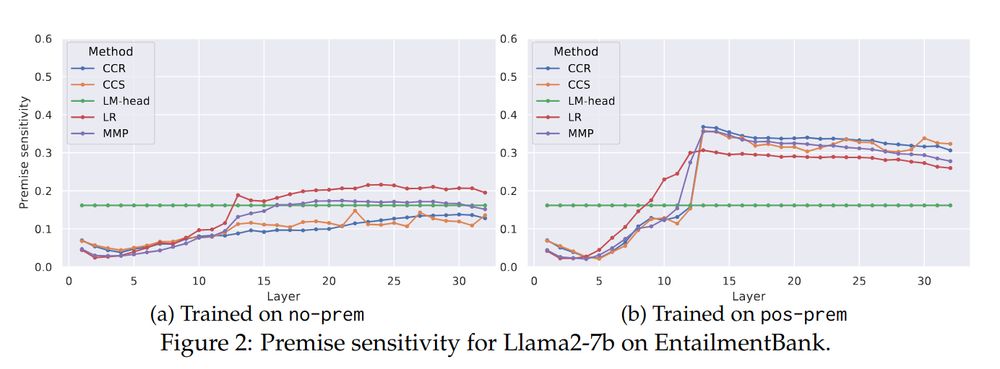

Even directions identified from single sentences show some sensitivity to the context, but sensitivity increases when probes are based on examples where sentences appear in inferential contexts.

14.07.2025 14:54


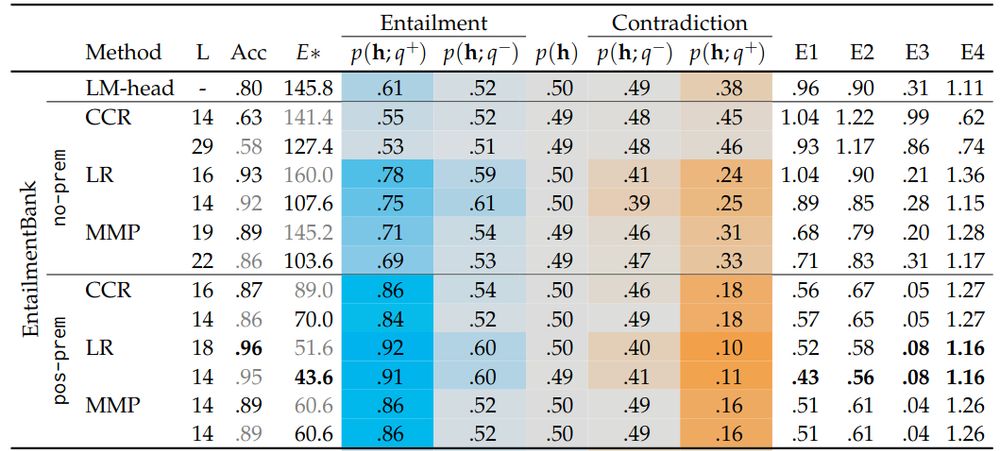

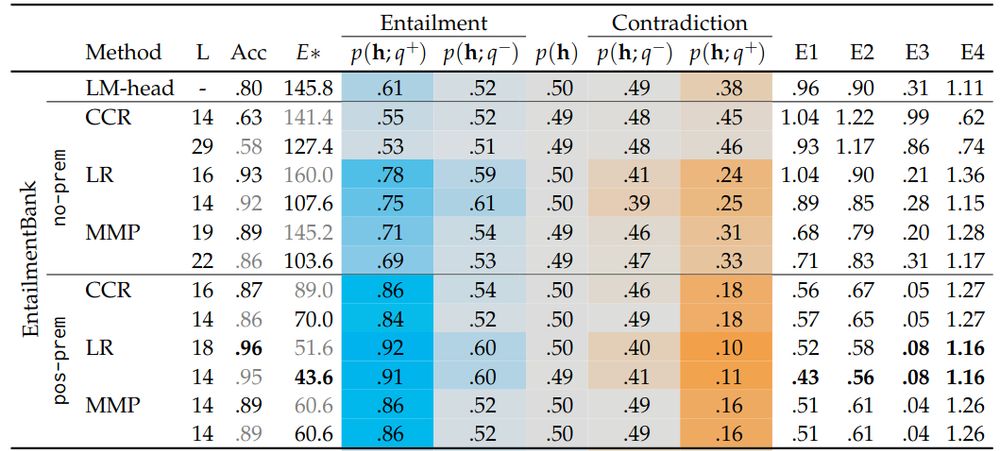

Regardless of probing method and dataset, truth-value directions are found to be sensitive to context. However, we also find they are sensitive to the presence of irrelevant information.

14.07.2025 14:54


We use probing techniques that identify directions in the model's latent space which encode whether sentences are more or less likely to be true. By manipulating inputs and hidden states, we evaluate whether probabilities update in appropriate ways.
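A minimal sketch of how a direction can be turned into a truth probability: project the hidden state onto the direction and squash the result with a logistic function. The mean-difference probe used here is a simple supervised stand-in, not necessarily one of the probing methods from the paper, and the synthetic hidden states are an assumption.

```python
import numpy as np

def mean_difference_direction(true_states, false_states):
    """Direction pointing from the mean 'false' state to the mean 'true' state."""
    return true_states.mean(axis=0) - false_states.mean(axis=0)

def truth_probability(hidden, direction, bias=0.0):
    """Logistic readout of the projection onto the probe direction."""
    logits = hidden @ direction + bias
    return 1.0 / (1.0 + np.exp(-logits))

# Synthetic hidden states separated along one axis (assumption).
rng = np.random.default_rng(3)
d, n = 10, 300
axis = np.zeros(d)
axis[0] = 1.0
true_states = rng.normal(size=(n, d)) + 1.5 * axis
false_states = rng.normal(size=(n, d)) - 1.5 * axis

direction = mean_difference_direction(true_states, false_states)
# Centre the probe on the midpoint between the two class means.
midpoint = 0.5 * (true_states.mean(axis=0) + false_states.mean(axis=0))
bias = -float(midpoint @ direction)

p_true = truth_probability(true_states, direction, bias)
p_false = truth_probability(false_states, direction, bias)
print(round(float(p_true.mean()), 2), round(float(p_false.mean()), 2))
```

Checking whether such probabilities move in the right direction after an input or hidden state is changed is the kind of update test the post describes.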

14.07.2025 14:54



Even truth-value directions identified from individual sentences still show some sensitivity to context, although the sensitivity increases when probes are based on sentences appearing in an inferential context.

14.07.2025 14:39


Regardless of probing method and dataset, models incorporate in-context information when assigning truth values to sentences. However, we also find that they are sensitive to irrelevant information.

14.07.2025 14:39


We use probing techniques that identify directions in the model's latent space used to represent sentences as more or less likely to be true. By manipulating both inputs and hidden states, we test whether probabilities update as expected.

14.07.2025 14:39
