(5/6) SNR naturally gives a way to improve benchmarks, we introduce 3 “interventions” in our work! For example:

❗️ Simply using the top 16 MMLU subtasks by SNR exhibits better decision accuracy and lower scaling law error than using the full task (only 6 for an AutoBencher task)

19.08.2025 16:46

👍 1

🔁 0

💬 1

📌 0

(4/6) 🧐 How do we know SNR is meaningful? We can (1) calculate % of models ranked correctly at small vs. 1B scale and (2) fit scaling laws to predict the task performance.

SNR is predictive of better decision accuracy, and tasks with lower noise have lower scaling law error!

19.08.2025 16:46

👍 1

🔁 0

💬 1

📌 0

(3/6) 🔎 We landed on a simple metric - the signal-to-noise ratio - the ratio between the dispersion of scores from models, and the variation of final checkpoints of a single model.

This allows estimating SNR with a small number of models (around 50 models) at any compute scale!

19.08.2025 16:46

👍 1

🔁 0

💬 1

📌 0

(2/6) Consider these training curves: 150M, 300M and 1B param models on 25 pretraining corpora. Many benchmarks can separate models, but are too noisy, and vice versa! 😧

We want – ⭐ low noise and high signal ⭐ – *both* low variance during training and a high spread of scores.

19.08.2025 16:46

👍 1

🔁 0

💬 1

📌 1

Evaluating language models is tricky, how do we know if our results are real, or due to random chance?

We find an answer with two simple metrics: signal, a benchmark’s ability to separate models, and noise, a benchmark’s random variability between training steps 🧵

19.08.2025 16:46

👍 15

🔁 4

💬 2

📌 0

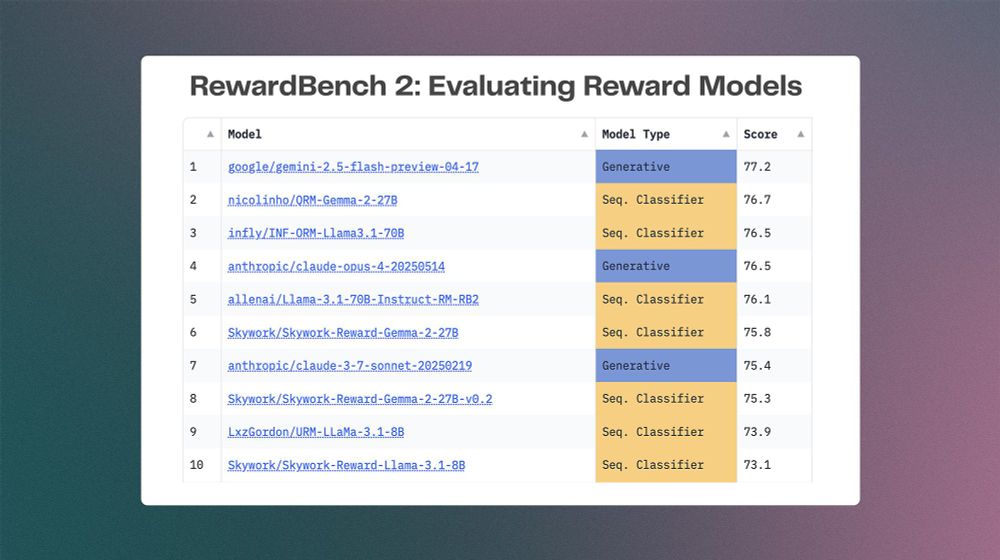

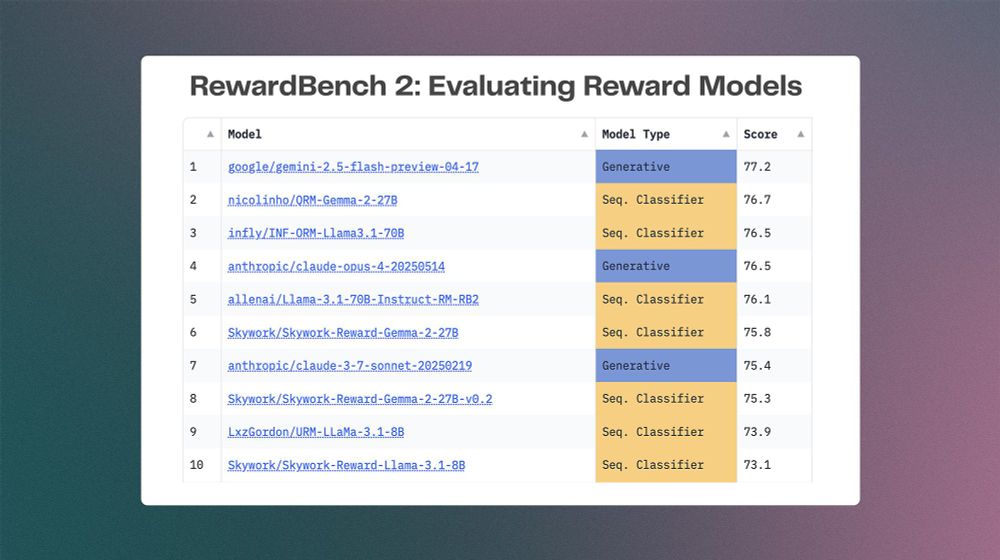

The RewardBench 2 Leaderboard on HuggingFace.

RewardBench 2 is here! We took a long time to learn from our first reward model evaluation tool to make one that is substantially harder and more correlated with both downstream RLHF and inference-time scaling.

02.06.2025 16:31

👍 20

🔁 8

💬 1

📌 1