Congratulations to the hardworking folks at UPenn!

Thank you Edward for including me and for all the nice discussions.

🌐 penn-pal-lab.github.io/aawr

📝 openreview.net/forum?id=Rkd...

💻 github.com/penn-pal-lab...

More theory details in Appendix A-E and on slide 30 (orbi.uliege.be/handle/2268/...)

20.02.2026 21:36

👍 0

🔁 0

💬 0

📌 0

As seen from the results and videos, AAWR improves significantly on (i) foundation policies, (ii) behavior cloning policies, and (iii) AWR policies, providing good policies even in partially observable environments with non Markovian inputs.

20.02.2026 21:36

👍 0

🔁 0

💬 1

📌 0

It is the case here, where we use additional cameras, position estimates, or bounding boxes from pretrained models.

These features i are used as additional input of the critic Q(i, z, a) to provide a better advantage estimate and policy improvement direction.

20.02.2026 21:36

👍 0

🔁 0

💬 1

📌 0

In fact, it is a common assumption in asymmetric RL, which distinguishes the execution information from the training information.

In practice, while we do not always know the exact state, it is common to have more information available about the state at training time.

20.02.2026 21:36

👍 1

🔁 0

💬 1

📌 0

Moreover, (s, z) is shown to be the Markovian state of an equivalent MDP, allowing us to rely on the Bellman equation and TD learning instead of MC learning.

Now, how realistic is it to assume that we know the state s in addition to the input z?

20.02.2026 21:36

👍 0

🔁 0

💬 1

📌 0

Unfortunately, we show that we cannot just learn the symmetric critic Q(z, a) = E[G | z, a]. Instead, we need an asymmetric critic Q(s, z, a) = E[G | s, z, a] for a valid policy iteration.

This is because, unlike for policy gradients, the AWR objective is not linear in Q.

20.02.2026 21:36

👍 0

🔁 0

💬 1

📌 0

To learn a good policy (for this specific input z), we may want to rely on existing RL algorithms such as policy gradient or policy iteration.

Here, because we perform offline-to-online training, we rely on AWR, a policy iteration algorithm going offline to online seamlessly.

20.02.2026 21:36

👍 0

🔁 0

💬 1

📌 0

When learning in real-world scenarios, it is common to have constraints on the input available to the policy at execution time (e.g., last observation only, wrist camera only, etc).

In the general case (POMDP), the input z is a function of the observation history h: z = f(h).

20.02.2026 21:36

👍 0

🔁 0

💬 1

📌 0

AAWR: Real World RL of Active Perception Behaviors @ NeurIPS2025

YouTube video by Jie Wang

At NeurIPS, we presented asymmetric RL algo: Asymmetric Advantage Weighted Regression (AAWR).

This time, the goal is to learn a policy pi(a | z) whose input z = f(h) is not necessarily Markovian. It is useful in robotics, for example.

So, how to adapt RL in POMDP to non Markovian input? 🧵

20.02.2026 21:36

👍 1

🔁 0

💬 1

📌 0

4) Off-Policy Maximum Entropy RL with Future State and Action Visitation Measures.

With Adrien Bolland and Damien Ernst, we propose a new intrinsic reward. Instead of encouraging visiting states uniformly, we encourage visiting *future* states uniformly, from every state.

06.10.2025 09:50

👍 2

🔁 0

💬 1

📌 0

3) Behind the Myth of Exploration in Policy Gradients.

With Adrien Bolland and Damien Ernst, we decided to frame the exploration problem for policy-gradient methods from the optimization point of view.

06.10.2025 09:50

👍 1

🔁 0

💬 1

📌 0

2) Informed Asymmetric Actor-Critic: Theoretical Insights and Open Questions.

With Daniel Ebi and Damien Ernst, we looked for a reason why asymmetric actor-critic was performing better, even when using RNN-based policies with the full observation history as input (no aliasing).

06.10.2025 09:50

👍 1

🔁 0

💬 1

📌 0

1) A Theoretical Justification for Asymmetric Actor-Critic Algorithms.

With Damien Ernst and Aditya Mahajan, we looked for a reason why asymmetric actor-critic algorithms are performing better than their symmetric counterparts.

06.10.2025 09:50

👍 1

🔁 0

💬 1

📌 0

At #EWRL, we presented 4 papers, which we summarize below.

- A Theoretical Justification for AsymAC Algorithms.

- Informed AsymAC: Theoretical Insights and Open Questions.

- Behind the Myth of Exploration in Policy Gradients.

- Off-Policy MaxEntRL with Future State-Action Visitation Measures.

06.10.2025 09:50

👍 4

🔁 1

💬 1

📌 0

Théo Vincent - Optimizing the Learning Trajectory of Reinforcement Learning Agents

YouTube video by Cohere

Had an amazing time presenting my research @cohereforai.bsky.social yesterday 🎤

In case you could not attend, feel free to check it out 👉

youtu.be/RCA22JWiiY8?...

19.07.2025 07:41

👍 7

🔁 3

💬 0

📌 0

Such an inspiring talk by @arkrause.bsky.social at #ICML today. The role of efficient exploration in Scientific discovery is fundamental and I really like how Andreas connects the dots with RL (theory).

17.07.2025 22:14

👍 15

🔁 2

💬 0

📌 0

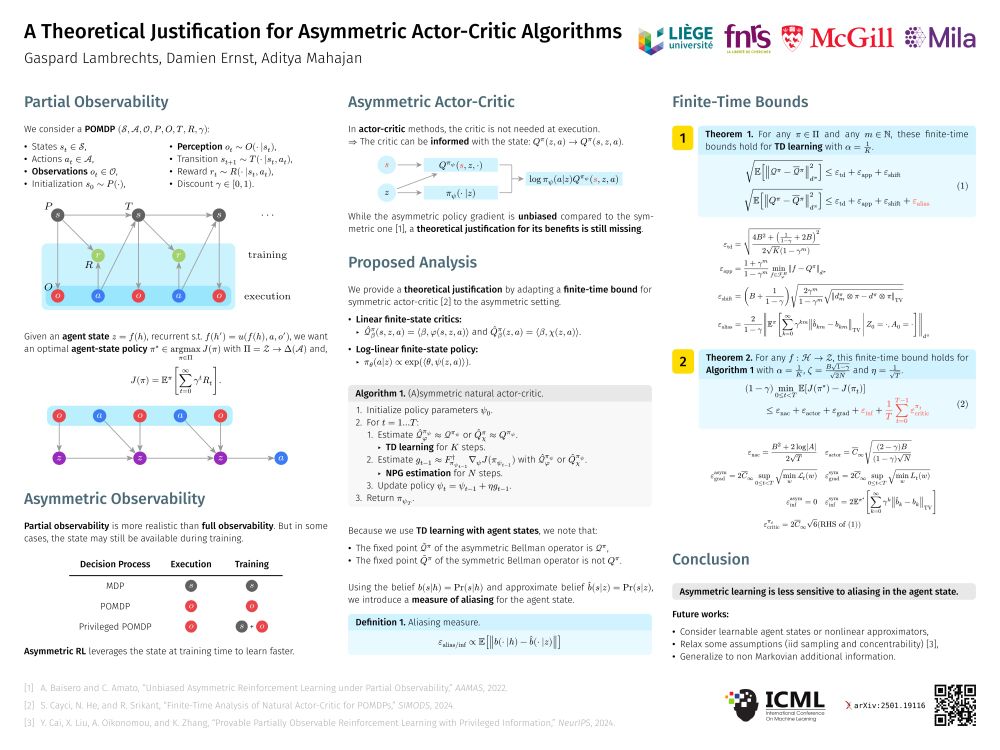

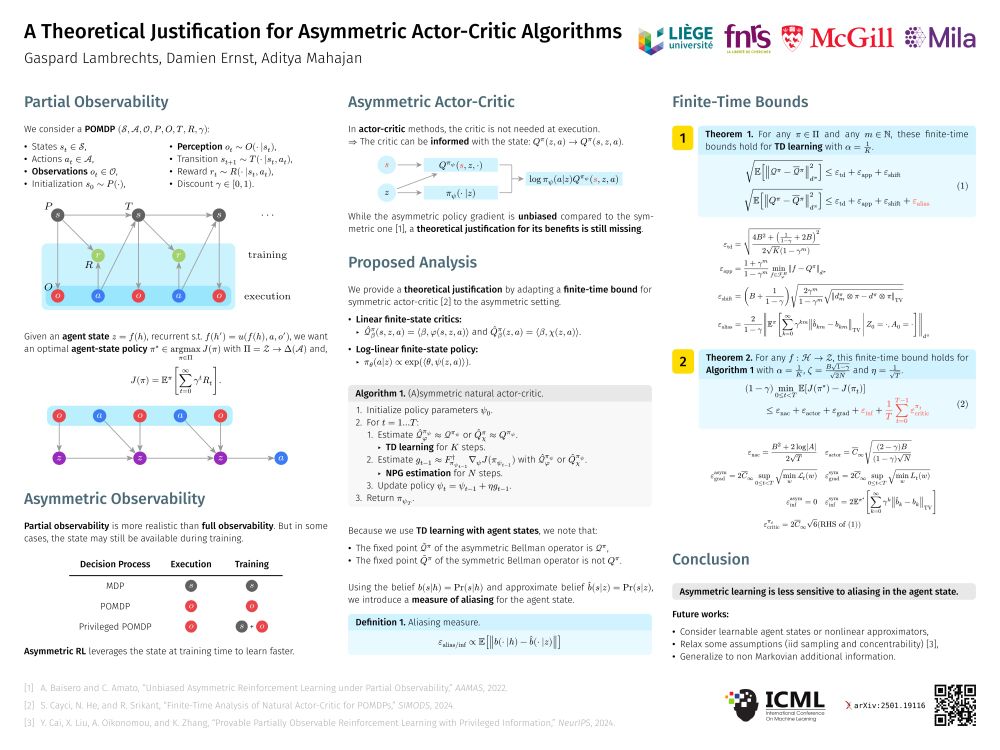

ICML poster of the paper « A Theoretical Justification for Asymmetric Actor-Critic Algorithms » by Gaspard Lambrechts, Damien Ernst and Aditya Mahajan.

At #ICML2025, we will present a theoretical justification for the benefits of « asymmetric actor-critic » algorithms (#W1008 Wednesday at 11am).

📝 Paper: hdl.handle.net/2268/326874

💻 Blog: damien-ernst.be/2025/06/10/a...

16.07.2025 14:32

👍 8

🔁 3

💬 0

📌 0

🌟🌟Good news for the explorers🗺️!

Next week we will present our paper “Enhancing Diversity in Parallel Agents: A Maximum Exploration Story” with V. De Paola, @mircomutti.bsky.social and M. Restelli at @icmlconf.bsky.social!

(1/N)

08.07.2025 14:04

👍 4

🔁 1

💬 1

📌 1

Last week, I gave an invited talk on "asymmetric reinforcement learning" at the BeNeRL workshop. I was happy to draw attention to this niche topic, which I think can be useful to any reinforcement learning researcher.

Slides: hdl.handle.net/2268/333931.

11.07.2025 09:21

👍 6

🔁 3

💬 0

📌 0

Cover page of the PhD thesis "Reinforcement Learning in Partially Observable Markov Decision Processes: Learning to Remember the Past by Learning to Predict the Future" by Gaspard Lambrechts

Two months after my PhD defense on RL in POMDP, I finally uploaded the final version of my thesis :)

You can find it here: hdl.handle.net/2268/328700 (manuscript and slides).

Many thanks to my advisors and to the jury members.

13.06.2025 11:44

👍 8

🔁 2

💬 0

📌 0

A Theoretical Justification for Asymmetric Actor-Critic Algorithms

In reinforcement learning for partially observable environments, many successful algorithms have been developed within the asymmetric learning paradigm. This paradigm leverages additional state inform...

TL;DR: Do not make the problem harder than it is! Using state information during training is provably better.

📝 Paper: arxiv.org/abs/2501.19116

🎤 Talk: orbi.uliege.be/handle/2268/...

A warm thank to Aditya Mahajan for welcoming me at McGill University and for his precious supervision.

09.06.2025 14:41

👍 2

🔁 1

💬 0

📌 0

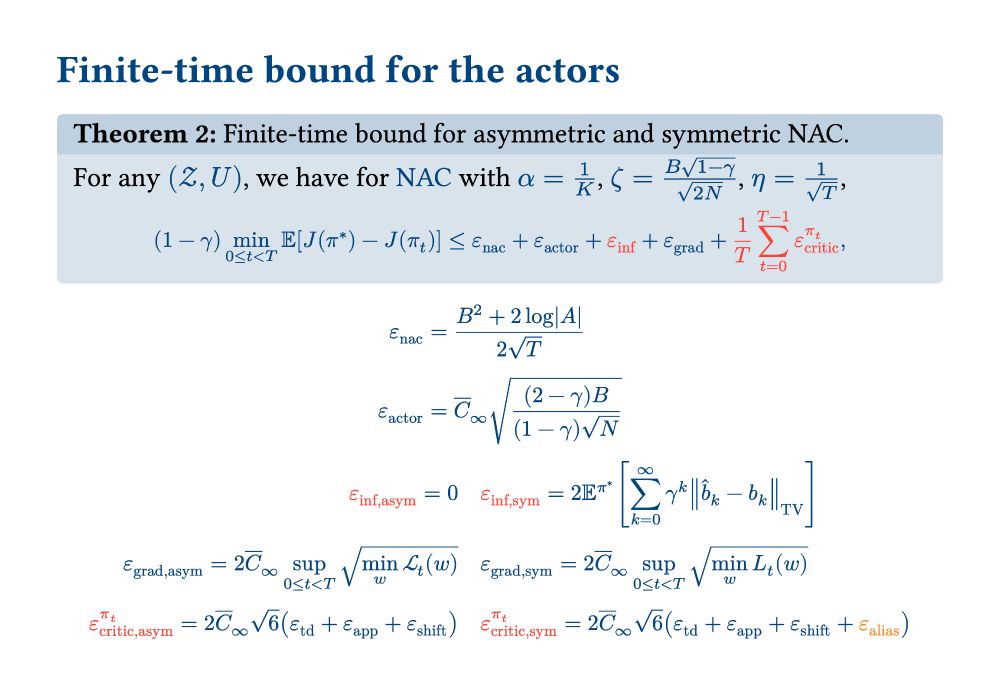

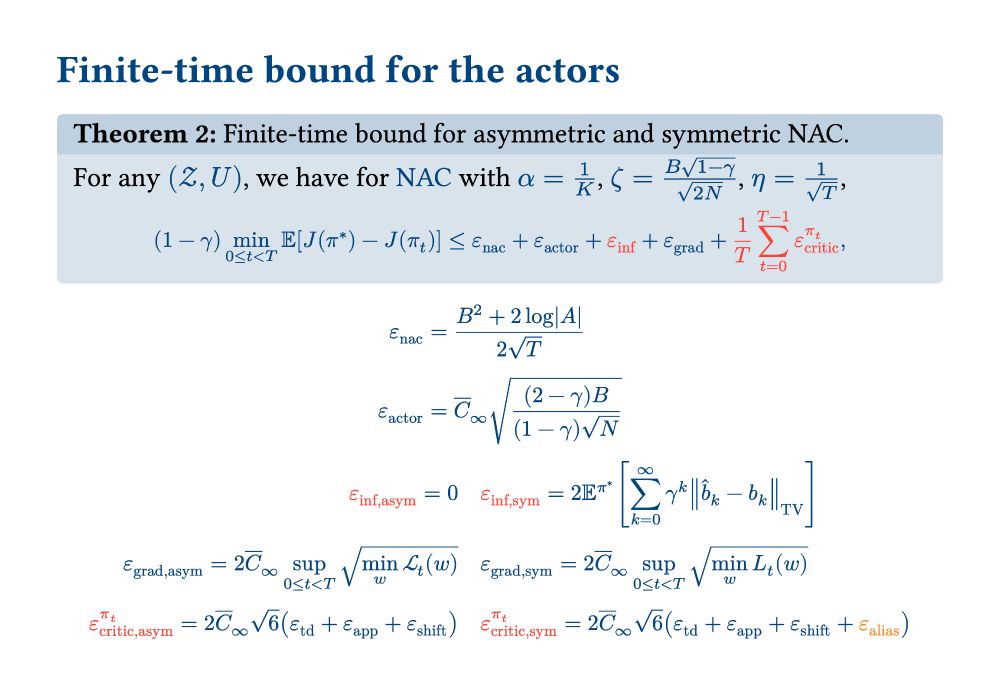

While this work has considered fixed feature z = f(h) with linear approximators, we discuss possible generalizations in the conclusion.

Despite not matching the usual recurrent actor-critic setting, this analysis still provides insights into the effectiveness of asymmetric actor-critic algorithms.

09.06.2025 14:41

👍 0

🔁 0

💬 1

📌 0

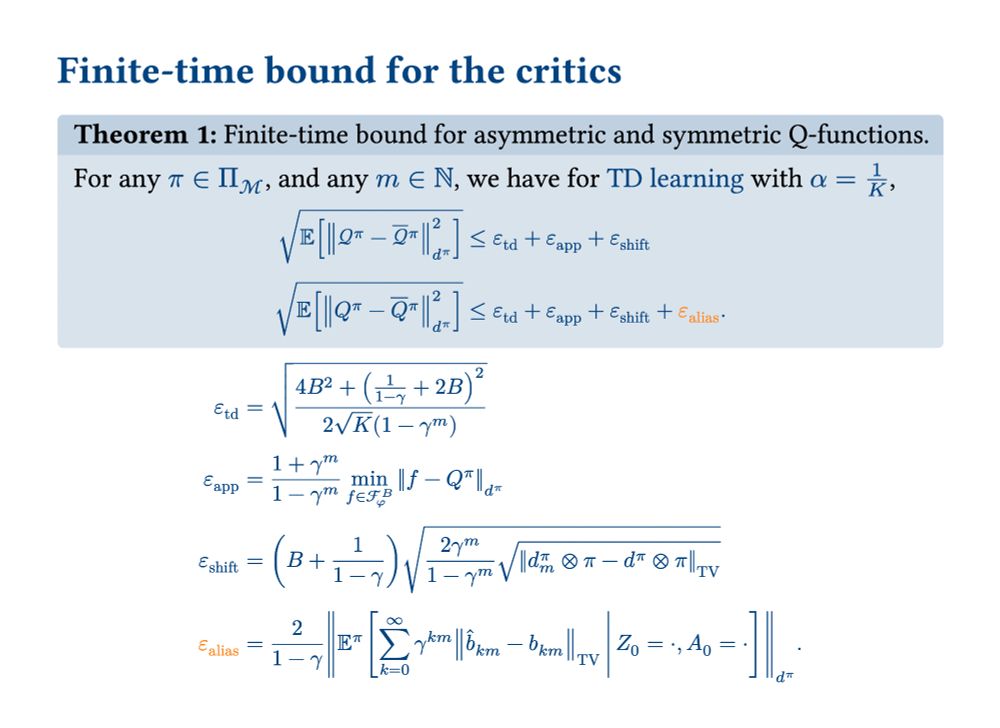

The conclusion is that asymmetric learning is less sensitive to aliasing than symmetric learning.

Now, what is aliasing exactly?

The aliasing and inference terms arise from z = f(h) not being Markovian. They can be bounded by the difference between the approximate p(s|z) and exact p(s|h) beliefs.

09.06.2025 14:41

👍 0

🔁 0

💬 1

📌 0

Theorem showing the finite-time suboptimality bound for the asymmetric and symmetric actor-critic algorithms. The asymmetric algorithm has four terms: the natural actor-critic term, the gradient estimation term, the residual gradient term, and the average critic error. The symmetric algorithm has an additional term: the inference term.

Now, as far as the actor suboptimality is concerned, we obtained the following finite-time bounds.

In addition to the average critic error, which is also present in the actor bound, the symmetric actor-critic algorithm suffers from an additional "inference term".

09.06.2025 14:41

👍 0

🔁 0

💬 1

📌 0

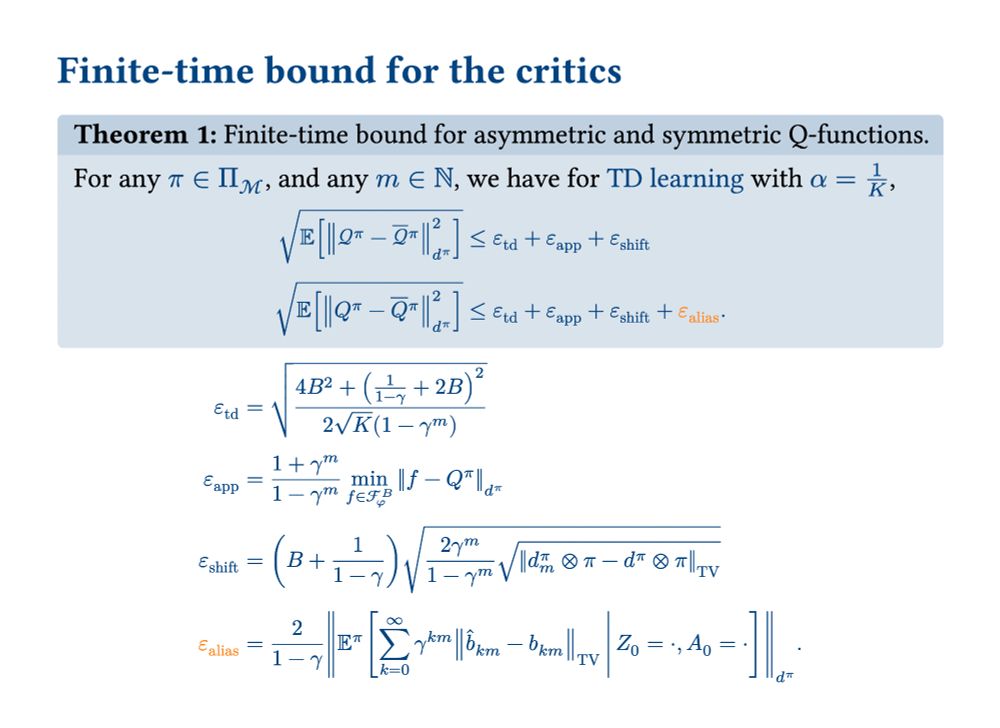

Theorem showing the finite-time error bound for the asymmetric and symmetric temporal difference learning algorithms. The asymmetric algorithm has three terms: the temporal difference learning term, the function approximation term, and the bootstrapping shift term. The symmetric algorithm has an additional term: the aliasing term.

By adapting the finite-time bound from the symmetric setting to the asymmetric setting, we obtain the following error bounds for the critic estimates.

The symmetric temporal difference learning algorithm has an additional "aliasing term".

09.06.2025 14:41

👍 0

🔁 0

💬 1

📌 0

Title page of the paper "A Theoretical Justification for Asymmetric Actor-Critic Algorithms", written by Gaspard Lambrechts, Damien Ernst and Aditya Mahajan.

While this algorithm is valid/unbiased (Baisero & Amato, 2022), a theoretical justification for its benefit is still missing.

Does it really learn faster than symmetric learning?

In this paper, we provide theoretical evidence for this, based on an adapted finite-time analysis (Cayci et al., 2024).

09.06.2025 14:41

👍 0

🔁 0

💬 1

📌 0