We built a thing! The Databricks Reranker is now in Public Preview. It's as easy as changing the arguments to your vector search call, and doesn't require any additional setup.

Read more: www.databricks.com/blog/reranki...

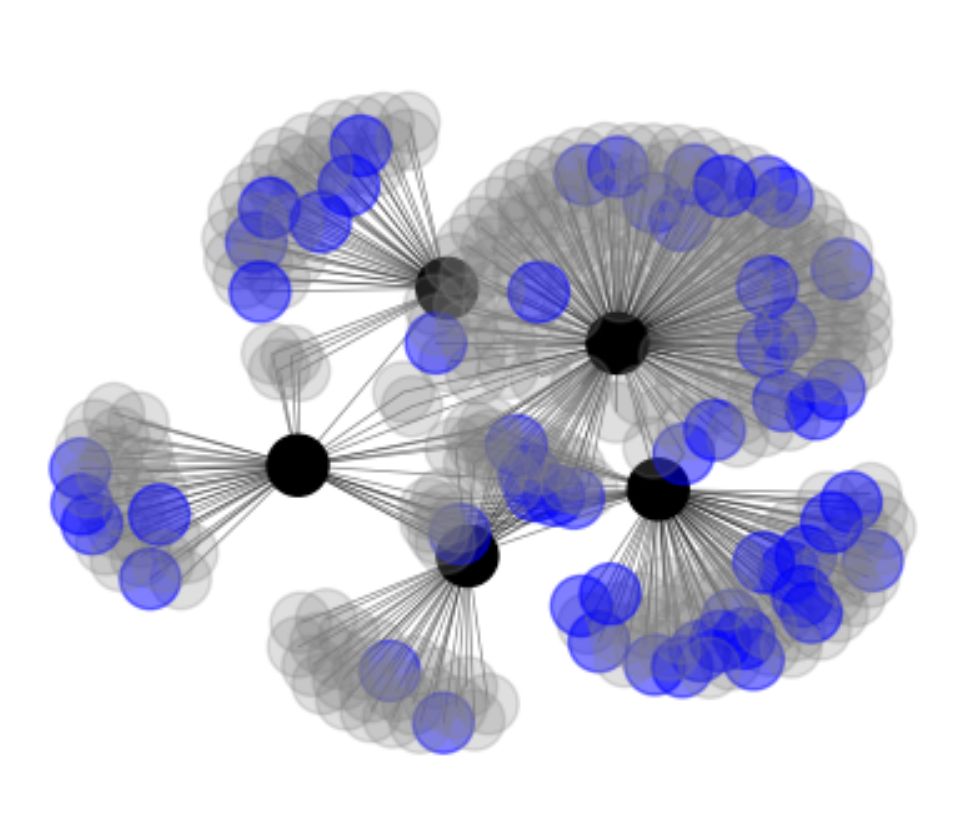

The transformer was invented at Google. RLHF was not invented in industry labs, but came to prominence at OpenAI and DeepMind. I took 5 of the most influential papers (black dots) and visualized their references. Blue dots are papers that acknowledge federal funding (DARPA, NSF).

LongEval is turning three this year!

This is a Call for Participation in our CLEF 2025 Lab - try out how your IR system holds up over the long term.

Check the details on our page:

clef-longeval.github.io

The PhD is pretraining. Interview prep is alignment. Take this to heart. :)

Leaderboard showing the performance of language models on a claim verification task over book-length inputs. o1-preview is the best model with 67.36% accuracy, followed by Gemini 2.5 Pro with 64.17% accuracy.

We have updated #nocha, a leaderboard for reasoning over long-context narratives 📖, with some new models including #Gemini 2.5 Pro which shows massive improvements over the previous version! Congrats to #Gemini team 🪄 🧙 Check 🔗 novelchallenge.github.io for details :)

I think ARR used to do this? Seems like it’s missing in the recent cycle(s).

stats.aclrollingreview.org/iterations/2...

A corollary here is that a relevant context might not improve the probability of the right answer.

Perhaps the most misunderstood aspect of retrieval: For a context to be relevant, it is not enough for it to improve the probability of the right answer.

MLflow is on BlueSky! Follow @mlflow.org to keep up to date on new releases, blogs and tutorials, events, and more.

ris.utwente.nl/ws/portalfil...

---Born To Add, Sesame Street

---(sung to the tune of Bruce Springsteen’s Born to Run)

One, and two, and three police persons spring out of the shadows

Down the corner comes one more

And we scream into that city night: “three plus one makes four!”

Well, they seem to think we’re disturbing the peace

But we won’t let them make us sad

’Cause kids like you and me baby, we were born to add

"How Claude Code is using a 50-Year-Old trick to revolutionize programming"

Somehow my most controversial take of 2025 is that agents relying on grep are a form of RAG.

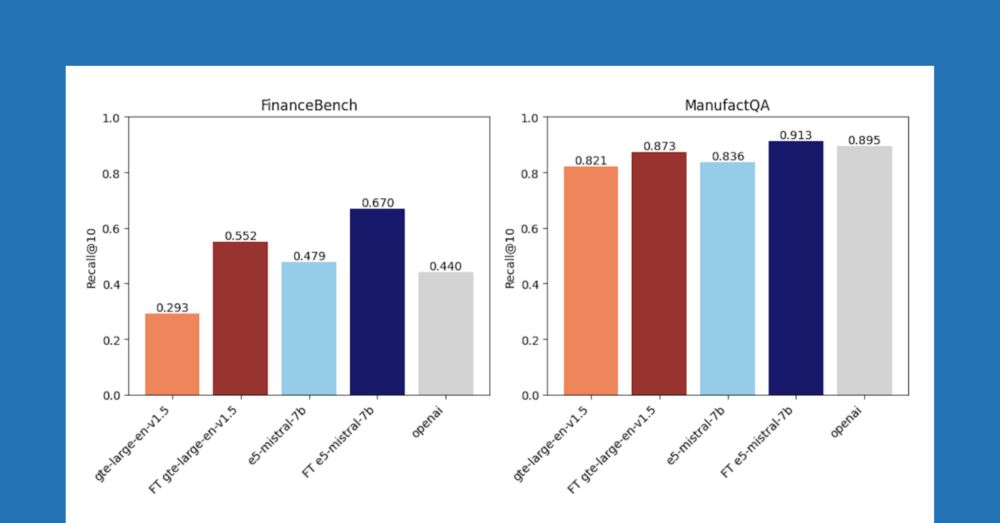
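To make the grep-as-RAG take concrete, here is a minimal sketch, not a description of how any particular agent works. It treats a regex scan over files as the retrieval step and pastes the hits into a prompt as the augmentation step. The names `grep_retrieve` and `build_prompt`, the prompt layout, and the toy in-memory "files" are all assumptions made up for illustration.

```python
import re


def grep_retrieve(pattern, documents, context_lines=1):
    """Retrieval step: return (doc_name, line_number, snippet) for each matching line."""
    regex = re.compile(pattern)
    hits = []
    for name, text in documents.items():
        lines = text.splitlines()
        for i, line in enumerate(lines):
            if regex.search(line):
                # Keep a few neighbouring lines, like `grep -C`.
                start = max(0, i - context_lines)
                end = min(len(lines), i + context_lines + 1)
                hits.append((name, i + 1, "\n".join(lines[start:end])))
    return hits


def build_prompt(question, hits):
    """Augmentation step: put the retrieved snippets in front of the question."""
    context = "\n\n".join(
        f"[{name}:{lineno}]\n{snippet}" for name, lineno, snippet in hits
    )
    return f"Context:\n{context}\n\nQuestion: {question}"


# Toy corpus standing in for a code repository (hypothetical contents).
docs = {
    "utils.py": "import os\n\ndef load_config(path):\n    return open(path).read()\n",
    "main.py": "from utils import load_config\n\nconfig = load_config('app.cfg')\n",
}

hits = grep_retrieve(r"load_config", docs, context_lines=0)
prompt = build_prompt("Where is load_config defined?", hits)
print(prompt)
```

The only thing separating this from an embedding-based pipeline is the scoring function: exact pattern match instead of vector similarity. The generation step that consumes `prompt` is the same either way.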

Embedding finetuning is not a new idea, but it's still overlooked IMO.

The Promptagator work is one of the more impactful papers showing that finetuning with synthetic data is effective.

arxiv.org/abs/2209.11755

Search is the key to building trustworthy AI and will only be more important as we build more ambitious applications. With that in mind, there's not nearly enough energy spent improving the quality of search systems.

Follow the link for the full episode:

www.linkedin.com/posts/data-b...

It was a real pleasure talking about effective IR approaches with Brooke and Denny on the Data Brew podcast.

Among other things, I'm excited about embedding finetuning and reranking as modular ways to improve RAG pipelines. Everyone should use these more!

We're probably a little too obsessed with zero-shot retrieval. If you have documents (you do), then you can generate synthetic data and finetune your embedding. Blog post led by @jacobianneuro.bsky.social shows how well this works in practice.

www.databricks.com/blog/improvi...

I do want to see aggregate stats about the model’s generation, and total reasoning tokens is perhaps the least informative one.

"All you need to build a strong reasoning model is the right data mix."

The pipeline that creates the data mix:

After frequent road runs during a Finland visit I tend to feel the same

Using 100+ tokens to answer 2 + 3 =

It’s pretty obvious we’re in a local minimum for pretraining. Would expect more breakthroughs in the 5-10 year range. Granted, it’s still incredibly hard and expensive to do good research in this space, despite the number of labs working on it.

Word of the day (of course) is ‘scurryfunging’, from US dialect: the frantic attempt to tidy the house just before guests arrive.

... didn't know this would be one of the hottest takes i've had ...

for more on my thoughts, see drive.google.com/file/d/1sk_t...

feeling a bit under the weather this week … thus an increased level of activity on social media and blog: kyunghyuncho.me/i-sensed-anx...

Smarter, Better, Faster, Longer: A Modern Bidirectional Encoder for Fast, Memory Efficient, and Long Context Finetuning and Inference

Introduces ModernBERT, a bidirectional encoder advancing BERT-like models with 8K context length.

📝 arxiv.org/abs/2412.13663

👨🏽‍💻 github.com/AnswerDotAI/...

State Space Models are Strong Text Rerankers

Shows Mamba-based models achieve comparable reranking performance to transformers while being more memory efficient, with Mamba-2 outperforming Mamba-1.

📝 arxiv.org/abs/2412.14354

I’m being facetious, but the truth behind the joke is that OCR correction opens up the possibility (and futility) of language much like drafting poetry. For every interpreted pattern for optimizing OCR correction, exceptions arise. So, too, with patterns in poetry.

Wait can you say more