thank you @claudia-lopez.bsky.social for this clear and thoughtful coverage in @thetransmitter.bsky.social on some of our recent work on dynamic neural codes in speech processing! 🧠 🌀 ✨ www.thetransmitter.org/language/shi...

📢 PhD position in the NeuroAI of Language

Why can LLMs predict brain activity so well? We're hiring a PhD student to find out -- AI interpretability meets neuroimaging

Deadline March 20

Please RT 🙏

👇

mpi.nl/career-education/vacancies/vacancy/fully-funded-4-year-phd-position-neuroai-language

I did an MSc project on seal pup vocalization timing in long rehab centre recordings years ago and may still have some relevant refs: scripties.uba.uva.nl/search?id=re... I believe Koen de Reus' PhD thesis (to be defended this week) also has a bunch more up-to-date work on this! www.mpi.nl/events/imprs...

Our paper has been accepted to EACL 2026!🎉 We systematically evaluate several vision-language models (VLMs) and language-only models, measuring their alignment with brain responses to concept words. Our results show that vision-language models offer a promising tool to model human concept processing.

Thrilled to announce the 1st Workshop on Computational Developmental Linguistics (CDL) at ACL 2026 🎉 A new venue at the intersection of developmental linguistics × modern NLP, spearheaded by @fredashi.bsky.social, @marstin.bsky.social, and an outstanding team of colleagues!

A thread 🧵

If you're in the Netherlands or nearby, check out the Fourth Dutch Speech Tech Day. I'll be there.

www.eventbrite.com/e/4th-dutch-...

Commentary title: Linguists should learn to love speech-based deep learning models Authors: Marianne de Heer Kloots, Paul Boersma, Willem Zuidema Abstract: Futrell and Mahowald present a useful framework bridging technology-oriented deep learning systems and explanation-oriented linguistic theories. Unfortunately, the target article's focus on generative text-based LLMs fundamentally limits fruitful interactions with linguistics, as many interesting questions on human language fall outside what is captured by written text. We argue that audio-based deep learning models can and should play a crucial role.

'Tis the season to preprint BBS commentaries; I'm happy to share ours too! 🎄✨

The textual basis of current LLMs causes trouble, but linguistically relevant insights *can* be found in systems modelling the more natural form of human spoken language: the speech signal itself. arxiv.org/abs/2512.14506

New book! I have written a book, called Syntax: A cognitive approach, published by MIT Press.

This is open access; MIT Press will post a link soon, but until then, the book is available on my website:

tedlab.mit.edu/tedlab_websi...

New post! Last week I shared why I thought cognitive (neuro)science hasn’t contributed as much as one might hope to the design of AI systems; this week I'm sharing my thoughts on how methods and principles from these fields *have* been useful in my work. infinitefaculty.substack.com/p/how-cognit...

Poster title: Does multimodal pre-activation influence linguistic expectations in LLMs and humans? Authors: Sasha Kenjeeva, Giovanni Cassani, Noortje Venhuizen, Afra Alishahi

Poster title: Generalizing Without Evidence: How Transformer Models Infer Syntactic Rules From Sparse Input Authors: Mark van den Hoorn, Raquel G. Alhama

Poster title: Dependency Length, Syntactic Complexity & Memory: A Reading Time Benchmark for Sentence Processing Modeling Authors: Nina Nusbaumer, Corentin Bel, Iria de-Dios-Flores, Guillaume Wisniewski, Benoit Crabbé

Poster title: The success of Neural Language Models on syntactic island effects is not universal: strong wh-island sensitivity in English but not in Dutch Authors: Michelle Suijkerbuijk, Naomi Tachikawa Shapiro, Peter de Swart, Stefan L. Frank

Cool posters from day 2!

@sashakenjeeva.bsky.social openreview.net/forum?id=Vtd...

github.com/markvandenho... openreview.net/forum?id=rX3...

@nina-nusbaumer.bsky.social openreview.net/forum?id=GRz...

www.ru.nl/personen/sui... openreview.net/forum?id=NcJ...

Today we are time-travelling with @stefanfrank.bsky.social 😎

I’m really enjoying the Computational Psycholinguistics Meeting in Utrecht! cpl2025.sites.uu.nl 🧠

Yesterday MSc student Sven Terpstra (co-supervised w/ @wzuidema.bsky.social) presented his project on predicting the N400 with GPT-derived metrics beyond surprisal openreview.net/forum?id=MAl...

This is in response to the recent target article by @futrell.bsky.social & @kmahowald.bsky.social, a wonderful read! Thanks to the authors for writing such a clear and inspiring piece to start this discussion. doi.org/10.1017/S014...

Why do humans have linguistic intuition? And why should you care?

A short thread about my new paper in @cadlin.bsky.social

This work has the most original insight I've ever had, a genuinely new idea about the nature of language

cadernos.abralin.org/index.php/ca...

1/20

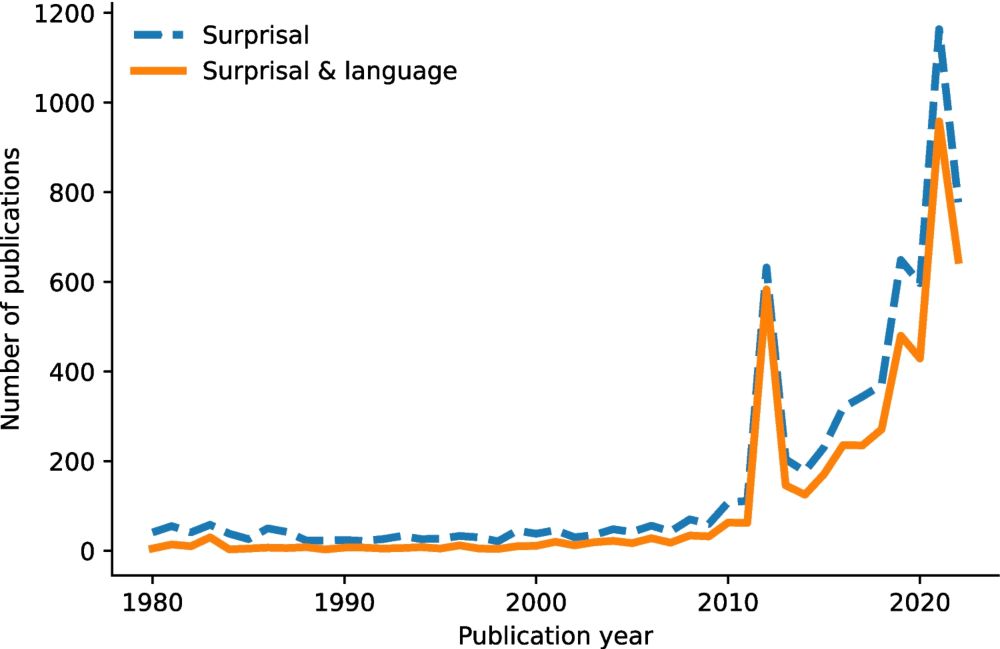

Many studies of naturalistic comprehension report that surprisal (often LLM derived) explains more of the variance in data than other predictors. Why is this? And why can it be problematic for our conclusions?

A 🧵 of takeaways from our paper doi.org/10.1007/s421... with @andreaeyleen.bsky.social

Why isn’t modern AI built around principles from cognitive science or neuroscience? I'm starting a substack (infinitefaculty.substack.com/p/why-isnt-m...) by writing down my thoughts on that question, as part of a first series of posts on how I currently see the relation between these fields. 1/3

I had a wonderful time getting to know @gronlp.bsky.social last week while discussing linguistic structure and learning trajectories in speech models! ✨ Many thanks for the invite @frap98.bsky.social, already looking forward to catching up again soon :)

🚨NEW PUBLICATION ALERT!🚨

The 'Design Features' of Language Revisited (w/ @mperlman.bsky.social @glupyan.bsky.social Koen de Reus & @limorraviv.bsky.social)

Feature Review out now in #OpenAccess in @cp-trendscognsci.bsky.social! #language #linguistics

Paper: doi.org/10.1016/j.ti...

Interested in the evolution of human language? Check out our new paper in @science.org, where we synthesize the latest findings and outline a multifaceted, bio-cultural approach for studying how language evolved. Super proud of this work, and hoping it leads to exciting new research! tinyurl.com/ykacvanp

The Multilingual Minds & Machines Meetings call for abstracts is now open! Everything you need to know is here -> mmmm2026.github.io

happy to share our new paper, out now in Neuron! led by the incredible Yizhen Zhang, we explore how the brain segments continuous speech into word-forms and uses adaptive dynamics to code for relative time - www.sciencedirect.com/science/arti...

Delighted to share our new paper, now out in PNAS! www.pnas.org/doi/10.1073/...

"Hierarchical dynamic coding coordinates speech comprehension in the brain"

with dream team @alecmarantz.bsky.social, @davidpoeppel.bsky.social, @jeanremiking.bsky.social

Summary 👇

1/8

🌍Introducing BabyBabelLM: A Multilingual Benchmark of Developmentally Plausible Training Data!

LLMs learn from vastly more data than humans ever experience. BabyLM challenges this paradigm by focusing on developmentally plausible data.

We extend this effort to 45 new languages!

Interesting paper suggesting a mechanism for why in-context learning happens in LLMs.

They show that LLMs implicitly apply an internal low-rank weight update adjusted by the context. It’s cheap (because the update is low-rank) but effective for adapting the model’s behavior.

#MLSky

arxiv.org/abs/2507.16003

PhD Position: Accented Speech Processing - Apply now!

Come work with Mirjam Broersma, @davidpeeters.bsky.social, and me at the Centre for Language Studies, Radboud University in the Netherlands.

Application deadline: 19 October 2025

For more information, see

www.ru.nl/en/working-a...

Huge congrats to the envisionBOX team for the Open Science award nomination! 🎉

My tutorial on speech analysis tools in Python from the Unboxing Multimodality summer school (github.com/mdhk/unboxin...) is now also available at envisionbox.org

Thanks for the invitation & this great initiative! 👏

The 𝗜𝗟𝗖𝗕 𝗦𝘂𝗺𝗺𝗲𝗿 𝗦𝗰𝗵𝗼𝗼𝗹 in Marseille went beyond all my expectations! 💯

A week has already flown by since I had one of the most formative experiences of my PhD so far. 👩‍🎨

Thanks to all co-authors in the Dutch SSL training team @hmohebbi.bsky.social @cpouw.bsky.social @gaofeishen.com @wzuidema.bsky.social + Martijn Bentum

And to @itcooperativesurf.bsky.social (EINF-8324) for granting me the resources that enabled this project 👩‍💻✨

Check out the paper for more details:

📄 arxiv.org/abs/2506.00981

Or the model, dataset and code released alongside it:

🤗 huggingface.co/amsterdamNLP...

🗃️ zenodo.org/records/1554...

🔍 github.com/mdhk/SSL-NL-...

We hope these resources help further research on language-specificity in speech models!