SECOND CALL: SHREC'26 Challenge on 3D Reconstruction

Our dataset features intricate geometries, ideal for benchmarking of high-frequency detail recovery.

All participating will co-author a joint paper submitted to Computers & Graphics.

Track Details shapevision.dcc.uchile.cl/cllull-shrec2026

27.02.2026 16:53

👍 0

🔁 1

💬 0

📌 0

#CVPR2026 reviews are slowly being dispatched over email. Good luck!

22.01.2026 18:33

👍 8

🔁 1

💬 0

📌 1

Screenshot of a paper discussion page titled ‘mHC: Manifold-Constrained Hyper-Connections’. At the top is a card showing the paper title, a ‘View on arXiv’ link, and indicators for 7 posts and 7 researchers. Below are social-style posts referencing the paper: one from ‘NT 5.2 Pyongyang Official™’ linking to arXiv with the caption ‘THE WHALE IS BACK BABYYYY’ and an arXiv preview image, and another from ‘Hacker News’ linking to the same arXiv paper. The interface resembles a research discussion or social feed layout.

new year and @mariaa.bsky.social and I have some fun new things cooking for the atproto ecosystem...

01.01.2026 15:58

👍 116

🔁 14

💬 10

📌 4

Hey this worked! Import all of your old Twitter posts over to Bluesky (for a few bucks, depending on how irrepressible you were). Now I look like I've been posting on this platform longer than it has existed.

22.10.2024 13:42

👍 114

🔁 24

💬 11

📌 8

Choosing the right colormap is tricky, too often, they hide subtle details or distort the data. Our new method transforms colormaps to boost local contrast and reveal just noticeable differences, all while keeping the visualization perceptually accurate and accessible.

dl.acm.org/doi/10.1145/...

15.08.2025 15:44

👍 46

🔁 9

💬 1

📌 1

1/ Can open-data models beat DINOv2? Today we release Franca, a fully open-sourced vision foundation model. Franca with ViT-G backbone matches (and often beats) proprietary models like SigLIPv2, CLIP, DINOv2 on various benchmarks setting a new standard for open-source research.

21.07.2025 14:47

👍 83

🔁 21

💬 2

📌 3

How can one reconstruct the complete 3D interior of a wood block using only photos of its surfaces? 🪵

At SIGGRAPH'25 (Thursday!), Maria Larsson will present *Mokume*: a dataset of 190 diverse wood samples and a pipeline that solves this inverse texturing challenge. 🧵👇

08.08.2025 11:53

👍 76

🔁 15

💬 2

📌 1

New 3D foundation model dropped.

Note: Seems they might have messed up their image matching metrics (seems like acc rather than auc), but should be at least as good as mast3r.

24.07.2025 22:50

👍 11

🔁 2

💬 2

📌 0

Turns out that by default huggingface models run on the CPU...

20.07.2025 12:10

👍 1

🔁 0

💬 0

📌 1

Awesome initiative 🎉

This leaves me wondering though: how come authors attending #EurIPS still have to register for the main #NeurIPS (in the Americas) for their paper to be considered accepted?

You stopped so short of actually allowing ML researchers to fly less!

17.07.2025 14:12

👍 32

🔁 5

💬 5

📌 2

A meme where Anakin and Padme discuss the logics of allowing a NeurIPS event in Europe while forcing authors to also present in the US for publication

Sofar it doesn’t look good: neurips.cc/FAQ/AuthorRe...

“At least one author of each accepted paper must register for the main conference. A ‘Virtual Only Pass’ is not sufficient.”

17.07.2025 07:32

👍 7

🔁 2

💬 1

📌 0

WeTransfer just changed their TOS giving themselves permission to train AI on any content you transfer and produce derivative works based on content you transfer that they are allowed to monetize and you are not allowed payment for.

Stop using WeTransfer.

14.07.2025 23:05

👍 7590

🔁 5277

💬 128

📌 463

The code for our #CVPR2025 paper, PRaDA: Projective Radial Distortion Averaging, is now out!

Turns out distortion calibration from multiview 2D correspondences can be fully decoupled from 3D reconstruction, greatly simplifying the problem

arxiv.org/abs/2504.16499

github.com/DaniilSinits...

09.07.2025 13:54

👍 12

🔁 5

💬 1

📌 0

🦖 We present “Feed-Forward SceneDINO for Unsupervised Semantic Scene Completion”. #ICCV2025

🌍: visinf.github.io/scenedino/

📃: arxiv.org/abs/2507.06230

🤗: huggingface.co/spaces/jev-a...

@jev-aleks.bsky.social @fwimbauer.bsky.social @olvrhhn.bsky.social @stefanroth.bsky.social @dcremers.bsky.social

09.07.2025 13:17

👍 24

🔁 10

💬 1

📌 1

We just released COLMAP v3.12, which adds long-awaited, end-to-end support for multi-camera rigs and 360° panoramas 👀 COLMAP just got better at handling your robotics, AR/VR, or 360 data - try it yourself and let us know! github.com/colmap/colma... Kudos to Johannes & team for this great work 🚀

01.07.2025 16:33

👍 22

🔁 6

💬 1

📌 0

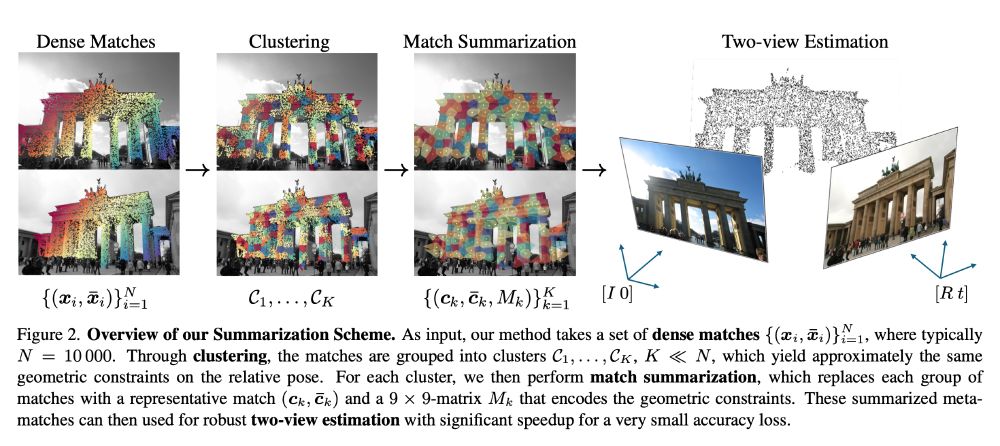

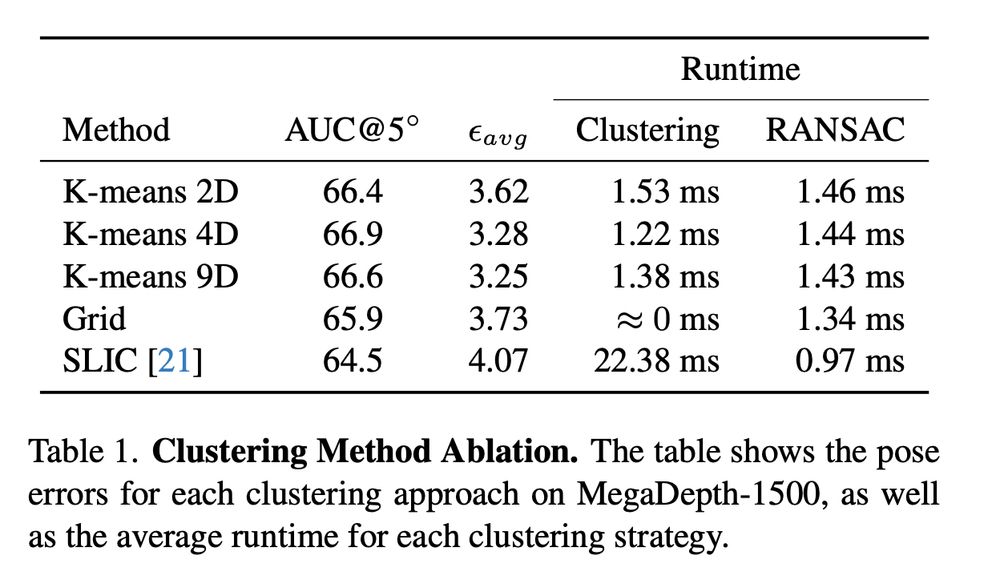

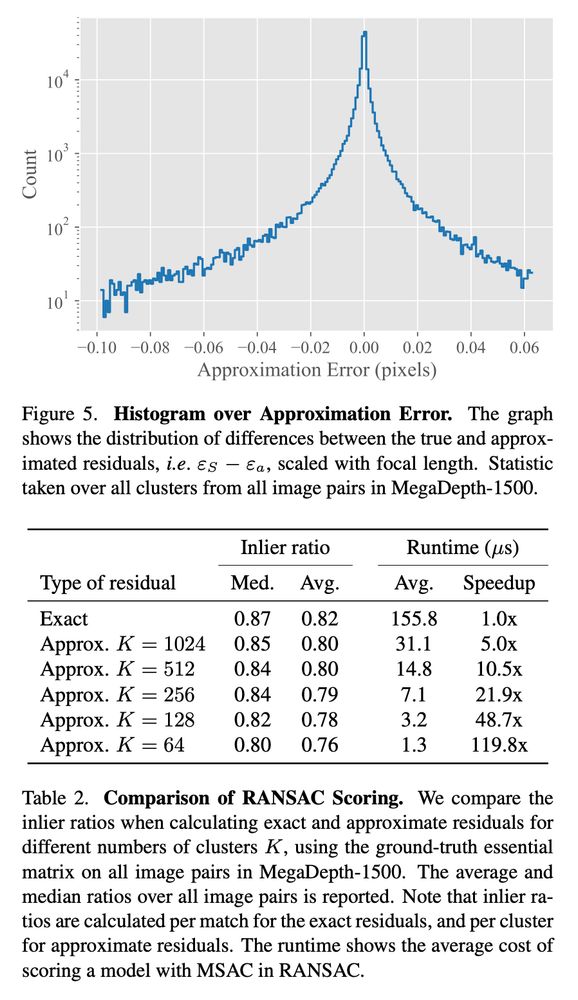

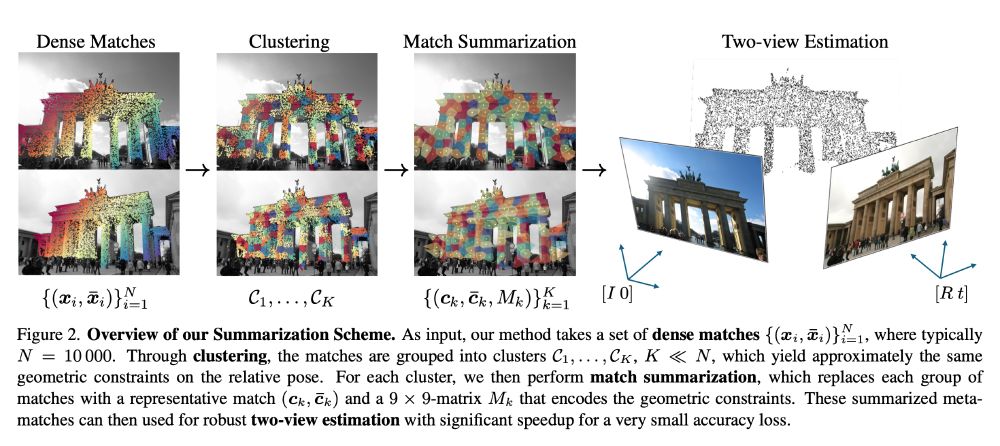

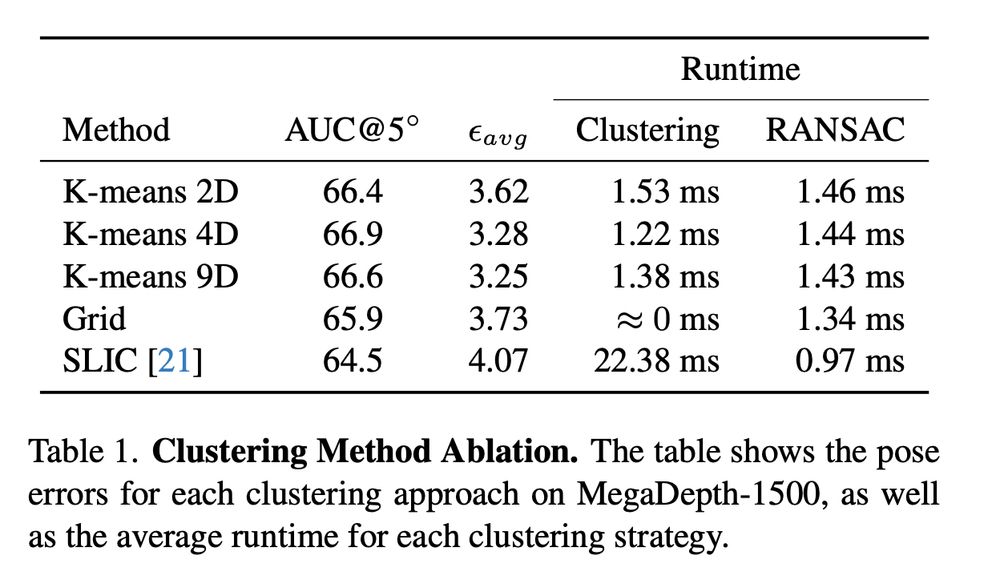

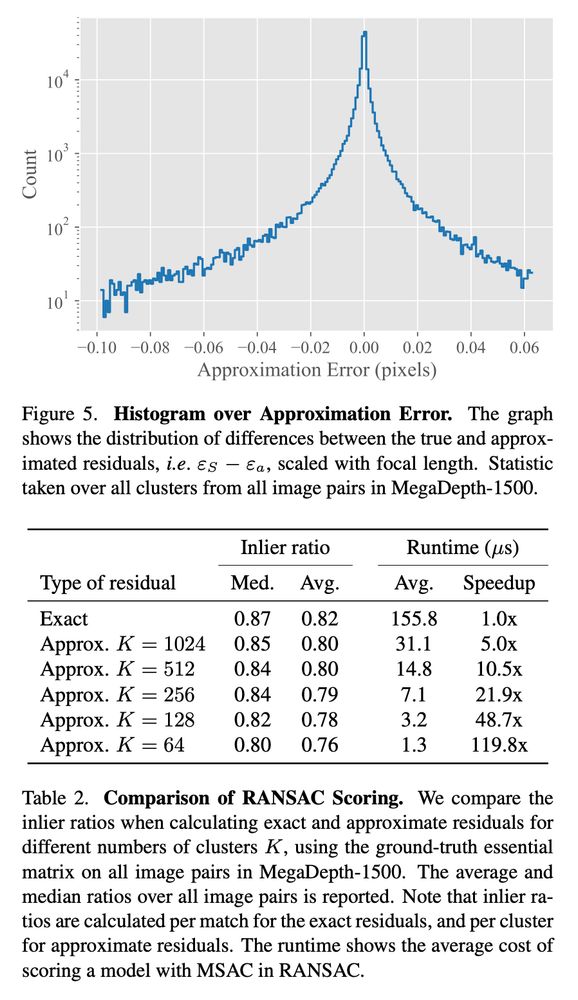

Dense Match Summarization for Faster Two-view Estimation

Jonathan Astermark, Anders Heyden, Viktor Larsson

tl;dr: use clustering to reduce RANSAC time when using dense methods like RoMa.

Kudos for eval on WxBS.

P.S. now the same, but for BA?

arxiv.org/abs/2506.028...

24.06.2025 12:22

👍 12

🔁 2

💬 2

📌 1

🤗 I’m excited to share our recent work: TwoSquared: 4D Reconstruction from 2D Image Pairs.

🔥 Our method produces geometry, texture-consistent, and physically plausible 4D reconstructions

📰 Check our project page sangluisme.github.io/TwoSquared/

❤️ @ricmarin.bsky.social @dcremers.bsky.social

23.04.2025 16:48

👍 9

🔁 3

💬 0

📌 1

Can we match vision and language representations without any supervision or paired data?

Surprisingly, yes!

Our #CVPR2025 paper with @neekans.bsky.social and @dcremers.bsky.social shows that the pairwise distances in both modalities are often enough to find correspondences.

⬇️ 1/4

03.06.2025 09:27

👍 27

🔁 12

💬 1

📌 0

Can you train a model for pose estimation directly on casual videos without supervision?

Turns out you can!

In our #CVPR2025 paper AnyCam, we directly train on YouTube videos and achieve SOTA results by using an uncertainty-based flow loss and monocular priors!

⬇️

13.05.2025 08:11

👍 25

🔁 10

💬 1

📌 1

We also found that this allows the CTM to decide to spend less time thinking on simpler images, thus saving energy. When identifying a gorilla, for example, the CTM’s attention moves from eyes to nose to mouth in a pattern remarkably similar to human visual attention.

12.05.2025 02:42

👍 18

🔁 2

💬 1

📌 0

📢 New paper CVPR 25!

Can meshes capture fuzzy geometry? Volumetric Surfaces uses adaptive textured shells to model hair, fur without the splatting / volume overhead. It’s fast, looks great, and runs in real time even on budget phones.

🔗 autonomousvision.github.io/volsurfs/

📄 arxiv.org/pdf/2409.02482

05.05.2025 13:00

👍 32

🔁 21

💬 1

📌 1

ZurichCV #9 | ZurichAI

Linus Scheibenreif (ETH Zurich) will talk about self-supervised learning for satellite imagery, and Pascal Chang (ETH Zurich/Disney Research) will present his recent work (topic to be announced).

8th ZurichCV is on the 29th of April. We have two fantastic speakers: Linus Scheibenreif (ETH Zurich) will talk about self-supervised learning for satellite imagery, and Pascal Chang (Disney Research) will give us a preview of his soon-to-be-published work.

RSVP: www.zurichai.ch/events/zuric...

20.04.2025 06:31

👍 17

🔁 3

💬 0

📌 0

No meal has ever sustained me for more than a few hours, a mere blip on the timeline of my life, 0.001% of my expected lifespan. So therefore I'll no longer be paying at restaurants

17.04.2025 11:53

👍 74

🔁 24

💬 2

📌 0

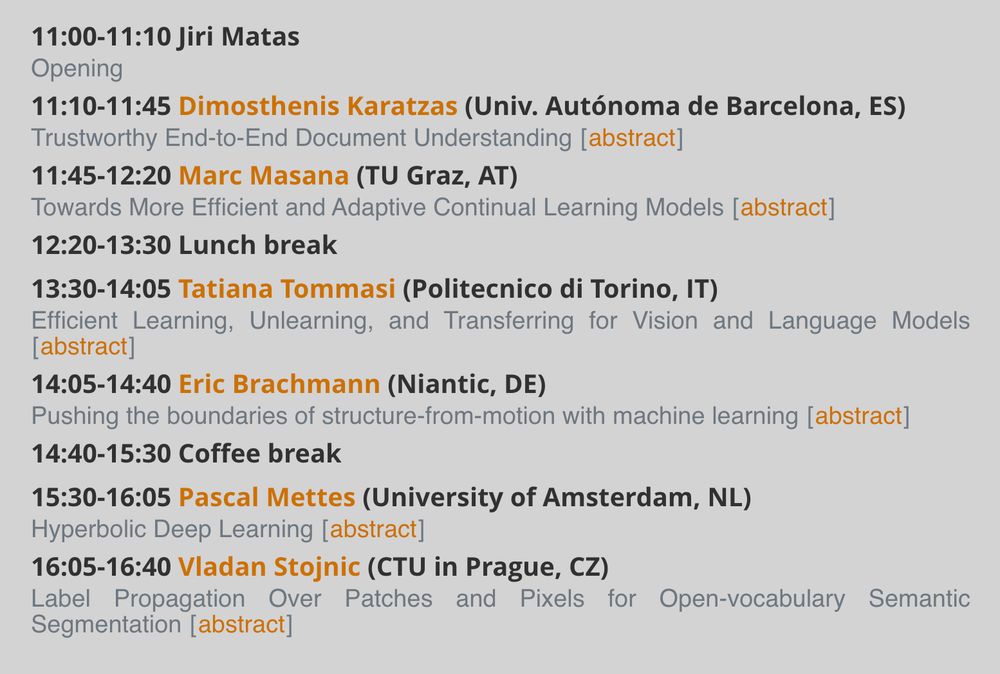

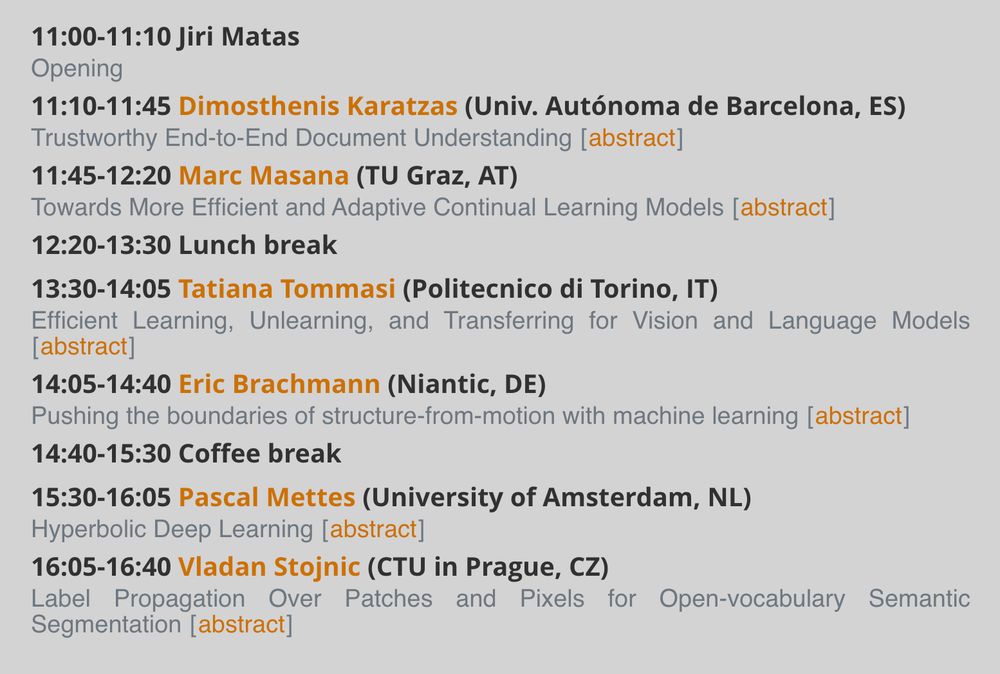

The Visual Recognition Group at CTU in Prague organizes the 49th Pattern Recognition and Computer Vision Colloquium with D. Karatzas, M. Masana, T. Tommasi, P. Mettes @pascalmettes.bsky.social , E. Brachmann @ericbrachmann.bsky.social and V. Stojnic @stojnicv.xyz

cmp.felk.cvut.cz/colloquium/#...

07.04.2025 13:57

👍 34

🔁 10

💬 2

📌 2

3D Gaussian splatting relies on depth-sorting of splats, which is costly and prone to artifacts (e.g., "popping"). In our latest work, "StochasticSplats", we replace sorted alpha blending by stochastic transparency, an unbiased Monte Carlo estimator from the real-time rendering literature.

07.04.2025 07:56

👍 52

🔁 13

💬 2

📌 2

𝗠𝗖𝗠𝗟 𝗕𝗹𝗼𝗴: Robots & self-driving cars rely on scene understanding, but AI models for understanding these scenes need costly human annotations. Daniel Cremers & his team introduce 🥤🥤 CUPS: a scene-centric unsupervised panoptic segmentation approach to reduce this dependency. 🔗 mcml.ai/news/2025-04...

03.04.2025 09:45

👍 6

🔁 1

💬 0

📌 1